Your domain authority is 70. Your keyword rankings are solid. But none of that tells you whether Perplexity is recommending your competitor instead of you. Google’s organic results and AI-generated answers are pulling from increasingly different sources, and the gap between “ranking well” and “being cited by AI” is widening every quarter. A high backlink count got you to the top of a results page. It won’t get you into a ChatGPT answer.

The unit of authority has changed. And most teams are still measuring the old one.

Your Backlink Profile Doesn’t Predict Your AI Citation Rate

This is the central paradox of modern search. Brands with strong SEO foundations are discovering their AI visibility is near zero, while newer sites with modest domain authority are getting cited consistently across ChatGPT, Perplexity, and Google AI Overviews.

The data makes this uncomfortable to ignore. While established domains with DA 60+ are cited 4x more frequently than new sites overall, the correlation between raw link quantity and LLM citations sits at roughly r = 0.10. Squared, that means link count explains about 1% of the variance in citations. That’s not a weak signal. That’s almost no signal at all.

The reason is structural. A traditional search engine asks: “What is the most popular page for this query?” A generative engine asks something different: “What is the safest, most verifiable thing I can repeat without being wrong?”

Those are not the same question. And they don’t produce the same results.

Approximately 31% of AI-cited pages rank outside the top 100 in traditional organic search. AI engines are surfacing “hidden gems” of structured, data-dense content that Google’s algorithm overlooks due to a lack of traditional backlinks. Your competitor with the clean FAQ structure and original research report may be getting cited constantly, while your 5,000-word pillar page sits invisible.

What AI Citations Actually Are (and Why Mentions Don’t Count)

Before tracking anything, it helps to be precise about what you’re tracking.

An AI citation is not the same as a brand mention. A mention is when an AI names your brand in its response — a recommendation, a comparison, a reference. Mentions drive brand awareness and share of voice, which matter. But they don’t drive traffic.

A citation is formal attribution. It’s the structured link embedded in an AI response that identifies the specific URL used as evidence for a claim. It’s the mechanism behind Retrieval-Augmented Generation (RAG), where the AI grounds its answer in a source it can point to.

| Feature | Brand Mention | AI Citation |
|---|---|---|
| Visual form | Plain text in response body | Clickable link or footnote |
| Primary mechanism | Entity recognition and training bias | RAG retrieval |
| Primary value | Brand awareness | High-intent referral traffic |
| Key metric | Share of Voice | Citation Rate and CVR |
| Optimization focus | Multi-source PR/social | Content structure and factual density |

There’s a pattern worth knowing called the “Mention-Source Divide”: an AI platform uses your brand’s data but names a competitor, or cites a third-party aggregator like Reddit or a review site instead of your original source. Brand mentions are 3x more predictive of overall AI visibility than backlinks, yet citations are the only mechanism that preserves the direct revenue pathway from the AI interface to your website.

The 3 Factors AI Engines Actually Weigh When Selecting a Source

AI visibility is less about link authority and more about what makes content safe for a machine to repeat. Three factors dominate the selection logic.

Format and extractability. AI platforms don’t read 3,000-word articles. They retrieve chunks of text, typically 75–300 words per section. Content must be modular. Leading each section with a direct, declarative statement — the core answer first — increases citation probability by 40%. Structured data (Schema.org markup) acts as a direct line to the AI, reducing ambiguity during extraction.

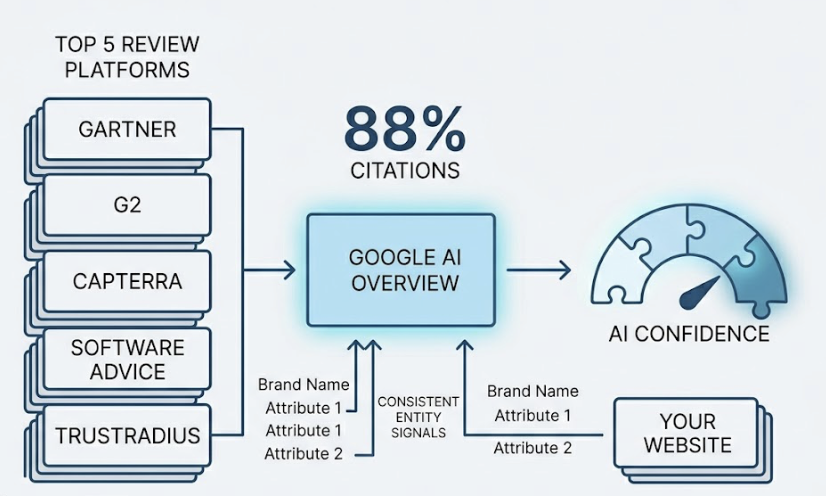

Source type and corroboration. For category-level queries, 88% of citations in Google AI Overviews go to just five major review platforms: Gartner, G2, Capterra, Software Advice, and TrustRadius. For many brands, the path to being cited doesn’t run through your own website first. It runs through the third-party platforms the AI already trusts. Consistent entity signals (your name, core attributes, and positioning) across multiple authoritative sources build the AI’s “confidence” to cite your own content later.

Factual density and original research. Statistics are the primary currency of AI trust. Adding statistics to a piece of content improves AI visibility by 41%, making it the single most effective optimization technique tested in peer-reviewed research from Princeton and Georgia Tech. Websites hosting original research generate 4.31x more citation occurrences per URL than those that rehash existing information.

Original research, surveys, and benchmark reports are citation magnets precisely because they offer unique data points the AI cannot find elsewhere.
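The 75 to 300 word guideline for extractable sections is easy to audit before publishing. The sketch below is one possible check, not a standard tool: the function name `audit_sections`, the `(heading, body)` input shape, and the status labels are all illustrative assumptions.

```python
import re

def audit_sections(sections, min_words=75, max_words=300):
    """Flag sections whose word count falls outside the target chunk size.

    `sections` is a list of (heading, body) pairs; the shape is an
    assumption made for this sketch.
    """
    report = []
    for heading, body in sections:
        # Count word-like tokens; a rough proxy for how a retriever sees length.
        count = len(re.findall(r"\b\w+\b", body))
        if count < min_words:
            status = "too short"
        elif count > max_words:
            status = "too long"
        else:
            status = "ok"
        report.append((heading, count, status))
    return report

if __name__ == "__main__":
    draft = [
        ("What is an AI citation?", "word " * 120),
        ("Our company history", "word " * 900),
    ]
    for heading, count, status in audit_sections(draft):
        print(f"{heading}: {count} words ({status})")
```

A section flagged as too long is usually a candidate for splitting under more specific subheadings rather than trimming.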

Most Brands Don’t Know If They’re Being Cited — or Ignored

This is where the problem gets operationally difficult.

Traditional web analytics weren’t built for AI search. Google Analytics 4 doesn’t have a native “AI referral” channel. A substantial portion of AI-referred traffic lands as “Direct” with no referrer — because when a user clicks a link inside the ChatGPT or Claude mobile app, referrer headers are frequently stripped. Users who read your AI citation, trust the reference, and type your URL into a browser hours later look like direct traffic. They’re not.

There’s also a decoupling between impressions and clicks that makes this harder to see. Organic CTR can drop by as much as 61% for informational queries when an AI Overview is present. But the visitors who do click through from an AI citation convert at 9x the rate of standard search traffic and bounce 23% less. They arrive pre-qualified by the AI, ready to act rather than browse.

The visibility is real. The standard measurement framework just can’t see it.

5 Things an AI Citation Tracker Should Actually Show You

Knowing you’re missing from AI answers is only the starting point. The tools that matter for this kind of tracking need to do more than confirm absence. Here’s what to look for when evaluating an AI citation tracker:

1. Cross-platform coverage. A tracker monitoring only ChatGPT sees less than 15% of the total citation landscape. Only 11% of domains are cited by both ChatGPT and Perplexity for the same set of queries. Professional tracking requires visibility across ChatGPT, Perplexity, Gemini, Google AI Overviews, Bing Copilot, and regional models. Each platform has its own retrieval logic and source preferences.

2. Statistical sampling at scale. AI responses are non-deterministic. The chance that an AI returns the same list of brand recommendations on two consecutive runs of the same prompt is less than 1 in 100. A single manual check is a snapshot, not a signal. Effective trackers run prompt matrices of 50 to 150 queries, each executed dozens of times across locations and timeframes, to produce a statistically meaningful AI Visibility Score.

3. Source granularity and citation gap mapping. Knowing who got cited instead of you is more actionable than knowing you were absent. A tracker should map the exact third-party domains driving citations in your category. If the AI consistently cites a specific Reddit thread or a competitor’s comparison table, that’s your next content target.

4. Contextual and sentiment analysis. Being cited isn’t always a win. If an AI cites your brand alongside caveats about pricing or support, you’re accumulating reputation damage with every mention. Position rank matters too: being the first brand listed in an AI response carries significantly more authority than being fifth.

5. Source decay monitoring over time. The half-life of an AI citation for a non-network domain is roughly 4.5 weeks. Content that isn’t refreshed falls out of the retrieval pool on a rolling basis. A tracker needs to surface when a high-performing page has decayed and needs updating to regain its citation status.
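The sampling point in item 2 can be made concrete with a little arithmetic. The sketch below estimates a citation rate from repeated runs of one prompt and attaches a Wilson score interval, a standard confidence interval for proportions. The function name `citation_rate` and the list-of-booleans input are assumptions for illustration; how any particular tracker computes its score is not documented here.

```python
import math

def citation_rate(cited_flags, z=1.96):
    """Estimate how often a brand is cited, with a Wilson score interval.

    `cited_flags` holds one boolean per sampled AI response (True = cited).
    z=1.96 corresponds to roughly 95% confidence.
    """
    n = len(cited_flags)
    if n == 0:
        raise ValueError("need at least one sampled response")
    p = sum(cited_flags) / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return p, max(0.0, center - half), min(1.0, center + half)

if __name__ == "__main__":
    # Hypothetical data: cited in 12 of 40 runs of the same prompt.
    rate, low, high = citation_rate([True] * 12 + [False] * 28)
    print(f"rate={rate:.2f}, interval=({low:.2f}, {high:.2f})")
```

With a single positive check, the same function returns an interval running from roughly 21% to 100%, which is why one manual prompt says very little about true visibility.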

How to Start Tracking AI Citations Without Starting From Scratch

Manual checks — typing prompts into ChatGPT or Perplexity yourself — are free and useful for initial exploration. They’re also easy to misread. Confirmation bias is a real problem: one positive citation creates the assumption of high visibility, while one negative result triggers an unnecessary content overhaul. Manual checks also can’t capture the “fan-out queries” — the 3 to 5 secondary searches an AI engine runs in the background to build a comprehensive answer.

The shift to automated monitoring is where real signal emerges.

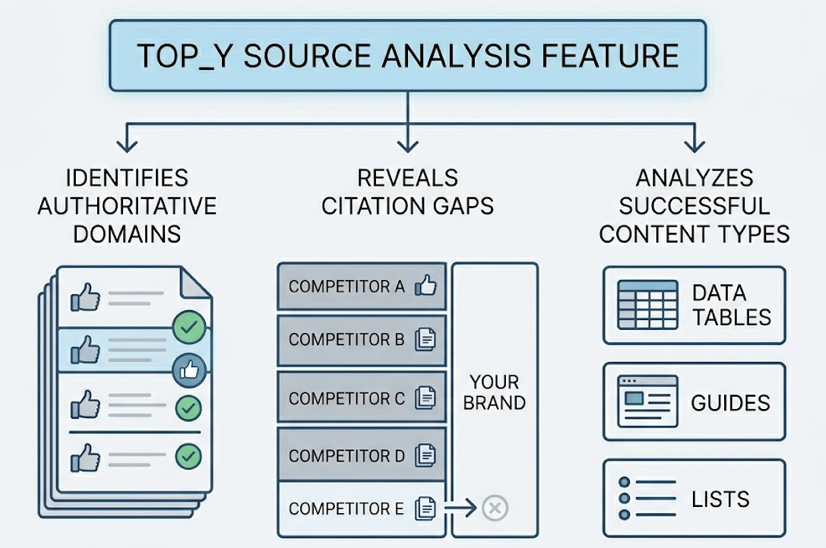

Topify addresses this through its Source Analysis feature, which reverse-engineers the retrieval logic behind AI citations at scale. Rather than telling you whether your brand appeared, it identifies which domains the AI is treating as authoritative for your category, which queries produce citation gaps where competitors appear and you don’t, and what content types are driving successful citations in your space.

The practical output: a prioritized list of third-party domains where your brand needs coverage. Not “what keyword should we target,” but “which authoritative site does the AI trust that doesn’t mention us yet?” That’s a fundamentally different — and more actionable — question.
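To illustrate what a citation gap list looks like in data terms, here is a minimal sketch. It is not a description of how Topify or any other product works: the function `citation_gaps`, the input shape (one list of cited URLs per sampled AI answer), and the example domains are hypothetical. It requires Python 3.9+ for `str.removeprefix`.

```python
from collections import Counter
from urllib.parse import urlparse

def citation_gaps(responses, own_domain):
    """Rank domains cited in answers where `own_domain` was NOT cited.

    `responses` is a list of lists of cited URLs, one inner list per
    sampled AI answer (an assumed input format).
    """
    counts = Counter()
    for cited_urls in responses:
        domains = {
            (urlparse(url).hostname or "").removeprefix("www.")
            for url in cited_urls
        }
        if own_domain in domains:
            continue  # we were cited in this answer, so it is not a gap
        counts.update(d for d in domains if d)
    return counts.most_common()

if __name__ == "__main__":
    sampled = [
        ["https://www.g2.com/a", "https://reddit.com/r/x"],
        ["https://g2.com/b", "https://example.com/page"],
        ["https://www.g2.com/c"],
    ]
    for domain, hits in citation_gaps(sampled, "example.com"):
        print(domain, hits)
```

Each domain is counted once per answer, so the ranking reflects how many answers leaned on that source rather than how many links it received.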

Topify tracks performance across ChatGPT, Gemini, Perplexity, and other major AI platforms, covering seven key metrics: visibility, sentiment, position, volume, mentions, intent, and Conversion Visibility Rate (CVR). The CVR metric is particularly relevant here — it estimates the probability that an AI response will lead a user to meaningful brand interaction, which is the revenue signal that standard analytics can’t capture.

Turning Citation Data Into a Content Strategy That Compounds

The goal isn’t just to track citations. It’s to build a system where being cited more often creates the conditions for being cited even more.

The feedback loop works like this: consistent AI citations increase branded search volume, which search engines read as an authority signal, which increases the AI’s confidence in citing your content, which drives more branded searches. First-mover advantage is real here, and it compounds.

A few structural moves make a measurable difference:

Map the revenue visibility gap. Find the high-intent queries where your brand ranks #1 on Google but is absent from the AI response. That intersection is the highest-ROI target for optimization. You already have the domain authority. You need the content format.

Restructure for modular extraction. Rewrite H2 and H3 headers as specific questions. Lead each section with a direct answer. Keep sections focused — 75 to 300 words per idea. This is the content architecture that facilitates the chunking process RAG systems rely on.

Target the gatekeeper domains. Use citation gap data to identify the review sites, Reddit threads, and industry publications the AI treats as primary sources in your category. Building presence on those domains — through contributed content, product listings, or coverage — is often faster than outranking them.

Implement a 90-day refresh cycle. AI-cited content is, on average, 25.7% newer than traditional search results. High-value pages that go 90+ days without updates fall out of the active retrieval pool. A regular refresh cadence — updating statistics, adding new data points, expanding FAQ sections — is a core GEO tactic, not an optional hygiene step.

Unmask AI referrals in GA4. Implement custom channel groups using regex to route sessions whose referrer matches AI-platform patterns into a distinct “AI Referrals” bucket. Sessions that arrive with no referrer at all will still land in “Direct” and cannot be recovered this way. This is how you start calculating true CVR and attributing revenue to citation activity.
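The regex approach can be prototyped outside GA4 before it is configured in the channel group settings. The sketch below is an assumption-laden illustration: the hostname list is a starting point rather than an exhaustive or official list, and `classify_session` is a hypothetical helper, not a GA4 API.

```python
import re
from urllib.parse import urlparse

# Illustrative hostnames only; extend or trim for the platforms you care about.
AI_REFERRER_PATTERN = re.compile(
    r"(^|\.)(chatgpt\.com|chat\.openai\.com|perplexity\.ai|"
    r"gemini\.google\.com|copilot\.microsoft\.com|claude\.ai)$"
)

def classify_session(referrer):
    """Bucket a session by its referrer URL."""
    if not referrer:
        # Stripped or missing referrer: indistinguishable from true direct traffic.
        return "Direct"
    host = urlparse(referrer).hostname or ""
    if AI_REFERRER_PATTERN.search(host):
        return "AI Referrals"
    return "Referral"

if __name__ == "__main__":
    for ref in ["https://chatgpt.com/", "https://www.perplexity.ai/search?q=x", ""]:
        print(repr(ref), "->", classify_session(ref))
```

The pattern anchors on the end of the hostname and requires a dot or start-of-string before each domain, so lookalike hosts such as `notchatgpt.com` are not misclassified.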

Conclusion

Backlinks built authority on the human web. AI citations are building authority on the machine-synthesized one. The selection logic is different, the content requirements are different, and the measurement infrastructure is different. What hasn’t changed is the first-mover advantage: the brands that start measuring now are building a gap that compounds.

The analytics infrastructure most teams rely on was built for a world where impressions and clicks moved together. In AI search, they’ve decoupled. Visibility often happens without a click. Influence precedes the session by hours or days. The brands winning in this environment aren’t just publishing more content. They’re measuring what the machine chooses to repeat — and optimizing for that signal specifically.

An AI citation tracker doesn’t replace your SEO stack. It fills the measurement gap your current tools can’t see.

FAQ

What is an AI citation tracker?

An AI citation tracker is a monitoring tool that simulates user prompts at scale to measure how often, where, and in what context a brand is referenced within AI-generated answers. Unlike traditional rank trackers, it analyzes the specific URLs used as evidence in an AI response and identifies citation gaps where competitors appear and you don’t.

How is an AI citation different from a backlink?

A backlink is a static hyperlink placed by a human editor to signal popularity or relevance. An AI citation is a dynamic, probabilistic attribution generated by an LLM during synthesis to ground a response in verifiable facts. The selection logic is fundamentally different: backlinks signal popularity, citations signal extractability and factual legitimacy.

Can I track AI citations for free?

Manual tracking — typing prompts into ChatGPT or Perplexity — costs nothing but produces unreliable signal. Because AI outputs are non-deterministic, a single check has less than a 1-in-100 chance of matching what the AI would say on the next prompt. Statistically meaningful tracking requires automated sampling across dozens or hundreds of prompt executions.

Does being cited by AI improve traditional SEO?

AI citations don’t pass link equity in the traditional sense. But they create an authority feedback loop: more citations drive more branded search volume, which Google reads as a topical authority signal, which improves organic rankings. The two systems are increasingly interconnected, even if the direct mechanism differs from classic link equity.

What content format gets cited most by AI?

Modular content with a clear inverted pyramid structure — direct answer first, supporting detail after — performs best. Original research with verifiable statistics generates 4.31x more citation occurrences per URL than derivative content. FAQ sections with specific, conversational questions also see high citation rates because they directly match how users phrase AI queries.