If you’ve ever tried to pick an AEO tool by reading vendor landing pages, you already know the problem. Every platform promises real-time tracking, multi-engine coverage, and “actionable insights.” But G2 reviews tell a different story.

Market interest in G2’s AEO category has grown 2,000% since early 2025. That growth means more tools, more noise, and more ways to make an expensive mistake. This article cuts through the marketing layer and shows what verified users actually say: what drives renewals, what causes cancellations, and which platforms are quietly setting a new standard.

Most Teams Read G2 Wrong. Here’s What to Look For Instead.

Most buyers skim star ratings and check the top three reviews. That’s a fast path to buyer’s remorse.

In the AEO category, the most useful signal isn’t the overall score. It’s the “What I Dislike” section. In a product category defined by non-deterministic AI outputs and constant model updates, no tool works perfectly all the time. A platform with zero critical feedback is likely either suppressing negative reviews or too shallow to have encountered real-world friction.

Look for reviews from Verified Current Users who mention specific failure scenarios: how the tool handled a Google AI Overview update, whether data refreshes kept pace with model changes, or what happened when Perplexity’s citation behavior shifted. That specificity is the signal. Generic praise is not.

Three other filters worth applying: check how recent the reviews are (anything older than six months is often outdated in a space where LLMs iterate monthly), look for reviewers who mention their company size and use case, and pay attention to whether the “Ease of Use” score might be masking data depth trade-offs.
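If you export reviews into a spreadsheet or script, the recency, verification, reviewer-context, and failure-specificity filters above can be applied mechanically. The Ease of Use check still needs human judgment. The sketch below is a minimal Python illustration under stated assumptions: the `Review` record, its field names, the six-month cutoff expressed as 183 days, and the `FAILURE_HINTS` keyword list are all hypothetical and do not reflect any G2 export format or API.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Hypothetical review record; these fields are illustrative, not a G2 schema.
@dataclass
class Review:
    posted: date
    verified_current_user: bool
    company_size: Optional[str]  # None when the reviewer doesn't say
    use_case: Optional[str]
    dislike_text: str

# Hypothetical keywords hinting at a concrete failure scenario; tune per category.
FAILURE_HINTS = ("ai overview", "refresh", "model update", "citation", "perplexity")

def is_high_signal(review: Review, today: date, max_age_days: int = 183) -> bool:
    """Keep recent, verified reviews that state context and a specific complaint."""
    recent = today - review.posted <= timedelta(days=max_age_days)
    has_context = review.company_size is not None and review.use_case is not None
    text = review.dislike_text.lower()
    names_failure = any(hint in text for hint in FAILURE_HINTS)
    return recent and review.verified_current_user and has_context and names_failure

# Usage with made-up sample reviews.
today = date(2026, 1, 15)
reviews = [
    Review(date(2025, 11, 2), True, "Mid-Market", "agency reporting",
           "Data lagged for days after the last AI Overview update."),
    Review(date(2025, 3, 1), True, "Enterprise", "brand tracking",
           "Citation counts drifted from our manual checks."),
    Review(date(2025, 12, 20), False, None, None, "Nothing to dislike!"),
]
shortlist = [r for r in reviews if is_high_signal(r, today)]
print(len(shortlist))  # 1: the other two are too old or unverified
```

Keyword matching is only a crude proxy for specificity. The script narrows the pile, but reading the flagged reviews is still the point.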

A clean UI doesn’t mean accurate data. A high usability rating sometimes means the tool is hiding complexity behind a polished surface—including model latency gaps and averaged API outputs that miss real-world variability.

The AEO Category on G2 Is More Fragmented Than It Looks

G2 launched a dedicated Answer Engine Optimization category in March 2025. It now lists over 248 tools. That number sounds useful. In practice, it creates a categorization problem that most buyers don’t anticipate.

AEO, GEO, and AI Visibility are often used interchangeably on G2 listings—but they describe meaningfully different functions. AEO focuses on structuring content so that AI systems can extract and cite it directly. GEO targets brand presence and citation frequency in conversational AI responses. AI Visibility is a broader measurement layer covering brand sentiment and hallucination detection across platforms.

A tool might appear under all three tags while only reliably solving one of them.

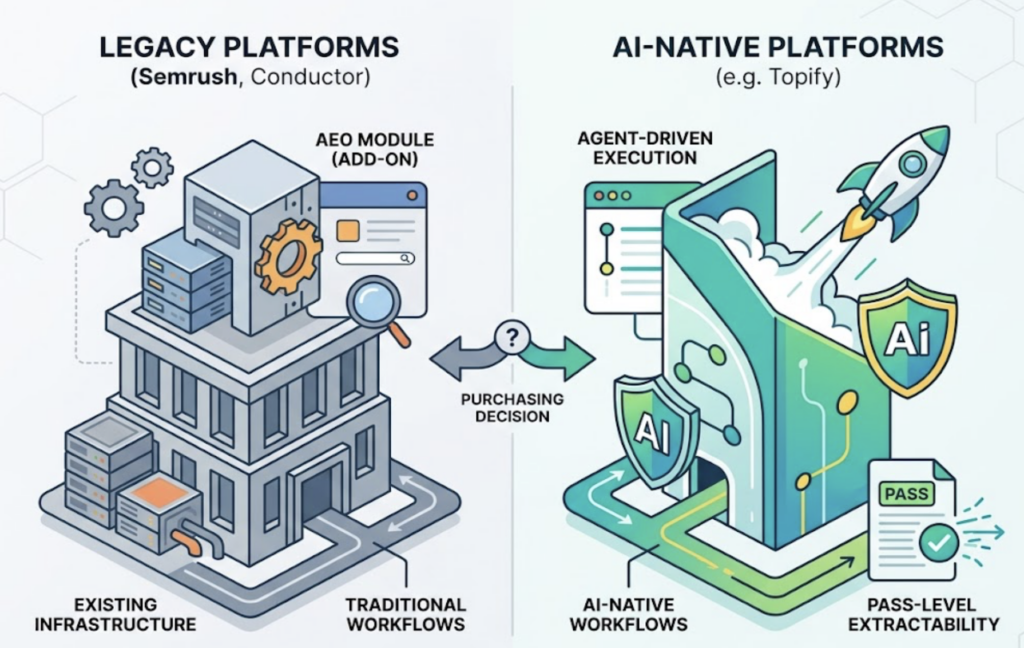

This distinction matters for purchasing decisions. Legacy SEO platforms like Semrush and Conductor have added AEO modules on top of existing infrastructure. These additions often work well for teams already embedded in those ecosystems, but they weren’t built from the ground up for AI-native workflows. Newer platforms like Topify were designed specifically for this environment, prioritizing what the research calls “passage-level extractability” and agent-driven execution over traditional keyword rankings.

Before shortlisting tools, decide what you’re actually trying to measure: AI mention frequency, brand sentiment in AI responses, citation source analysis, or conversion impact from AI-driven discovery. The right tool depends on which of these you’re accountable for.

What G2 Reviewers Keep Praising Across the Category

Strip away the platform-specific language and three themes dominate the positive reviews.

Multi-engine coverage is the most consistently praised capability. Users highlight tools that aggregate data from ChatGPT, Gemini, Perplexity, and Claude into a unified dashboard. The reason this matters: research shows only 11% of cited domains appear across multiple AI platforms. Each engine uses a different indexing strategy. A tool optimized primarily for ChatGPT can leave a brand invisible on Perplexity, which cites nearly 3x as many sources per response as ChatGPT and has grown 287% year-over-year in search volume.

Reporting clarity is the second pillar. Enterprise users consistently highlight platforms that translate complex visibility scores into formats their CMO can read—share of voice, competitor benchmarking, trend lines over time. Raw data without context doesn’t get budget renewed.

Prompt intelligence rounds out the list. Tools that surface which prompts are actually driving AI recommendations—not just generic tracking queries—receive meaningfully higher satisfaction scores. HubSpot’s AEO tool, for instance, is praised for pulling prompts directly from CRM data, ensuring that tracked questions reflect real buyer conversations rather than hypothetical ones.

Topify addresses all three dimensions through its 7-metric framework: Visibility, Volume, Position, Sentiment, Mentions, Intent, and CVR. The inclusion of CVR—Conversion Visibility Rate—is a differentiator that most category tools skip entirely. It connects brand visibility directly to downstream conversion probability, which is the metric that justifies AEO spend in a quarterly review.

The Complaints G2 Reviews Repeat Most

The negative patterns are just as consistent as the positive ones—and more instructive.

The actionability gap is the dominant complaint across first-generation AEO tools. Users describe having dashboards full of data with no clear path to improvement. Seeing a low visibility score is one thing. Knowing what content to create, which source gaps to close, or which prompts to prioritize is another. Tools that stop at tracking face abandonment when users realize the analysis doesn’t generate a next step.

Data latency is the second recurring frustration. Many tools refresh every 24-48 hours. In an environment where AI model updates can shift brand citation patterns overnight, that lag creates a meaningful blind spot. Users of tools like Ahrefs Brand Radar have independently documented significant undercounting of actual mentions versus manual verification—a gap that compounds when teams try to justify spend based on reported numbers.

Pricing opacity is the third pattern. Several enterprise-grade platforms advertise a base subscription but require additional per-engine add-ons that push real costs well above $800 per month per domain. Mid-market teams often discover this after signing. The G2 reviews make it visible upfront if you read past the star rating.
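The pricing pattern reviewers describe is easy to model before signing. It consists of a base fee plus an add-on for each engine beyond those included, multiplied across tracked domains. This Python sketch assumes that structure. The function name and every dollar figure in the example are hypothetical, not quotes from any vendor.

```python
def effective_monthly_cost(
    base_per_domain: float,
    addon_per_engine: float,
    engines: int,
    domains: int,
    included_engines: int = 0,
) -> float:
    """Monthly cost when engines beyond the base plan are billed as add-ons.

    Assumes (hypothetically) that both the base fee and the add-ons are
    charged per tracked domain.
    """
    extra_engines = max(0, engines - included_engines)
    return domains * (base_per_domain + addon_per_engine * extra_engines)

# Hypothetical plan: $500 base covering 1 engine, $120 per extra engine.
one_domain = effective_monthly_cost(500, 120, engines=4, domains=1, included_engines=1)
two_domains = effective_monthly_cost(500, 120, engines=4, domains=2, included_engines=1)
print(one_domain, two_domains)  # 860 1720
```

Plugging a vendor’s real price sheet into the same formula surfaces the gap between the advertised base fee and the actual bill before the contract is signed.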

For teams evaluating options, the actionability gap is the most important filter. An “intelligence center” that shows you data is only half a product in 2026. The category is moving toward what the research describes as “execution engines”—platforms that identify visibility gaps and deploy fixes within the same workflow.

5 AEO Tools with G2 Presence, Compared by What Users Say

| Tool | G2 Status | Top User Praise | Top User Complaint | Best Fit |

|---|---|---|---|---|

| Topify | Emerging Standard | One-Click Agent Execution, 7-metric CVR framework | Newer platform, still building review volume | Teams that need tracking + execution in one workflow |

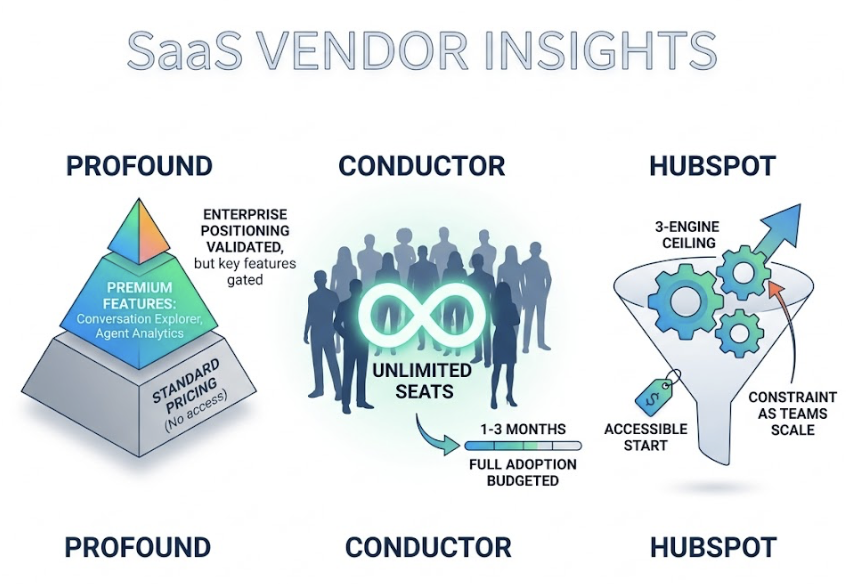

| Profound | G2 Leader | 10+ platform coverage, 200M+ prompt database, SOC 2 | High cost; advanced features gated behind enterprise tier | Fortune 500 brands with compliance requirements |

| Semrush | G2 Leader | Familiar interface; broad SEO/AEO integration | Credit add-on costs; AEO features feel secondary | Teams already in the Semrush ecosystem |

| Conductor | G2 Contender | Unlimited seats; strong customer support | Steep learning curve; UI feels overwhelming | Distributed enterprise teams with budget for onboarding |

| HubSpot AEO | High Momentum | CRM-integrated prompt mapping; $50/mo entry point | Limited to 3 engines; no Claude or Grok tracking | HubSpot users beginning their AEO journey |

A few notes on reading this table: Profound’s enterprise positioning is validated by users, but the features that differentiate it—Conversation Explorer, agent analytics, shopping visibility—sit behind premium tiers that aren’t accessible at standard pricing. Conductor’s “unlimited seats” model is genuinely praised, but users budget 1-3 months for full workflow adoption. HubSpot’s price point makes it an accessible starting point, but the three-engine ceiling becomes a real constraint as teams scale.

Topify’s positioning addresses the actionability complaint directly. Its One-Click Agent Execution allows teams to identify a visibility gap and deploy optimized content to close it within a single workflow, rather than exporting data and building a separate content strategy. Built by former OpenAI researchers and Google SEO practitioners, it targets the 95-98% citation accuracy benchmark that most category tools don’t publish.

The Cross-Platform Coverage Problem Most Buyers Underestimate

Single-engine myopia is the most common—and most costly—mistake in AEO tool selection.

The data is clear: only 11% of cited domains appear across multiple AI platforms. This isn’t a minor gap. It means a brand that dominates ChatGPT citations may be effectively invisible on Perplexity or Google AI Mode, which now has 34% adoption among active searchers.
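The article does not say exactly how the 11% figure was measured. One plausible definition is assumed here: the share of distinct cited domains that at least two platforms cite within a sample of responses. The following minimal Python sketch uses made-up domains and a hypothetical input shape.

```python
from collections import defaultdict

def cross_platform_share(citations: dict[str, set[str]]) -> float:
    """Share of distinct cited domains that appear on two or more platforms.

    `citations` maps a platform name to the set of domains it cited in a
    sample of responses (a hypothetical input shape).
    """
    platform_count: dict[str, int] = defaultdict(int)
    for domains in citations.values():
        for domain in domains:
            platform_count[domain] += 1
    if not platform_count:
        return 0.0
    shared = sum(1 for n in platform_count.values() if n >= 2)
    return shared / len(platform_count)

# Made-up sample: only example-a.com is cited by more than one engine.
sample = {
    "chatgpt": {"example-a.com", "example-b.com", "example-c.com"},
    "perplexity": {"example-a.com", "example-d.com", "example-e.com", "example-f.com"},
    "google_ai": {"example-g.com", "example-h.com"},
}
print(cross_platform_share(sample))  # 0.125
```

Computing this per engine, rather than folding everything into one blended visibility score, exposes a brand that is strong on one platform and absent on the others.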

The platform-level differences matter more than most teams realize:

| AI Platform | Avg. Citations per Response | Update Priority | Search Growth / Adoption |
|---|---|---|---|
| Perplexity | 21.87 | High (< 30 days) | 287% YoY |
| ChatGPT | 7.92 | Medium (< 60 days) | 156% YoY |
| Google AI | ~5-10 | Low (< 90 days) | 34% adoption |

Perplexity’s citation density means it rewards high-frequency content updates differently than ChatGPT does. A strategy calibrated purely on ChatGPT behavior will underperform on Perplexity—and vice versa. Tools that treat all engines as interchangeable miss this structural difference.

The conversion argument reinforces the coverage case. Traditional Google organic search converts at an average of 1.76%. ChatGPT-driven discovery converts at 15.9%—a 9x difference. That gap reflects the intent of users who ask a conversational AI a specific question and receive a direct recommendation. When AI recommends your brand in that context, the user arrives pre-sold.
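The 9x figure follows directly from the two rates quoted above. The following quick Python check uses 1,000 visitors per channel purely as an illustrative assumption. The ratio compares per-visitor rates, not total conversions, which also depend on how much traffic each channel sends.

```python
ORGANIC_CVR = 0.0176  # traditional Google organic search, per the text
CHATGPT_CVR = 0.159   # ChatGPT-driven discovery, per the text

def expected_conversions(visitors: int, rate: float) -> float:
    """Conversions expected from a given number of visitors at a given rate."""
    return visitors * rate

visitors = 1_000  # illustrative assumption, not a figure from the source
organic = expected_conversions(visitors, ORGANIC_CVR)
chatgpt = expected_conversions(visitors, CHATGPT_CVR)
print(f"{organic:.1f} vs {chatgpt:.1f} conversions")  # 17.6 vs 159.0 conversions
print(f"ratio: {CHATGPT_CVR / ORGANIC_CVR:.2f}x")     # ratio: 9.03x
```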

Multi-platform coverage isn’t a premium feature. It’s the baseline requirement for any AEO investment that’s expected to generate measurable results.

One Signal G2 Reviews Keep Pointing Back To

Across positive reviews for high-retention AEO tools, one pattern appears consistently: users stay when they can show results to stakeholders who don’t understand AEO.

That’s a more practical filter than it sounds. A tool might have excellent data accuracy and strong multi-engine coverage, but if the output requires a 30-slide deck to explain to a CMO, adoption stalls. The platforms with the strongest renewal rates translate technical visibility metrics into business language—share of voice, competitive gap analysis, conversion impact—without requiring the marketing team to become AI researchers.

Topify’s 7-metric framework is built around this translation layer. Visibility, Volume, and Position are tracking metrics. Sentiment and Mentions provide brand context. Intent and CVR connect the data to actual business outcomes. The framework is designed to be read across functional teams, not just by the person who set up the tracking.

That’s not a feature. It’s a retention mechanism.

Conclusion

The 2,000% growth in AEO category interest on G2 reflects a real shift in how B2B buyers evaluate purchasing options. Nearly 80% of modern B2B buyers now use AI-generated summaries to research purchases. If your brand isn’t cited in those summaries, you’re not in the consideration set—regardless of your Google rankings.

The G2 review signal for 2026 is consistent: teams are moving away from tools that only track and toward platforms that track, interpret, and execute. The “actionability gap” isn’t a minor UX complaint. It’s the primary driver of tool abandonment in this category.

For teams evaluating AEO platforms, the three filters that matter most are: multi-engine coverage across at least ChatGPT, Gemini, and Perplexity; a clear pathway from visibility data to content action; and reporting outputs that non-technical stakeholders can act on. The platforms that deliver all three are the ones with the strongest retention on G2—and the most repeat mentions in the “What I Like” sections.

Read the dislike sections first. That’s where the real product review starts.

FAQ

What is AEO and how is it different from SEO?

AEO (Answer Engine Optimization) structures content so AI assistants like ChatGPT or Perplexity select and cite it as the direct answer to a user’s question. Traditional SEO focuses on ranking in a list of links. AEO focuses on being the source of truth in a synthesized AI response—a fundamentally different targeting mechanism.

Are there AEO tools specifically reviewed on G2?

Yes. G2 launched a dedicated Answer Engine Optimization category in March 2025. It now includes over 248 listings, ranging from dedicated platforms like Topify and Profound to integrated toolkits from Semrush and Conductor.

What should I look for in G2 reviews for AEO tools?

Prioritize reviews from Verified Current Users who describe specific failure scenarios. Look for mentions of multi-platform coverage (ChatGPT, Gemini, Perplexity together), data refresh frequency, and whether the tool offers actionable outputs or just dashboards. Be cautious of any tool with no critical feedback in the “What I Dislike” sections.

How does Topify compare to other AEO tools on G2?

Topify is positioned around its 7-metric framework and One-Click Agent Execution, which addresses the primary complaint in the category: tools that provide data but no clear path to action. It’s particularly suited for teams that need tracking and execution in a single workflow, rather than exporting insights to a separate content process.

Why does multi-engine coverage matter so much?

Only 11% of cited domains appear across multiple AI platforms. Each engine—ChatGPT, Perplexity, Gemini—uses a different indexing and citation strategy. A brand that’s visible on one platform may be effectively absent on the others. At the same time, AI-driven discovery converts at up to 9x the rate of traditional organic search, which means each engine represents a high-intent audience worth tracking independently.