You rank on page one. Your content is technically clean. Your backlink profile took months to build.

And when someone types “what’s the best tool for [your category]” into ChatGPT, it names three of your competitors. Not you.

This isn’t a traffic anomaly. It’s a structural disconnect, and it’s one of the most common problems brands face in 2026. The signals that get you ranked in Google and the signals that get you recommended by AI are not just different. In several key ways, they’re almost opposite.

Google Ranks Pages. AI Recommends Brands. That’s Not a Small Distinction.

Google’s algorithm is built on a graph model. It crawls the web, indexes pages, and assigns authority based on who links to whom. The output is a ranked list of URLs. You compete for position.

AI platforms like ChatGPT, Perplexity, and Gemini use a fundamentally different mechanism: Retrieval-Augmented Generation (RAG). When someone submits a prompt, the system interprets intent, retrieves relevant documents, and synthesizes them into a single conversational answer. The output isn’t a list of links. It’s a verdict.

The user behavior shift backs this up. The average Google query is roughly 3.4 words. The average AI prompt runs about 23 words. Users aren’t just navigating the web. They’re asking for judgment calls, product picks, and vendor comparisons, and they expect a direct recommendation in return.

Google gives options. AI gives verdicts.

If your brand isn’t structured to earn verdicts, no amount of SEO work will fix the problem.

The 5 Reasons AI Skips You (Even When You Rank #1)

You’re not in the sources AI actually learns from

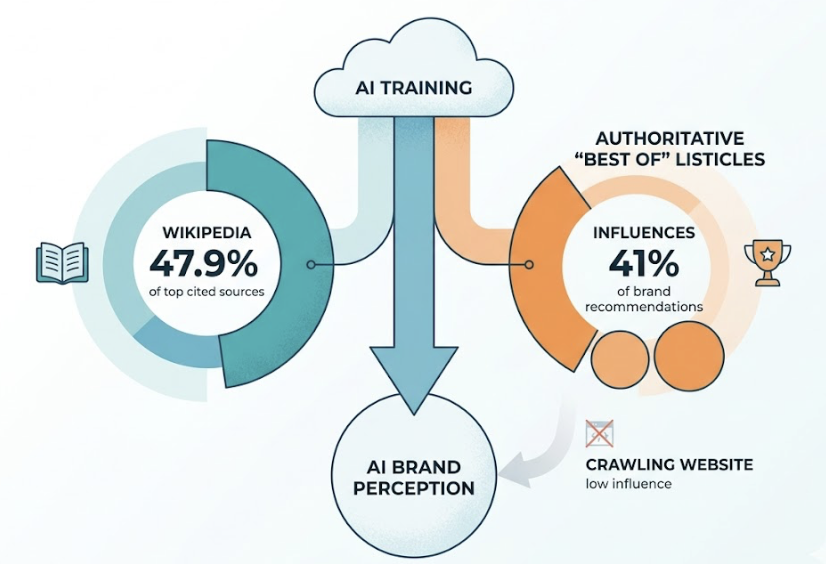

AI systems don’t discover brands just by crawling your website. They build confidence about a brand from the sources they were trained on and the sources they retrieve, and they weight some of those far more heavily than others. Wikipedia alone accounts for 47.9% of ChatGPT’s top cited sources. Authoritative “Best of” listicles influence 41% of brand recommendations in ChatGPT.

If your brand isn’t present in those reference-grade sources, the model’s internal confidence in your brand is low regardless of your domain authority.

You can rank #1 on Google and still be effectively unknown to an AI.

Your brand has no story outside your own domain

LLMs treat brands as entities in a knowledge graph, not just as URLs to index. An entity isn’t just a name. It’s a cluster of attributes: what the brand does, who it’s for, how it compares, and what independent users say about it.

If that entity profile only exists on your website, the AI can’t build a reliable picture. Brands described consistently and positively across at least four non-affiliated forums or publications are 2.8 times more likely to appear in ChatGPT responses. Without cross-platform reinforcement, the model doesn’t have enough data to confidently surface your brand when it counts.

Real users aren’t talking about you where AI listens

Traditional SEO values backlinks. AI systems look for social validation through authentic community engagement. Reddit accounts for 46.7% of Perplexity’s top citations. If real users aren’t discussing your brand in relevant subreddits, comparison threads, or Q&A forums, AI registers that as an absence of endorsement.

That absence is enough for it to name someone else.

Your content is built for clicks, not extraction

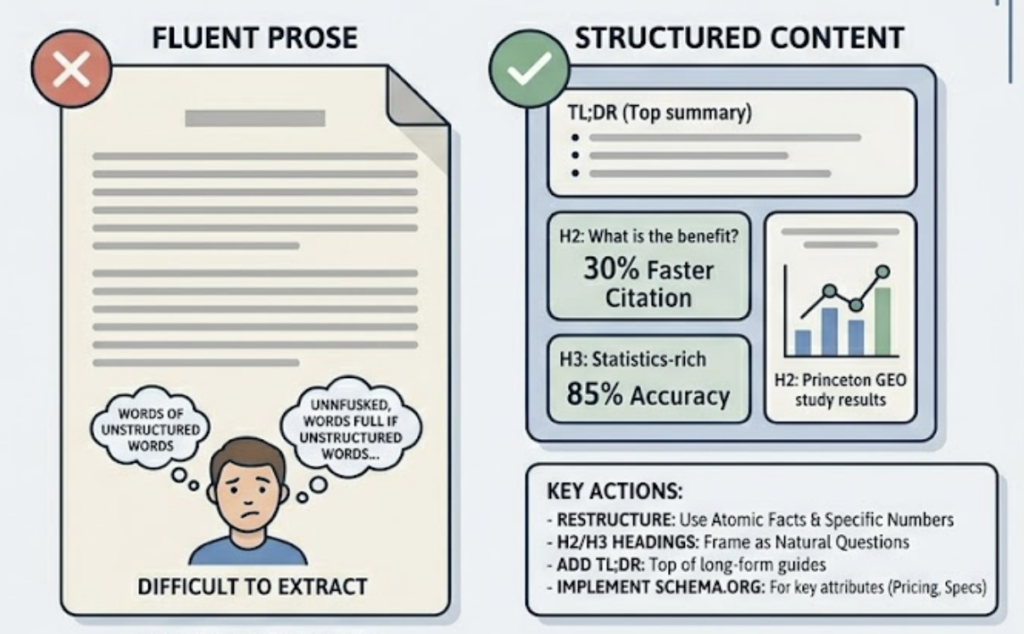

A lot of SEO content is designed to keep readers engaged. AI doesn’t need engagement. It needs extractable facts. The Princeton study on Generative Engine Optimization (GEO), which tested 10,000 queries, found that adding statistics increases AI visibility by up to 40%. Adding citations to credible sources produces a lift of up to 40% as well. Expert quotes contribute around 30%.

Most brands continue to publish prose-heavy, keyword-optimized text that gives AI models nothing concrete to cite or synthesize.

If the model can’t chunk your content into verifiable claims, it won’t use it.

Competitors are earning AI citations while you optimize title tags

As noted above, around 41% of ChatGPT’s brand recommendations trace back to list mentions in “Best of” articles and industry roundups. Traditional backlinks, the metric most SEO teams track carefully, have near-zero direct influence on AI citation probability.

That’s the gap most brands still can’t see.

What AI Brand Visibility Actually Measures

The standard SEO dashboard won’t surface any of this. AI brand visibility is a separate set of metrics, and the gap between them and your current reporting is where most brands are flying blind.

Mention Frequency tracks what percentage of relevant queries include your brand in the AI’s response. Think of it as impressions, except it measures presence inside the answer, not on the results page.

Sentiment Score measures how the AI describes you when it does mention you. Being named isn’t the win. If AI consistently pairs your brand with phrases like “limited integrations” or “better for smaller teams,” that framing affects user decisions downstream, even if your product outperforms the description.

Position Index captures where you land in an AI recommendation list. First mention and fourth mention are not comparable outcomes. AI responses operate with a steeper winner-take-all dynamic than anything in traditional search.
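One rough way to make Position Index concrete is to record the order in which brands are first named in a saved response. The sketch below assumes plain case-insensitive substring matching, and the function names, brand names, and sample text are all hypothetical. Real responses would need alias and word-boundary handling.

```python
from typing import List, Optional


def mention_order(response: str, brands: List[str]) -> List[str]:
    """Return the brands found in the response, ordered by first mention."""
    text = response.lower()
    found = []
    for brand in brands:
        idx = text.find(brand.lower())  # -1 means the brand is absent
        if idx != -1:
            found.append((idx, brand))
    return [brand for _, brand in sorted(found)]


def position_of(response: str, brand: str, brands: List[str]) -> Optional[int]:
    """1-based position of `brand` among mentioned brands, or None if absent."""
    order = mention_order(response, brands)
    return order.index(brand) + 1 if brand in order else None


# Hypothetical response text and brand list, for illustration only.
sample = "For small teams, Bolt is a solid pick. Acme is also popular, and some users prefer Cobalt."
tracked = ["Acme", "Bolt", "Cobalt", "Delta"]
print(mention_order(sample, tracked))
print(position_of(sample, "Acme", tracked))
```

Averaging `position_of` over many runs of the same prompt gives a steadier number than any single response.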

Entity Confidence is newer, and it’s arguably the most telling. Only 30% of brands maintain consistent visibility across multiple regenerations of the same query. If you appear in AI responses sometimes but not reliably, your brand has an entity confidence problem, not just a coverage problem.

Together, these metrics form what’s called Share of Model: the AI-era equivalent of Share of Voice. You measure it by testing a set of relevant prompts, tracking how often your brand appears across multiple runs, and comparing that rate against competitors in your category.
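The measurement loop described above can be sketched in a few lines. This is a minimal illustration, not a standard formula: it assumes you have already collected response text from repeated runs, it matches brands by simple substring, and it treats Share of Model as each brand’s mention rate normalized across the brands you track. The function names and sample data are hypothetical.

```python
from collections import Counter
from typing import Dict, List


def mention_rates(responses: List[str], brands: List[str]) -> Dict[str, float]:
    """Fraction of responses that name each brand at least once."""
    counts = Counter()
    for response in responses:
        text = response.lower()
        for brand in brands:
            if brand.lower() in text:
                counts[brand] += 1
    total = len(responses)
    return {b: (counts[b] / total if total else 0.0) for b in brands}


def share_of_model(rates: Dict[str, float]) -> Dict[str, float]:
    """Normalize mention rates so they sum to 1 across tracked brands (assumed definition)."""
    total = sum(rates.values())
    return {b: (r / total if total else 0.0) for b, r in rates.items()}


# Hypothetical responses collected from repeated runs of relevant prompts.
collected = [
    "Try Acme or Bolt.",
    "Bolt is the best.",
    "Bolt and Cobalt are good.",
    "Acme works.",
]
rates = mention_rates(collected, ["Acme", "Bolt", "Cobalt"])
print(rates)
print(share_of_model(rates))
```

Keeping the raw mention rate alongside the normalized share matters, because a brand can hold a large share of a category that AI rarely answers with any brand at all.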

You Can’t Fix What You Can’t See

Most brands today have no idea what their AI visibility looks like. Unlike Google Search Console, which gives you a direct feedback loop of impressions, clicks, and positions, AI platforms are black boxes. The same query can produce different answers at different times. Your brand might appear consistently in ChatGPT and be completely absent from Perplexity.

This is the traceability gap, and it’s the reason most GEO efforts stall before they start.

Topify addresses this directly. Its Visibility Tracking lets you run specific prompts across major AI platforms and see exactly where your brand appears, or doesn’t, across ChatGPT, Gemini, Perplexity, and others. The Source Analysis feature goes further, identifying which third-party domains are driving AI recommendations for your competitors. You can see the citation gap in concrete terms rather than hypothesizing about it.

The starting point isn’t optimization. It’s establishing a baseline. Which prompts trigger competitor mentions? Which ones ignore your brand entirely? Which third-party sources are building the AI citations you don’t have yet?
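A baseline can start as a simple gap report. The sketch below assumes you have saved several responses per prompt and want to know which prompts never mention your brand and which competitors show up instead. The function name, prompts, and brands are hypothetical, and matching is plain substring search. It does not answer the third question about which sources drive citations, since that needs the citation data a platform exposes.

```python
from typing import Dict, List


def baseline_gaps(
    runs: Dict[str, List[str]], brand: str, competitors: List[str]
) -> Dict[str, List[str]]:
    """Map each prompt where `brand` never appears to the competitors that do."""
    gaps = {}
    for prompt, responses in runs.items():
        texts = [r.lower() for r in responses]
        if any(brand.lower() in t for t in texts):
            continue  # brand appeared at least once, so this prompt is not a gap
        gaps[prompt] = [
            c for c in competitors if any(c.lower() in t for t in texts)
        ]
    return gaps


# Hypothetical prompts and saved responses.
saved_runs = {
    "best crm for startups": ["Bolt and Cobalt lead.", "Bolt is popular."],
    "crm with invoicing": ["Acme and Bolt both fit."],
    "crm for nonprofits": ["It depends on your budget."],
}
print(baseline_gaps(saved_runs, "Acme", ["Bolt", "Cobalt"]))
```

Prompts that map to an empty list are ones where no tracked brand is named, which is a different problem from losing the mention to a competitor.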

Track it. Map it. Then act.

3 Moves That Actually Improve AI Brand Visibility

Build content AI can actually cite

The Princeton GEO study confirmed that structured, statistics-rich content consistently outperforms fluent prose for AI citations. The practical implication: restructure your cornerstone content around atomic facts. Each section should contain standalone, extractable claims backed by specific numbers. Use H2 and H3 headings framed as natural questions. Add a TL;DR at the top of long-form guides. Implement Schema.org markup so AI systems can extract your brand’s attributes, pricing, and product specs without inferring from prose.
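For the Schema.org step, one common approach is JSON-LD embedded in a `<script type="application/ld+json">` tag. The sketch below builds a basic `Product` object with an `Offer`, using only standard Schema.org property names. The helper name and the product values are hypothetical, and which properties a given AI system actually reads is not something this example can guarantee.

```python
import json


def product_jsonld(name: str, description: str, brand: str,
                   price: str, currency: str) -> str:
    """Build a Schema.org Product description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "brand": {"@type": "Brand", "name": brand},
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": currency,  # ISO 4217 code, e.g. "USD"
        },
    }
    return json.dumps(data, indent=2)


# Hypothetical product values, for illustration only.
markup = product_jsonld(
    name="Acme CRM",
    description="CRM for teams of 5 to 50 with built-in invoicing.",
    brand="Acme",
    price="29.00",
    currency="USD",
)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The description field is a good place for one of the standalone, number-backed claims mentioned above, since it travels with the markup.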

The bar isn’t “informative.” It’s “extractable.”

Expand your third-party footprint

Between 82% and 85% of AI citations come from third-party sources. Your own domain contributes less than most marketing teams expect. The brands earning AI recommendations are investing in authentic community presence on Reddit, inclusion in industry roundups and authoritative listicles, and publishing original research with verifiable data points.

This isn’t about gaming AI. It’s about building the kind of cross-platform brand presence that AI systems interpret as consensus, not self-promotion. Those are different things, and the model can tell the difference.

Monitor sentiment, not just mentions

Visibility alone isn’t the goal. If an AI mentions your brand but consistently frames it with negative attributes, that’s a messaging problem dressed up as a visibility win. Topify’s Sentiment Analysis tracks how AI platforms characterize your brand compared to competitors, so you can identify where the framing is off and correct it through targeted content and external PR.

Brands that run systematic GEO campaigns show what’s possible. A building materials supplier achieved a 540% increase in Google AI Overview mentions after restructuring content around user intent and AI-friendly structure. An e-commerce brand saw a 312% increase in organic traffic after a six-month GEO campaign. Visitors arriving from AI sources also tend to convert at significantly higher rates than standard organic traffic, with estimates ranging from 4x to 23x, because they’ve already received a recommendation before clicking.

The opportunity is large. But only if you can measure your starting point first.

Conclusion

SEO built the foundation. It’s not being torn down.

But the rules for what gets built on top of it have shifted. AI systems don’t reward the brands that optimized hardest for crawlers. They recommend the brands with the clearest entity definition, the strongest cross-platform consensus, and the most extractable content. Those are different skills, and most SEO playbooks haven’t caught up yet.

The gap between ranking and being recommended is real, measurable, and closeable. But only if you can see it first.

FAQ

Is GEO replacing SEO?

No. GEO layers on top of traditional SEO; it doesn’t replace it. Your existing rankings and domain authority are part of the “source discovery” phase, where AI systems identify which pages to retrieve. Many AI citations still come from pages already ranking in Google’s top 10. But GEO determines whether a page, once found, gets synthesized and named in the AI’s actual response. You need both layers working.

How long does it take to improve AI brand visibility?

Most brands see measurable movement within three to six months. A practical starting sequence: establish your baseline in the first 10 days, implement structural content changes (statistics, schema markup, expert citations) in the following two weeks, then shift focus to third-party expansion through Reddit, media outreach, and industry publications. Some brands have reported significant AI Overview lift within six months of systematic implementation.

Which AI platforms should I prioritize?

It depends on your audience. B2B and enterprise brands typically get more value from prioritizing Perplexity and ChatGPT. B2C and e-commerce brands should focus on Google AI Overviews and ChatGPT. Technical audiences tend to use Claude and Perplexity for source-heavy queries. The practical answer: track all major platforms first, then allocate optimization effort based on where your target audience is actually making decisions.