Your Google Alerts are firing. Mention is tracking sentiment. Brandwatch is pulling social data. And somewhere in your pipeline, deals are quietly dying because a buyer asked ChatGPT “which CRM should I use” and your brand never showed up.

That’s the blind spot traditional brand monitoring can’t fix.

The problem isn’t that your tools are broken. It’s that they were built for a different version of the internet — one where information lived in crawlable pages and “being mentioned” meant something. In the generative era, AI doesn’t retrieve your brand. It synthesizes a recommendation, on the fly, in a private session that no spider ever indexes. If you’re not tracking what those recommendations say, you’re not doing brand monitoring. You’re doing archaeology.

Your Brand Monitoring Dashboard Is Missing an Entire Channel

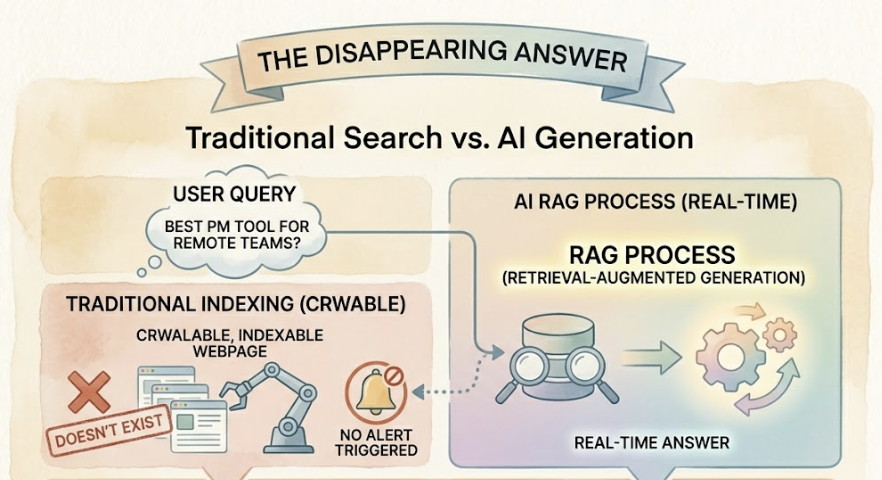

Traditional tools were designed around one core mechanic: web crawling. They index static, publicly accessible text and match it against keywords. That worked fine when search was deterministic — when ranking in position one meant a predictable percentage of clicks.

AI search is probabilistic. When a user asks ChatGPT “which project management tool is best for remote teams,” the answer is generated in real time, often through Retrieval-Augmented Generation (RAG), in which the model retrieves sources and composes a fresh response from them. That answer doesn’t exist as an indexable webpage. It never gets crawled. It never triggers an alert.

The scale of this gap is significant. AI search queries now average 23 words, compared to four for traditional search. Sessions run about six minutes on average. These aren’t quick lookups — they’re discovery conversations. And brands that rely on legacy “set it and forget it” dashboards are invisible for all of them.

That’s not a tool configuration problem. It’s a structural mismatch.

The 6 Metrics That Actually Matter in AI Brand Monitoring

Moving from traditional monitoring to AI visibility monitoring means replacing one question — “are we being mentioned?” — with six better ones.

1. Visibility Rate: Are You in the Answer at All?

Visibility Rate measures the percentage of relevant prompts where your brand appears in the AI response. It’s the foundational metric, and the one most teams discover they’ve been ignoring.

Unlike organic rankings, AI visibility is probabilistic. Your brand might appear in 40% of responses to a specific prompt one week and 60% the next, depending on which sources the model retrieves and how it samples its answer. Benchmarking helps put your number in context:

- 0-10%: Invisible. Your brand has no meaningful presence in the AI discovery layer.

- 10-30%: Low. Significant gaps exist in your entity authority.

- 30-60%: Moderate. You’re a known player but not a default recommendation.

- 60-80%: Strong. You’re consistently included.

- 80%+: Dominant. You’re effectively the AI’s default answer.

Most brands that check for the first time land between 10% and 30%. That’s the gap.
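As a rough illustration of how this metric could be computed, the sketch below counts how many stored AI responses mention a brand and maps the result to the bands above. The brand name `AcmeCRM`, the sample responses, and the choice to put boundary values (exactly 30%, for example) in the higher band are all assumptions made for the example, not any particular platform's method.

```python
import re


def brand_mentioned(response: str, brand: str) -> bool:
    """True if the brand appears as a whole word, ignoring case."""
    pattern = r"\b" + re.escape(brand) + r"\b"
    return re.search(pattern, response, flags=re.IGNORECASE) is not None


def visibility_rate(responses: list[str], brand: str) -> float:
    """Percentage of responses that mention the brand at least once."""
    if not responses:
        return 0.0
    hits = sum(brand_mentioned(r, brand) for r in responses)
    return 100.0 * hits / len(responses)


def benchmark_band(rate: float) -> str:
    """Map a rate to the bands above. Boundary values fall into the
    higher band, which is an assumption of this sketch."""
    if rate >= 80:
        return "Dominant"
    if rate >= 60:
        return "Strong"
    if rate >= 30:
        return "Moderate"
    if rate >= 10:
        return "Low"
    return "Invisible"


# Hypothetical responses collected for one prompt.
sample_responses = [
    "For startups, RivalCRM is the usual default.",
    "AcmeCRM and RivalCRM are both worth a look.",
    "Most teams start with RivalCRM or a spreadsheet.",
    "If budget is tight, consider acmecrm.",
    "RivalCRM has the largest integration catalog.",
]

rate = visibility_rate(sample_responses, "AcmeCRM")
print(f"{rate:.0f}% -> {benchmark_band(rate)}")
```

Whole-word matching is a deliberate simplification: it misses misspellings and product nicknames, so a real audit would likely need a list of aliases per brand.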

2. Sentiment Score: Being Mentioned Isn’t Enough

An AI can mention your brand and still hurt you. “Reliable but expensive.” “Powerful but difficult to integrate.” “Worth considering if budget isn’t a concern.” These are visibility wins that erode purchase intent.

Sentiment scoring uses NLP to quantify how the AI frames your brand within its answer: not just whether you appear, but whether the AI is acting as an advocate or a cautious recommender. A brand with high visibility and consistently neutral or negative sentiment has a reputation problem in the material the model draws on, and traditional social listening is unlikely to surface it before it hits the pipeline.

3. Position Tracking: First Is Not the Same as Fifth

In a synthesized AI response, order carries weight. Being the first brand ChatGPT recommends is fundamentally different from appearing as the fourth item in a “you might also consider” list. First-position brands earn higher user trust and better retention.

Position Tracking also includes Word Count Share — how much of the AI’s response is actually about your brand versus your competitors. A brand that gets two sentences while a rival gets two paragraphs is losing even when both names appear.
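One simple way to approximate both ideas is sketched below: order brands by where they are first mentioned, and measure Word Count Share as the fraction of words that sit in sentences naming each brand. The sentence-splitting rule, the substring matching, and the brand names `AcmeCRM` and `RivalCRM` are assumptions chosen for illustration.

```python
import re


def mention_order(response: str, brands: list[str]) -> list[str]:
    """Brands sorted by the offset of their first mention; absent brands are dropped."""
    lowered = response.lower()
    found = [(lowered.find(b.lower()), b) for b in brands]
    return [b for pos, b in sorted(found) if pos >= 0]


def word_count_share(response: str, brands: list[str]) -> dict[str, float]:
    """Fraction of all words that sit in sentences mentioning each brand.

    A sentence naming two brands counts toward both, so the shares
    can add up to more than 1.0.
    """
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", response.strip()) if s]
    total = sum(len(s.split()) for s in sentences)
    shares = {}
    for brand in brands:
        words = sum(len(s.split()) for s in sentences if brand.lower() in s.lower())
        shares[brand] = words / total if total else 0.0
    return shares


# Hypothetical AI answer.
answer = (
    "For remote teams, RivalCRM is a strong default. "
    "RivalCRM offers automation and a generous free tier. "
    "You might also consider AcmeCRM."
)

print(mention_order(answer, ["AcmeCRM", "RivalCRM"]))
print(word_count_share(answer, ["AcmeCRM", "RivalCRM"]))
```

In this made-up answer the rival is named first and owns two of the three sentences, which is the "two sentences versus two paragraphs" situation in miniature.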

4. Competitor Share: Who AI Recommends Instead of You

Competitor Share measures how often rivals appear in the same prompt universe where you’re trying to win visibility. This is where the real strategic intelligence lives.

If a competitor holds 54% visibility for “best CRM for startups” while you hold 22%, that gap doesn’t close with better homepage copy. It requires understanding what the AI is retrieving for them that it isn’t retrieving for you. Competitor Share points directly to that question.

5. Source Analysis: Why AI Recommends Them, Not You

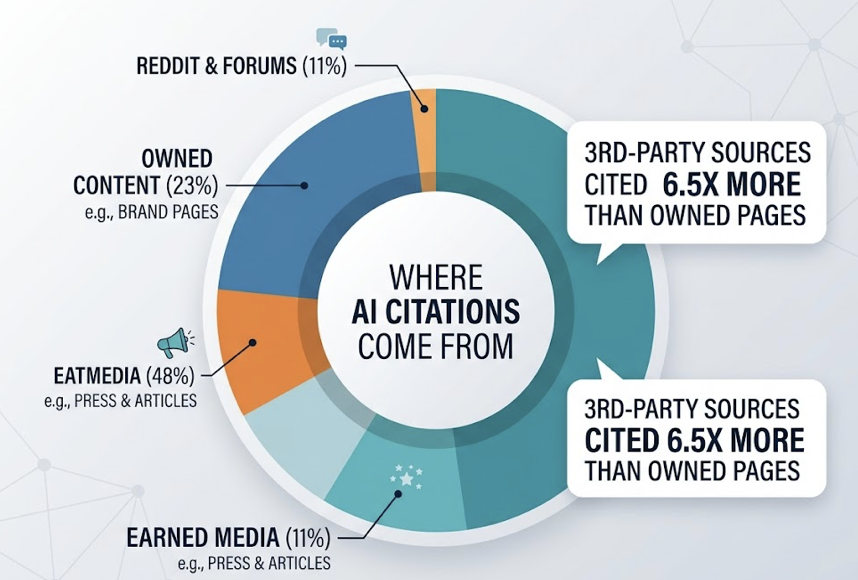

AI models ground their answers in retrieved sources. Source Analysis maps which specific domains and URLs the AI is citing when it recommends your brand — or your competitors.

The research on this is unambiguous: third-party sources are cited 6.5 times more often than brand-owned pages. Earned media accounts for 48% of AI citations. Review platforms like G2 and Capterra account for 11%. Reddit and forums account for another 11%. Owned content, despite being the asset brands invest most heavily in, accounts for just 23% — and primarily for technical specifics, not recommendations.

If your competitor is being cited because they have a G2 Leader badge and 500 fresh reviews, and your profile is two years old, Source Analysis tells you exactly what to fix.
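A minimal sketch of the idea, assuming you have already collected the URLs an AI cited in answers that recommend you and in answers that recommend a rival: count citations per domain, then list the domains that show up for the rival but never for you. The URLs use placeholder `.example` domains and the function names are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse


def citation_domains(urls: list[str]) -> Counter:
    """Count cited domains, ignoring a leading 'www.'."""
    counts = Counter()
    for url in urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        if host:
            counts[host] += 1
    return counts


def source_gap(own_urls: list[str], rival_urls: list[str]) -> list[tuple[str, int]]:
    """Domains cited for the rival but never for us, most-cited first."""
    own = citation_domains(own_urls)
    rival = citation_domains(rival_urls)
    return [(domain, n) for domain, n in rival.most_common() if domain not in own]


# Placeholder citation lists for illustration only.
rival_urls = [
    "https://www.reviews.example/rivalcrm",
    "https://reviews.example/rivalcrm/pricing",
    "https://news.example/2023/rivalcrm-launch",
    "https://rivalcrm.example/features",
]
own_urls = [
    "https://acmecrm.example/docs",
    "https://news.example/2022/acmecrm",
]

print(source_gap(own_urls, rival_urls))
```

The output is a prioritized list of third-party domains where the rival has coverage and you do not, which is the kind of gap a review campaign or PR push would target.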

6. CVR (Conversion Visibility Rate): Does Any of This Drive Revenue?

Not all AI visibility converts equally. CVR estimates the likelihood that a specific AI recommendation drives a user toward a brand interaction. It accounts for recommendation prominence, the intent alignment of the prompt, and whether the AI’s answer is referential (encouraging a visit) or summarized (ending the search right there).

The conversion upside is real: AI-referred traffic converts at 4.4 to 11 times the rate of traditional search traffic. But up to 70.6% of that traffic gets misclassified as “Direct” in Google Analytics because AI platforms frequently strip referrer headers. Brands that don’t track CVR can’t see this traffic, and they can’t optimize for it.

What “Set It and Forget It” Tools Actually Get Wrong

The failure of legacy monitoring isn’t just a feature gap. It’s three specific logical errors that compound over time.

The Static Text Fallacy. Traditional tools track what’s published. AI brand monitoring tracks what’s synthesized. A brand can have a top-ranking Google page and still be absent from AI-generated answers: 80% of AI Overview sources don’t rank organically for the queried keyword. High Google rankings don’t predict AI inclusion.

Equating mentions with recommendations. A brand name appearing in a list of “troubled companies” reads as a win in a traditional media monitoring dashboard. In AI search, that mention can actively damage purchase intent. Legacy tools lack the semantic depth to distinguish between being praised and being used as a cautionary example.

The attribution vacuum. Because AI platforms strip referrer headers, up to 70.6% of AI-referred traffic registers as direct in Analytics. Brands see flat organic traffic and assume their content isn’t working. In reality, they may be winning the highest-intent buyers in their market — buyers who searched through AI and arrived pre-qualified. Without AI visibility tracking, that signal is invisible.

Building an AI Brand Monitoring Stack That Actually Works

The right approach isn’t to replace traditional tools. It’s to add a layer of semantic intelligence on top of them.

Layer 1, traditional monitoring (reactive): Keep using social listening and media monitoring for immediate crisis response, community engagement, and viral trend detection. These tools still do their original job well.

Layer 2, AI visibility monitoring (strategic): This is where the six metrics above get tracked. Platforms like Topify use a method called Swarm Probing, which sends thousands of prompt variations across different query nodes to stabilize the probabilistic data and produce statistically reliable Visibility Scores across ChatGPT, Gemini, Perplexity, and AI Overviews.
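The article does not describe how Swarm Probing works internally, but basic sampling statistics show why volume matters. The sketch below uses the standard Wilson score interval for a proportion; treating each sampled response as an independent trial is a simplifying assumption, and the function name is hypothetical.

```python
import math


def wilson_interval(hits: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z=1.96 gives roughly 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = hits / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return center - half, center + half


# Same observed 40% visibility, very different certainty.
for hits, n in [(4, 10), (400, 1000)]:
    low, high = wilson_interval(hits, n)
    print(f"{hits}/{n}: {low:.1%} to {high:.1%}")
```

With 10 samples, an observed 40% is compatible with a true rate anywhere from roughly 17% to 69%. With 1,000 samples the range narrows to roughly 37% to 43%, which is tight enough to tell a real week-over-week shift from noise.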

The monitoring cadence that works for most teams:

- Weekly: Check prompt-level visibility to catch volatile shifts or competitor surges.

- Monthly: Review sentiment trends and citation share to guide content updates.

- Quarterly: Run a full competitive benchmarking audit to inform executive strategy.

The weekly check catches emergencies. The monthly review drives content decisions. The quarterly audit aligns the team on where to invest.

What These Metrics Look Like in Practice

A B2B SaaS company selling CRM software to startups noticed something off. Traditional dashboards showed stable organic traffic. But pipeline targets were consistently missed.

They ran an AI visibility audit and found their Visibility Rate for “best CRM for startups” was 22%. A major competitor held 54%.

Source Analysis told them why. For 65% of AI recommendations in that prompt cluster, the model was citing G2 and a 2023 TechCrunch article. The competitor had a G2 Leader badge and 500+ recent reviews. The brand’s G2 profile hadn’t been updated in two years.

They also discovered that the competitor’s landing page followed what’s called the “Ski Ramp” pattern: 44.2% of AI citations come from the first 30% of a page’s text. The competitor front-loaded answers and statistics. The brand’s own pages buried the value proposition below the fold.

The intervention was structured. They launched a campaign to gather 100 new G2 reviews focused on the startup use case. They rewrote product pages to increase entity density from 5% to 18%, placing direct answers above the fold. They added Author Schema and JSON-LD markup to improve entity clarity.
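The case study does not show the markup that was added. As a hedged sketch, author information is commonly expressed in JSON-LD with schema.org's `Article` type and an `author` of type `Person`; reading "Author Schema" that way is an assumption. The helper name, the person, and the `example.com` URLs below are placeholders.

```python
import json


def article_jsonld(headline: str, author_name: str, author_url: str,
                   org_name: str, org_url: str) -> str:
    """Build a JSON-LD <script> tag describing an article and its author."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {
            "@type": "Person",
            "name": author_name,
            "url": author_url,
        },
        "publisher": {
            "@type": "Organization",
            "name": org_name,
            "url": org_url,
        },
    }
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder values for illustration only.
snippet = article_jsonld(
    headline="Choosing a CRM for a high-growth startup",
    author_name="Jane Doe",
    author_url="https://example.com/team/jane-doe",
    org_name="AcmeCRM",
    org_url="https://example.com",
)
print(snippet)
```

The resulting tag goes in the page's HTML. Generating it from structured fields rather than hand-editing keeps author and organization names consistent across pages.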

Six weeks later: Visibility Rate moved from 22% to 38%. Average position improved from 4th to 2nd. CVR increased by 115% as the AI shifted from describing the brand as “an alternative option” to “a top-tier choice for high-growth startups.” Direct traffic increased by 25%, converting at 10.21% — matching the profile of pre-qualified AI referral traffic.
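When reporting results like these, it helps to keep percentage points and relative change apart. A move from 22% to 38% is a gain of 16 percentage points, or about 73% in relative terms, while a figure like the 115% CVR increase is presumably a relative change. A minimal sketch of the arithmetic, with hypothetical function names:

```python
def point_change(before: float, after: float) -> float:
    """Difference in percentage points between two rates given in percent."""
    return after - before


def relative_change(before: float, after: float) -> float:
    """Relative change in percent; 'before' must be non-zero."""
    if before == 0:
        raise ValueError("relative change is undefined for a zero baseline")
    return 100.0 * (after - before) / before


points = point_change(22.0, 38.0)
relative = relative_change(22.0, 38.0)
print(f"+{points:.0f} points, +{relative:.1f}% relative")
```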

None of that would have been visible without AI brand monitoring.

Conclusion

The “set it and forget it” era of brand monitoring made sense when brand discovery happened in crawlable, static text. That world is gone.

AI doesn’t retrieve your brand. It synthesizes a recommendation, draws from sources you may not control, and delivers it to a buyer who may never click through to verify. If you’re not tracking Visibility Rate, Sentiment, Position, Competitor Share, Source Analysis, and CVR, you’re managing half the game.

The teams building AI visibility monitoring into their stack now aren’t waiting for traditional search to come back. They’re learning to measure influence in the channel that’s already driving the highest-converting traffic in digital marketing history.

Start tracking your AI brand visibility with Topify.

Frequently Asked Questions

Is AI brand monitoring different from social listening?

Yes. Social listening is reactive — it tracks what humans write about your brand on public platforms. AI brand monitoring is proactive — it queries generative models directly to understand how your brand is synthesized and recommended during the AI discovery phase. One reads human conversations. The other reads what AI has learned.

How often should I check AI brand monitoring metrics?

A weekly-monthly-quarterly cadence works well for most teams. Weekly checks catch volatile shifts in visibility or sudden competitor surges. Monthly reviews guide content and citation strategy. Quarterly audits produce the competitive benchmarking data that informs budget allocation and executive reporting.

Can AI brand monitoring show me why competitors rank higher in AI answers?

Yes. Source Analysis identifies the “source gap” — the specific third-party domains the AI is retrieving for your competitors that it isn’t retrieving for you. That list tells you exactly where to focus PR, review acquisition, and content investment.

How do I get started if I have no baseline data?

Start by defining a Prompt Universe of 20-50 conversational questions your customers actually ask. Run a manual audit across ChatGPT, Gemini, and Perplexity to record your initial Visibility Rate and Sentiment Score. That baseline identifies your most urgent gaps and builds the business case for automated tracking with a platform like Topify.