Your competitor just got recommended by ChatGPT to thousands of potential buyers. Your brand didn’t show up once.

You didn’t lose a Google ranking. You didn’t get a bad review. You simply don’t exist in the answer the AI gave — and you had no idea it happened.

That’s the core problem with AI brand monitoring today. Most marketing teams are watching the wrong channels.

Your Brand Might Be a Ghost in AI Search Right Now

According to research, approximately 60% of brands are currently misrepresented or ignored by AI models. Not penalized. Not ranked lower. Just absent.

This isn’t a niche problem. ChatGPT had 800 million weekly active users by April 2025 and reached 810 million monthly active users by November of the same year. These aren’t early adopters experimenting with a toy. These are your buyers, using AI as their first stop for product research.

Among B2B decision-makers, 42% now use an LLM as the very first step in their procurement process. For consumer brands, 50% of shoppers actively seek out AI search engines when making buying decisions.

If you don’t know what those AI systems are saying about your brand, you’re flying blind on a channel that’s already influencing your pipeline.

Why Social Listening Won’t Save You Here

Here’s the thing most marketing teams get wrong: they assume their existing brand monitoring stack covers AI.

It doesn’t.

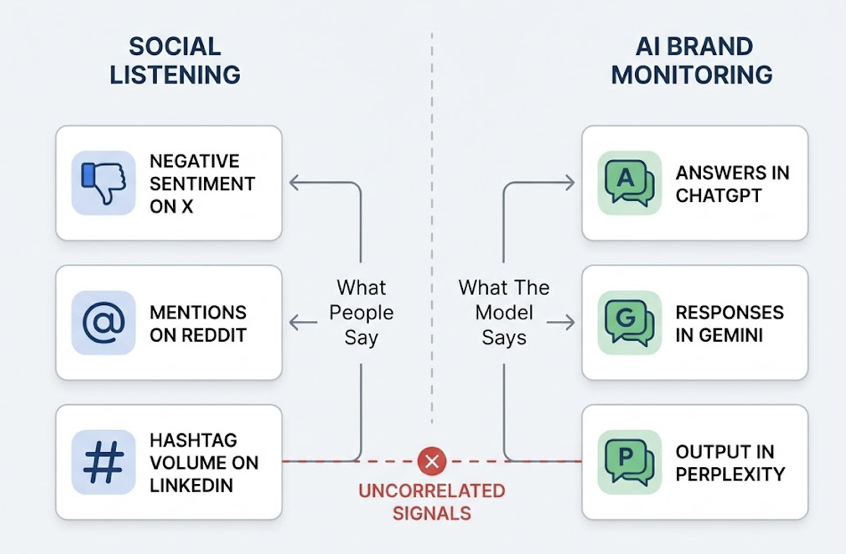

Social listening tracks what people say — sentiment on X, mentions on Reddit, hashtag volume on LinkedIn. AI brand monitoring tracks what the model says across ChatGPT, Gemini, Perplexity, and similar platforms. These two signals are frequently uncorrelated.

A brand can run a viral campaign that spikes social sentiment to 80% positive while remaining invisible to ChatGPT, because viral social content doesn’t automatically feed into the model’s authoritative training data or structured knowledge base.

| | Social Listening | AI Brand Monitoring |
|---|---|---|
| Data Source | Social APIs, forums, blogs | LLM outputs, RAG retrieval, training data |
| What It Tracks | Mention volume, hashtags, human sentiment | Brand visibility, citation rate, AI recommendation accuracy |
| Core Signal | Peer-to-peer influence | Algorithm-to-user synthesis |
| Trust Factor | Social proof | Authoritative synthesis |

The difference matters. Research from Bain & Company shows 62% of consumers now trust AI to guide their brand decisions, putting AI recommendations on par with traditional search during key purchase moments.

When ChatGPT recommends a vendor, it’s not linking to ten options and letting the user decide. It’s synthesizing reviews, specs, and industry sentiment into a single narrative. The user often accepts that narrative without further research.

That’s not a mention. That’s a verdict.

The 5 Metrics That Actually Matter for AI Brand Visibility

Tracking brand performance in AI platforms requires a different measurement framework than anything you’re using today. Here are the five metrics worth building around.

1. Brand Mention Rate

The percentage of relevant queries where your brand appears in the AI response. If 20 prompts about “best enterprise security software” generate 12 responses that mention your brand, your mention rate is 60%.

Watch your rate on unbranded discovery queries — questions like “What are the best tools for X?” — not just branded ones. A 100% rate on branded queries with a near-zero rate on category queries signals a serious GEO gap.
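
To make the arithmetic concrete, here is a minimal sketch of a mention-rate calculation. The brand name "Acme", the sample responses, and the `mention_rate` helper are hypothetical; real responses would come from whichever monitoring tool or API you use. Matching is a simple case-insensitive whole-word search, which is an assumption and will miss misspellings or product-line names.

```python
import re

def mention_rate(responses, brand):
    """Return the percentage of responses that mention `brand`.

    Matching is case-insensitive and whole-word. This is a simplification:
    it assumes the brand name starts and ends with a letter or digit.
    """
    if not responses:
        return 0.0
    pattern = re.compile(r"\b" + re.escape(brand) + r"\b", re.IGNORECASE)
    hits = sum(1 for text in responses if pattern.search(text))
    return 100.0 * hits / len(responses)

# Hypothetical sample: 20 responses to unbranded category prompts,
# 12 of which mention the brand, plus 5 responses to branded prompts.
category_responses = ["Acme is a strong option."] * 12 + ["Consider other vendors."] * 8
branded_responses = ["Acme offers endpoint protection."] * 5

print(mention_rate(category_responses, "Acme"))  # 60.0
print(mention_rate(branded_responses, "Acme"))   # 100.0
```

Keeping the branded and unbranded rates as separate numbers is the point: a single blended rate would hide the category gap described above.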

2. AI Brand Sentiment Score

This isn’t standard sentiment analysis. It evaluates how the model frames your brand. Does it describe your product as a reliable solution, or as a “legacy tool with high switching costs”?

Advanced platforms score this on a 0-100 scale. Above 80 indicates a consistently positive recommendation pattern. Below 50 means the AI experience for your brand is net-negative — and you probably don’t know it yet.

3. Brand Share of Voice in AI

Your mention rate in isolation tells you very little. What matters is how it compares to your top three to five competitors. If you appear in 40% of category responses but a competitor appears in 75%, that gap is costing you pipeline — quietly, every day.

The formula: (Your brand mentions ÷ Total mentions of all brands in category) × 100.
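
The formula translates directly into code. The sketch below assumes you already have mention counts per brand for a category; the brand names and counts are made up for illustration.

```python
def share_of_voice(mention_counts, brand):
    """(brand mentions / total mentions of all brands in category) * 100."""
    total = sum(mention_counts.values())
    if total == 0:
        return 0.0
    return 100.0 * mention_counts.get(brand, 0) / total

# Hypothetical mention counts across one category's prompt set.
counts = {"YourBrand": 40, "CompetitorA": 75, "CompetitorB": 35}

print(round(share_of_voice(counts, "YourBrand"), 1))    # 26.7
print(round(share_of_voice(counts, "CompetitorA"), 1))  # 50.0
```

Note that share of voice is computed over total mentions, so it differs from mention rate, which is computed over the number of responses.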

4. Position and Ranking in AI Responses

AI answers aren’t a flat list. Position 1-2 means the model leads with your brand. Position 6-9 means you’re an afterthought. Users rarely engage with anything beyond the first few recommendations in a generated response.

Where you rank within the answer matters as much as whether you appear at all.
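
One rough way to approximate position is to rank brands by where they are first mentioned in the response text. This is only a sketch under that assumption: the `brand_positions` helper and the sample response are hypothetical, and plain substring matching will misfire when one brand name is contained in another.

```python
def brand_positions(response_text, brands):
    """Rank brands by the character offset of their first mention.

    Returns {brand: rank} with rank 1 for the earliest mention.
    Brands that never appear are omitted from the result.
    """
    lowered = response_text.lower()
    first_seen = {}
    for brand in brands:
        index = lowered.find(brand.lower())
        if index != -1:
            first_seen[brand] = index
    ordered = sorted(first_seen, key=first_seen.get)
    return {brand: rank for rank, brand in enumerate(ordered, start=1)}

# Hypothetical AI response to a category prompt.
response = "Top picks: CompetitorA, then YourBrand, and finally CompetitorB."
positions = brand_positions(
    response, ["YourBrand", "CompetitorA", "CompetitorB", "AbsentBrand"]
)
print(positions)  # {'CompetitorA': 1, 'YourBrand': 2, 'CompetitorB': 3}
```

Averaging these ranks across the whole prompt corpus gives a position score you can trend over time.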

5. Source Coverage and Citation Frequency

This tells you why the AI knows what it knows about your brand. Earned media — editorial coverage, forums like Reddit, review sites like G2 — accounts for roughly 48% of AI citations. Your own website content accounts for only about 23%.

If the AI is citing a three-year-old TechCrunch article and a handful of Reddit threads to build its picture of your brand, that’s both a vulnerability and an opportunity.

How to Set Up AI Brand Monitoring Across ChatGPT, Gemini, and Perplexity

Setting up a real monitoring operation involves four concrete steps. The earlier you establish a baseline, the more useful your trend data becomes.

Step 1: Build your prompt corpus.

Don’t just track your brand name. You need to track the “discovery queries” buyers actually use before they know which brand to choose. These include category queries (“Best software for [task]”), competitor comparison queries (“[Competitor] vs alternatives”), and use-case queries (“How to solve [specific problem]”).

A working corpus typically needs 50-100 prompts to surface meaningful pattern data.
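
A corpus like this can be generated from templates rather than written by hand. The sketch below uses the three query types named above; the template wording, the `build_corpus` helper, and the sample tasks, competitors, and problems are all illustrative assumptions.

```python
# Templates mirror the three discovery-query types described above.
TEMPLATES = {
    "category": "Best software for {value}",
    "comparison": "{value} vs alternatives",
    "use_case": "How to solve {value}",
}

def build_corpus(tasks, competitors, problems):
    """Expand each input list into prompts tagged with their query type."""
    inputs = {"category": tasks, "comparison": competitors, "use_case": problems}
    corpus = []
    for query_type, values in inputs.items():
        for value in values:
            prompt = TEMPLATES[query_type].format(value=value)
            corpus.append({"type": query_type, "prompt": prompt})
    return corpus

# Hypothetical inputs; a real corpus would be scaled up to 50-100 prompts.
corpus = build_corpus(
    tasks=["invoice automation", "expense reporting"],
    competitors=["CompetitorA", "CompetitorB"],
    problems=["late vendor payments"],
)
for entry in corpus:
    print(entry["type"], "|", entry["prompt"])
```

Tagging each prompt with its type lets you later split metrics by category, comparison, and use-case queries.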

Step 2: Choose a monitoring tool that covers multiple platforms.

Manual monitoring is not a viable long-term approach. Research shows a team manually checking 14 competitor pages daily spends over an hour per day on a single platform. Automated tools reduce that to minutes with 24/7 coverage.

Topify covers ChatGPT, Gemini, Perplexity, DeepSeek, and other major AI platforms, running up to 100 customized prompts and analyzing up to 9,000 AI answers per month on the Basic plan. The platform tracks visibility, sentiment, position, and source data in a single dashboard rather than requiring you to stitch together data from five different tools.

Step 3: Establish a 30-day baseline.

Your first month of data is less about optimization and more about understanding your starting position. Track mention volatility (how much your visibility fluctuates day-to-day), platform bias (does Gemini mention you more than ChatGPT?), and citation gaps (which third-party sources are cited for your competitors but never for you?).

Step 4: Set up alerts for meaningful shifts.

A ±10-point swing in your composite visibility score warrants investigation. So does a competitor’s share of voice jumping more than 15% in a single week. During product launches or PR events, move from weekly checks to daily monitoring.
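
These thresholds are easy to encode as a simple check. The sketch below is one possible reading: it treats the 15% share-of-voice jump as 15 percentage points week over week, which is an assumption, and the `visibility_alerts` helper and all sample numbers are hypothetical.

```python
def visibility_alerts(prev_score, curr_score, prev_sov, curr_sov,
                      score_threshold=10.0, sov_threshold=15.0):
    """Return alert messages for shifts that cross the given thresholds.

    prev_score / curr_score: your composite visibility score (0-100).
    prev_sov / curr_sov: {competitor: share of voice in percent}.
    Share-of-voice gains are measured in percentage points (assumption).
    """
    alerts = []
    delta = curr_score - prev_score
    if abs(delta) >= score_threshold:
        alerts.append(f"Visibility score moved {delta:+.1f} points")
    for competitor, current in curr_sov.items():
        gain = current - prev_sov.get(competitor, 0.0)
        if gain > sov_threshold:
            alerts.append(f"{competitor} share of voice rose {gain:+.1f} points")
    return alerts

# Hypothetical week-over-week numbers.
alerts = visibility_alerts(
    prev_score=62.0,
    curr_score=50.0,
    prev_sov={"CompetitorA": 30.0, "CompetitorB": 20.0},
    curr_sov={"CompetitorA": 48.0, "CompetitorB": 22.0},
)
for message in alerts:
    print(message)
```

With these inputs, the 12-point drop in your own score and CompetitorA's 18-point gain both trigger alerts, while CompetitorB's 2-point gain does not.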

What Negative Brand Mentions in AI Look Like

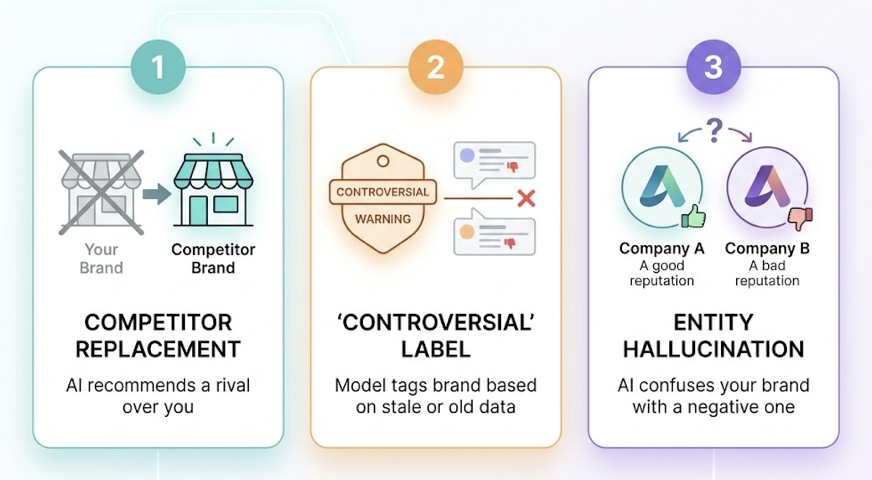

AI negativity doesn’t always look like a one-star review. It’s often more subtle — and more damaging because of it.

The most common patterns: competitor replacement (the AI recommends a rival over you by name), the “controversial” label (the model tags your brand with “unresolved customer service issues” based on a stale forum thread), and entity hallucination (the AI confuses your brand with a similarly named company that has a poor reputation).

None of these will show up in your social listening dashboard.

What makes this particularly problematic is persistence. Social media crises are often intense but short-lived. AI negativity isn’t. Once a model incorporates a negative framing — whether from an outdated review or a training data artifact — it repeats that framing to every user who asks a relevant question, until the underlying data ecosystem is corrected.

Topify’s Sentiment Analysis clusters negative mentions and identifies the specific “source of truth” the AI is pulling from — whether it’s a particular Reddit thread, an old review site article, or a technical documentation gap. That makes fixing the problem a targeted operation rather than a guessing game.

Benchmarking Your Brand Against Competitors in AI Search

In AI search, you don’t need to be perfect. You need to be more cite-worthy than the alternatives the AI is already recommending.

Benchmarking reveals exactly where the gaps are. A visibility gap (you appear in 20% of category queries, a competitor appears in 80%). A sentiment gap (the AI calls you “functional” and the competitor “innovative”). A position gap (you’re consistently listed third or fourth).

The methodology is straightforward: select three to five direct competitors, run the same 50 prompts across ChatGPT, Gemini, and Perplexity for all brands, then map which third-party domains are generating AI citations for each brand.

If 80% of AI citations for a rival come from high-authority review sites you’re not on, your next move is clear.

Topify’s Competitor Monitoring automates this process, delivering weekly reports on competitor share of voice with cross-platform breakdowns. You can see if a competitor is gaining ground specifically on Gemini while you hold steady on Perplexity — and trace it back to which sources are driving the divergence.

Turning Monitoring Data into GEO Strategy

Monitoring is the diagnostic. What you do with the data is where the actual value gets created.

There are three paths from insight to action.

Path 1: Close the prompt gap with targeted content. If your brand is absent from discovery queries about your category, create content that directly addresses those queries with statistics, expert perspectives, and structured data. Research shows that adding statistics increases AI visibility by 37%, and citing authoritative sources increases it by up to 40%.

Path 2: Close the source gap with earned media. If the AI is citing Wikipedia and review sites instead of your content, your priority is building presence on those platforms. Earned media accounts for 48% of AI citations — editorial coverage, relevant subreddits, review platforms. That’s where AI models are looking for “objective” information about your brand.

Path 3: Close the sentiment gap with narrative correction. If the AI has absorbed a flawed or outdated narrative about your brand, you need to flood the ecosystem with accurate, structured information. Practical starting points include updating your llms.txt file, correcting stale documentation, and pushing accurate product specs to high-authority review platforms.

Topify’s one-click execution connects monitoring data directly to strategy deployment. You can generate AI-optimized product descriptions and FAQs designed specifically to be cited by LLMs, without building a separate workflow for each platform.

Track. Fix. Repeat.

Conclusion

The AI search channel isn’t experimental anymore. With 800 million weekly active users on ChatGPT alone, the question isn’t whether AI is influencing your buyers — it’s whether you have any visibility into how.

Traditional organic traffic is already under pressure, with AI Overviews driving a 34.5% drop in click-through rates and some high-traffic keywords losing up to 64% of their volume. Meanwhile, the buyers you do reach through AI convert at 27% — more than 10x the average search conversion rate — because the AI has already done the evaluation for them.

AI brand monitoring gives you the data to compete in this environment. Start with the five core metrics. Build a prompt corpus. Establish a baseline. Then use what you learn to make your brand the answer AI gives by default.

FAQ

How do you track brand visibility trends over time in AI search?

You need a stable corpus of 50-100 prompts queried weekly across ChatGPT, Gemini, and Perplexity. Log your mention rate and position score consistently over time in a centralized dashboard. Model updates can introduce sudden shifts in visibility, so longitudinal data is what separates a real trend from a one-week anomaly.

How do you identify which AI platforms mention your brand most?

Multi-platform monitoring tools compare your answer inclusion rate across different engines. This matters because platform behavior varies significantly: Gemini often favors brands with strong Google Search presence, while Perplexity prioritizes academic and technical citations. Knowing which platform is your weakest link tells you where to focus your GEO effort first.

How do you measure the impact of content on AI brand mentions?

Run a controlled comparison. Update a set of pages with GEO-focused content — statistics, expert quotes, structured schema — and keep a comparable set unchanged. Monitor the citation rate for both groups over 60 days across Perplexity and Google AI Overviews. High-performing content typically sees a 30-40% increase in AI citation frequency within that window.
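
One way to read the results of such a comparison is to subtract the control group's change from the updated group's change, so that platform-wide shifts are not credited to your content. The sketch below assumes you have citation counts for both groups before and after the 60-day window; the helper names and all numbers are hypothetical.

```python
def relative_change(before, after):
    """Percent change from `before` to `after`."""
    if before == 0:
        raise ValueError("baseline must be non-zero to compute a percent change")
    return 100.0 * (after - before) / before

def net_lift(treated_before, treated_after, control_before, control_after):
    """Updated-group change minus control-group change, in percentage points."""
    treated = relative_change(treated_before, treated_after)
    control = relative_change(control_before, control_after)
    return treated - control

# Hypothetical citation counts over matching 60-day windows.
print(relative_change(20, 27))  # 35.0  (updated pages)
print(relative_change(20, 21))  # 5.0   (unchanged pages)
print(net_lift(20, 27, 20, 21))  # 30.0
```

With small page sets the result is noisy, so treat a single run as directional rather than conclusive.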

How do you build a brand monitoring dashboard for AI search?

A functional dashboard integrates four data streams: mention rate (how often you appear), sentiment score (a 0-100 rating of how favorably you appear), competitive share of voice (your percentage vs. rivals), and AI-referred traffic (tracked via GA4 using Perplexity and ChatGPT as referral sources). The first three are leading indicators (AI signals), and the fourth is a lagging indicator (actual traffic impact).

Why is AI brand monitoring fundamentally different from social listening?

Social listening is reactive and human-centric — it tracks what people say about you. AI brand monitoring is proactive and algorithmic — it tracks what the model has been trained or prompted to say about you. They use different data pipelines, surface different problems, and require different solutions. You need both, but they don’t replace each other.

How do you detect negative brand mentions in AI search responses before they compound?

Set up weekly sentiment scoring across your core prompt corpus and flag any response where the model qualifies your brand with words like “however,” “despite,” “limited,” or “controversial.” These linguistic markers often signal the AI is pulling from a negative or outdated source. Once you identify the framing, trace it back to its citation origin and correct the source directly.
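
A simple keyword pass can do the initial flagging. The sketch below uses the four marker words listed above and checks only sentences that mention the brand. The `flag_qualified_mentions` helper, the brand "Acme", and the sample response are hypothetical, and the sentence splitting is deliberately naive.

```python
import re

# Marker words taken from the list above; extend as needed.
MARKERS = ("however", "despite", "limited", "controversial")
MARKER_RE = re.compile(r"\b(" + "|".join(MARKERS) + r")\b", re.IGNORECASE)

def flag_qualified_mentions(responses, brand):
    """Return (sentence, markers) pairs for brand sentences with qualifiers."""
    flagged = []
    for text in responses:
        # Naive split: break after ., !, or ? followed by whitespace.
        for sentence in re.split(r"(?<=[.!?])\s+", text):
            if brand.lower() not in sentence.lower():
                continue
            found = sorted({m.lower() for m in MARKER_RE.findall(sentence)})
            if found:
                flagged.append((sentence.strip(), found))
    return flagged

# Hypothetical AI response.
responses = [
    "Acme is popular. However, Acme has limited integrations. "
    "Beta is solid despite its price."
]
for sentence, markers in flag_qualified_mentions(responses, "Acme"):
    print(markers, "->", sentence)
# ['however', 'limited'] -> However, Acme has limited integrations.
```

Keyword matches are only a triage signal; each flagged sentence still needs a human read before you trace it to its source.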