Your SEO dashboard looks fine. Rankings are solid. Organic traffic is holding steady. Then someone on your team types a product category question into ChatGPT, and your brand doesn’t appear once. Not in the answer. Not even as a mention.

That’s not a content gap. It’s a measurement gap. A 2025 study by Chatoptic analyzing 1,000 queries across 15 brands found that Google’s first-page rankings and ChatGPT mentions have a correlation of just 0.034. Statistically, your Google ranking tells you almost nothing about whether AI systems are recommending you.

An AI answer tracking system is what closes that gap.

What an AI Answer Tracking System Actually Monitors

An AI answer tracking system isn’t an extension of your existing SEO stack. It’s a separate measurement layer built specifically to monitor how AI-generated responses mention, position, and describe your brand over time.

Where traditional tools track keyword rankings on static search result pages, an AI answer tracking tool monitors dynamic, probabilistic outputs. The same prompt can return different answers on different days, across different AI platforms, and with different brand outcomes. That variability is exactly what makes persistent tracking necessary.

The core coverage of a reliable AI answer tracking platform spans four dimensions: visibility (whether your brand appears in relevant responses), position (where in the answer your brand is mentioned relative to competitors), sentiment (how AI models characterize your brand’s quality, pricing, or positioning), and source (which domains AI platforms are citing when they recommend or describe you).

None of these signals are available in Google Search Console.
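To make the four dimensions concrete, here is a minimal Python sketch of what a single tracked observation could store. The `AnswerObservation` class and its field names are hypothetical and chosen for illustration. They are not any particular platform's schema, and the -1 to +1 sentiment scale is also an assumption.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class AnswerObservation:
    """One AI answer captured for one prompt on one platform (hypothetical schema)."""
    prompt: str
    platform: str                   # e.g. "chatgpt", "gemini", "perplexity"
    brand_mentioned: bool           # visibility
    position: Optional[int]         # 1 = first brand named; None if absent
    sentiment: Optional[float]      # assumed scale: -1.0 (negative) .. 1.0 (positive)
    cited_domains: list[str] = field(default_factory=list)  # source

    def __post_init__(self) -> None:
        # Position and sentiment only make sense when the brand actually appears.
        if not self.brand_mentioned and (self.position is not None or self.sentiment is not None):
            raise ValueError("position/sentiment require brand_mentioned=True")
        if self.position is not None and self.position < 1:
            raise ValueError("position is 1-based")
```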

Why Monthly SEO Reports Miss What’s Actually Happening Now

The problem isn’t just that AI search is growing. It’s that AI search operates on a completely different trust logic than traditional search.

Research by Seer Interactive analyzing 3,119 informational queries and 25.1 million organic impressions found that when AI Overviews appear at the top of search results, organic CTR drops by up to 65.2% for brands that aren’t cited in the AI answer. Brands that are cited still see a 49.4% organic CTR decline, but their paid CTR runs 91% higher than uncited competitors on the same page.

That’s not a gradual shift. That’s a structural redistribution of traffic.

Your monthly SEO report tracks impressions, rankings, and clicks. It doesn’t tell you whether you’re one of the cited brands or one of the invisible ones. Without an AI answer tracking analytics layer, you’re optimizing for a metric that no longer determines the outcome.

What makes this harder is the drift problem. According to broader research on AI citation behavior, 40% to 60% of the domains cited in AI answers change within a single month. A brand that was cited consistently in January may lose those citations by March, not because its content changed, but because an AI model updated its internal weighting. You can’t catch that kind of shift in a quarterly review.

5 Metrics That Define a Reliable AI Answer Tracking System

Not every AI answer tracking dashboard measures the same things. Here’s what a well-built system should actually track.

Mention Rate is the baseline. It measures how often your brand name appears across a defined set of prompts relevant to your category. Think of it as your visibility score in AI-generated content: expressed as a percentage, it tells you how reliably AI platforms surface your brand when users ask about your space.
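As a rough illustration, mention rate can be computed from a batch of captured answer texts. This sketch assumes a plain whole-word, case-insensitive match on the brand name. `mention_rate` is a hypothetical helper, and a production system would also need to handle aliases, product names, and misspellings.

```python
import re

def mention_rate(answers: list[str], brand: str) -> float:
    """Percentage of captured answers that name `brand` as a whole word (case-insensitive)."""
    if not answers:
        return 0.0
    # \b word boundaries stop "Acme" from matching inside "Acmex".
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    hits = sum(1 for text in answers if pattern.search(text))
    return 100.0 * hits / len(answers)
```

For example, `mention_rate(["Acme and Beta are good", "Try Beta", "Acme Corp leads", "Acmex tools"], "Acme")` returns `50.0`, because the word-boundary match does not count "Acmex" as a mention.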

Position in AI Answers goes a level deeper. Appearing in an AI response as the third option after two competitors is meaningfully different from being the primary recommendation. A position-weighted AI SOV (Share of Voice) formula gives first-position mentions full credit, second-position 50%, and third-position 33%. Mention rate alone flattens that distinction.
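The weighting above (full credit for first position, 50% for second, 33% for third) follows a 1/position pattern. This sketch assumes that pattern and extends it past third position, which the formula itself does not specify. It then converts each brand's weighted total into a share of all weighted mentions.

```python
from collections import defaultdict

def position_weight(position: int) -> float:
    """1st = 1.0, 2nd = 0.5, 3rd ≈ 0.33; assumed to continue as 1/position."""
    return 1.0 / position

def weighted_sov(rankings: list[list[str]]) -> dict[str, float]:
    """rankings: one list per AI answer, with brands in the order they were mentioned.
    Returns each brand's share (%) of all position-weighted mentions."""
    scores: dict[str, float] = defaultdict(float)
    for ranked in rankings:
        for position, brand in enumerate(ranked, start=1):
            scores[brand] += position_weight(position)
    total = sum(scores.values())
    return {brand: 100.0 * s / total for brand, s in scores.items()} if total else {}
```

If two answers both list `["Beta", "Acme"]`, each brand has a 100% mention rate. Beta's weighted share is still about 67% and Acme's about 33%, which is the distinction mention rate alone flattens.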

Sentiment Score matters more in AI search than in traditional search because AI models describe brands, not just list them. A system that tracks whether ChatGPT calls your product “enterprise-grade” versus “a budget alternative” can catch positioning drift before it influences purchase decisions.
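Production sentiment scoring usually relies on a trained classifier or an LLM judge, but a tiny lexicon is enough to show the basic idea. In this sketch, `POSITIONING_LEXICON` and its scores are invented for illustration. They place descriptors on an assumed -1 (budget) to +1 (premium) axis. A real system would need a much larger, category-specific vocabulary and would have to handle negation.

```python
from typing import Optional

# Hypothetical descriptor lexicon: phrase -> score on an assumed
# -1.0 (budget positioning) .. +1.0 (premium positioning) axis.
POSITIONING_LEXICON = {
    "enterprise-grade": 1.0,
    "professional-grade": 1.0,
    "industry leader": 0.8,
    "solid option for smaller teams": -0.3,
    "budget alternative": -0.6,
}

def positioning_score(answer: str, lexicon: dict[str, float] = POSITIONING_LEXICON) -> Optional[float]:
    """Average score of the lexicon phrases found in an answer; None if none appear."""
    text = answer.lower()
    hits = [score for phrase, score in lexicon.items() if phrase in text]
    return sum(hits) / len(hits) if hits else None
```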

Citation Source Coverage answers the question: why is AI saying what it’s saying about you? Every AI recommendation is downstream of a source. Knowing which domains drive your positive mentions, and which ones are getting cited without mentioning you at all, is what separates diagnostic data from a vanity dashboard.
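Here is a minimal sketch of citation source coverage. It assumes each captured answer has already been reduced to two things: a flag for whether your brand was mentioned, and the list of cited URLs. That input shape is hypothetical. The sketch counts cited domains separately for answers that include you and answers that leave you out. Domains that appear only in the second group are the gaps worth investigating.

```python
from collections import Counter
from urllib.parse import urlparse

def domain_of(url: str) -> str:
    """Normalize a cited URL to its host, dropping a leading 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host

def citation_coverage(answers: list[dict]) -> tuple[Counter, Counter]:
    """Each answer is {"mentions_brand": bool, "citations": [url, ...]}.
    Returns (domain counts when you ARE mentioned, domain counts when you are NOT)."""
    with_brand, without_brand = Counter(), Counter()
    for answer in answers:
        # Count each domain at most once per answer.
        domains = {domain_of(u) for u in answer["citations"]}
        target = with_brand if answer["mentions_brand"] else without_brand
        target.update(domains)
    return with_brand, without_brand

def gap_domains(with_brand: Counter, without_brand: Counter) -> set[str]:
    """Domains cited only in answers that leave your brand out."""
    return set(without_brand) - set(with_brand)
```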

Cross-Platform Consistency rounds it out. Yext’s analysis of 6.8 million AI citations shows that Gemini draws over 52% of its citations from brand-owned domains, while ChatGPT pulls 48.7% from third-party directories like Yelp and TripAdvisor. A brand can be dominant on Gemini and nearly invisible on ChatGPT, or vice versa. Tracking each platform separately is not optional.
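Keeping platforms separate is mostly a matter of grouping. This sketch assumes each observation is a hypothetical `(platform, brand_mentioned)` pair drawn from the same prompt set. It computes a mention rate per platform and reports the spread between the strongest and weakest platform as a simple consistency gap.

```python
from collections import defaultdict

def per_platform_mention_rate(observations: list[tuple[str, bool]]) -> dict[str, float]:
    """Mention rate (%) per platform from (platform, brand_mentioned) pairs."""
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for platform, mentioned in observations:
        totals[platform] += 1
        hits[platform] += int(mentioned)
    return {p: 100.0 * hits[p] / totals[p] for p in totals}

def consistency_gap(rates: dict[str, float]) -> float:
    """Spread between the best and worst platform, in percentage points."""
    return max(rates.values()) - min(rates.values()) if rates else 0.0
```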

How These Metrics Work Together as an AI Answer Tracking Dashboard

Single metrics are interesting. Combined metrics are actionable.

Here’s a scenario: your mention rate on Perplexity is 68%, which looks strong. But your sentiment score on the same platform is trending negative over six weeks, with AI descriptions shifting from “professional-grade” to “a solid option for smaller teams.” That’s a signal your content positioning needs work, and you’d only catch it with a dashboard that connects visibility and sentiment in the same view.
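The scenario above reduces to a rule that checks two metrics at once: visibility is high, but sentiment is sliding. This sketch fits an ordinary least-squares slope to weekly sentiment scores and flags that combination. The 50% mention-rate floor and the -0.02-per-week slope threshold are hypothetical defaults that you would tune to your own data.

```python
def weekly_slope(values: list[float]) -> float:
    """Ordinary least-squares slope of `values` against week index 0, 1, 2, ..."""
    n = len(values)
    if n < 2:
        return 0.0
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    den = sum((x - mean_x) ** 2 for x in range(n))
    return num / den

def visible_but_slipping(mention_rate: float, weekly_sentiment: list[float],
                         min_rate: float = 50.0, max_slope: float = -0.02) -> bool:
    """True when the brand is widely mentioned but sentiment is trending down."""
    return mention_rate >= min_rate and weekly_slope(weekly_sentiment) <= max_slope
```

Suppose the scenario's 68% mention rate is paired with six illustrative weekly sentiment readings that drift from 0.60 down to 0.25. The fitted slope is roughly -0.07 per week, so the alert fires.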

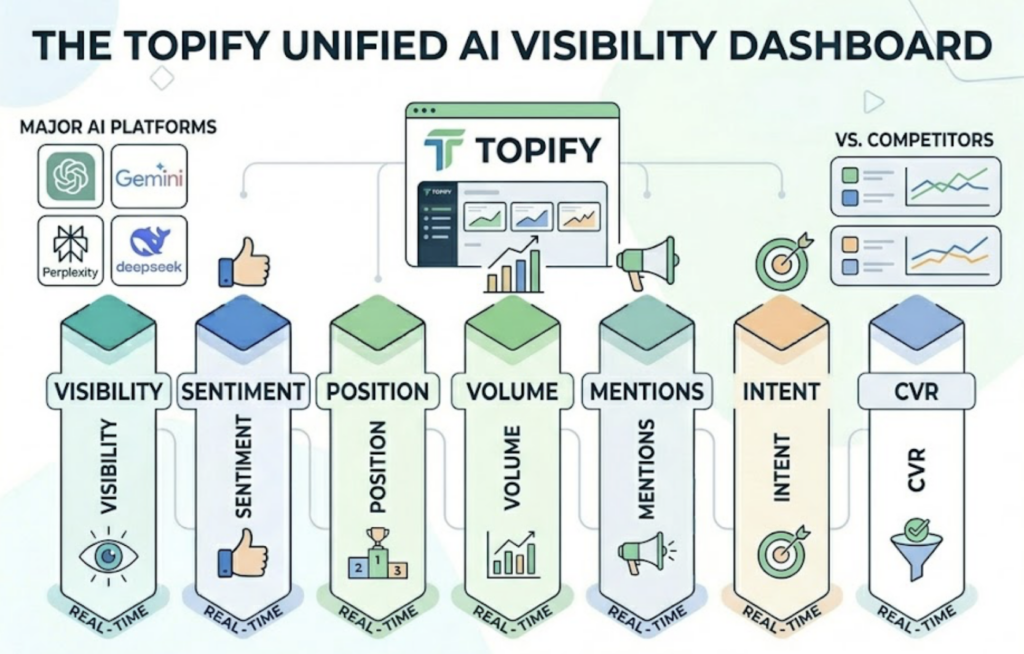

Topify is built around exactly this kind of multi-metric visibility. Its AI answer tracking platform monitors seven core dimensions: visibility, sentiment, position, volume, mentions, intent, and CVR (Conversion Visibility Rate), all in a unified dashboard across ChatGPT, Gemini, Perplexity, DeepSeek, and other major AI platforms. Instead of switching between tools or manually sampling prompts, you get a single view that shows how your brand is performing, relative to competitors, in real time.

How to Track Brand Performance in ChatGPT Responses Over Time

Tracking brand performance in ChatGPT responses over time is harder than it sounds. ChatGPT’s outputs are non-deterministic, which means the same prompt can return different answers on different runs. One-time manual checks tell you almost nothing about trends.
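A single run is one sample from a probabilistic system, so it helps to run each prompt several times and report mention rate with an uncertainty band. This sketch uses the Wilson score interval, a standard confidence interval for a proportion. Choosing Wilson and the 95% level (`z = 1.96`) are assumptions made here; the AI platforms themselves do not prescribe a method.

```python
import math

def wilson_interval(hits: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a mention rate observed as hits out of runs (0..1 scale)."""
    if runs == 0:
        return (0.0, 1.0)  # no data: the rate could be anything
    p = hits / runs
    denom = 1 + z**2 / runs
    center = (p + z**2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return (max(0.0, center - half), min(1.0, center + half))
```

A brand mentioned in 6 of 10 runs gets an interval of roughly 31% to 83%. That width shows how little a handful of manual checks can tell you, and the band narrows as runs accumulate.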

A systematic approach starts with prompt library definition. Not all prompts are equal. The most valuable prompts to track are the ones that mirror how your actual buyers search: category comparison questions (“What are the best tools for X?”), problem-based queries (“How do I solve Y?”), and brand-direct questions (“Is Z a good option for enterprise teams?”). Define 30 to 100 prompts that map to your highest-intent buying scenarios, and lock them in as your tracking set.
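One way to lock in a tracking set is to tag each prompt with its buying intent and validate the library before the first run. The `Intent` categories below mirror the three prompt types just described. The class names and the 30-to-100 size check are illustrative, not a required format.

```python
from dataclasses import dataclass
from enum import Enum

class Intent(Enum):
    CATEGORY_COMPARISON = "category_comparison"  # "What are the best tools for X?"
    PROBLEM_BASED = "problem_based"              # "How do I solve Y?"
    BRAND_DIRECT = "brand_direct"                # "Is Z a good option for enterprise teams?"

@dataclass(frozen=True)
class TrackedPrompt:
    text: str
    intent: Intent

def lock_prompt_library(prompts: list[TrackedPrompt], min_size: int = 30,
                        max_size: int = 100) -> tuple[TrackedPrompt, ...]:
    """Deduplicate, validate, and freeze the tracking set so later runs stay comparable."""
    unique = list(dict.fromkeys(prompts))  # preserves order, drops exact duplicates
    if not min_size <= len(unique) <= max_size:
        raise ValueError(f"expected {min_size}-{max_size} prompts, got {len(unique)}")
    missing = set(Intent) - {p.intent for p in unique}
    if missing:
        raise ValueError(f"no prompts for intents: {sorted(i.value for i in missing)}")
    return tuple(unique)
```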

From there, baseline capture is the next step. Run your full prompt library across your target AI platforms and record current mention rate, position, and sentiment scores. This is your week-zero benchmark. Without it, you have no reference point for whether future changes represent progress or regression.
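A week-zero baseline can be as simple as a dated summary written to disk. This sketch assumes each run result is a dict with `mentioned`, `position`, and `sentiment` keys, which is a hypothetical shape. It averages position and sentiment only over runs where the brand actually appeared.

```python
import json
from datetime import date
from statistics import mean

def capture_baseline(results: list[dict], taken_on: date) -> dict:
    """Summarize one full run of the prompt library into a dated benchmark.

    Each result is assumed to look like
    {"mentioned": bool, "position": int or None, "sentiment": float or None}.
    """
    mentioned = [r for r in results if r["mentioned"]]
    positions = [r["position"] for r in mentioned if r["position"] is not None]
    sentiments = [r["sentiment"] for r in mentioned if r["sentiment"] is not None]
    return {
        "taken_on": taken_on.isoformat(),
        "runs": len(results),
        "mention_rate": 100.0 * len(mentioned) / len(results) if results else 0.0,
        # Averaged only over answers that mention the brand.
        "avg_position": mean(positions) if positions else None,
        "avg_sentiment": mean(sentiments) if sentiments else None,
    }

def save_baseline(baseline: dict, path: str) -> None:
    """Write the baseline as JSON so later runs can be compared against it."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(baseline, f, indent=2)
```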

Tracking cadence matters more than most teams expect. Because AI citation patterns shift monthly, weekly tracking is the minimum viable frequency. After major model updates (GPT-4o, Gemini 2.0, Perplexity Sonar Pro releases), run an immediate off-cycle check. Model updates are the most common trigger for sudden drops in brand mention rate.
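The cadence rule (weekly runs, plus an immediate run after each major model update) translates directly into a schedule. In this sketch, `tracking_dates` is a hypothetical helper. The model-update dates are inputs you would maintain yourself, for example from vendor release notes.

```python
from datetime import date, timedelta

def tracking_dates(start: date, end: date, model_updates: list[date],
                   every_days: int = 7) -> list[date]:
    """Weekly runs from `start` through `end`, plus an off-cycle run on each model-update date."""
    scheduled: set[date] = set()
    current = start
    while current <= end:
        scheduled.add(current)
        current += timedelta(days=every_days)
    # Off-cycle checks; an update landing on a scheduled day does not add a duplicate run.
    scheduled.update(u for u in model_updates if start <= u <= end)
    return sorted(scheduled)
```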

Finally, closed-loop content attribution is what turns tracking into a growth system. When you publish a new piece of content optimized for a specific prompt cluster, record the publish date. Four to six weeks later, compare mention rate and sentiment scores for that prompt group against your baseline. Princeton University’s GEO research found that adding authoritative citations to content can lift AI visibility by up to 115.1%, while adding statistical data drives a 22% increase. Tracking gives you the before-and-after data to know if your content changes actually moved the needle.
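The before-and-after comparison can be sketched as follows, assuming you keep a dated mention-rate history for each prompt cluster. The baseline is the last reading on or before the publish date. The comparison averages the readings that fall four to six weeks afterward. This measures change over time around the publish date. It does not prove on its own that the content caused the change, because model updates can move the same numbers.

```python
from datetime import date, timedelta
from statistics import mean
from typing import Optional

def attribution_delta(history: list[tuple[date, float]], publish_date: date,
                      min_weeks: int = 4, max_weeks: int = 6) -> Optional[float]:
    """Change (percentage points) in a prompt cluster's mention rate after publishing.

    history: (reading_date, mention_rate) pairs for one prompt cluster.
    Baseline: most recent reading on or before publish_date.
    After: mean of readings between min_weeks and max_weeks after publishing.
    """
    before = [(d, rate) for d, rate in history if d <= publish_date]
    start = publish_date + timedelta(weeks=min_weeks)
    end = publish_date + timedelta(weeks=max_weeks)
    after = [rate for d, rate in history if start <= d <= end]
    if not before or not after:
        return None  # not enough data on one side of the publish date
    baseline = max(before, key=lambda pair: pair[0])[1]
    return mean(after) - baseline
```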

Topify’s platform automates this entire workflow. Its Basic plan supports 100 prompts tracked continuously across major AI platforms, with 9,000 AI answer analyses per month. The Pro plan scales to 250 prompts and 22,500 analyses. You can get started with Topify and have a tracking baseline running within the same day, rather than spending weeks setting up manual monitoring scripts.

What Most Teams Get Wrong When Building an AI Answer Tracking Solution

The most common mistake is treating AI answer tracking as a one-time audit.

Teams run a batch of prompts, get a snapshot of where their brand stands, and file it as a Q3 project. Three months later, the data is already stale, because according to available research, citation domain turnover in AI answers runs at 40% to 60% per month. A June audit tells you almost nothing about what ChatGPT is saying in September.
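Citation turnover is easy to measure once you store the set of cited domains for each period. This sketch defines turnover as the share of last period's cited domains that no longer appear this period. That definition is an assumption: others, such as the Jaccard distance between the two sets, are equally reasonable, and published figures may use a different one.

```python
def domain_turnover(previous: set[str], current: set[str]) -> float:
    """Percentage of last period's cited domains that are absent this period."""
    if not previous:
        return 0.0
    dropped = previous - current
    return 100.0 * len(dropped) / len(previous)
```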

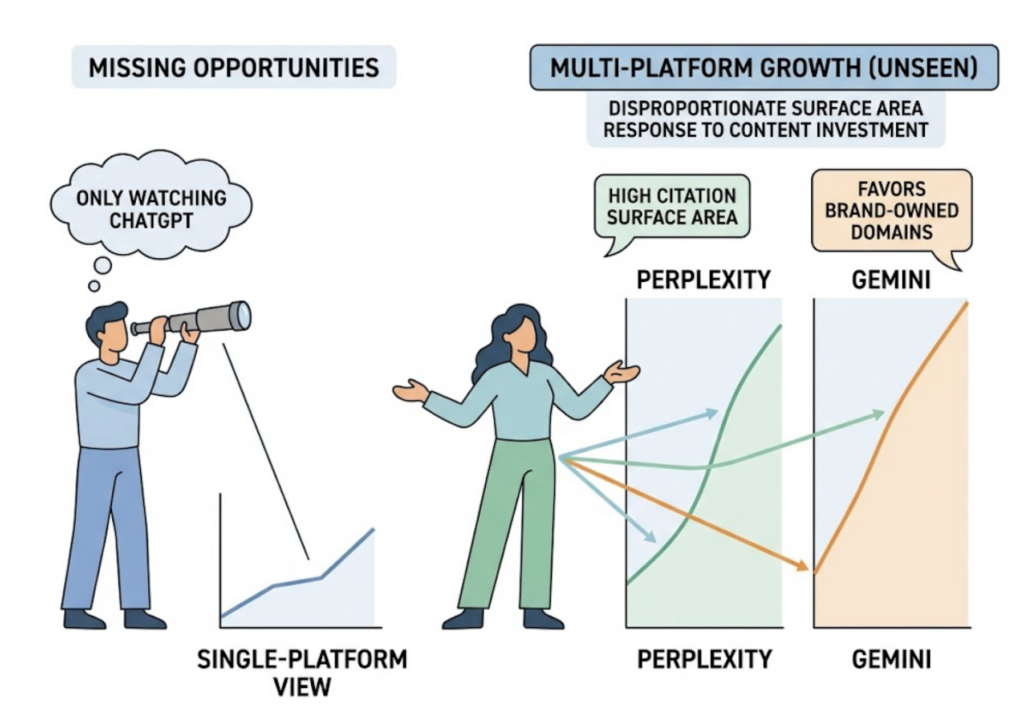

The second mistake is single-platform tracking. Because ChatGPT has the highest brand recognition in the AI space, many teams track only there. But Perplexity averages approximately 22 citations per response (significantly more than other platforms), which creates disproportionate surface area for mid-market and specialist brands. Gemini’s citation logic favors brand-owned domains, so visibility there responds more directly to investment in your own content. A brand that only watches ChatGPT can be growing fast on Perplexity and Gemini without knowing it, or losing ground silently.

The third mistake is confusing citation with recommendation. Research on AI citation behavior shows that brands are cited as supporting evidence approximately three times more often than they’re cited as a primary recommendation. Your brand URL may appear in a ChatGPT response as a reference that backs up a competitor’s recommendation. Without a tracking system that distinguishes citation type from mention type, you might interpret that as positive visibility when it’s actually the opposite.
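Distinguishing the two requires knowing both which brands an answer recommends and which URLs it cites. This sketch assumes that extraction step has already happened; it is the hard part and is not shown here. Given those inputs, the sketch classifies your brand's role in a single answer as a recommendation, a supporting citation only, or absent. The function name and labels are hypothetical.

```python
from urllib.parse import urlparse

def classify_presence(recommended: list[str], cited_urls: list[str],
                      brand: str, brand_domain: str) -> str:
    """Return 'recommended', 'supporting_citation', or 'absent' for one answer."""
    if brand.lower() in {b.lower() for b in recommended}:
        return "recommended"
    for url in cited_urls:
        host = urlparse(url).netloc.lower().removeprefix("www.")
        # Match the brand's domain and any of its subdomains (e.g. docs.acme.com).
        if host == brand_domain or host.endswith("." + brand_domain):
            return "supporting_citation"
    return "absent"
```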

An AI answer tracking tool worth using will surface all three of these distinctions, not just count how many times your brand name appears.

Choosing the Right AI Answer Tracking Platform for Your Team

The evaluation framework for AI answer tracking tools comes down to three practical questions.

How many AI platforms does it cover? Tools that only track ChatGPT are giving you at best a partial picture. Look for platforms that cover ChatGPT, Gemini, Perplexity, and at least one or two emerging engines like DeepSeek or Doubao, depending on your market.

Does it track continuously or only on demand? On-demand tools require your team to manually initiate tracking runs. Continuous platforms run automated checks on a fixed schedule and alert you when significant changes occur. For teams managing competitive categories, manual tracking doesn’t scale.

Does it include competitor benchmarking? Your mention rate means more when you can see it relative to your top three competitors in the same prompt set. A 40% mention rate is a strong signal in a category where the category leader sits at 45%. It’s a warning sign if competitors average 70%.
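The comparison in that example can be expressed as two gaps: the distance to the category leader and the distance to the competitor average. The function below is a hypothetical helper. It assumes all rates come from the same prompt set and platform.

```python
from statistics import mean

def benchmark(your_rate: float, competitor_rates: dict[str, float]) -> dict[str, float]:
    """Gaps (percentage points) between your mention rate and your competitors'."""
    if not competitor_rates:
        raise ValueError("need at least one competitor")
    rates = list(competitor_rates.values())
    return {
        "gap_to_leader": your_rate - max(rates),
        "gap_to_average": your_rate - mean(rates),
    }
```

Take a 40% mention rate against competitors at 45%, 30%, and 25%. You trail the leader by 5 points but sit above the competitor average of about 33%. Against three competitors at 70%, the gap to the average is -30 points.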

Topify checks all three. It covers the major global AI platforms including ChatGPT, Gemini, Perplexity, and DeepSeek, with continuous automated tracking rather than on-demand snapshots. Its competitor benchmarking feature automatically surfaces who AI platforms are recommending in your category, what position those competitors hold, and which source domains are driving their visibility.

On pricing, the Basic plan runs at $99/month and includes tracking for up to 100 prompts across 4 projects, making it a reasonable starting point for in-house marketing teams. The Pro plan at $199/month supports 250 prompts and 8 projects, designed for teams managing multiple brand lines or reporting across business units. Enterprise plans start at $499/month for agencies and large organizations that need custom configuration and dedicated support. Full pricing details are available on Topify’s site.

Conclusion

Google rankings and AI visibility are no longer the same thing. A correlation coefficient of 0.034 between Google’s first page and ChatGPT recommendations means the two are effectively independent signals. Optimizing one without tracking the other leaves a significant portion of your brand’s search presence unmonitored.

An AI answer tracking system doesn’t replace your SEO stack. It fills the measurement gap that your SEO stack was never designed to cover. The brands that build this tracking layer now (establishing baselines, running continuous monitoring, and closing the loop between content changes and AI visibility outcomes) will have a compounding advantage as AI search handles an increasing share of high-intent buyer queries. By 2028, over $750 billion in consumer spending is projected to flow through AI search channels. That’s not a future trend to monitor. It’s a current infrastructure decision.

Start by defining your prompt library. Build your baseline. Then track it the same way you’d track any channel that drives qualified traffic.

FAQ

Q: What’s the difference between AI answer tracking and traditional SEO monitoring?

A: Traditional SEO monitoring measures how your website ranks in static search result pages based on keyword queries. AI answer tracking monitors how AI-generated responses, which are dynamic and synthesized rather than ranked, mention and describe your brand. A brand can hold strong Google rankings while being completely absent from ChatGPT or Perplexity recommendations. The two systems require separate measurement approaches.

Q: How often should I run AI answer tracking for my brand?

A: Weekly is the minimum viable cadence for category-level prompts. Brand-specific reputation queries (direct brand name searches) benefit from three checks per week, particularly in competitive categories where rivals may be actively optimizing to appear in your brand’s query space. After major AI model updates, run an off-schedule check immediately, since model updates are the most common trigger for sudden shifts in brand mention rate.

Q: Can an AI answer tracking system help me understand why competitors rank higher in ChatGPT?

A: Yes, and this is one of its most practical applications. A system with source analysis capabilities can show you which domains ChatGPT is citing when it recommends a competitor instead of you. That points directly to the content gaps or third-party authority signals you need to close. Competitor benchmarking within the same prompt set tells you not just that you’re behind, but by how much and in which specific query clusters.

Q: How can I track brand performance in ChatGPT responses over time without manual checking?

A: The practical answer is a dedicated AI answer tracking platform that automates prompt execution on a fixed schedule. Manual checking is not repeatable at scale: ChatGPT’s non-deterministic outputs mean a single manual run doesn’t give you statistically reliable data, and running 100+ prompts weekly across multiple platforms is not a human-sustainable workflow. Platforms like Topify run this automatically, store historical data for trend analysis, and surface significant changes without requiring manual intervention from your team.