Your content team spent months building pillar pages and earning editorial backlinks. Your domain authority is solid. Your core keywords rank on page one. Then someone opens ChatGPT, types in the exact question your buyers ask every week, and your brand isn’t in the answer. Not mentioned. Not compared. Just absent.

That’s not a content problem. It’s a visibility gap that traditional analytics tools weren’t built to detect. An AI answer tracking solution exists specifically to close it.

Rank in Google, Invisible in ChatGPT: The Gap an AI Answer Tracking Solution Fills

Google rankings and AI search visibility operate on completely different logic. In traditional search, algorithms sort URLs by authority signals and keyword relevance. In generative engines like ChatGPT or Perplexity, the system synthesizes facts from across the web into a narrative answer, often without linking to a single result.

The consequence is striking. Research shows only 11% to 12% of domains cited by AI overlap with traditional top-10 search results. Your Google ranking doesn’t transfer to AI answers. The two channels measure entirely different things.

McKinsey estimates that roughly $750 billion in consumer spending will be influenced by AI-driven search by 2028. Yet only 16% of brands currently track their AI search performance in any systematic way. The gap between what’s happening in AI answers and what most brand teams actually know is wide, and widening fast.

What Does an AI Answer Tracking Solution Actually Track?

The short answer: a lot more than whether your brand name shows up.

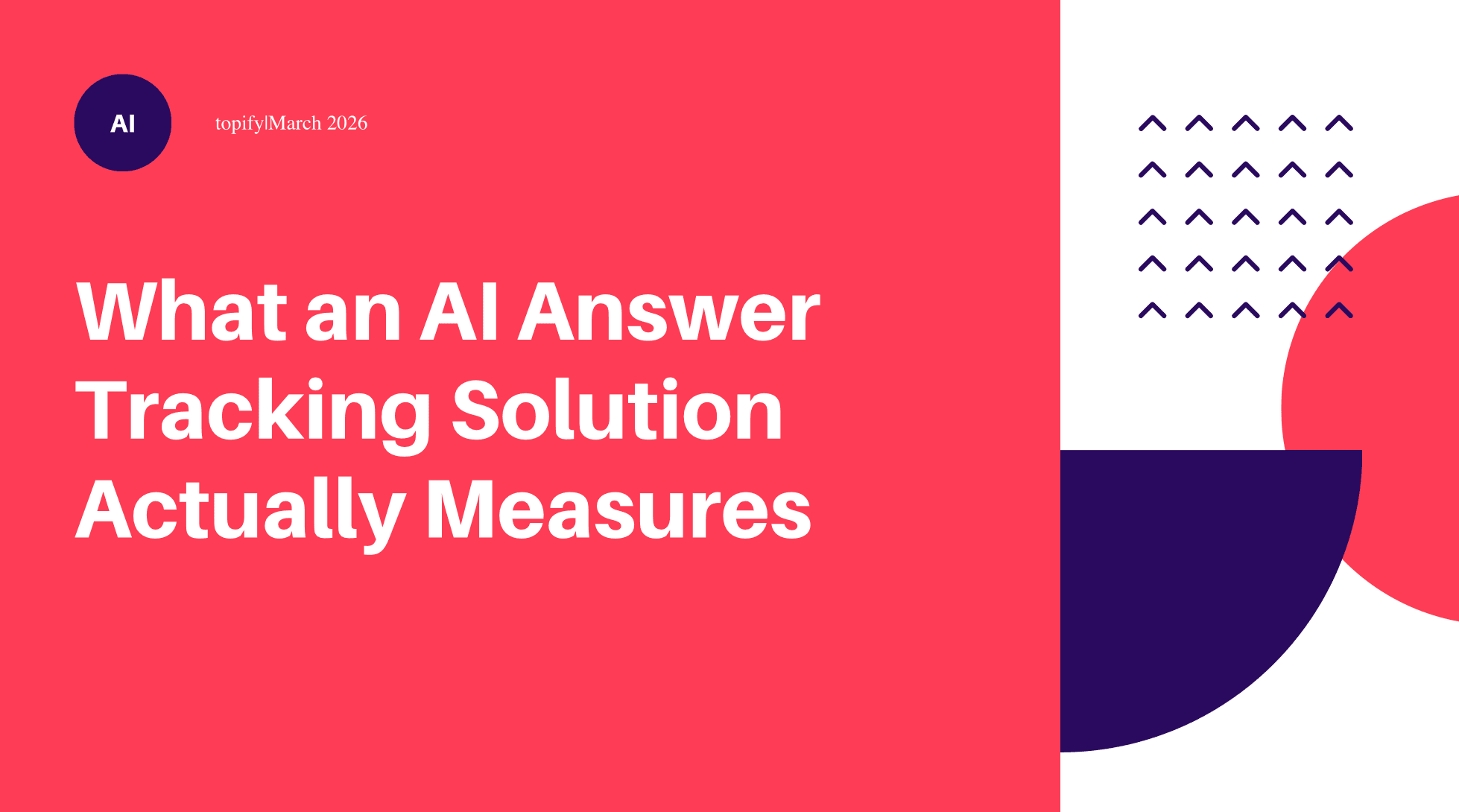

A mature AI answer tracking tool monitors at least four distinct dimensions. Visibility measures how often your brand appears across a defined set of test prompts. Position tracks whether you’re the first recommendation, listed in the middle, or mentioned as an afterthought. Sentiment analyzes how AI describes your brand, whether the language is positive, neutral, or quietly undermining. Source analysis maps which specific domains AI is actually citing when it forms an opinion about you.

Each dimension tells you something different. High visibility with negative sentiment is worse than low visibility. First-position recommendations carry significantly more trust weight than mid-list appearances. And source analysis regularly reveals that AI is building its view of your brand from sources you’ve never thought to monitor: Reddit threads, G2 reviews, third-party listicles.

An AI answer tracking analytics layer ties all four together. A standard web analytics dashboard can’t show you any of it.

How an AI Answer Tracking Solution Works: The Technical Layer You Don’t See

The mechanics matter here, because understanding them changes how you interpret the output.

A tracking solution works by sending a library of test prompts to AI platforms on a scheduled basis, recording the full responses, and extracting brand mentions, position, sentiment signals, and cited sources. It’s not a one-time audit. It’s a continuous feedback loop.
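To make the extraction step concrete, here is a minimal sketch of how one recorded response might be turned into structured signals. It is an illustration under stated assumptions, not how any particular vendor does it: `extract_signals` and its output keys are hypothetical names, brand matching is a naive substring check, and sentiment is left out because it needs a real classifier.

```python
import re
from urllib.parse import urlparse

# Rough URL matcher; good enough for a sketch, not a full URL grammar.
URL_RE = re.compile(r"https?://[^\s)>\]]+")


def extract_signals(response: str, brands: list[str]) -> dict:
    """Pull brand mentions, mention order, and cited domains from one AI answer.

    Hypothetical helper: substring matching will also match brand names
    that appear inside URLs or longer words.
    """
    lowered = response.lower()
    # Index of the first occurrence of each brand (-1 if absent).
    first_seen = {b: lowered.find(b.lower()) for b in brands}
    mentioned = sorted(
        (b for b, idx in first_seen.items() if idx >= 0),
        key=lambda b: first_seen[b],
    )
    # Position 1 means the brand was named first in the answer.
    position = {b: rank for rank, b in enumerate(mentioned, start=1)}
    cited_domains = sorted(
        {urlparse(u).netloc.lower() for u in URL_RE.findall(response)}
    )
    return {
        "mentioned": mentioned,
        "position": position,
        "cited_domains": cited_domains,
    }
```

Run on every stored response, records like this are what the visibility, position, and source views would be aggregated from.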

One critical design detail: the same prompt sent twice can produce different answers. AI responses are probabilistic, not deterministic. Reliable AI answer tracking software accounts for this by running multiple samples per prompt across different time windows and computing an averaged stability score. A single snapshot is a photo. A tracking system is a time-lapse.
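A rough sketch of multi-sample tracking for a single prompt follows. Everything here is an assumption for illustration: `ask` stands in for whatever function actually queries an AI platform, and "spread" (the standard deviation of mention rates across windows) is just one simple way to express stability, not a standard definition.

```python
from statistics import mean, pstdev
from typing import Callable


def mention_rate(responses: list[str], brand: str) -> float:
    """Fraction of responses that mention the brand (case-insensitive)."""
    if not responses:
        return 0.0
    hits = sum(brand.lower() in r.lower() for r in responses)
    return hits / len(responses)


def sample_prompt(
    ask: Callable[[str], str],  # hypothetical: sends a prompt, returns the answer text
    prompt: str,
    brand: str,
    windows: int = 3,
    samples_per_window: int = 5,
) -> dict:
    """Sample the same prompt repeatedly and summarize how stable the result is."""
    rates = []
    for _ in range(windows):
        # In a real system each window would run at a different time of day or week.
        responses = [ask(prompt) for _ in range(samples_per_window)]
        rates.append(mention_rate(responses, brand))
    # A low spread means the brand shows up at a consistent rate across windows.
    return {"mean_rate": mean(rates), "spread": pstdev(rates)}
```

A mean rate near 0.5 with a spread near zero is a very different situation from the same mean with a large spread, which is why a single snapshot can mislead.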

The prompt library itself is what separates useful data from misleading data. Tracking only your brand name captures one slice of reality. The real signals live in category-level prompts: “best tools for [use case],” “how do I solve [problem],” “which platform works better for [scenario].” Those are the prompts where you either win new consideration or lose it to a competitor.
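One simple way to assemble category-level prompts is to fill the three templates above from lists of use cases, problems, and scenarios. The sketch below assumes that approach; `CATEGORY_TEMPLATES` and `build_prompt_library` are illustrative names, and the example values are made up.

```python
# Templates taken from the prompt patterns quoted above; the slot names are assumptions.
CATEGORY_TEMPLATES = {
    "use_case": "best tools for {value}",
    "problem": "how do I solve {value}",
    "scenario": "which platform works better for {value}",
}


def build_prompt_library(slots: dict[str, list[str]]) -> list[str]:
    """Expand each slot's values into category-level test prompts."""
    prompts = []
    for slot, values in slots.items():
        template = CATEGORY_TEMPLATES[slot]  # raises KeyError on an unknown slot
        prompts.extend(template.format(value=v) for v in values)
    return prompts


library = build_prompt_library(
    {
        "use_case": ["invoice automation"],
        "problem": ["late customer payments"],
        "scenario": ["a five-person finance team"],
    }
)
```

Real buyer language should replace the placeholder values; the templates only guarantee that the library covers more than branded queries.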

5 Metrics Your AI Answer Tracking Dashboard Should Show (And 2 That Are Overrated)

Not all metrics on an AI answer tracking dashboard deserve equal attention. Here’s how to prioritize.

Share of Model (SoM) is the closest thing to a north star metric for AI search. It measures how often your brand appears across the full set of relevant prompts, relative to competitors. Think of it as market share, measured inside AI responses rather than in sales data.
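As a worked illustration, Share of Model can be approximated as each brand's share of all tracked-brand mentions across the sampled responses. This is one reasonable formulation rather than an industry-standard formula, and `share_of_model` is a hypothetical helper that uses naive substring matching.

```python
from collections import Counter


def share_of_model(responses: list[str], brands: list[str]) -> dict[str, float]:
    """Share of tracked-brand mentions per brand, counting one mention per response."""
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand] += 1
    total = sum(counts.values())
    # With no mentions at all, every brand gets a share of 0.0.
    return {b: (counts[b] / total if total else 0.0) for b in brands}


shares = share_of_model(
    [
        "Acme and Globex are both solid.",
        "Globex leads here.",
        "Consider Initech.",
    ],
    ["Acme", "Globex", "Initech"],
)
# shares == {"Acme": 0.25, "Globex": 0.5, "Initech": 0.25}
```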

Citation Authority asks where AI is sourcing its claims about you. A mention backed by a Forbes feature or an industry benchmark carries different weight than one from a low-authority directory. According to BrightEdge, brands that appear consistently in AI Overviews tend to see a 19% lift in branded search volume, and citation quality is a major factor behind that consistency.

Sentiment Polarity tracks the narrative tone. This is where AI answer tracking diverges most sharply from traditional SEO. A search engine ranks your page regardless of whether the content is favorable or critical. An LLM reads that content and incorporates its tone. If your brand is frequently cited as a cautionary example, your visibility score is working against you.

Brand Association Accuracy measures whether AI describes your product the way you’d describe it yourself. If your positioning is enterprise-grade but ChatGPT consistently calls you “a solid option for small teams,” there’s a messaging gap worth investigating.

Branded Search Lift closes the loop between AI visibility and downstream behavior. When AI repeatedly mentions your brand in category research contexts, Google-side branded queries tend to follow.

The two overrated metrics: raw impression count and single-run rank. Appearing on a screen without being cited or recommended generates minimal commercial intent. And a single high-ranking response tells you nothing about consistency, which is the only form of visibility that compounds.
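To see why a single run says so little, it helps to put an uncertainty range around a mention rate. The sketch below uses the Wilson score interval, a standard formula for proportions; applying it here is an illustrative choice of mine, and it assumes samples are independent, which repeated AI responses only roughly are.

```python
from math import sqrt


def wilson_interval(hits: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a mention rate of hits/n."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = hits / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return max(0.0, center - half), min(1.0, center + half)


single_run = wilson_interval(1, 1)      # one response, brand mentioned
many_runs = wilson_interval(70, 200)    # 200 responses, brand mentioned in 70
```

With one sample the interval spans most of the range from roughly 0.2 to 1.0, so the result is compatible with almost any true rate. With 200 samples it tightens around 0.35, which is the kind of number worth acting on.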

Common Mistakes in AI Answer Tracking (Most Teams Make at Least 2 of These)

73% of marketers are either tracking the wrong signals or misreading the data they already have. Here’s where it breaks down in practice.

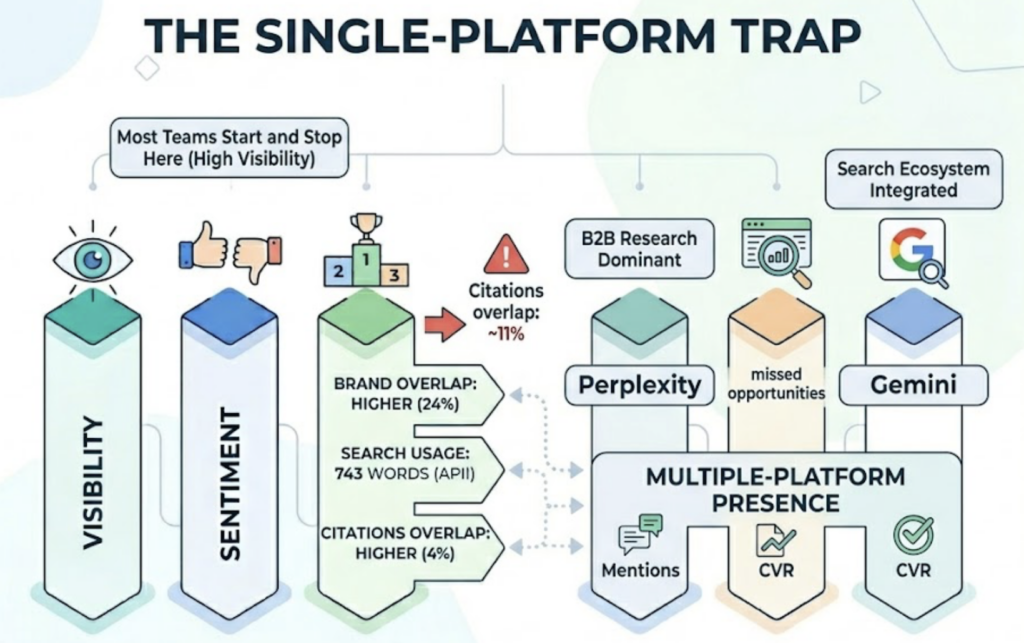

Single-platform focus. Most teams start with ChatGPT and stop there. Perplexity dominates B2B research workflows. Gemini is tightly integrated with Google’s broader search ecosystem. And since the citation overlap between platforms is only around 11%, a brand that looks strong in ChatGPT can be nearly invisible in Perplexity without anyone noticing.
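A quick way to check this for your own brand is to compare the sets of domains each platform cites. The sketch below uses Jaccard similarity (shared domains divided by all domains seen); the article does not say how the overlap figure above was measured, so treat this as one plausible method, with `domain_overlap` and the sample domains as illustrative assumptions.

```python
def domain_overlap(a: set[str], b: set[str]) -> float:
    """Jaccard similarity between two sets of cited domains (0.0 to 1.0)."""
    union = a | b
    if not union:
        return 0.0
    return len(a & b) / len(union)


# Made-up example data: domains cited by two platforms for the same prompt set.
chatgpt_domains = {"g2.com", "reddit.com", "forbes.com"}
perplexity_domains = {"reddit.com", "capterra.com"}

overlap = domain_overlap(chatgpt_domains, perplexity_domains)
# overlap == 0.25 (1 shared domain out of 4 distinct domains)
```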

Tracking brand name only. If your brand appears every time someone searches your exact name but disappears when someone searches your category, you’re defending, not growing. Category-level prompt tracking is where growth signals actually live.

Skipping competitor citation analysis. You need to know not just that a competitor outranks you, but why. Are they being pulled from a high-authority case study? A specific Reddit thread? A third-party benchmark report? Without understanding the citation path, you can’t identify what to build.

Confusing visibility with favorability. High mention frequency paired with cautionary language is a brand risk, not a brand asset. Sentiment analysis isn’t optional. It’s the difference between knowing you exist in AI answers and knowing what AI is actually saying about you.

That’s the gap most brands still can’t see.

How to Build a Strategy Around Your AI Answer Tracking Solution

Data only has value if it feeds a repeatable feedback loop. Here’s a three-step framework that works in practice.

Step 1: Define your prompt library and establish a baseline. Start with 20 to 50 prompts that reflect how your actual buyers research problems, not just your product name. Run these across platforms for one to two weeks to record your baseline visibility scores, sentiment distribution, and source landscape. You can’t measure improvement without knowing where you started.

Step 2: Identify citation gaps and rebuild content toward them. Find the prompts where competitors appear and you don’t. Then analyze what they’re being cited for: specific data points, structured comparisons, third-party media coverage. Those are your content gaps. Fill them with the kind of material AI models are trained to extract from: fact-dense, clearly structured, and sourced from domains the platforms already trust.

Step 3: Set up continuous monitoring and a regular review cadence. AI engines update their citation behavior regularly. What worked three months ago may have already shifted. Topify provides a unified dashboard that tracks your brand across ChatGPT, Gemini, Perplexity, DeepSeek, and other major platforms simultaneously. Its seven-metric framework covers visibility, sentiment, position, volume, mentions, intent, and CVR in a single view, with competitor monitoring and source analysis built in.

For teams building from scratch: Princeton researchers found that GEO-optimized content improves AI visibility by up to 40%. That’s a meaningful lift, but only measurable if you’re tracking both before and after.
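The gap-finding part of Step 2 can be sketched in a few lines. This assumes you already have, for each prompt, the set of brands that appeared in the sampled answers; `citation_gaps` and the data shape are hypothetical, chosen only to illustrate the idea.

```python
def citation_gaps(
    results: dict[str, set[str]],  # prompt -> brands mentioned in its answers
    brand: str,
    competitors: list[str],
) -> dict[str, list[str]]:
    """Return prompts where at least one competitor appears and the brand does not."""
    rivals = set(competitors)
    gaps = {}
    for prompt, mentioned in results.items():
        if brand in mentioned:
            continue  # no gap: the brand is already present for this prompt
        present = sorted(mentioned & rivals)
        if present:
            gaps[prompt] = present
    return gaps


gaps = citation_gaps(
    {
        "best tools for invoice automation": {"Globex", "Initech"},
        "how do I solve late customer payments": {"Acme", "Globex"},
        "which platform works better for startups": set(),
    },
    brand="Acme",
    competitors=["Globex", "Initech"],
)
# gaps == {"best tools for invoice automation": ["Globex", "Initech"]}
```

Each prompt in the result is a candidate for source analysis: look at what those competitors are being cited for before deciding what to build.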

What to Look for in an AI Answer Tracking Solution: A Quick Checklist

Before committing to any AI answer tracking platform, run through these criteria.

Multi-model coverage. Does it track at least five major AI models? ChatGPT, Perplexity, Gemini, DeepSeek, and Claude exhibit meaningfully different citation behaviors. A tool covering only one or two will consistently undercount your real exposure gaps.

Prompt volume and sampling method. How many prompts can you run? How often are results refreshed? Single-run snapshots are not tracking. Look for multi-sample averaging to smooth out the probabilistic noise built into every AI response.

Sentiment analysis depth. A basic positive/negative flag is a starting point. What you actually need is keyword-level extraction: which topics or phrases are triggering cautionary framing, and which prompt types generate your strongest endorsements.

Citation path resolution. Can the platform identify the exact URLs and domains AI cites when describing your brand? This is what makes gap analysis and content strategy actionable.

Competitor benchmarking. Relative data matters more than absolute scores. A 30% visibility rate means something very different if your closest competitor sits at 15% versus 60%.

Pricing that fits your stage. Enterprise-tier AI monitoring tools often start above $5,000 per month. Topify’s Basic plan starts at $99/month, covering 100 prompts and up to 9,000 AI answer analyses per month. That’s a practical entry point for growth-stage teams building a GEO monitoring foundation without an enterprise budget.

| Criteria | What to Look For |
|---|---|
| Platform Coverage | 5+ AI models (ChatGPT, Gemini, Perplexity, DeepSeek, Claude) |
| Prompt Volume | 100+ prompts with multi-sample averaging |
| Sentiment Analysis | Keyword-level extraction, not just positive/negative |
| Citation Path | Exact URL and domain resolution |
| Competitor Monitoring | Relative visibility benchmarking vs. rivals |
| Pricing | Entry plans under $200/mo for growth teams |
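The competitor benchmarking point is easy to check with arithmetic. Here is a small sketch that compares your visibility rate with each rival's, using the 30%, 15%, and 60% figures from the checklist; `relative_visibility` and the rival labels are illustrative names, not part of any tool.

```python
def relative_visibility(own: float, rivals: dict[str, float]) -> dict[str, dict[str, float]]:
    """Compare an own visibility rate (0 to 1) against each rival's rate."""
    report = {}
    for name, rate in rivals.items():
        report[name] = {
            # Positive gap means you lead; negative means you trail (in percentage points).
            "gap_points": round((own - rate) * 100, 1),
            # Ratio above 1.0 means you appear more often than the rival.
            "ratio": own / rate if rate > 0 else float("inf"),
        }
    return report


report = relative_visibility(0.30, {"rival_at_15": 0.15, "rival_at_60": 0.60})
# rival_at_15: +15.0 points, about 2x their rate
# rival_at_60: -30.0 points, about half their rate
```

The same 30% reads as a lead in one market and a serious deficit in the other, which is why absolute scores alone are hard to act on.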

Conclusion

ChatGPT now has 800 million weekly active users who send over a billion queries per day. Half of consumers already use AI tools for product research. The question isn’t whether AI is influencing your buyers’ decisions. It’s whether you know what AI is saying about your brand when those searches happen.

An AI answer tracking solution gives you that visibility. Start with a prompt library that reflects real buyer intent. Establish a baseline. Use the data to identify citation gaps, then close them with content AI platforms are trained to trust. Get started with Topify to run your first cross-platform visibility report and find out where your brand actually stands in AI answers today.

FAQ

Q: What is an AI answer tracking solution?

A: It’s a software system that monitors how your brand appears in AI-generated responses across platforms like ChatGPT, Perplexity, and Gemini. Unlike traditional SEO tools that track webpage rankings, an AI answer tracking solution measures citation frequency, sentiment, position, and source attribution inside AI-synthesized answers.

Q: How do I measure the effectiveness of an AI answer tracking solution?

A: Track two things in parallel. First, data depth: does it cover multiple AI platforms, multiple prompt types, and sentiment analysis? Second, downstream impact: as your AI visibility improves, are you seeing a corresponding lift in branded search volume and higher-intent referral traffic? Both should move together over a 4-to-8-week optimization cycle.

Q: How is an AI answer tracking solution different from traditional SEO tools?

A: Traditional SEO tools measure deterministic outputs. A URL either ranks at position 3 or it doesn’t. AI answer tracking software measures probabilistic outputs: a brand appears in 35% of relevant AI responses, with a 72% positive sentiment score. The data type is fundamentally different, which means the optimization strategy has to be different too.

Q: What’s the typical pricing for an AI answer tracking solution?

A: It varies significantly by capability and scale. Entry-level platforms like Topify start at $99/month for core tracking across major AI platforms. Mid-market solutions typically range from $300 to $500/month. Full-scale enterprise or agency platforms can run several thousand dollars per month depending on prompt volume, platform breadth, and reporting depth.