Your domain authority is solid. Your keyword rankings are strong. But none of that tells you whether ChatGPT just recommended your competitor instead of you.

That’s the gap traditional SEO tools weren’t built to cover. As AI-powered search platforms captured 12% to 15% of global market share by the end of 2025, up from just 5% at the start of that year, standard dashboards kept reporting impressions and clicks while missing the conversations that actually shape purchase decisions. AI answer tracking software fixes that blind spot directly.

What AI Answer Tracking Software Actually Measures

AI answer tracking software monitors how your brand appears inside AI-generated responses, not just whether your URL ranks on a results page.

The distinction matters because AI search operates differently. When someone asks ChatGPT “what’s the best project management software for remote teams,” the platform doesn’t return ten blue links. It synthesizes an answer, names specific products, and explains why. Your brand either appears in that answer or it doesn’t. Traditional SEO tools have no way to capture this.

A proper AI answer tracking solution measures several things at once: how often your brand gets mentioned across a defined set of prompts (visibility rate), where it appears in the AI’s response relative to competitors (position), how the AI describes your product in terms of tone and accuracy (sentiment), and which external sources the AI cited to support that description (source analysis).

That’s a fundamentally different data layer than keyword rankings.

How AI Answer Tracking Works: The Data Layer Behind the Dashboard

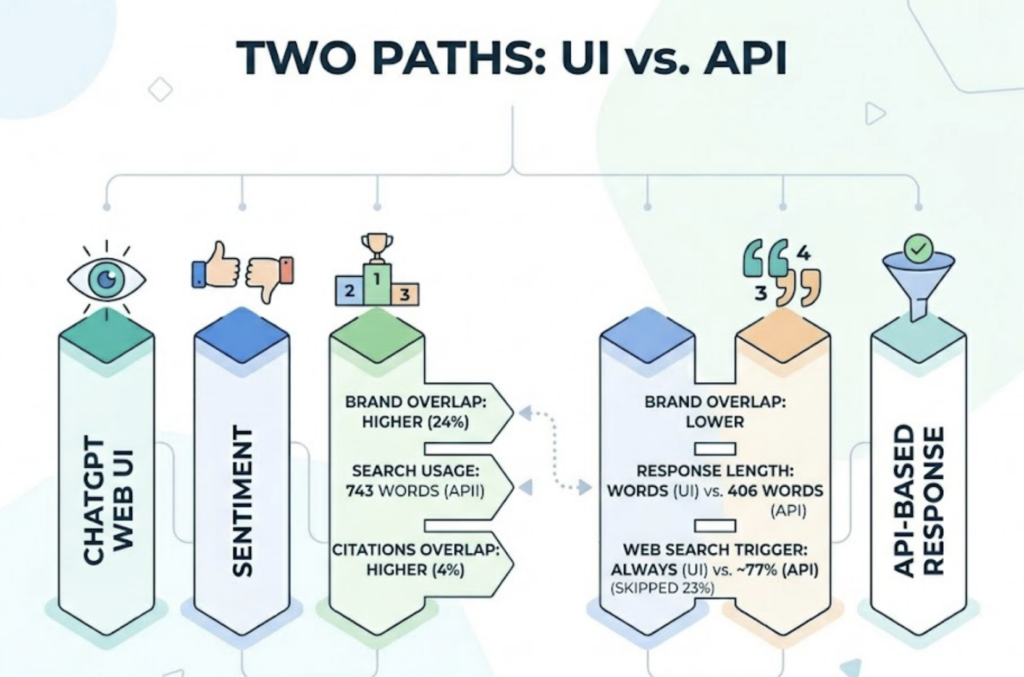

The mechanics behind an AI answer tracking system come down to one core design choice: whether it pulls data from the actual user interface or from an API.

This distinction is more consequential than most teams realize. A study of 1,000 prompt executions found that only 24% of brands identified in the real ChatGPT interface also appeared in API-based responses. For citations specifically, the overlap dropped to just 4%. Consumer-facing interfaces apply proprietary system prompts, trigger real-time web searches, and return responses averaging 743 words. API calls return 406 words on average and skip web search in roughly 23% of cases.

The bottom line: if your AI answer tracking tool pulls from the API, you’re optimizing for an environment your customers never actually use.

Quality AI answer tracking platforms simulate real user behavior instead. They send structured prompts to each AI platform’s consumer interface, parse the returned answers, extract brand mentions and citations, and aggregate the results into a dashboard that reflects what users genuinely see.
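The parse-and-extract step can be illustrated with a minimal Python sketch. It assumes the answer text has already been captured from the consumer interface; the brand names (`AcmePM`, `TaskNest`, `FlowDesk`), the example domains, and both function names are hypothetical and do not describe how any particular platform implements this.

```python
import re


def extract_mentions(answer: str, brands: list[str]) -> list[str]:
    """Return the tracked brands found in an answer, ordered by first appearance."""
    found = []
    for brand in brands:
        # Whole-word, case-insensitive match on the brand name.
        match = re.search(rf"\b{re.escape(brand)}\b", answer, flags=re.IGNORECASE)
        if match:
            found.append((match.start(), brand))
    return [brand for _, brand in sorted(found)]


def extract_cited_domains(answer: str) -> list[str]:
    """Return the unique domains of URLs in an answer, in order of appearance."""
    domains = []
    for match in re.finditer(r"https?://([^/\s)\]]+)", answer):
        domain = match.group(1).lower().removeprefix("www.").rstrip(".,")
        if domain not in domains:
            domains.append(domain)
    return domains


# Hypothetical answer text, for illustration only.
answer = (
    "For remote teams, TaskNest is a solid pick "
    "(https://www.example-reviews.com/pm-tools). "
    "AcmePM also works well; see https://forum.example.org/thread/42."
)
print(extract_mentions(answer, ["AcmePM", "TaskNest", "FlowDesk"]))
print(extract_cited_domains(answer))
```

The order of the returned brands doubles as a rough position signal: the first name in the list is the first one the answer mentioned.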

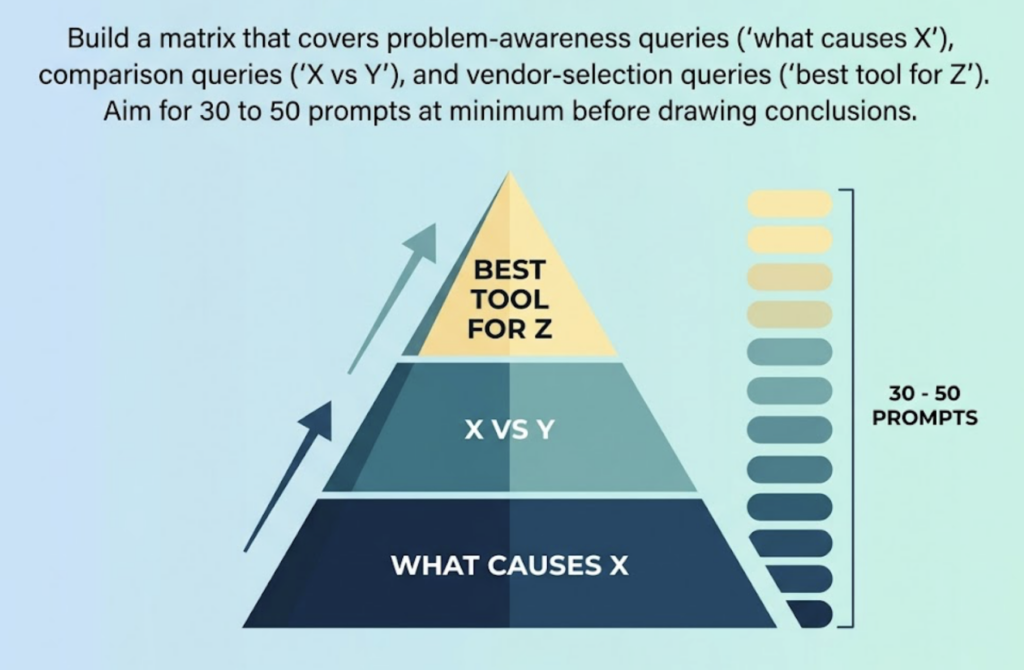

The prompt set design also matters. Tracking too few prompts gives you an incomplete picture. Tracking the wrong prompts (brand name queries only, for example) tells you nothing about category-level visibility, which is where most purchase decisions actually start.

5 Metrics Your AI Answer Tracking Dashboard Can’t Skip

Not all dashboards surface the same data. Here are the five metrics that directly affect revenue, and why each one earns its place.

Visibility Rate (Answer Share of Voice) measures how often your brand appears across your tracked prompt set. The formula is straightforward: prompts including your brand divided by total prompts tested, multiplied by 100. This becomes your baseline. Everything else is measured against it.
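As a quick illustration of that formula, here is a small Python sketch. The prompt texts, brand names, and the `visibility_rate` helper are made up for the example; it assumes you already have a list of mentioned brands for each tracked prompt.

```python
def visibility_rate(mentions_by_prompt: dict[str, list[str]], brand: str) -> float:
    """Percentage of tracked prompts whose answer mentions the brand."""
    if not mentions_by_prompt:
        raise ValueError("at least one tracked prompt is required")
    hits = sum(1 for mentions in mentions_by_prompt.values() if brand in mentions)
    return 100 * hits / len(mentions_by_prompt)


# Hypothetical results: prompt -> brands mentioned in the AI answer.
tracked = {
    "best project management software for remote teams": ["TaskNest", "AcmePM"],
    "AcmePM vs TaskNest": ["AcmePM", "TaskNest"],
    "what causes missed sprint deadlines": [],
    "best tool for sprint planning": ["AcmePM"],
}
print(visibility_rate(tracked, "AcmePM"))  # 3 of 4 prompts mention AcmePM: 75.0
```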

Position tracks where your brand lands within the AI’s response. Being the first product mentioned versus the third carries real weight. Research shows that being cited within an AI summary drives 35% more organic clicks and 91% more paid clicks compared to queries where the AI ignores your brand entirely.

Sentiment Score catches something visibility alone misses. An AI answer tracking analytics setup that only flags brand mentions without evaluating tone will let a problem go unnoticed. If ChatGPT is describing your enterprise product as “great for small teams on a budget,” high visibility is actually working against your positioning.

Source Analysis is the most actionable metric for content teams. It shows which domains the AI cited to support its recommendations. If your competitors dominate those citation sources and you don’t appear on them, you’ve identified exactly where to focus next.

Competitor Share provides context for all of the above. Your visibility rate at 40% means something different if competitors are sitting at 70% versus 15%.
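One way to put your own rate in context is to compute the same percentage for every tracked brand and look at the gap to the strongest rival. The sketch below is illustrative only; the data, brand names, and function names are assumptions.

```python
def share_by_brand(mentions_by_prompt: dict[str, list[str]], brands: list[str]) -> dict[str, float]:
    """Visibility rate for each brand, sorted from highest to lowest."""
    total = len(mentions_by_prompt)
    if total == 0:
        raise ValueError("at least one tracked prompt is required")
    rates = {
        brand: 100 * sum(1 for m in mentions_by_prompt.values() if brand in m) / total
        for brand in brands
    }
    return dict(sorted(rates.items(), key=lambda item: item[1], reverse=True))


def gap_to_leader(rates: dict[str, float], own_brand: str) -> float:
    """Percentage points between your brand and the best rival (negative when behind)."""
    best_rival = max(rate for brand, rate in rates.items() if brand != own_brand)
    return rates[own_brand] - best_rival


# Hypothetical results across five prompts.
results = {
    "p1": ["TaskNest", "AcmePM"],
    "p2": ["TaskNest"],
    "p3": ["TaskNest", "FlowDesk"],
    "p4": ["AcmePM", "TaskNest"],
    "p5": [],
}
rates = share_by_brand(results, ["AcmePM", "TaskNest", "FlowDesk"])
print(rates)
print(gap_to_leader(rates, "AcmePM"))
```

In this made-up data set, a 40% visibility rate looks weak because the leading rival sits at 80%, a gap of 40 percentage points.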

Topify tracks all five of these within a single dashboard, alongside Volume, Intent, and CVR, covering the full picture of how AI systems interact with your brand across ChatGPT, Gemini, Perplexity, DeepSeek, and others.

Common Mistakes That Quietly Break Your AI Answer Tracking Strategy

Most teams don’t get bad data from their AI answer tracking platform. They get bad data from how they use it.

Tracking only one platform is the most common error. ChatGPT currently holds 60% to 68% of AI chatbot traffic share, which makes it the obvious starting point. But research consistently shows little overlap between what different AI engines cite. A brand that appears prominently in ChatGPT answers may be nearly invisible on Perplexity, which runs on a freshness and community-consensus model. Monitoring one platform gives you a partial picture and a false sense of security.

Using API-based monitoring tools creates the accuracy problem described above. If the tool doesn’t replicate real user sessions in the actual interface, the data it returns reflects a version of the AI your customers never encounter.

Ignoring sentiment while chasing visibility rate optimizes for the wrong outcome. A high mention rate paired with negative or inaccurate AI descriptions is worse than low visibility because it actively shapes perception before the user even visits your site.

Treating AI answer tracking as a monthly report rather than ongoing monitoring misses the pace at which AI citation patterns shift. Platform algorithms update, new competitors enter the space, and source preferences change. Weekly visibility snapshots catch these shifts before they compound.

Skipping source analysis removes the clearest signal for content prioritization. Without knowing which domains the AI trusts in your category, you’re improving content in the dark.

A Practical Strategy for AI Answer Tracking: From Setup to Ongoing Optimization

The goal of an AI answer tracking system isn’t to produce reports. It’s to create a feedback loop between what AI says about your brand and what your content team does next.

Here’s how that loop works in practice.

Start with prompt architecture. Your tracked prompt set should reflect how your target audience actually researches your category, not how you wish they’d describe it. Build a matrix that covers problem-awareness queries (“what causes X”), comparison queries (“X vs Y”), and vendor-selection queries (“best tool for Z”). Aim for 30 to 50 prompts at minimum before drawing conclusions.
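A prompt matrix like this can be generated rather than written by hand. The following Python sketch is one possible approach, not a prescribed method: the phrasing templates mirror the three query types above, and every input value and function name is a placeholder.

```python
from itertools import combinations


def build_prompt_set(
    problems: list[str],
    vendors: list[str],
    category: str,
    segments: list[str],
) -> list[tuple[str, str]]:
    """Return (intent, prompt) pairs covering the three query types."""
    prompts = []
    for problem in problems:
        prompts.append(("problem_awareness", f"what causes {problem}"))
    # One comparison prompt per unordered pair of vendors.
    for first, second in combinations(vendors, 2):
        prompts.append(("comparison", f"{first} vs {second}"))
    for segment in segments:
        prompts.append(("vendor_selection", f"best {category} for {segment}"))
    return prompts


def meets_minimum(prompts: list[tuple[str, str]], minimum: int = 30) -> bool:
    """Check the set against the lower bound of the 30-to-50 prompt guideline."""
    return len(prompts) >= minimum


# Hypothetical inputs; a real set would be far larger.
prompts = build_prompt_set(
    problems=["missed sprint deadlines", "scope creep"],
    vendors=["AcmePM", "TaskNest", "FlowDesk"],
    category="project management software",
    segments=["remote teams", "agencies"],
)
print(len(prompts), meets_minimum(prompts))
```

Real audience phrasing from sales calls, support tickets, and community forums should replace the templates wherever possible.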

Establish a baseline in month one. Before optimizing anything, document your current visibility rate, position distribution, and sentiment scores across each platform. This becomes the benchmark everything is measured against.

Run source gap analysis. Pull the domains your AI answer tracking tool surfaces as citation sources. Map these against your own content and backlink profile. The domains where competitors appear and you don’t are your highest-leverage content targets.
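The gap analysis itself is a simple filter once the data is exported. This sketch assumes two hypothetical inputs: a citation count per domain, and a record of which brands appear on each domain. The field layout and names are illustrative, not the export format of any specific tool.

```python
def source_gaps(
    citations: dict[str, int],
    presence: dict[str, set[str]],
    own_brand: str,
) -> list[tuple[str, int]]:
    """Domains the AI cites where at least one brand appears but yours does not,
    ordered by how often the AI cited them."""
    gaps = [
        (domain, count)
        for domain, count in citations.items()
        if presence.get(domain) and own_brand not in presence[domain]
    ]
    return sorted(gaps, key=lambda item: item[1], reverse=True)


# Hypothetical data: how often each domain was cited, and who appears on it.
citations = {"example-reviews.com": 18, "forum.example.org": 7, "blog.example.net": 11}
presence = {
    "example-reviews.com": {"TaskNest"},
    "forum.example.org": {"AcmePM", "TaskNest"},
    "blog.example.net": {"FlowDesk"},
}
print(source_gaps(citations, presence, "AcmePM"))
```

Sorting by citation count puts the most frequently cited gaps at the top of the list.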

Align content output to citation signals. Pages with a clear H1 to H2 to H3 hierarchy are 40% more likely to be cited by AI systems, and sections between 120 and 180 words see 70% more citations than thin content under 50 words. Structured, information-dense content isn’t just good writing practice. It’s a technical requirement for AI visibility.
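A rough way to audit existing pages against that length guideline is to count words per section. The sketch below treats the 120-to-180-word range as the figure quoted above rather than a verified threshold, and assumes sections have already been split out by heading; the function names are illustrative.

```python
def section_word_counts(sections: dict[str, str]) -> dict[str, int]:
    """Map each section heading to the number of whitespace-separated words in its body."""
    return {heading: len(body.split()) for heading, body in sections.items()}


def sections_outside_range(sections: dict[str, str], low: int = 120, high: int = 180) -> list[str]:
    """Headings whose body length falls outside the target word range."""
    counts = section_word_counts(sections)
    return [heading for heading, count in counts.items() if not low <= count <= high]


# Hypothetical page: one section in range, one thin section.
page = {
    "How pricing works": "word " * 150,
    "FAQ": "word " * 40,
}
print(section_word_counts(page))
print(sections_outside_range(page))
```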

Review every two weeks, not every month. Well-structured pages can begin appearing in AI answers within 2 to 4 weeks of publication or update. Checking in every two weeks lets you catch early wins and course-correct before a full reporting cycle ends.

Topify’s One-Click Execution feature closes the loop between analysis and action. You define your goals in plain English, review the proposed GEO strategy, and deploy without manual workflows. In practice, this compresses the gap between spotting a citation opportunity and acting on it.

AI Answer Tracking Software Checklist: What to Look for Before You Commit

Not all platforms in this category deliver the same value. Here’s what separates the tools worth paying for from those that produce noise.

| Evaluation Criterion | Why It Matters | What to Look For |
|---|---|---|
| Platform coverage | Different AI engines cite different sources | ChatGPT, Perplexity, Gemini, and ideally DeepSeek |
| Data source (UI vs API) | Only 24% of brands seen in the real ChatGPT interface also appeared in API responses | Browser-based scraping, not API calls |
| Prompt depth | Shallow prompt sets miss category-level visibility | Support for 100+ prompts; custom prompt builder |
| Sentiment analysis | High visibility with negative tone harms positioning | 0–100 sentiment scoring per platform |
| Competitor monitoring | Context for interpreting your own metrics | Auto-detection of new competitors in AI answers |
| Source analysis | Identifies content gaps and citation targets | Domain-level citation tracking with trend data |
| Actionability | Data without direction doesn’t move the needle | Strategy recommendations or automated execution |

Topify’s Basic plan at $99/month covers ChatGPT, Perplexity, and AI Overviews tracking with 100 prompts and 9,000 AI answer analyses per month. This is typically enough for a single brand establishing its first visibility baseline. The Pro plan at $199/month expands to 250 prompts and 22,500 analyses, which suits teams running parallel campaigns or managing multiple product lines. The 30-day trial on both plans means you can run a full baseline audit before committing.

That’s worth noting because the first 30 days of AI answer tracking are almost entirely diagnostic. You’re not optimizing yet. You’re finding out where you actually stand.

AI Answer Tracking Software Pricing: What Teams Actually Pay in 2026

The pricing landscape for AI answer tracking platforms spans a wide range, and the right tier depends less on company size than on how many prompts you need to track and how many AI platforms matter to your audience.

Entry-level tools like Otterly.ai start at $29/month and are useful for getting a directional sense of brand presence. At this tier, platform coverage and prompt limits are usually narrow. The data is a starting point, not a system.

Mid-market platforms typically price between $79 and $199/month. Topify’s Pro plan at $199/month sits here, covering 250 prompts, 10 seats, and 8 projects, with UI-based tracking rather than API-dependent data. This tier makes sense for in-house marketing teams or agencies managing a handful of client brands.

Enterprise-grade tools carry a different cost structure. BrightEdge starts at $30,000 per year. Profound.ai, which raised $35 million in Series B funding, runs from approximately $499/month up to $5,000+ for larger organizations. At this level, you’re typically paying for compliance features, deep workflow integrations, and dedicated account management rather than fundamentally different tracking accuracy.

One practical note on budget: organizations with 1,000 to 5,000 employees are spending an average of $90,000 to $110,000 per month on AI software broadly. AI answer tracking is a small line item within that context, but it’s the one that tells you whether the rest of the AI investment is showing up where buyers actually look.

Topify’s usage-based pricing model is designed to reflect how teams actually use the product. Start with the prompt volume you need now. Expand as your GEO strategy matures and the data starts driving content decisions.

Conclusion

Traditional SEO dashboards were built for a world where visibility meant a URL near the top of a results page. That model still works for navigational queries. But 58.5% of US searches in 2025 were resolved without an external click, and AI Overviews push that zero-click rate to 83%. The question is no longer whether AI search affects your brand. It’s whether you’re tracking it.

AI answer tracking software gives teams a structured way to answer that question. Start with the right data source (UI-based, not API), build a prompt set that reflects real user behavior, and establish a baseline before optimizing anything. The conversion upside is real: AI-referred traffic converts at 4.4 times the rate of standard organic search, because users who discover your brand through an AI answer have already been partially pre-qualified. Getting the tracking right is step one. Get started with Topify to run your first visibility audit in under 30 minutes.

FAQ

Q: What is AI answer tracking software?

A: AI answer tracking software monitors how your brand appears inside AI-generated responses across platforms like ChatGPT, Perplexity, and Google AI Overviews. It tracks visibility rate, position, sentiment, and citation sources, none of which traditional SEO dashboards are designed to capture.

Q: How do I measure the effectiveness of my AI answer tracking system?

A: The primary metric is Answer Share of Voice (ASoV): the percentage of tracked prompts where your brand appears. Track this alongside position (where in the response your brand lands), sentiment score, and source citation coverage. Improvement across all four signals over 30 to 60 days indicates an effective tracking and optimization loop.

Q: How much does AI answer tracking software cost?

A: Entry-level tools start around $29/month with limited platform coverage. Mid-market platforms like Topify range from $99/month (100 prompts across ChatGPT, Perplexity, and AI Overviews) to $199/month (250 prompts, 10 seats). Enterprise platforms cost considerably more: Profound.ai runs from approximately $499/month up to $5,000+, and BrightEdge starts at $30,000 per year.

Q: What’s the difference between an AI answer tracking tool and traditional SEO monitoring?

A: Traditional SEO tools track keyword rankings, impressions, and click-through rates on search results pages. AI answer tracking tools track brand mentions inside synthesized AI responses, where there is no URL to rank and no click to measure. The two systems measure different things, and as zero-click AI search grows, the gap between them widens.