Every day, millions of users ask ChatGPT, Perplexity, and Gemini which tools to use, which brands to trust, and which products to buy. The AI answers. Your brand either appears in that answer or it doesn’t.

Most enterprise marketing teams don’t know which one is happening.

According to Gartner, 51% of consumers have already changed their research habits due to generative AI. Traditional search volume is projected to decline 25% by 2026. The traffic doesn’t disappear — it migrates into conversational AI interfaces. And in those interfaces, there’s no page two. There’s only the answer.

That’s the gap most brands still can’t see.

Your SEO Dashboard Won’t Show You This

The instinct to layer AI tracking onto existing SEO infrastructure makes sense, but it misses the fundamental difference between the two systems.

Traditional SEO monitoring tracks URLs in a ranked list. The output is comparatively stable: for a given query, a page either ranks or it doesn’t, and crawlers can verify that. AI answer tracking works differently. Platforms like ChatGPT and Perplexity use Retrieval-Augmented Generation (RAG) to synthesize responses dynamically. The output is non-deterministic text, not a stable SERP link. There’s nothing for a standard crawler to index.

The practical consequence: a brand can rank #1 on Google for a keyword and be completely absent from the AI summary for the same query. The AI might cite a third-party review site, a Reddit thread, or an industry whitepaper instead of the brand’s own page. SEO monitoring would show no problem. AI answer tracking would show a serious one.

| Dimension | Traditional SEO Monitoring | AI Answer Tracking |
|---|---|---|
| Detection Method | Web crawler / keyword index | Real-time prompt analysis |
| Response Type | Static, permanent SERP links | Dynamic, non-deterministic text |
| Primary Goal | Traffic/clicks to owned site | Citations/authority within AI answers |
| Visibility Metric | Keyword ranking (1-100) | Share of Model / Citation Rate |
| Key Variable | Backlinks and site speed | Entity clarity and machine-scannable facts |

SEO asks: did I rank? AI answer tracking asks: how was my brand synthesized? Those are different questions, and they need different infrastructure to answer.

The 5 Metrics That Tell You Where Your Brand Actually Stands in AI

Building an AI visibility index requires moving past vanity metrics. Here’s what enterprise teams need to track, and what each metric actually tells you.

Visibility Rate (Share of Model)

This is your foundational KPI. Visibility Rate measures how often your brand appears in AI-generated responses across a defined universe of prompts. Because AI outputs are probabilistic, a single manual check is statistically meaningless. Research from SparkToro shows that if you ask an AI tool for brand recommendations 100 times, there’s less than a 1% chance you’ll get the same list in the same order twice.

Visibility Rate is calculated at scale: divide the number of responses mentioning your brand by the total number of responses generated, across thousands of runs. That’s the only way to get a number you can actually act on.
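To make the arithmetic concrete, here is a minimal Python sketch of the calculation. The function names are hypothetical, the brand check is a naive case-insensitive substring match (an assumption; a production system would need proper entity matching), and the Wilson score interval is an addition of ours to show how uncertain a small sample is. It is not something the tools above are known to use.

```python
import math

def visibility_rate(responses, brand):
    """Return (hits, total, rate) for responses that mention the brand.

    Assumption: a case-insensitive substring match counts as a mention.
    """
    if not responses:
        raise ValueError("need at least one response")
    hits = sum(1 for text in responses if brand.lower() in text.lower())
    return hits, len(responses), hits / len(responses)

def wilson_interval(hits, n, z=1.96):
    """Wilson score interval for a proportion (z=1.96 is roughly 95%)."""
    p = hits / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half, centre + half

# Example with made-up responses and placeholder brand names.
sample = ["Try Acme or Globex", "Globex is best", "acme leads", "Initech"]
hits, total, rate = visibility_rate(sample, "Acme")
low, high = wilson_interval(hits, total)
```

With only four responses the interval is very wide, which is the practical reason a handful of manual checks cannot support a decision.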

Sentiment Score

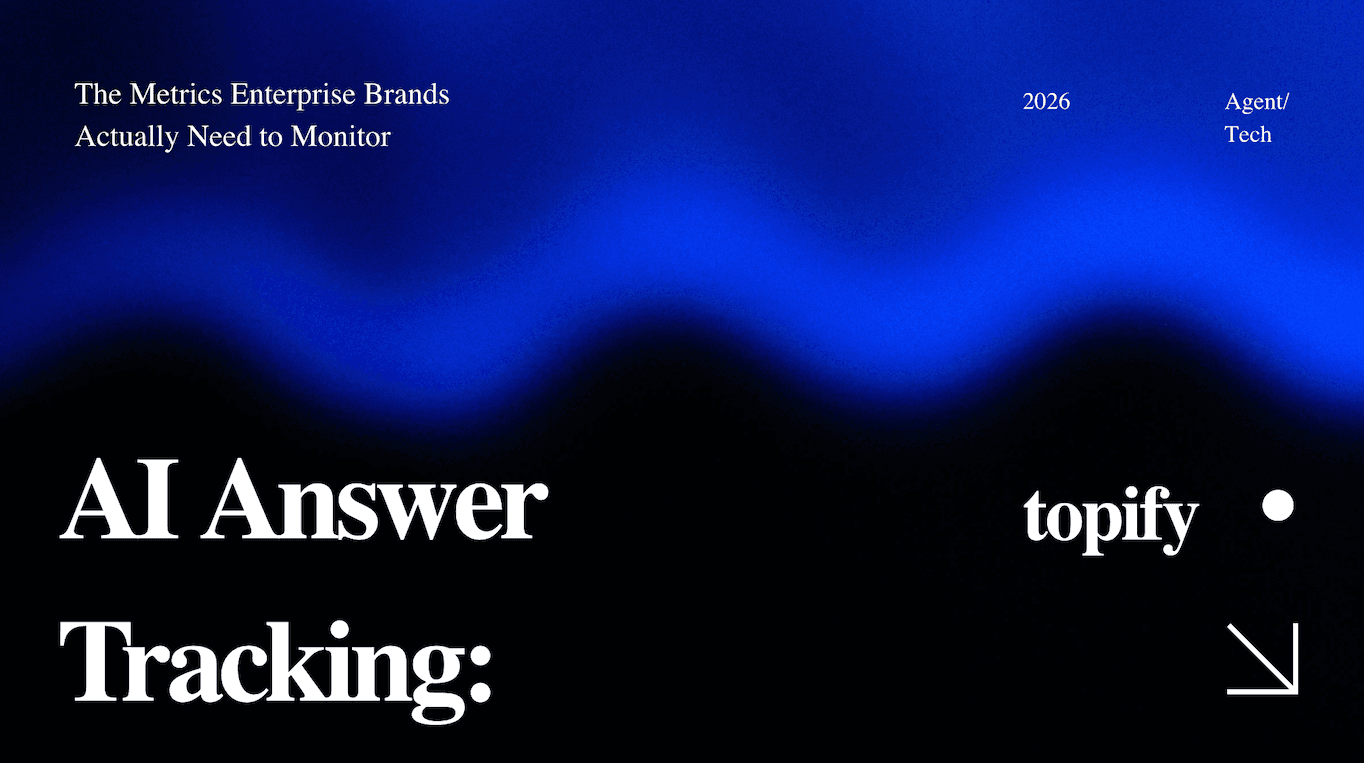

AI doesn’t just mention brands — it characterizes them. Sentiment tracking evaluates whether the model describes your brand as a leader, lists it neutrally as one option among several, or (worst case) associates it with outdated data or incorrect pricing. The last scenario is what practitioners call “hallucination risk,” and it’s more common than most brands realize. If the AI confidently states the wrong price point for your enterprise plan, that misinformation reaches every user who asks.

Position Rank

In a conversational answer, the first brand mentioned typically carries the highest implied endorsement. Position Rank tracks where your brand sits relative to competitors across purchase-intent prompts. On queries like “best AI monitoring tool for large brands,” being third instead of first is a measurable competitive disadvantage.
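One way to approximate Position Rank, sketched in Python under simple assumptions: brands are ordered by where they are first mentioned in the response text, and a mention is a case-insensitive substring match. The function names and the brand names in the example are placeholders.

```python
def position_rank(response, brands, target):
    """1-based rank of `target` by order of first mention, or None if absent."""
    text = response.lower()
    # Character offset of each brand's first mention (-1 means not mentioned).
    first_seen = {b: text.find(b.lower()) for b in brands}
    mentioned = sorted((pos, b) for b, pos in first_seen.items() if pos >= 0)
    for rank, (_, b) in enumerate(mentioned, start=1):
        if b == target:
            return rank
    return None

def mean_position(responses, brands, target):
    """Average rank across the responses where the target appears at all."""
    ranks = [
        r for r in (position_rank(t, brands, target) for t in responses)
        if r is not None
    ]
    return sum(ranks) / len(ranks) if ranks else None

brands = ["Acme", "Globex", "Initech"]
runs = ["Acme then Globex", "Globex then Acme", "Initech"]
avg = mean_position(runs, brands, "Acme")  # Acme is 1st once and 2nd once
```

Responses that omit the brand are excluded from the average here, so this number is best read alongside Visibility Rate rather than on its own.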

Source Attribution

AI models don’t generate information from nothing. They pull from specific URLs and domains via RAG. Source Attribution identifies which sources the AI is actually citing when it mentions your brand. Often, it’s not your own website — it’s a G2 review, a Reddit thread, or an industry comparison post. Knowing which sources the model trusts tells you exactly where to invest in PR and content placement.
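As a rough illustration, the tally behind Source Attribution can be sketched with the Python standard library. This assumes you have already collected the citation URLs attached to responses that mention your brand; how those URLs are collected is platform-specific and not shown. The function name and example URLs are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse

def cited_domains(citations):
    """Count how often each domain appears in a list of citation URLs."""
    counts = Counter()
    for url in citations:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]  # treat www.example.com and example.com as one
        if host:  # strings without a scheme have no netloc and are skipped
            counts[host] += 1
    return counts

urls = [
    "https://www.g2.com/products/x",
    "https://g2.com/y",
    "https://reddit.com/r/z",
]
top_sources = cited_domains(urls).most_common(5)
```

The domains at the top of the list are the ones the model leans on most for your category, which is where the PR and content placement decisions start.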

Prompt Coverage

This metric maps how broad your brand’s presence is across different user intents. Discovery prompts (“what are the best tools for X?”), educational prompts (“how do I solve Y?”), and transactional prompts (“compare Brand A and Brand B pricing”) each represent a different stage in the buyer journey. A brand that only appears in one category has a fragile visibility position.
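Prompt Coverage can be sketched as a per-intent mention rate. The Python below assumes each response has already been labeled with the intent of the prompt that produced it; the labels, function names, and brand names are illustrative only.

```python
def prompt_coverage(results, brand):
    """Map each intent to the share of its responses that mention the brand.

    `results` is a list of (intent, response_text) pairs.
    """
    totals, hits = {}, {}
    for intent, text in results:
        totals[intent] = totals.get(intent, 0) + 1
        if brand.lower() in text.lower():
            hits[intent] = hits.get(intent, 0) + 1
    return {intent: hits.get(intent, 0) / n for intent, n in totals.items()}

def uncovered_intents(coverage, floor=0.0):
    """Intents whose mention rate is at or below `floor`."""
    return sorted(i for i, rate in coverage.items() if rate <= floor)

results = [
    ("discovery", "Acme and Globex"),
    ("discovery", "Globex"),
    ("educational", "Use Globex"),
    ("transactional", "Acme costs less"),
]
coverage = prompt_coverage(results, "Acme")
gaps = uncovered_intents(coverage)  # intents where the brand never appears
```

In this made-up sample the brand shows up for discovery and transactional prompts but not educational ones, which is the kind of fragile position described above.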

Why Monthly Reporting Is Too Slow for AI Visibility

Most enterprise teams still run monthly AI visibility reports. That cadence made sense for SEO. It doesn’t work for AI.

AI models are not static. Model retraining shifts how authority is weighted. RAG cache cycles mean new content may take days or weeks to appear in responses. Non-deterministic sampling means outputs vary even within the same day. The result: 40-60% of cited sources in AI answers change month-to-month.
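To measure that kind of turnover on your own data, one possible definition of churn is the share of last period's cited sources that no longer appear this period. The sketch below uses that definition as an assumption; the figure quoted above may have been computed differently.

```python
def citation_churn(previous, current):
    """Share of previously cited sources that are absent from the current set."""
    prev, cur = set(previous), set(current)
    if not prev:
        return 0.0  # nothing was cited before, so nothing could churn
    return len(prev - cur) / len(prev)

last_month = ["a.com", "b.com", "c.com", "d.com"]
this_month = ["a.com", "b.com", "e.com"]
churn = citation_churn(last_month, this_month)  # c.com and d.com dropped out
```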

A monthly report gives you a single data point in a moving system. If a competitor publishes a strong comparison post on a Thursday and the AI starts favoring it by Friday, your monthly report in two weeks won’t tell you that. You’ll find out when you notice the pipeline has dried up.

The standard for enterprise-grade monitoring is daily. Topify runs 9,000+ AI answer analyses continuously, using high-N sampling to estimate the probability of visibility with a margin of error small enough to act on. That’s not a snapshot; it’s a trend line you can actually use to make decisions.
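For a sense of why sample size matters, here is a back-of-the-envelope Python sketch using the standard normal-approximation formula for estimating a proportion. It illustrates the general statistics, not how any particular vendor sizes its runs, and `runs_needed` is a hypothetical helper name.

```python
import math

def runs_needed(margin, expected_rate=0.5, z=1.96):
    """Responses needed to estimate a mention rate within +/- `margin`.

    Uses n = z^2 * p * (1 - p) / margin^2; p = 0.5 is the worst case,
    and z = 1.96 corresponds to roughly 95% confidence.
    """
    return math.ceil(z**2 * expected_rate * (1 - expected_rate) / margin**2)

per_prompt = runs_needed(0.05)  # 385 runs for a +/- 5 point margin
```

Tightening the margin raises the requirement quickly, because the run count grows with the inverse square of the margin.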

One more thing worth flagging: AI referral traffic converts at 4.4x the rate of traditional organic search. The brands that track this channel daily are capturing high-intent pipeline. The ones doing monthly check-ins are consistently late.

What Enterprise Brands Need That Lightweight Tools Can’t Deliver

There’s a growing category of lightweight AI visibility tools that offer quick checks and basic snapshots. For small teams tracking a handful of prompts, that’s often enough. For enterprise brands, it typically isn’t.

The reasons come down to three structural requirements.

Multi-platform coverage. A brand’s visibility is rarely consistent across AI platforms. It’s common to dominate Perplexity while being absent from ChatGPT, or to appear in Gemini but be mischaracterized in Claude. Enterprise monitoring needs to cover the major platforms simultaneously: ChatGPT, Perplexity, Gemini, Claude, Copilot, and Google AI Overviews. Lightweight tools often track one or two of these. That leaves significant blind spots.

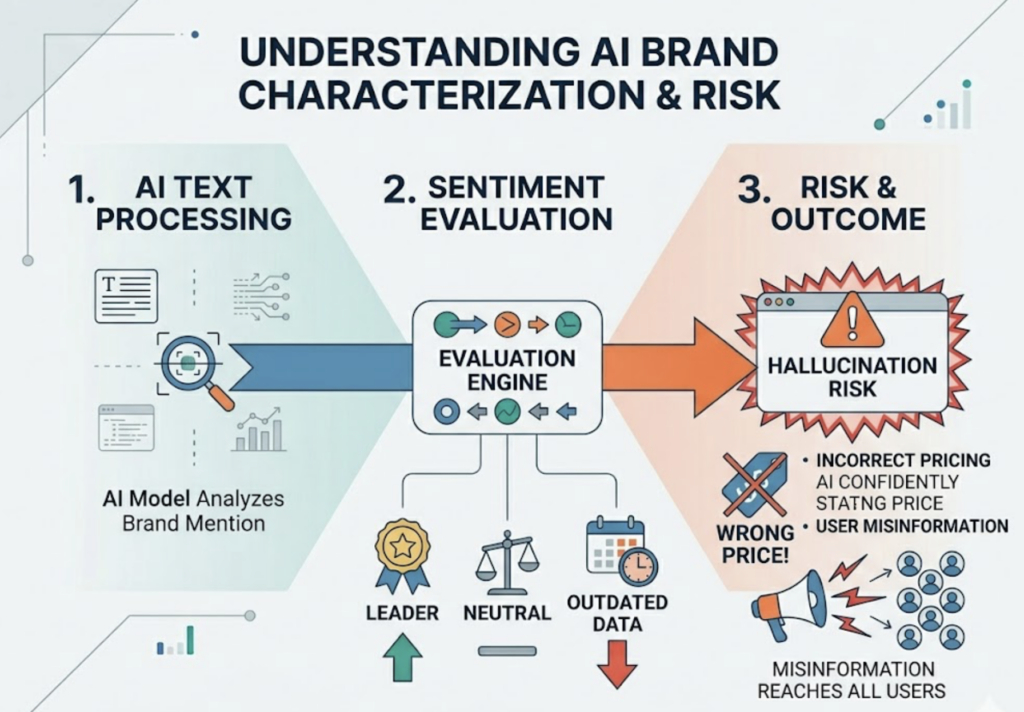

Prompt scale. A startup might track 10 to 20 core prompts. An enterprise brand with multiple product lines, regional markets, and buyer segments needs to monitor hundreds or thousands of long-tail prompt variations. Topify’s AI visibility tracking tools for enterprise brands support up to 250 prompts in the Pro tier, with concurrent analysis across platforms to surface so-called “Dark Queries”: prompts where your brand should appear but currently doesn’t.

Collaborative infrastructure. AI visibility tracking isn’t one team’s job. The PR team needs to monitor third-party citation health. The content team needs to track educational prompt coverage. The brand team watches sentiment. Topify provides team seats, shared dashboards, and role-appropriate views so each function sees what’s relevant to their work — without rebuilding reports from scratch every month.

According to Gartner, 82% of consumers have already noticed AI-generated overviews in search results. The brands that will compete for those consumers’ attention are the ones with the infrastructure to monitor and respond at scale.

A Workflow That Actually Scales: 6 Steps for AI Answer Monitoring

Setting up a sustainable AI visibility monitoring program doesn’t require rebuilding your entire marketing stack. Here’s what a practical enterprise workflow looks like.

Step 1: Define your prompt universe. Start by mapping your customer journey to conversational prompts. Brand-specific queries (“What do users say about [your brand]?”) and unbranded category queries (“Best enterprise AI tracking tools in 2025”) both belong in your baseline set. The target is covering discovery, educational, and transactional intent across each product line.
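A prompt universe like this can be generated from templates rather than written by hand. The Python sketch below is one way to do it; the template wording, intent labels, and function name are all illustrative assumptions, and a real set would be tuned to how your customers actually phrase questions.

```python
# Hypothetical templates, one per intent stage.
TEMPLATES = {
    "discovery": "What are the best {category} tools?",
    "educational": "How do I choose a {category} tool?",
    "transactional": "Compare {brand} and {competitor} pricing for {category}",
}

def build_prompt_universe(brand, competitors, categories):
    """Return (intent, prompt) pairs covering each category and competitor."""
    prompts = []
    for category in categories:
        prompts.append(("discovery", TEMPLATES["discovery"].format(category=category)))
        prompts.append(("educational", TEMPLATES["educational"].format(category=category)))
        for competitor in competitors:
            prompts.append((
                "transactional",
                TEMPLATES["transactional"].format(
                    brand=brand, competitor=competitor, category=category
                ),
            ))
    # One brand-specific query, independent of category.
    prompts.append(("branded", f"What do users say about {brand}?"))
    return prompts

universe = build_prompt_universe("Acme", ["Globex", "Initech"], ["AI tracking"])
```

Keeping the intent label next to each prompt makes it straightforward to report coverage by buyer-journey stage later.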

Step 2: Establish your baseline. Before you optimize, you need to know where you stand. Run your full prompt set across all target platforms and generate an AI Visibility Score, a composite metric that accounts for mention frequency, sentiment, and position rank. This is your reference point for everything that follows.
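The exact formula behind any vendor's composite score is not given here, so the following Python is only a sketch of how such a score could be assembled. The weights, the input scales, and the use of the reciprocal of mean position are all assumptions chosen for illustration.

```python
def visibility_score(mention_rate, sentiment, mean_position, weights=(0.5, 0.3, 0.2)):
    """Combine three metrics into a 0-100 score (illustrative weighting only).

    mention_rate:  share of responses mentioning the brand, 0..1
    sentiment:     average sentiment, -1 (negative) to 1 (positive)
    mean_position: average rank when mentioned (1 is best), or None if never
    """
    w_mention, w_sentiment, w_position = weights
    sentiment_unit = (sentiment + 1) / 2  # rescale -1..1 to 0..1
    position_unit = 0.0 if mean_position is None else 1 / mean_position
    score = (
        w_mention * mention_rate
        + w_sentiment * sentiment_unit
        + w_position * position_unit
    )
    return round(100 * score / sum(weights), 1)

baseline = visibility_score(mention_rate=0.5, sentiment=0.0, mean_position=2.0)
```

Whatever weighting you settle on, fix it before the baseline run so that later movements reflect changes in visibility rather than changes in the formula.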

Step 3: Run a source and gap analysis. Identify which URLs the AI is actually citing when it mentions your brand (or your competitors). If a competitor is being cited more often, trace the source. Often it’s a specific review aggregator, a Reddit discussion, or a third-party comparison post. That source becomes your content or PR target.
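In code, the gap analysis reduces to a set difference. This Python sketch assumes you already have two lists of cited domains, one from responses mentioning your brand and one from responses mentioning a competitor; the function name and domains are placeholders.

```python
from collections import Counter

def citation_gaps(own_citations, competitor_citations):
    """Domains cited for the competitor but never for you, most frequent first."""
    own = set(own_citations)
    gap_counts = Counter(d for d in competitor_citations if d not in own)
    return gap_counts.most_common()

own = ["acme.com", "g2.com"]
rival = ["g2.com", "reddit.com", "reddit.com", "capterra.com"]
targets = citation_gaps(own, rival)
```

The first entries in the result are the sources doing the most work for the competitor, so they are the natural starting point for outreach.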

Step 4: Re-architect content for machine readability. Princeton GEO research found that incorporating quantifiable data points increases citation probability by 37%, adding attributed expert quotes boosts visibility by 30%, and referencing authoritative sources improves inclusion by 40%. These aren’t soft improvements — they’re structural changes to how your content gets picked up in RAG pipelines.

Step 5: Set up automated alerts. Once your baseline is established, configure alerts for material changes: a Sentiment Score drop on a key product, a competitor displacing your brand in a high-value prompt, a new citation source appearing. You want to know about those shifts within hours, not weeks.
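The alert logic itself can be quite small. The Python below compares two snapshots of a single prompt and returns the changes worth flagging. The `Snapshot` fields, the alert labels, and the 0.2 sentiment threshold are assumptions for illustration, and delivery (email, Slack, and so on) is left out.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional

@dataclass
class Snapshot:
    sentiment: float            # -1..1 average sentiment for the prompt
    position: Optional[float]   # rank when mentioned, None if absent
    sources: FrozenSet[str]     # domains cited in the responses

def detect_alerts(previous, current, sentiment_drop=0.2):
    """Return a list of alert labels for material changes between snapshots."""
    alerts = []
    if previous.sentiment - current.sentiment >= sentiment_drop:
        alerts.append("sentiment_drop")
    # Losing position means dropping out entirely or moving to a worse rank.
    if previous.position is not None and (
        current.position is None or current.position > previous.position
    ):
        alerts.append("position_lost")
    new_sources = current.sources - previous.sources
    if new_sources:
        alerts.append("new_sources:" + ",".join(sorted(new_sources)))
    return alerts

yesterday = Snapshot(0.6, 1, frozenset({"g2.com"}))
today = Snapshot(0.3, 2, frozenset({"g2.com", "reddit.com"}))
alerts = detect_alerts(yesterday, today)
```

Because individual responses are noisy, it is probably safer to compare aggregated snapshots than single runs. Otherwise the alerts will fire on ordinary sampling variation.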

Step 6: Report to the business, not just the marketing team. AI visibility data needs to connect to business outcomes. Track AI referral traffic in GA4. Tie Share of Model movement to pipeline impact. Topify generates reports that translate visibility metrics into executive-level KPIs — the kind of data that makes the case for GEO investment at the CMO or VP level.
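If you export referrer data from your analytics tool, a small classifier can separate AI referrals from everything else. The hostname list in this Python sketch is an assumption based on the public web addresses of these assistants. Verify it against the referrers you actually see, since platforms change domains and some AI traffic arrives with no referrer at all.

```python
from urllib.parse import urlparse

# Assumed hostnames; check these against your own referral reports.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
    "copilot.microsoft.com": "Copilot",
}

def classify_referrer(referrer):
    """Return the AI platform name for a referrer URL, or None if not matched."""
    host = urlparse(referrer).netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)

platform = classify_referrer("https://www.perplexity.ai/search?q=x")
```

Grouping sessions and conversions by the returned platform name gives you the AI referral line that the executive report needs.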

Conclusion

AI answer tracking isn’t a new feature of SEO. It’s a parallel discipline with different mechanics, different metrics, and a different competitive landscape. Brands that treat it as an extension of their existing search monitoring will consistently operate with blind spots.

The core framework is straightforward: track Visibility Rate, Sentiment, Position, Source Attribution, and Prompt Coverage — daily, across multiple platforms, at a scale that produces statistically meaningful data. Then close the gaps systematically.

Topify is built for exactly this workflow. The platform covers major AI engines, runs 9,000+ analyses to filter model noise, and surfaces the specific content and citation gaps that explain why your brand is or isn’t appearing in the answers that matter.

Gartner projects that by 2028, 60% of brands will use autonomous AI agents for direct customer interactions. Those agents will recommend brands they can verify. The brands building their AI visibility infrastructure today are the ones that will be in scope when that happens.

The win isn’t the ranking anymore. It’s the citation.
