Your domain authority is solid. Your target keywords rank on page one. You’ve built the content machine everyone says you need.

Then someone types a question into ChatGPT, and five competitors get recommended. Your brand isn’t there. And nothing in your current analytics stack can tell you why, or even that it happened.

That’s not a content problem. It’s a measurement problem. AI answer tracking analytics exists specifically to fill this gap.

What AI Answer Tracking Analytics Actually Measures (And Why It’s Not Your Existing Dashboard)

Traditional SEO analytics tracks where your pages rank in a list. AI answer tracking analytics tracks whether your brand appears inside a synthesized response, what it says about you, and how that compares to competitors.

The distinction matters more than it sounds. Zero-click searches now account for roughly 60% of all US searches as of mid-2025. That means the majority of search interactions never produce a click for anyone. The AI engine resolved the query on its own, and your ranking data recorded nothing useful.

The architectural gap between the two approaches is significant:

| Dimension | Traditional SEO Dashboard | AI Answer Tracking Analytics |
|---|---|---|
| Primary Unit | Keywords and URLs | Prompts and Citations |
| Success Indicator | SERP Ranking (Position 1–10) | Share of Voice and Citation Rate |
| Output Evaluated | Fixed list of blue links | Synthesized text with inline references |
| User Behavior Signal | Click-Through Rate | Brand mention and LLM recommendation |
| Visibility Scope | Owned domain only | Cross-platform (LLMs, Reddit, UGC) |

This also bears directly on a debate many marketing teams are still working through: how SEO differs from AI-driven search. It’s not that SEO stops mattering. It’s that SEO data and AI visibility data measure fundamentally different things, and confusing the two leads to blind spots.

The 5 Core Metrics Every AI Answer Tracking Setup Should Capture

Getting this right starts with understanding what you’re actually measuring. There are five metrics that matter most.

Visibility (Share of Voice): How often your brand appears across a set of relevant prompts, expressed relative to all the brands mentioned in those responses. This is the closest equivalent to “market share” in AI search. It’s the single most important indicator of long-term brand authority in generative search.
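To make the definition concrete, here is a minimal Python sketch. It assumes each AI response has already been parsed into a set of brand names (that extraction step is not shown), and the brand names are hypothetical. It computes two related figures that often sit behind the "visibility" label: the mention rate (the share of prompts in which the brand appears) and share of voice (the brand's mentions as a fraction of all brand mentions).

```python
from collections import Counter


def visibility_metrics(responses: list[set[str]], brand: str) -> dict[str, float]:
    """Mention rate and share of voice for one brand.

    `responses` holds one set of brand names per AI response, assumed to have
    been extracted already by an upstream parsing step.
    """
    if not responses:
        return {"mention_rate": 0.0, "share_of_voice": 0.0}
    # Mention rate: fraction of responses that mention the brand at all.
    mention_rate = sum(brand in names for names in responses) / len(responses)
    # Share of voice: the brand's mentions as a fraction of all brand mentions.
    counts = Counter(name for names in responses for name in names)
    total = sum(counts.values())
    share_of_voice = counts[brand] / total if total else 0.0
    return {"mention_rate": mention_rate, "share_of_voice": share_of_voice}


# Hypothetical parsed responses for four prompts.
responses = [
    {"Acme", "Globex", "Initech"},
    {"Globex", "Initech"},
    {"Acme", "Globex"},
    {"Initech"},
]
print(visibility_metrics(responses, "Acme"))
# {'mention_rate': 0.5, 'share_of_voice': 0.25}
```

In this sample, Acme appears in half the prompts but holds only a quarter of all brand mentions, so it is worth checking which of the two numbers a given tool reports.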

Sentiment Score: Whether the AI describes your brand positively, neutrally, or negatively. A brand can have high visibility but consistently negative framing. You won’t catch that without sentiment tracking.

Position: Where in the AI response your brand appears. Being mentioned fifth in a “top alternatives” list is very different from being the primary recommendation. Most basic trackers don’t distinguish between the two.
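A rough proxy for position is the order in which brands are first mentioned in a response. The sketch below assumes you supply the list of brands to look for. The whole-word, case-insensitive regex is a simplification: it will miss abbreviations and can misfire on brand names that are also common words. The brand names and answer text are hypothetical.

```python
import re
from typing import Optional


def mention_position(response: str, brands: list[str], target: str) -> Optional[int]:
    """1-based rank of `target` by order of first mention, or None if absent."""
    first_seen = {}
    for brand in brands:
        # Whole-word, case-insensitive search for the brand name.
        match = re.search(rf"\b{re.escape(brand)}\b", response, flags=re.IGNORECASE)
        if match:
            first_seen[brand] = match.start()
    if target not in first_seen:
        return None
    # Brands sorted by where they first appear in the response text.
    ordered = sorted(first_seen, key=first_seen.get)
    return ordered.index(target) + 1


BRANDS = ["Acme", "Globex", "Initech"]  # hypothetical competitor set
answer = "For most small teams, Globex is the top pick. Initech and Acme are solid alternatives."
print(mention_position(answer, BRANDS, "Acme"))    # 3
print(mention_position(answer, BRANDS, "Globex"))  # 1
```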

Source / Citation Rate: Which domains the AI is pulling from when it mentions your brand. This tells you what content is actually influencing how AI talks about you, and where the gaps are.
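Citation rate can be approximated by pulling URLs out of each response and counting how many responses cite each domain. This is a minimal sketch under simplifying assumptions: the URL regex is deliberately basic, and real tools may read citations from structured fields in a platform's output rather than from the answer text. The example responses and domains are made up.

```python
import re
from collections import Counter
from urllib.parse import urlparse

# Deliberately simple URL pattern: stops at whitespace, quotes, and closing brackets.
URL_PATTERN = re.compile(r"https?://[^\s)\]>\"']+")


def domain_of(url: str) -> str:
    # Drop trailing sentence punctuation, then normalize the host name.
    host = urlparse(url.rstrip(".,;:")).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def cited_domains(responses: list[str]) -> Counter:
    """Number of responses that cite each domain (each domain counted once per response)."""
    counts = Counter()
    for text in responses:
        counts.update({domain_of(url) for url in URL_PATTERN.findall(text)})
    return counts


# Hypothetical AI responses.
responses = [
    "See https://www.g2.com/products/acme and https://acme.com/pricing.",
    "According to https://reddit.com/r/saas/comments/x, Acme is popular (https://www.g2.com/compare).",
    "No sources cited here.",
]
counts = cited_domains(responses)
# Citation rate per domain: share of responses that cite it at least once.
citation_rate = {domain: n / len(responses) for domain, n in counts.items()}
print(counts.most_common())
```

Counting each domain once per response keeps a single answer that links the same site repeatedly from inflating that site's rate.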

AI Search Volume: The estimated volume of AI-platform queries related to your category, product, or brand. Because AI platforms don’t publish query data, tools estimate this figure. Not Google search volume. These are often different numbers, and the difference tells you where user behavior is already shifting.

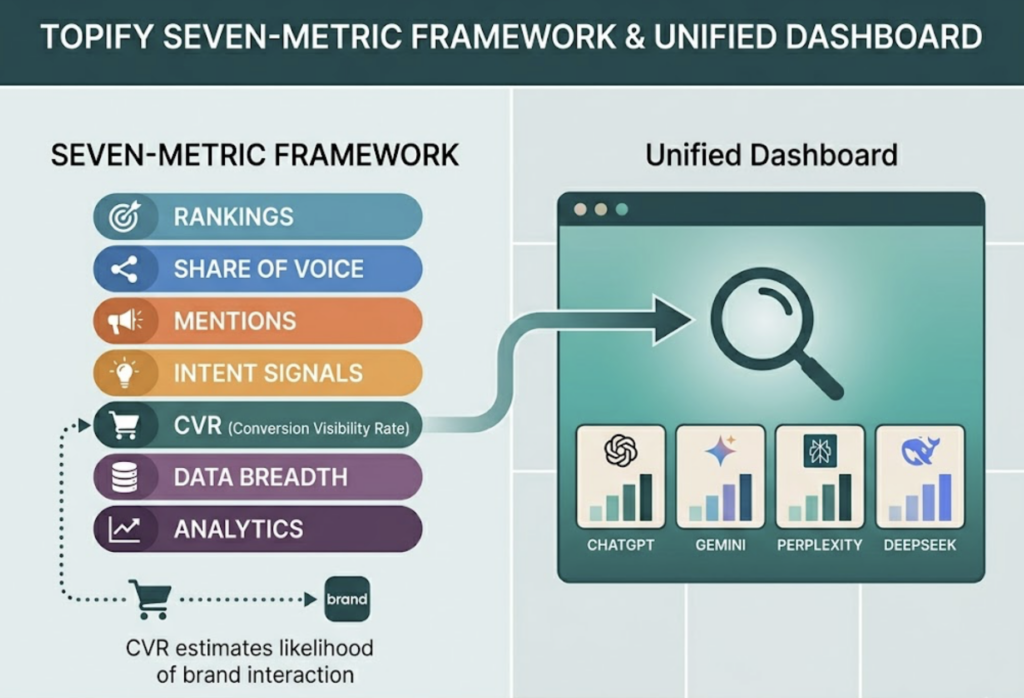

Platforms like Topify extend this further with a seven-metric framework that also includes mentions, intent signals, and conversion visibility rate (CVR), which estimates the likelihood that an AI answer is driving users toward a brand interaction. For teams that need a single dashboard across ChatGPT, Gemini, Perplexity, and DeepSeek, that kind of breadth matters.

How AI Answer Tracking Analytics Actually Works

The technical logic behind AI answer tracking is worth understanding, because it explains why the data looks different from anything you’ve used before.

Most commercial AI engines use a Retrieval-Augmented Generation (RAG) architecture. When a user submits a query, the engine retrieves relevant content chunks from a search index or the live web, evaluates credibility signals, and synthesizes a response that blends that retrieved content with what the model learned during training. Visibility, at this level, is about being the most credible source retrieved for a given context, not about keyword matching.

Tracking this involves three phases. First, query fan-out: a single keyword gets converted into dozens of related conversational prompts, then fired at multiple AI platforms simultaneously. Second, element-level parsing: the unstructured text response is analyzed to identify brand mentions, explicit recommendations, and URL citations. Third, sentiment analysis: an LLM evaluates the tone and accuracy of what was said about the brand.
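The fan-out step can be sketched as expanding one keyword through a set of prompt templates and pairing each resulting prompt with each platform. The templates and platform labels below are hypothetical placeholders. The step that actually sends prompts to each AI platform is not shown, since every provider has its own API and terms.

```python
from itertools import product

# Hypothetical prompt templates; real tools generate far more variations.
TEMPLATES = [
    "What is the best {kw} for a small team?",
    "Compare the top {kw} options.",
    "Which {kw} do experts recommend?",
]
# Hypothetical platform labels; sending the prompts is left to each provider's API.
PLATFORMS = ["chatgpt", "perplexity", "gemini"]


def fan_out(keyword: str, templates=TEMPLATES, platforms=PLATFORMS) -> list[dict[str, str]]:
    """Expand one keyword into (platform, prompt) jobs: every template on every platform."""
    prompts = [template.format(kw=keyword) for template in templates]
    return [{"platform": platform, "prompt": prompt} for platform, prompt in product(platforms, prompts)]


jobs = fan_out("CRM software")
print(len(jobs))  # 9
print(jobs[0])    # {'platform': 'chatgpt', 'prompt': 'What is the best CRM software for a small team?'}
```

Three templates across three platforms already yield nine jobs per keyword, which is why prompt sets grow quickly once fan-out is applied to a full keyword list.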

Here’s the thing: AI responses are non-deterministic. The same prompt submitted twice, from different sessions or geolocations, can produce meaningfully different answers. That’s why manual spot-checking (“I Googled us on ChatGPT this morning”) is not a measurement methodology. It’s a sample size of one from a system that doesn’t repeat itself.
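One way to quantify how little a single spot check tells you is to treat each run of a prompt as a yes/no trial (was the brand mentioned?) and put a confidence interval around the observed mention rate. The sketch below uses the Wilson score interval, a standard interval for binomial proportions. Choosing it here is an assumption for illustration, not a method any particular tracking tool is known to use.

```python
import math


def wilson_interval(mentions: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a brand's mention rate."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = mentions / runs
    denom = 1 + z**2 / runs
    centre = (p + z**2 / (2 * runs)) / denom
    half_width = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# One manual spot check that found the brand vs. 60 scheduled runs with 30 mentions.
print(wilson_interval(1, 1))
print(wilson_interval(30, 60))
```

A single check that finds the brand gives an interval of roughly 21% to 100%, which is consistent with the brand appearing in nearly every answer or in only one in five. Sixty runs with 30 mentions narrows it to roughly 38% to 62%.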

Reliable AI answer tracking analytics requires volume, persistence, and cross-platform coverage. Without those three, you’re not measuring anything you can act on.

Why SEO Rankings and AI Visibility Tell Different Stories About Your Brand

The clearest way to see how SEO and AI-driven search differ is to look at what happened to brands that had strong SEO but no AI visibility strategy.

HubSpot is the most widely documented example. A long-time leader in inbound content, HubSpot saw its organic traffic fall by more than half between 2024 and 2025, with estimated monthly organic visits dropping from roughly 13.5 million to about 6 million. The core cause: a massive library of top-of-funnel informational content, which AI engines now synthesize directly without requiring a click. High rankings, but no click.

The broader trend confirms this. On queries where AI Overviews appear, traditional organic CTR fell from a 1.76% baseline in June 2024 to just 0.61% by September 2025, a relative decline of roughly 65%. CNN and Forbes both reported traffic declines between 27% and 50% from the same dynamic.

On the flip side, the traffic that does come through AI citations is higher quality than traditional organic. AI-referred visitors have been reported to convert at 4.4 times the rate of standard organic traffic; another figure puts their conversion rate at around 14.2% versus a 2.8% baseline, roughly five times higher. They also spend 68% more time on-site.

That’s the real picture: AI search is reducing volume but improving quality. Brands that track AI visibility can capture that quality traffic intentionally. Brands that only watch rankings will see numbers that look fine while revenue quietly erodes.

4 Mistakes That Make Your AI Answer Tracking Analytics Worthless

Most teams make at least one of these. Some make all four.

Tracking only your brand name. If you’re only monitoring when your brand gets mentioned by name, you’re missing every query where a customer was looking for a solution in your category and never heard your name at all. Category-level prompts and competitor-adjacent queries are often where the biggest visibility gaps live.

Single-platform monitoring. ChatGPT’s citation patterns are not the same as Perplexity’s, and neither matches Gemini’s. A tool that only tracks one engine is giving you partial data and presenting it as complete. The Princeton, UPenn, and IIT Delhi GEO research found that citation behavior varies significantly by domain type and platform, which means coverage gaps translate directly into strategy gaps.

Replacing systematic tracking with manual tests. Because AI responses are non-deterministic, a manual check produces a result that may not repeat. Structured AI answer tracking analytics requires running prompts at volume, across platforms, on a consistent schedule, and recording the variance. Spot-checking tells you what happened once, not what typically happens.

Treating visibility as the only number that matters. A brand can appear in 80% of relevant AI responses and still be losing ground if the sentiment is neutral-to-negative and the position is always fifth on the list. Visibility without sentiment and position context is misleading data.

A Practical Checklist for AI Answer Tracking Analytics

This is adapted from the implementation framework used by teams making the transition from traditional SEO dashboards to AI visibility measurement.

Phase 1: Baseline Setup (Month 1)

- Identify 20–30 high-intent prompts that represent how your target audience asks about your category

- Verify that key AI crawlers (GPTBot, PerplexityBot, ClaudeBot) are allowed in your robots.txt

- Run a manual baseline across ChatGPT and Perplexity to establish your current Share of Voice

- Audit your Organization and FAQ schema for machine-readability

- Map “zero-click” queries in Search Console that likely indicate AI summarization
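Two of the Phase 1 checks lend themselves to a quick script. The sketch below uses Python's standard `urllib.robotparser` to test whether each listed crawler may fetch a URL under a given robots.txt, and applies a simple heuristic to Search Console rows: queries with many impressions, a strong average position, and a very low CTR are flagged as possible AI summarization. The thresholds, sample robots.txt, and sample rows are hypothetical, and the row format assumes you have exported query, impressions, clicks, and position. A low CTR alone does not prove an AI answer is responsible.

```python
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ["GPTBot", "PerplexityBot", "ClaudeBot"]


def crawler_access(robots_txt: str, url: str) -> dict[str, bool]:
    """Whether each AI crawler may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, url) for bot in AI_CRAWLERS}


def likely_zero_click(rows, min_impressions=1000, max_ctr=0.01, max_position=5.0):
    """Flag queries with many impressions, a strong position, and very few clicks.

    `rows` are (query, impressions, clicks, average_position) tuples, e.g. from a
    Search Console export. The thresholds are illustrative, not benchmarks.
    """
    flagged = []
    for query, impressions, clicks, position in rows:
        ctr = clicks / impressions if impressions else 0.0
        if impressions >= min_impressions and ctr <= max_ctr and position <= max_position:
            flagged.append(query)
    return flagged


# Hypothetical robots.txt that blocks GPTBot but allows everyone else.
robots = """
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
print(crawler_access(robots, "https://example.com/pricing"))
# {'GPTBot': False, 'PerplexityBot': True, 'ClaudeBot': True}

# Hypothetical Search Console rows.
rows = [
    ("what is a crm", 12000, 60, 2.1),
    ("acme crm pricing", 3000, 450, 1.4),
    ("crm vs spreadsheet", 400, 2, 3.0),
]
print(likely_zero_click(rows))  # ['what is a crm']
```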

Phase 2: Content Optimization (Months 2–3)

- Add credible source citations to your top 10 priority pages (research shows this can improve citation rates by up to 115% for lower-ranked content)

- Implement a BLUF (bottom line up front) structure: put the direct, factual answer in the first 40–60 words of each section

- Add statistics and expert quotes to informational content

- Ensure content is served as static HTML, not JavaScript-rendered, for AI crawler accessibility
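The static-HTML and BLUF items can be spot-checked against the raw HTML a crawler receives before any JavaScript runs. This sketch uses the standard library's `html.parser` to collect text outside `<script>` and `<style>` tags, checks whether a key answer sentence is present, and counts the words in the first paragraph. Treating the first `<p>` as "the answer" and 60 words as the limit are simplifying assumptions, and the page snippets are hypothetical.

```python
from html.parser import HTMLParser


class RawTextExtractor(HTMLParser):
    """Collects text a non-rendering crawler would see, skipping <script>/<style>."""

    def __init__(self):
        super().__init__()
        self.skip_depth = 0    # >0 while inside <script> or <style>
        self.in_paragraph = False
        self.text_parts = []   # all text outside scripts and styles
        self.paragraphs = []   # text of each <p> element, in document order

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self.skip_depth += 1
        elif tag == "p":
            self.in_paragraph = True
            self.paragraphs.append("")

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self.skip_depth:
            self.skip_depth -= 1
        elif tag == "p":
            self.in_paragraph = False

    def handle_data(self, data):
        if self.skip_depth:
            return
        self.text_parts.append(data)
        if self.in_paragraph:
            self.paragraphs[-1] += data


def audit_page(raw_html: str, key_answer: str, max_bluf_words: int = 60) -> dict:
    parser = RawTextExtractor()
    parser.feed(raw_html)
    parser.close()
    # Normalize whitespace so line breaks in the HTML don't break the match.
    text = " ".join(" ".join(parser.text_parts).split()).lower()
    answer = " ".join(key_answer.split()).lower()
    first_words = len(parser.paragraphs[0].split()) if parser.paragraphs else 0
    return {
        "answer_in_static_html": answer in text,
        "first_paragraph_words": first_words,
        "bluf_ok": 0 < first_words <= max_bluf_words,
    }


# Hypothetical pages: one server-rendered, one that injects content with JavaScript.
static_html = (
    "<html><body><h2>What is Acme?</h2>"
    "<p>Acme is a CRM for small teams that starts at $20 per user per month.</p>"
    "<script>trackPageView()</script></body></html>"
)
spa_html = "<html><body><div id='root'></div><script>renderApp('root')</script></body></html>"

print(audit_page(static_html, "Acme is a CRM for small teams"))
print(audit_page(spa_html, "Acme is a CRM for small teams"))
```

The second page delivers its content only through JavaScript, so none of the answer text appears in the raw HTML that a crawler which does not execute scripts would see.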

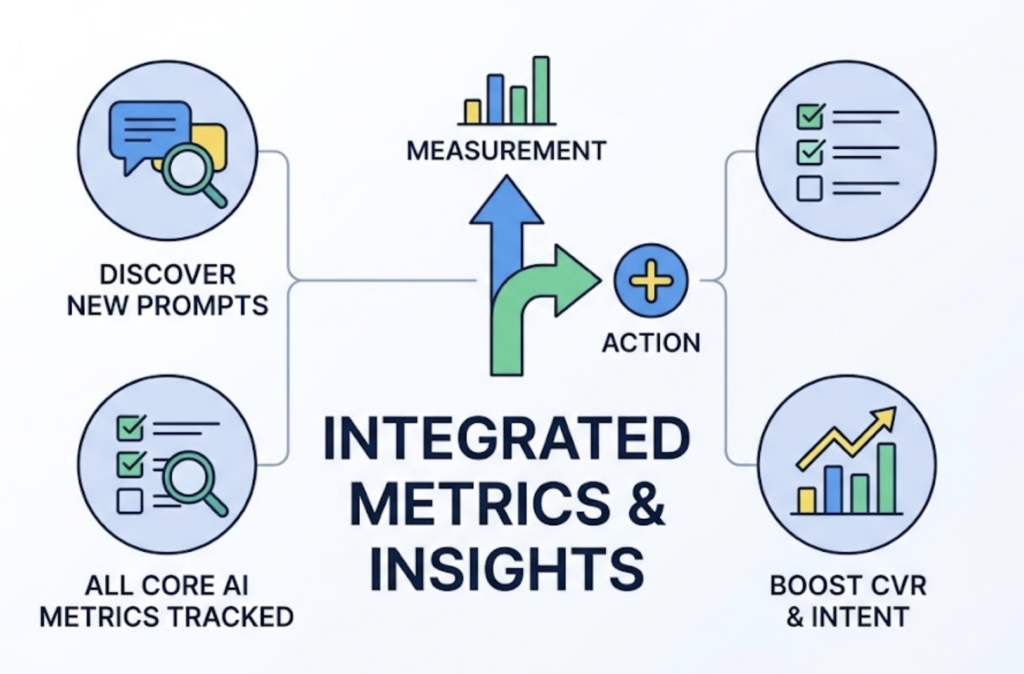

Phase 3: Analytics Integration (Months 3–6)

- Deploy a dedicated AI answer tracking tool for automated daily or weekly reporting

- Connect AI visibility data to GA4 to correlate citation changes with revenue impact

- Set up competitor benchmarking on a monthly cadence

- Configure alerts for incorrect brand descriptions or outdated pricing in AI responses
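Two Phase 3 tasks can be prototyped in a few lines: tagging sessions whose referrer is an AI assistant, so they can be reported separately from organic traffic (for example by mirroring the same hostname list in a GA4 custom channel group, or by joining exported session data), and flagging dollar amounts in an AI answer that match no current list price. The referrer hostnames are assumptions to check against the referrers that actually appear in your reports, and the price regex only handles simple amounts like `$99` or `$19.50`. The example answer and prices are hypothetical.

```python
import re
from typing import Optional
from urllib.parse import urlparse

# Assumed AI-assistant referrer hosts; confirm against the referrers in your own reports.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}

# Simple dollar amounts such as $99 or $19.50 (no thousands separators).
PRICE_PATTERN = re.compile(r"\$(\d+(?:\.\d{2})?)")


def classify_referrer(referrer_url: str) -> Optional[str]:
    """Return the AI assistant name for a referrer URL, or None for other traffic."""
    host = urlparse(referrer_url).netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRERS.get(host)


def stale_prices(answer: str, current_prices: set[float]) -> list[float]:
    """Dollar amounts in an AI answer that match none of the current list prices."""
    quoted = [float(amount) for amount in PRICE_PATTERN.findall(answer)]
    return [price for price in quoted if price not in current_prices]


print(classify_referrer("https://www.perplexity.ai/search?q=crm"))  # Perplexity
print(classify_referrer("https://www.google.com/"))                 # None
print(stale_prices("Acme's Basic plan costs $79/month and Pro is $199/month.", {99.0, 199.0}))  # [79.0]
```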

The checklist for AI answer tracking analytics is deliberately sequential. Phase 1 without Phase 3 gives you a one-time audit. Phase 3 without Phase 1 gives you data without a baseline to compare against. Both matter.

Tools That Actually Do AI Answer Tracking Analytics

The market for these tools is still maturing. Here’s what to look for and how the leading options compare.

Three criteria separate useful tools from ones that produce dashboards full of noise: platform coverage (how many AI engines, not just ChatGPT), metric depth (visibility alone is insufficient), and update frequency (stale data in a non-deterministic environment is worse than no data).

| Tool | Platform Coverage | Key Differentiator | Target User |
|---|---|---|---|
| Topify | ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, Qwen + more | 7-metric framework + prompt discovery + one-click optimization execution | Marketing teams and agencies |
| Profound | ChatGPT, Perplexity, AI Overviews | Enterprise security, SOC 2 compliance | Fortune 500 and regulated industries |
| LLMrefs | 11+ engines | Widest engine coverage; keyword-focused approach | SMBs and startups |
| Rankscale AI | 8 engines | Pay-as-you-go credit model | Budget-conscious agencies |
| Peec AI | ChatGPT, Perplexity | Actual prompt/response pair recording | Competitive benchmarking |
| Ahrefs Brand Radar | Search and web | Add-on to existing SEO toolset | Traditional SEOs expanding into AI |

For teams that need both measurement and action in the same platform, Topify tends to be the strongest fit. It covers the major AI platforms, tracks all five core metrics discussed above plus intent and CVR, and includes a prompt discovery feature that surfaces high-volume AI queries you may not be monitoring yet. The Basic plan starts at $99/month with a 30-day trial, covering 100 prompts and 9,000 AI answer analyses. Pro scales to 250 prompts and 22,500 analyses at $199/month.

For enterprise teams with compliance requirements, the tradeoff between platform coverage and security certification is a real consideration. For teams just starting out, beginning with 20–30 well-chosen prompts on a mid-tier plan is typically more useful than broad coverage with shallow metrics.

Conclusion

Your SEO dashboard was built to answer questions that search engines used to answer with a list of links. That mechanism is being replaced, steadily and measurably, by synthesized responses that your current tools cannot see.

AI answer tracking analytics isn’t a trend metric or a bonus KPI. It’s the baseline measurement layer for brand visibility in AI-driven search. Without it, you’re optimizing against data that describes a world that’s already changed.

The practical starting point is smaller than most teams expect: 20–30 prompts, a baseline Share of Voice measurement across two or three platforms, and a tool that can run those prompts consistently. That first report tells you more about your actual competitive position in AI search than months of rank tracking data.

FAQ

Q: What is AI answer tracking analytics?

A: AI answer tracking analytics is the practice of measuring how often a brand appears in AI-generated responses (from platforms like ChatGPT, Perplexity, and Gemini), what those responses say about the brand, where the brand is positioned relative to competitors, and which sources the AI is citing. It fills the measurement gap left by traditional SEO dashboards, which track rankings but not AI-synthesized visibility.

Q: How is AI answer tracking analytics different from traditional SEO analytics?

A: Traditional SEO analytics measures where your pages rank in a list of blue links. AI answer tracking analytics measures whether your brand is included in a synthesized response, the sentiment of that inclusion, and the citation sources driving it. The two can diverge significantly: a brand can hold a first-page ranking while being entirely absent from AI answers in the same category.

Q: How do I measure AI answer tracking analytics for my brand?

A: Start by defining 20–30 prompts that reflect how your target audience asks questions in your category. Run those prompts across at least two major AI platforms and record whether your brand is mentioned, at what position, and with what sentiment. For ongoing measurement, a dedicated tool like Topify automates this at scale and tracks changes over time.

Q: What’s the typical pricing for AI answer tracking analytics tools?

A: Pricing varies by platform coverage and prompt volume. Entry-level plans from specialized tools typically start around $49–$99/month for a limited prompt set. Topify’s Basic plan is $99/month and includes 100 prompts and 9,000 AI answer analyses with a 30-day trial. Enterprise-grade platforms with compliance features start considerably higher, often from $499/month.