G2 doesn’t lie. Unlike vendor whitepapers or conference keynotes, the reviews on G2 come from people who actually paid for the software, ran into its limits, and had to explain the results to a CMO.

That’s what makes G2 data one of the most honest signals we have right now for understanding where Answer Engine Optimization actually stands in 2026.

And the picture is complicated.

AEO Demand Exploded. The Terminology Didn’t Keep Up.

Since G2 officially created the AEO software category in March 2025, the growth has been hard to ignore. Demand in the category grew over 2,000% in less than a year, and G2’s Winter 2026 report introduced the first-ever AEO Grid, featuring nine products competing for the same buyers.

But here’s the thing: a lot of those buyers still aren’t sure what they’re buying.

In G2 search data, “AEO” regularly gets conflated with GEO (Generative Engine Optimization), AI Search Optimization, and even SXO. These terms overlap in meaningful ways, but they’re not the same thing. AEO tends to refer to optimization at the extraction layer, getting your content pulled into direct answers. GEO, as framed in research from Princeton and Georgia Tech, is a broader strategy around building semantic authority and citation density so AI systems treat your brand as a trusted source.

The practical consequence: teams that can’t fluently navigate these distinctions execute AEO changes 2.3x slower than those who can, according to audits of 40+ B2B SaaS companies tracked in early 2026.

For vendors, this is a land-grab moment. Whoever defines the vocabulary wins the market. Profound, Yext, and Conductor are already publishing benchmark reports and KPI frameworks to establish that kind of definitional authority.

The 3 Complaints That Keep Showing Up in Low-Star Reviews

Pull the 1-to-3-star reviews on G2 for AEO tools and a pattern emerges fast. Three issues come up so consistently they’ve become the defining failure modes of first-generation products.

Data that’s always a week behind.

The retrieval indexes behind AI answer engines refresh constantly, sometimes by the hour. But many monitoring tools still run on SEO-era weekly cycles. When a brand corrects a piece of content that’s causing AI hallucinations, its visibility dashboard might not reflect that change for days.

That’s not a minor inconvenience. In a landscape where a competitor can close the gap on you overnight, a week-old snapshot is practically useless for tactical decisions. High-rated tools in this category are the ones offering hourly updates or real-time browser-based capture.

Dashboards that tell you what happened, not what to do.

G2 reviewers phrase this one differently every time, but they mean the same thing: the tool showed them that their citation rate had dropped 15%, then left them alone with a CSV file.

The gap between “we detected a problem” and “here’s how to fix it” is where most AEO tools fall apart. Users want prioritized action, not more data layers. One reviewer put it plainly: if a tool can’t tell you which H2 tag to rewrite or which third-party domain to target for coverage, it’s a monitoring tool pretending to be a strategy tool.

“Full platform coverage” that turns out to mean ChatGPT.

Several tools marketed as cross-platform have been called out in G2 reviews for thin coverage outside of ChatGPT. Perplexity weights real-time news sources differently from ChatGPT, which leans more heavily on its pre-training. Gemini has its own citation logic. DeepSeek and Claude behave differently still.

A tool that optimizes for one engine and exports the results as “AI visibility” is giving you an incomplete picture, and sophisticated buyers on G2 have figured that out.

What Every High-Rated AEO Tool Has in Common

Across the 4.5-star-and-above reviews on G2, the pattern isn’t feature count. It’s three shared commitments.

Real browser capture, not modeled estimates.

Top tools like Profound and Topify don’t rely on statistical modeling to infer visibility. They use distributed, large-scale browser rendering to capture actual AI responses across geographies and conversation contexts. This matters because AI answers are non-deterministic: the same query returns different answers for different users. Modeled estimates smooth over that variance. Real capture preserves it.
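To make the variance point concrete, here is a minimal Python sketch. It assumes you have already captured several responses to the same prompt; the `captured` list, the region codes, and the brand names are hypothetical sample data, not output from Profound, Topify, or any other real tool. It shows how a single blended figure can hide the spread between regions.

```python
def mention_rate(responses, brand):
    """Share of captured responses that mention the brand (case-insensitive)."""
    if not responses:
        return 0.0
    hits = sum(1 for text in responses if brand.lower() in text.lower())
    return hits / len(responses)


def rate_by_group(captures, brand):
    """Mention rate per group, for example per region."""
    groups = {}
    for group, text in captures:
        groups.setdefault(group, []).append(text)
    return {g: mention_rate(texts, brand) for g, texts in groups.items()}


# Hypothetical captures: (region, response text) for the same prompt.
captured = [
    ("us", "Acme and Globex are popular choices."),
    ("us", "Many teams pick Acme."),
    ("de", "Globex is the usual recommendation."),
    ("de", "Consider Globex or Initech."),
]

overall = mention_rate([text for _, text in captured], "Acme")
per_region = rate_by_group(captured, "Acme")
print(overall)     # 0.5
print(per_region)  # {'us': 1.0, 'de': 0.0}
```

The blended 50% figure looks moderate, while the per-region view shows the brand is always mentioned in one market and never in the other. That is the kind of variance a single modeled estimate would flatten.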

Closed-loop execution.

Products like Quattr and Topify earn high marks because they close the loop between insight and action. When the system detects that a competitor is getting cited more often on a specific prompt, it doesn’t just flag it. It generates structured content recommendations and, in some cases, pushes updates directly to the user’s CMS.

That one-click execution model is solving a real organizational problem: marketing teams don’t have the bandwidth to manually respond to AI ranking shifts that happen multiple times per week.

Metrics that connect to revenue, not just rankings.

The tools getting the best reviews in 2026 have moved beyond citation rate as the primary KPI. They’re surfacing indicators like sentiment polarity (is the AI describing your brand as “expensive and unreliable” or “efficient and trusted”?) and conversion-intent signals that tie AI visibility to actual business outcomes.

| Capability | What high-rated tools do | What legacy SEO tools miss |
|---|---|---|
| Citation tracking | Pinpoints source URLs inside AI responses | Shows search result page rankings only |
| Sentiment analysis | Detects whether AI describes your brand positively or negatively | Records brand mention presence only |
| Source mapping | Reveals how Reddit, G2, and third-party media contribute to AI citations | Focuses on owned domain authority only |
| Hallucination detection | Flags false statements AI generates about your brand | Can’t assess content accuracy |

The Gap CMOs Feel but Can’t Always Name

Gartner projected a 25% decline in traditional search volume by 2026. Semrush data shows 93% of AI searches end with zero clicks. These numbers have made legacy traffic metrics almost meaningless for justifying AEO spend.

And yet a lot of AEO tools are still reporting estimated visit counts as their headline metric.

G2 reviewers in 2026 are pushing back on this hard. What CMOs actually want is visibility into brand mention weight and intent share, metrics that reflect influence over AI-driven decisions rather than clicks that no longer happen. What they’re getting from many tools is another dashboard with more charts.

The deeper issue is decision fatigue. Marketing teams in 2026 already manage data from 15+ tools on average. An AEO tool that adds more graphs without adding prioritization, “fix this first, it’ll move the needle by X%,” gets abandoned fast. The reviews make this clear.

What’s gaining traction is a different product category entirely: diagnostic tools, not monitoring tools. The distinction matters. A monitoring tool tells you what’s happening. A diagnostic tool tells you why and what to do about it.

Where Topify Fits in the G2 AEO Picture

Topify has built its product around the specific failure modes that G2 reviews keep surfacing.

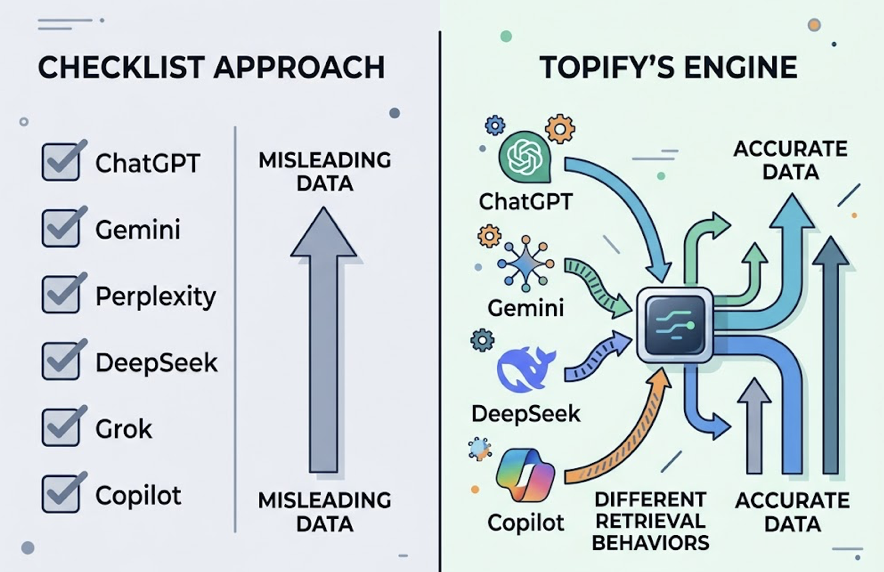

On the coverage problem: Topify tracks across ChatGPT, Gemini, Perplexity, DeepSeek, Grok, and Copilot. That’s not a checklist feature. Each platform has different retrieval behavior, and treating them as equivalent produces misleading data. Topify’s engine accounts for those differences at the data layer.

On the actionability problem: Topify’s AI agent doesn’t stop at detection. When it identifies a visibility gap or a competitor gaining ground on a specific prompt, it generates an action plan and can deploy it with a single click. No manual CSV export, no internal content request queue.

On the revenue gap: Topify introduced the Conversion Visibility Rate (CVR), a metric that estimates the probability of a specific AI response driving a user toward brand interaction, based on query type, placement position, and sentiment scoring. It’s the closest thing the industry currently has to a conversion metric for zero-click AI discovery.

Topify also addresses the source coverage problem directly. AI models are 6.5x more likely to cite content from third-party sites like Reddit, G2, and specialist media than from brand websites. If your tool only monitors your own domain, you’re missing roughly 85% of where your AI visibility is actually built.

| Topify capability | G2 pain point it solves |
|---|---|
| CVR prediction model | Can’t prove AEO’s business value |
| Source analysis and gap detection | Knows ranking dropped but not why |
| Automated action layer | Stuck in the actionability gap |
| Rolling average scoring | Data distorted by query variance |

Topify’s Basic plan starts at $99/month, which covers 100 prompts and 9,000 AI answer analyses across four projects. That’s a meaningful entry point for mid-sized teams that don’t want enterprise pricing before they’ve validated the channel.

3 Questions to Ask Any AEO Tool Before You Sign

Based on what G2 reviewers collectively surface, these three questions cut through the marketing language faster than any feature comparison.

Is the data from real-time browser rendering or cached API responses?

Many tools use cached AI responses to reduce costs. In 2026, when AI answers can shift from hour to hour, cached data means you’re always making decisions on yesterday’s reality. Ask the vendor directly: can they demonstrate distributed, multi-region, live browser capture?

Can it tell the difference between being mentioned and being recommended?

AI can mention your brand in a negative context, “Product X is expensive and prone to errors, but it’s an option,” and that mention counts as a citation in tools that don’t have sentiment analysis. You want a tool with Answer Placement Scoring and sentiment polarity detection, not just citation counting.
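The difference between the two counts can be sketched in a few lines of Python. This is an illustration only: it assumes each captured answer already carries a sentiment label from some upstream classifier (the `sentiment` field and the sample answers are hypothetical), and it is not how any particular vendor computes its scores.

```python
from dataclasses import dataclass


@dataclass
class Capture:
    text: str
    # Assumed label from an upstream classifier: "positive", "neutral", or "negative".
    sentiment: str


def citation_and_recommendation_rates(captures, brand):
    """Return (share of answers mentioning the brand, share mentioning it positively)."""
    if not captures:
        return 0.0, 0.0
    mentions = [c for c in captures if brand.lower() in c.text.lower()]
    recommended = [c for c in mentions if c.sentiment == "positive"]
    total = len(captures)
    return len(mentions) / total, len(recommended) / total


# Hypothetical captured answers for one prompt.
captures = [
    Capture("Product X is expensive and prone to errors, but it's an option.", "negative"),
    Capture("Product X is efficient and trusted by most teams.", "positive"),
    Capture("Most reviewers suggest Product Y.", "neutral"),
    Capture("Product X works, though setup is slow.", "neutral"),
]

cited, recommended = citation_and_recommendation_rates(captures, "Product X")
print(cited, recommended)  # 0.75 0.25
```

A tool that reports only the first number would show a 75% citation rate here, even though the brand is actually recommended in just one answer out of four.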

Does it track third-party source influence, not just your own site?

If the tool only analyzes your owned domain, it’s ignoring the majority of where AI citations actually come from. You need visibility into which external domains are shaping how AI describes your brand, so you can direct your digital PR resources effectively.
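As a rough sketch of what that visibility looks like in practice, the Python below tallies which domains appear among the sources cited in captured AI answers and computes the third-party share. The URLs and the owned domain `example.com` are hypothetical placeholders; a real pipeline would feed in URLs extracted from actual captures.

```python
from collections import Counter
from urllib.parse import urlparse


def source_breakdown(cited_urls, owned_domain):
    """Count citations per domain and return (counts, third-party share)."""
    counts = Counter()
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]
        counts[host] += 1
    total = sum(counts.values())
    # Treat the owned domain and any of its subdomains as "owned".
    owned = sum(
        n for host, n in counts.items()
        if host == owned_domain or host.endswith("." + owned_domain)
    )
    third_party_share = (total - owned) / total if total else 0.0
    return counts, third_party_share


# Hypothetical source URLs pulled from captured AI answers.
cited_urls = [
    "https://www.reddit.com/r/saas/comments/abc",
    "https://www.g2.com/products/example/reviews",
    "https://example.com/pricing",
    "https://www.reddit.com/r/marketing/comments/def",
]

counts, share = source_breakdown(cited_urls, "example.com")
print(counts.most_common(1))  # [('reddit.com', 2)]
print(share)                  # 0.75
```

Sorting the counts this way gives a simple priority list of external domains to target with digital PR.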

Conclusion

The G2 data from 2026 tells a consistent story: AEO has grown faster than the tools built to support it.

The 2,000% demand spike is real. So is the gap between what CMOs need and what most platforms deliver. The first generation of AEO tools was built to detect. The next generation is being built to act.

That gap, between visibility as a data exercise and visibility as an operational capability, is where the competitive advantage in this market will be won or lost. Brands that close it now, with platforms built for diagnosis and execution rather than just monitoring, will have a structural advantage that compounds as AI search continues to displace traditional discovery.

The G2 reviews don’t lie. They’re just telling you something most vendor decks won’t.

FAQ

What’s the difference between AEO and SEO in 2026?

SEO gets your brand into ranked link lists that users click through. AEO gets your brand synthesized directly into AI answers, often without any click happening at all. In a zero-click environment, AEO is how you influence decisions before a user ever visits your site.

Are G2 star ratings a reliable way to judge AEO tools?

Aggregate scores include noise from factors unrelated to core capabilities, such as response time and onboarding support. The more reliable signal is buried in the 1-to-3-star reviews: specifically, comments about data accuracy and platform coverage. Those two factors predict whether a tool will still be useful six months after you buy it.

What should a strong AEO tool stack include in 2026?

At minimum: real-time cross-platform monitoring, sentiment analysis, citation source mapping, and a clear action layer that translates insights into content changes. Platforms like Topify that unify monitoring and execution into one workflow, while offering business-level metrics like CVR, represent the current standard for teams serious about making AEO a measurable growth channel.