Real user feedback reveals the gaps most teams overlook. Here’s how to close them.

You’ve published the articles. You’ve added FAQ sections. You’ve watched the analytics dashboard for weeks.

And yet, when someone asks ChatGPT or Perplexity for a recommendation in your category, your brand doesn’t show up. Your competitor does.

This isn’t a content quality problem. An analysis of 200+ G2 reviews on AEO and AI visibility tools, combined with 2026 citation research, points to something more structural: most AEO strategies fail not because the content is bad, but because the execution model is built on the wrong assumptions.

Here are the patterns that keep appearing, and what to do about each one.

Most Teams Are Running SEO Plays in an AEO Game

The single most common failure mode: treating AEO as an SEO extension.

It’s an understandable mistake. Both disciplines involve search, content, and rankings. But the mechanics are fundamentally different. SEO is a page-ranking discipline. AEO is a passage-citation discipline.

Traditional SEO rewards keyword density, backlink authority, and domain trust. AI answer engines, on the other hand, use large language models that parse content through entity recognition and semantic relationships. A piece of content optimized for the phrase “best project management software” might rank well on Google and still get zero citations from ChatGPT.

The reason: LLMs aren’t looking for the most popular page. They’re looking for the most extractable passage. That’s a different problem entirely.

G2 reviewers describe falling into what researchers call the “content sameness” trap. By chasing high-volume keywords, teams produce generic content that lacks the unique data points or specific expert perspective required for an LLM to select it during synthesis. The content exists in the training data. It just never makes it to the foreground.

Repurposed Content Doesn’t Pass the Extraction Test

A specific variant of the SEO mindset problem: the “AEO-ify the archive” approach.

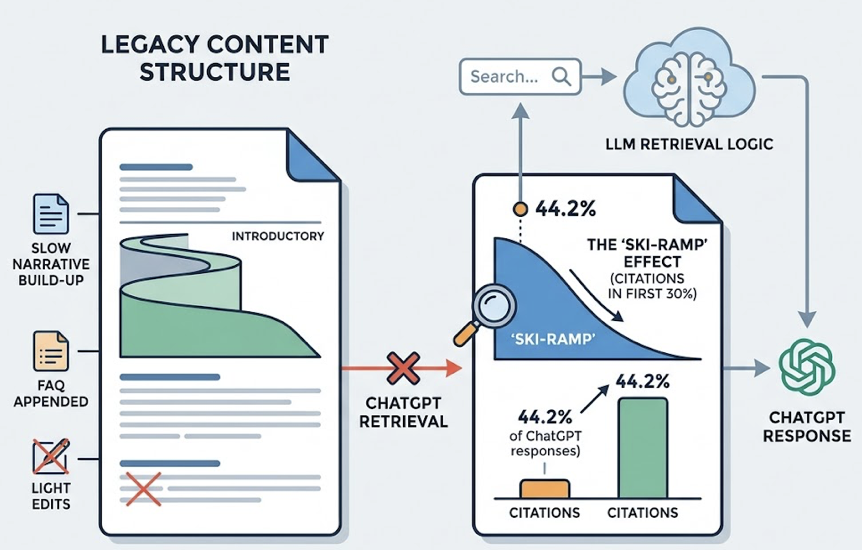

Many teams try to revive legacy blog posts by appending FAQ sections or lightly editing the intro. It rarely works. Empirical data from 2026 citation research shows that 44.2% of citations in ChatGPT responses are pulled from the first 30% of the content, a pattern researchers call the “ski-ramp” effect. Legacy content, designed with slow narrative build-ups and long introductions, is structurally incompatible with this retrieval logic.

LLMs favor what researchers call “discrete knowledge packets”: standalone passages that can be understood in isolation, without surrounding context. Old-format content, written for human narrative flow, fails this test. The machine retriever simply moves on.

The fix isn’t editing. It’s restructuring. Lead with the direct answer. Follow with specific facts, entities, and verifiable claims. Leave nothing that requires the reader to have read the paragraph before.

| Content Format | Retrieval Logic | AEO Performance |
|---|---|---|
| Traditional narrative blog | Reads well, extracts poorly | Low citation rate |
| FAQ-appended legacy post | Partial improvement | Inconsistent |
| Inverted pyramid, entity-dense | Built for extraction | High citation rate |

You’re Measuring the Wrong Things

If your primary AEO success metric is Google rankings or organic traffic, you’re flying blind.

A brand can rank in the top three positions on a SERP and still be completely absent from the AI answer that 76% of users now prefer for complex queries. Google AI Overviews show a 76.1% correlation with top-10 organic rankings, but other platforms such as Claude and ChatGPT frequently bypass traditional rankings entirely, pulling directly from brand sites or “kingmaker” domains like Reddit and G2.

That correlation gap is where most strategies quietly fail.

G2 reviewers who caught this early made a metrics shift that changed how their teams operated. Instead of tracking clicks and rankings, they moved to a framework built around seven AEO-specific KPIs:

- Citation Rate: How often is your brand cited for target prompts?

- AI Share of Voice: What percentage of category mentions does your brand own vs. competitors?

- Answer Placement Score: Where in the AI response does your brand appear?

- Sentiment Polarity: How does the AI frame your brand?

- Feature Association: Does the AI understand your product positioning?

- Source Citation Rate: Which domains are driving your visibility?

- CVR (Conversion Visibility Rate): Which mentions are most likely to convert?
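To make the first two KPIs concrete, here is a minimal Python sketch of how Citation Rate and AI Share of Voice could be computed from logged AI answers. The record layout, the brand names, and the function names are all hypothetical; this is one plausible way to define the metrics, not a standard formula.

```python
from collections import Counter

# Hypothetical sample: one record per tracked prompt and the AI answer it produced.
# "mentioned" lists brands named in the answer; "cited" lists brands whose
# domains were linked as sources.
observations = [
    {"prompt": "best crm for startups", "mentioned": ["Acme", "Globex"], "cited": ["Globex"]},
    {"prompt": "crm with email automation", "mentioned": ["Acme"], "cited": ["Acme"]},
    {"prompt": "affordable crm tools", "mentioned": ["Globex", "Initech"], "cited": []},
    {"prompt": "crm for small agencies", "mentioned": ["Acme", "Initech"], "cited": ["Acme"]},
]

def citation_rate(observations, brand):
    """Fraction of tracked prompts where the brand is cited as a source."""
    if not observations:
        return 0.0
    hits = sum(1 for o in observations if brand in o["cited"])
    return hits / len(observations)

def share_of_voice(observations):
    """Each brand's share of all brand mentions across the tracked prompts."""
    counts = Counter(b for o in observations for b in o["mentioned"])
    total = sum(counts.values())
    return {b: n / total for b, n in counts.items()} if total else {}

print(citation_rate(observations, "Acme"))
print(share_of_voice(observations))
```

Keeping mentions and citations in separate fields matters here, because the two metrics answer different questions: whether the AI names you, and whether it links to you.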

Teams that adopted these metrics earlier reported significantly higher executive buy-in, because they could demonstrate “room presence” even when traditional traffic was declining. The shift from “how many clicks did we get” to “are we part of the conversation” is the marker of a mature AEO program.

“We Published, But AI Never Cited Us”

This is the most common complaint in the G2 dataset. Dozens of articles. Strong content. Still no citations.

The underlying cause is a structural mismatch between ranking logic and citation logic. Ranking is built on popularity and backlink authority. Citation is built on extractability and verifiability.

AI models using retrieval-augmented generation (RAG) execute a two-step process: find the relevant chunk, then synthesize the answer. If a passage can’t stand alone as a coherent, factual unit, it gets skipped. Research from Stanford’s Human-Centered AI Institute found that properly cited content is 3.4 times more likely to appear in AI summaries.

There’s also a language problem. Content that leans on hedging phrases such as “we believe,” “typically,” or “it might” gets deprioritized. AI systems favor definitive language and verifiable statistics. Winning content in 2026 averages an entity density of 20.6%, meaning roughly one unique entity or factual claim every five words. Most narrative-style blog posts don’t come close.

The practical fix is to audit your content against a simple test: can this paragraph be read and understood in complete isolation? If it can’t, a retriever won’t use it.
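A rough version of that audit can be automated. The sketch below flags the hedging phrases named above and applies one assumed heuristic for isolation: a paragraph that opens with a back-reference ("this", "they", "however") probably depends on the paragraph before it. The word lists and the function name are illustrative assumptions, and a heuristic like this will miss cases a human editor would catch.

```python
import re

# Hedging phrases named in the article; extend for your own style guide.
HEDGES = ["we believe", "typically", "it might"]

# Assumed heuristic: openers that usually point back to earlier text.
BACK_REFERENCES = ("this ", "that ", "these ", "those ", "it ", "they ",
                   "as mentioned", "however")

def audit_paragraph(text):
    """Return the hedges found and whether the paragraph opens with a back-reference."""
    lowered = text.lower().strip()
    hedges = [h for h in HEDGES
              if re.search(r"\b" + re.escape(h) + r"\b", lowered)]
    return {
        "hedges": hedges,
        "opens_with_back_reference": lowered.startswith(BACK_REFERENCES),
    }

print(audit_paragraph("This typically works well for most teams."))
print(audit_paragraph("Answer Engine Optimization structures content for citation."))
```

Paragraphs that come back with an empty hedge list and no back-reference are not guaranteed to be extractable, but the flagged ones are a reasonable place to start rewriting.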

You Don’t Know Who AI Is Recommending Instead of You

In traditional search, you can see all ten competitors on page one. In an AI response, you might only see one or two sources cited. That compression creates a blind spot most brands discover too late.

AI models are 6.5 times more likely to cite a brand through a third-party source, such as a G2 review, a Reddit thread, or an industry study, than through the brand’s own website. This is because AI systems prioritize consensus and human validation over brand self-reporting.

Platform citation behavior differs significantly:

| AI Platform | Primary Citation Sources | Key Insight |
|---|---|---|
| ChatGPT (GPT-5.4) | Brand sites (56%) + Kingmaker domains (44%) | Uses brand data, validates via community |
| Perplexity | Reddit (46.7%), aggregators, news | Prioritizes real-time human discussion |
| Claude | Expert-level technical docs | Favors depth and factual accuracy |
| Google AI Overviews | Top-ranking organic pages | Rewards traditional SEO foundations |

G2 reviewers consistently report the same pattern: they find out a competitor is dominating AI recommendations not through their own monitoring, but through a client complaint or an accidental discovery. By that point, the AI platform has already developed what researchers call “source loyalty,” a tendency to repeatedly cite the same verified domains. Breaking in becomes significantly harder.

The brands that stay ahead are monitoring competitor citation patterns continuously, not quarterly.

AEO Budget Gets Cut Because No One Can Explain the ROI

The final structural failure isn’t about content or measurement. It’s about defensibility.

AEO drives visibility in zero-click environments. There’s no referral click to attribute. No last-touch conversion to show the CFO. G2 reviews of AEO tracking platforms cluster around this exact pain: the work is real, the impact is real, but the reporting model makes it look like nothing happened.

The teams that protect their AEO budgets make one key shift: they move to pipeline math.

Here’s an example from a B2B SaaS case in the research data. Monthly AEO investment: $10,000. Tracked AI-referred demo requests: 12 per month. Influenced demos via branded search lift: 8 per month. Total attributed demos: 20. Closed deal value: $150,000 per month. Calculated ROI: 1,400%.
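The arithmetic behind that figure is short enough to check by hand or in a few lines of Python. The sketch below uses only the numbers from the example and assumes, as the example does, that the full $150,000 in closed value is attributed to the 20 demos. The function name is illustrative.

```python
def roi_percent(monthly_investment, monthly_closed_value):
    """ROI as a percentage: (return - cost) / cost * 100."""
    return (monthly_closed_value - monthly_investment) / monthly_investment * 100

tracked_demos = 12     # AI-referred demo requests per month
influenced_demos = 8   # demos credited to branded search lift
total_demos = tracked_demos + influenced_demos

print(total_demos)                    # 20
print(roi_percent(10_000, 150_000))   # 1400.0
```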

The mechanism is “fractional attribution.” Analysts recommend assigning 50-70% credit to AI impressions for any lift in branded search volume that occurs after a brand begins appearing in AI answers. This methodology surfaces what traditional analytics tools can’t see: the dark funnel influence that starts in an AI response and ends in a branded search three days later.
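As a minimal sketch of fractional attribution, the function below credits 50-70% of a branded search lift to AI impressions and returns the result as a range. The credit band comes from the recommendation above; the baseline and current search volumes are hypothetical, and the function name is an assumption for illustration.

```python
def ai_attributed_lift(baseline, current, credit_low=0.5, credit_high=0.7):
    """Return the (low, high) portion of a branded-search lift credited to AI impressions.

    baseline: branded search volume before the brand appeared in AI answers
    current:  branded search volume afterwards
    """
    lift = max(current - baseline, 0)  # a decline earns no credit
    return lift * credit_low, lift * credit_high

# Hypothetical volumes: 1,000 branded searches before, 1,200 after.
low, high = ai_attributed_lift(baseline=1_000, current=1_200)
print(f"AI-attributed branded searches: {low:.0f} to {high:.0f}")
```

Reporting a range rather than a single number keeps the estimate honest about how uncertain the credit split is.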

That reporting model is what keeps AEO on the budget sheet.

How to Close These Gaps Before Your Competitors Do

Most of these failures share a common root: AEO is being run without a measurement infrastructure built for it.

Start with a baseline. Before publishing another piece of content, identify the specific high-intent prompts where your brand should appear but doesn’t. That gap list is your priority queue. Without it, you’re optimizing in the dark.
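One way to build that gap list, assuming you already log which brands each AI answer cites, is sketched below. The record layout and brand names are hypothetical, and the rule used (a competitor is cited, your brand is not) is one reasonable definition of a gap rather than an established one.

```python
# Hypothetical log: the brands cited as sources for each tracked prompt.
observations = [
    {"prompt": "best crm for startups", "cited": ["Globex"]},
    {"prompt": "crm with email automation", "cited": ["Acme"]},
    {"prompt": "affordable crm tools", "cited": []},
    {"prompt": "crm for small agencies", "cited": ["Globex", "Initech"]},
]

def gap_list(observations, brand):
    """Prompts where at least one other brand is cited but `brand` is not."""
    return [o["prompt"] for o in observations
            if o["cited"] and brand not in o["cited"]]

print(gap_list(observations, "Acme"))
```

Prompts where no brand is cited at all are left out on purpose, since there is no competitor to displace there yet.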

Track citations, not just mentions. A brand mention in an AI response and a brand citation with a source link are functionally different signals. Citations drive high-intent referral traffic and signal trust to the RAG retriever. Mentions build soft awareness. Only one of them contributes to compounding visibility over time.

Connect visibility to conversion. The brands building durable AEO programs aren’t just asking “did AI mention us?” They’re asking “which mentions are most likely to drive revenue?” That question requires a different kind of data.

Topify addresses all three of these directly. Its Source Analysis feature reverse-engineers the retrieval logic of major AI platforms and surfaces Citation Blind Spots within 48 hours of onboarding, identifying the specific high-value prompts where competitors are cited but your brand isn’t. The platform’s Competitor Monitoring tracks who AI is recommending in your category in real time, not just when you remember to check. And its CVR (Conversion Visibility Rate) metric uses machine learning to estimate which AI mentions are most likely to convert, so optimization effort goes where it actually moves the needle.

The window for building citation authority is still open. It won’t stay that way.

Conclusion

The failure of most AEO strategies isn’t a content problem. It’s a systems problem.

Teams are applying page-level SEO logic to passage-level citation mechanics. They’re measuring clicks when the metric that matters is presence. They’re publishing without understanding why AI cites what it cites. And they’re losing budget battles because they can’t translate visibility into pipeline math.

The 200+ G2 reviews analyzed here point to the same inflection point: the teams that shifted to entity-first architecture, passage-level extractability, and fractional attribution are outperforming their peers. Not because they’re producing more content, but because they built the right infrastructure around it.

That’s the real lesson from the data.

FAQ

Q1: What is AEO and how is it different from SEO?

Answer Engine Optimization (AEO) is the practice of structuring content so AI platforms like ChatGPT, Perplexity, and Google AI Overviews can extract and cite it directly. SEO focuses on ranking full pages for keyword relevance. AEO focuses on passage-level extractability and entity authority to earn citations in zero-click environments. The underlying mechanics and success metrics are distinct.

Q2: How do I know if my AEO strategy is working?

Track Citation Rate and AI Share of Voice rather than organic rankings. Monitor how often AI models cite your domain for target prompts, and watch for Branded Search Lift, an increase in direct brand searches that follows AI exposure. These signals show influence even when traditional click data looks flat.

Q3: What does G2 data tell us about AEO tool usage?

G2 reviewers are moving away from generic AI writing tools and toward diagnostic visibility platforms. The most requested features are citation gap analysis, URL-level source tracking, and competitor mention monitoring. The frustration is consistently the same: teams can produce content but can’t tell whether AI is reading it.

Q4: How does Topify help with AEO execution?

Topify automates citation tracking across major LLMs, identifies Citation Blind Spots where competitors outrank you in AI responses, and provides CVR data to prioritize which optimizations drive revenue. It’s built for teams that need AEO to be measurable and defensible, not just directionally positive.