The patterns behind why certain B2B SaaS tools get recommended by AI, and what they got right before everyone else noticed.

You’ve probably noticed that the same handful of tools keep appearing in AI-generated answers about B2B software. Ask ChatGPT, Perplexity, or Google AI Overviews for CRM recommendations, and the same names come up. Ask about project management, analytics, or customer success, and it happens again.

These aren’t the biggest brands by ad spend. A lot of them aren’t even the most-reviewed tools on G2. But they share a set of structural signals that AI systems are trained to trust. Understanding those signals is what AEO for B2B SaaS is actually about.

G2 Scores Don’t Predict AI Visibility. But Something Else Does.

The first instinct is to assume that higher G2 ratings mean more AI mentions. The data complicates that assumption.

Research into how AI platforms cite software review sites shows that G2 dominates, but not because of star ratings. G2 holds roughly 22.4% share of voice in AI-generated answers across ChatGPT, Perplexity, and Google AI Overviews. On Perplexity specifically, G2 accounts for 75% of citations from review platforms. That’s a dominant position.

Here’s the counterintuitive part: the correlation between review count and AI ranking is just -0.16, and between review score and AI ranking it is -0.11. Both are statistically weak.

AI systems don’t read star ratings the way humans do. They treat G2 profiles as structured, machine-readable repositories of evidence. The volume of detailed reviews creates data density. That density allows AI to confidently distinguish one tool from another across specific use cases, industries, and team sizes. High ratings are a byproduct, not the cause.

That’s the gap most brands still can’t see.

Their Content Is Built to Be Extracted, Not Just Read

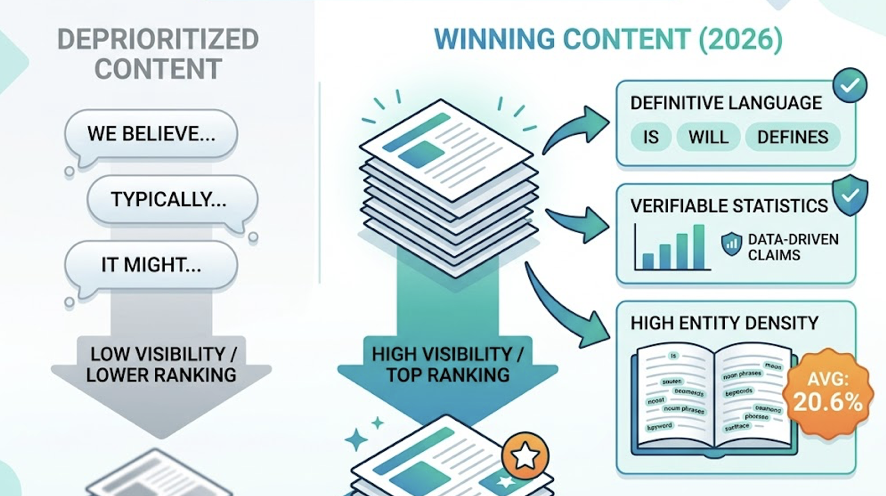

The top-ranked tools in AI answers share one structural pattern: their content is written for extraction, not engagement.

Traditional marketing copy is optimized for humans skimming a landing page. AI systems work differently. They use retrieval-augmented generation (RAG): they retrieve relevant fragments from indexed sources and synthesize a response from them. If your content isn’t structured in a way that makes those fragments easy to isolate, you won’t get cited, regardless of how well-written it is.

The guiding principle is BLUF: Bottom Line Up Front. The first 50-60 words of any content block should state the conclusion directly. What does this tool do? What’s the outcome? Which specific use case does it serve?
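To make the 50-60 word rule checkable during editing, here is a minimal Python sketch. It assumes you supply, for each content block, the terms that must appear up front (product name, outcome, use case); `bluf_check`, `lead_words`, the placeholder product `ExampleCRM`, and the 60-word default are illustrative choices, not a standard tool.

```python
import re

def lead_words(text: str, limit: int = 60) -> list[str]:
    """Return the first `limit` words of a content block, lowercased."""
    words = re.findall(r"[A-Za-z0-9'-]+", text)
    return [w.lower() for w in words[:limit]]

def bluf_check(text: str, required_terms: list[str], limit: int = 60) -> dict[str, bool]:
    """For each required term, report whether it appears within the opening words."""
    # Rejoin the lead with single spaces so multi-word terms like "sales teams" still match.
    # Plain substring matching: simple, but it will also match inside longer words.
    lead = " ".join(lead_words(text, limit))
    return {term: term.lower() in lead for term in required_terms}

block = (
    "ExampleCRM is a pipeline tool for mid-market sales teams. "
    "It cuts deal cycle time by automating follow-ups."
)
print(bluf_check(block, ["ExampleCRM", "sales teams", "Salesforce"]))
# {'ExampleCRM': True, 'sales teams': True, 'Salesforce': False}
```

A `False` result doesn't mean the block is wrong, only that the term arrives after the point where a retrieval system is most likely to cut the fragment.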

Research into AEO content patterns shows that structuring content this way improves AI citation probability by 30-40%. Not a small difference. FAQ pages, integration documentation, and knowledge base articles consistently outperform homepage copy in AI retrieval because they’re designed around specific questions with direct answers.

Schema markup matters here too. JSON-LD markup using the schema.org FAQPage, Product, and PriceSpecification types doesn’t directly change organic rankings, but it reduces AI inference errors and makes entity disambiguation faster and more accurate. Tools that implement this markup cleanly, while competitors still rely on unstructured HTML, are building a quiet, compounding advantage.
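A minimal sketch of generating that markup in Python with only the standard library. The type and property names (`FAQPage`, `Question`, `acceptedAnswer`, `Offer`, `priceSpecification`, `UnitPriceSpecification`) come from the schema.org vocabulary; the product name, question, price, and `unitText` value are placeholders. Valid markup makes a page easier to parse; it does not by itself guarantee a citation.

```python
import json

def faq_page(pairs: list[tuple[str, str]]) -> dict:
    """schema.org FAQPage built from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }

def product_with_price(name: str, description: str, price: str, currency: str) -> dict:
    """schema.org Product whose Offer carries a UnitPriceSpecification (per-seat pricing assumed)."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "priceSpecification": {
                "@type": "UnitPriceSpecification",
                "price": price,
                "priceCurrency": currency,
                "unitText": "user per month",  # placeholder billing unit
            },
        },
    }

def to_script_tag(obj: dict) -> str:
    """Serialize as the JSON-LD <script> element embedded in the page HTML."""
    # Escaping "</" as "<\/" keeps a stray "</script>" inside a string value from closing the tag;
    # "\/" is a valid JSON escape, so the payload still parses to the same data.
    payload = json.dumps(obj, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'

faq = faq_page([("Does ExampleCRM integrate with Slack?", "Yes, via a native integration.")])
product = product_with_price("ExampleCRM", "Pipeline automation for mid-market sales teams.", "49.00", "USD")
print(to_script_tag(faq))
```

Generating the markup from the same source that renders the visible FAQ and pricing tables keeps the two from drifting apart.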

Their G2 Profiles Read Like Case Files, Not Testimonials

“Great tool, highly recommend” is essentially invisible to AI systems.

The reviews that actually influence AI-generated recommendations contain specific numbers, named features, documented workflows, and acknowledged tradeoffs. AI prioritizes content that provides “information gain” over content that simply affirms sentiment.

There’s a clear pattern in what review content gets used in AI synthesis:

| Review Characteristic | Traditional SEO Value | AEO Value |
|---|---|---|
| Sentiment (positive/negative) | High | Moderate |
| Specific use case description | Moderate | Very High |
| Quantified outcomes (e.g., “cut cycle time 30%”) | Low | Very High |
| Structured pros/cons comparison | Moderate | High |
| Integration and technical detail | Low | High |

The tools that show up consistently in AI answers have profiles full of reviews with the characteristics rated High or Very High in the AEO column. They got there deliberately.

Leading B2B SaaS brands have shifted their review collection strategy. Rather than sending bulk email requests, they trigger review prompts at milestone moments: after a user completes their first major data export, after successful integration setup, after a quantifiable outcome has been achieved. The reviews that come from these moments contain context, numbers, and technical specificity. They’re the kind of content AI systems treat as evidence.
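The milestone logic can be sketched as a simple event filter. The event names below are hypothetical stand-ins for whatever your product analytics emits, and the 30-day cooldown is an assumed default, not a benchmark.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical milestone events; map these to whatever your analytics pipeline emits.
MILESTONES = {"first_major_export", "integration_connected", "outcome_recorded"}

@dataclass
class Account:
    account_id: str
    last_prompted: Optional[datetime] = None
    prompted_milestones: set[str] = field(default_factory=set)

def should_prompt(account: Account, event: str, now: datetime,
                  cooldown: timedelta = timedelta(days=30)) -> bool:
    """Decide whether this event should trigger a review request for the account."""
    if event not in MILESTONES:
        return False  # routine usage never triggers a request
    if event in account.prompted_milestones:
        return False  # ask at most once per milestone
    if account.last_prompted is not None and now - account.last_prompted < cooldown:
        return False  # respect the cooldown between requests
    return True

def record_prompt(account: Account, event: str, now: datetime) -> None:
    """Remember that a request was sent so later checks respect it."""
    account.prompted_milestones.add(event)
    account.last_prompted = now

acct = Account("acct-42")
t0 = datetime(2024, 3, 1)
if should_prompt(acct, "integration_connected", t0):
    record_prompt(acct, "integration_connected", t0)  # send the review request here
```

Because the request fires right after the milestone, the reviewer has the concrete context (which integration, which export, which result) fresh in mind.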

They’re Cited Across Channels They Don’t Own

A brand that only appears on its own website carries very little weight with AI.

ChatGPT’s most-cited sources skew heavily toward Wikipedia (47.9%), Reddit (11.3%), and major media outlets like Forbes (6.8%). On Perplexity and Google AI Overviews, Reddit and Stack Overflow account for 46.7% and 21% of citations respectively.

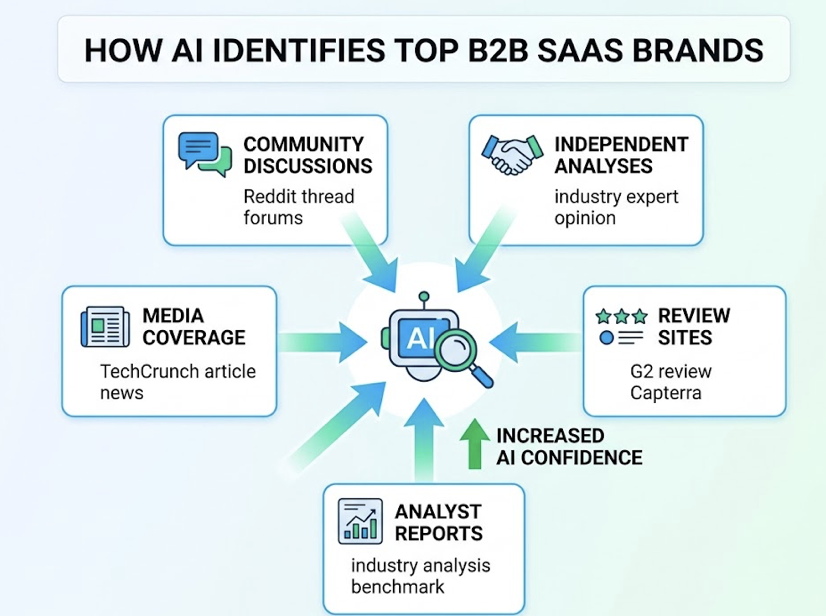

The top-performing B2B SaaS tools in AEO have genuine presence across these channels. Not just official company accounts, but actual community discussions, independent analyses, and media coverage that references them in context. When AI sees a brand described consistently across G2, a Reddit thread, a TechCrunch article, and an industry analyst report, its confidence in recommending that brand increases significantly.

This is what researchers call “consensus validation.” A claim that exists only in owned content gets discounted. The same claim, confirmed across independent sources, becomes a fact AI will cite.

There’s a risk pattern worth noting here. Some established brands coasted early because their training data presence was strong. That advantage erodes as RAG systems become more real-time. Smaller, more agile tools that actively build structured, multi-channel evidence chains are steadily taking share in AI recommendations from brands that assumed their reputation would carry them.

They Update Their Positioning Before AI Notices the Gap

B2B SaaS terminology moves fast. “Workflow automation” gets replaced by “agentic workflows.” “Account-based marketing” becomes “buying committee visibility.” Brands that update their positioning, content, and keyword signals before the market fully adopts new language tend to capture AI citations during the window when AI systems are actively learning new concepts.

Modern AI search engines like Perplexity and ChatGPT Search use real-time retrieval to supplement training data. That means content published this month can influence AI recommendations within weeks, not years. The feedback loop is much shorter than most teams assume.

Practically, this means two things. First, core pages, G2 profiles, and documentation need regular updates. Pages with visible “last updated” dates and logged content changes send freshness signals that improve AI confidence scoring. Second, positioning language needs to stay consistent across channels. If your homepage says one thing, your G2 description says another, and your LinkedIn says a third, AI perceives that inconsistency and hedges its recommendations accordingly.
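For the freshness half, a first step is simply knowing which core pages have gone stale. This sketch assumes you can export each URL's last-updated date from your CMS; the page paths are placeholders and the 90-day threshold is an arbitrary value to tune per page type.

```python
from datetime import date

def stale_pages(last_updated: dict[str, date], today: date, max_age_days: int = 90) -> list[str]:
    """URLs not updated within max_age_days, oldest first."""
    stale = [(updated, url) for url, updated in last_updated.items()
             if (today - updated).days > max_age_days]
    return [url for updated, url in sorted(stale)]

pages = {
    "/pricing": date(2024, 1, 10),
    "/integrations": date(2024, 5, 1),
    "/faq": date(2023, 11, 2),
}
print(stale_pages(pages, today=date(2024, 6, 1)))  # ['/faq', '/pricing']
```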

The technical term for this is semantic alignment. AI builds entity knowledge graphs. When signals across platforms reinforce the same description of what a tool does and who it’s for, that entity gets stronger. When signals conflict, the entity gets weaker.
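One rough way to catch conflicting positioning before AI does is to compare the descriptions you publish on each channel. The sketch below uses word-overlap (Jaccard) similarity as a crude, hypothetical proxy; it is not how AI systems build entity graphs, and embedding-based similarity would catch paraphrases that shared-word counting misses. The channel texts, stopword list, and 0.3 threshold are assumptions.

```python
import re
from itertools import combinations

# Assumed stopword list; extend it for your own copy.
STOPWORDS = {"a", "an", "and", "the", "for", "to", "of", "with", "your", "is", "in", "on"}

def terms(text: str) -> set[str]:
    """Lowercased content words of a positioning statement."""
    return {w for w in re.findall(r"[a-z0-9-]+", text.lower()) if w not in STOPWORDS}

def jaccard(a: set[str], b: set[str]) -> float:
    """Shared terms divided by all distinct terms (1.0 for two empty sets)."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0

def misaligned_pairs(descriptions: dict[str, str], threshold: float = 0.3) -> list[tuple[str, str, float]]:
    """Channel pairs whose descriptions share less than `threshold` of their terms."""
    flagged = []
    for (ch1, text1), (ch2, text2) in combinations(descriptions.items(), 2):
        score = jaccard(terms(text1), terms(text2))
        if score < threshold:
            flagged.append((ch1, ch2, round(score, 2)))
    return flagged

channels = {
    "homepage": "Pipeline automation for mid-market sales teams",
    "g2": "Pipeline automation software for mid-market sales teams",
    "linkedin": "The AI revenue platform for enterprise leaders",
}
print(misaligned_pairs(channels))
# [('homepage', 'linkedin', 0.0), ('g2', 'linkedin', 0.0)]
```

Here the homepage and G2 copy agree closely, while the LinkedIn description shares no terms with either, which is exactly the three-way split described above.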

What This Means If You’re Building a B2B SaaS Brand Now

The common thread across all four patterns: structured evidence, extractable content, multi-channel validation, and continuous updates. None of these require a massive content team. But they do require a shift in how content and review strategy gets planned.

The first step for most teams is figuring out where they actually stand. Not in Google rankings, but in AI answers. What happens when a buyer asks ChatGPT or Perplexity about the category your tool competes in? What sources is AI citing? Is your brand mentioned at all, and if so, with what framing?

Topify is built specifically for this diagnostic. It simulates thousands of real buyer prompts across ChatGPT, Gemini, Perplexity, and Google AI Overviews and generates a standardized AI Visibility Score that tracks mention rate, position in recommendation lists, and sentiment direction. Position matters as much as presence: ranking first in an AI answer and appearing as an “also consider” option carry completely different conversion implications.
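To make the difference between mention rate and position concrete, here is a hypothetical calculation over sampled answers. It is not Topify's scoring method and it omits sentiment; it assumes you have already reduced each AI answer to the ordered list of brands it recommended, and the brand names are placeholders.

```python
from typing import Optional

def visibility_metrics(answers: list[list[str]], brand: str) -> dict[str, Optional[float]]:
    """Mention rate, first-place rate, and average list position for one brand.

    Each answer is the ordered list of brands one AI response recommended.
    """
    if not answers:
        return {"mention_rate": 0.0, "first_place_rate": 0.0, "avg_position": None}
    # 1-based position of the brand in each answer that mentions it.
    positions = [ranked.index(brand) + 1 for ranked in answers if brand in ranked]
    return {
        "mention_rate": len(positions) / len(answers),
        "first_place_rate": sum(p == 1 for p in positions) / len(answers),
        "avg_position": sum(positions) / len(positions) if positions else None,
    }

sampled = [
    ["BrandA", "ExampleCRM", "BrandB"],
    ["ExampleCRM", "BrandA"],
    ["BrandC", "BrandA"],
    ["BrandB", "BrandC", "ExampleCRM"],
]
print(visibility_metrics(sampled, "ExampleCRM"))
# {'mention_rate': 0.75, 'first_place_rate': 0.25, 'avg_position': 2.0}
```

Two brands can share a 75% mention rate while one averages first place and the other fourth, which is why position is worth tracking separately.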

Topify’s Source Analysis feature takes this further. It reverse-engineers the citation ecosystem behind AI answers in your category. If a competitor is consistently cited, you can see whether AI is pulling from their pricing page, a Reddit discussion, or a specific media piece. That diagnostic tells you exactly where to focus: a content update, a PR placement, or a structured data fix.
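The core idea behind a citation-source review can be approximated with a short script: collect the URLs an assistant cites for your category prompts and group them by domain. A minimal sketch, assuming you have those URLs as plain strings; the URLs are placeholders, and this is an illustration, not how Topify's Source Analysis works internally.

```python
from collections import Counter
from urllib.parse import urlparse

def citation_domains(cited_urls: list[str]) -> list[tuple[str, int]]:
    """Count cited URLs per domain, most frequent first (ties keep first-seen order)."""
    domains = []
    for url in cited_urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[len("www."):]  # treat www.g2.com and g2.com as one source
        domains.append(host)
    return Counter(domains).most_common()

cited = [
    "https://www.g2.com/products/examplecrm/reviews",
    "https://www.reddit.com/r/sales/comments/abc123/",
    "https://g2.com/compare/examplecrm-vs-brandb",
    "https://examplecrm.com/pricing",
]
print(citation_domains(cited))
# [('g2.com', 2), ('reddit.com', 1), ('examplecrm.com', 1)]
```

Splitting the result into owned domains (your own site) and third-party ones shows how much of the evidence behind your category's AI answers you actually control.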

The brands that will build durable AI visibility in B2B SaaS aren’t necessarily the biggest or the most reviewed. They’re the ones that treat AEO as a continuous signal management practice, not a one-time optimization. Build the evidence, structure it for extraction, distribute it across channels, and keep it current.

That’s what G2’s highest-rated tools are already doing. Most of their competitors haven’t figured that out yet.

Conclusion

G2’s highest-rated B2B SaaS tools aren’t winning AI recommendations by accident. They’ve built content that’s easy to extract, review profiles that read like evidence, presence across channels AI trusts, and positioning that stays current as the category evolves. These are learnable, repeatable practices. The brands that move on them now are setting up an advantage that will be harder to close the longer competitors wait.

FAQ

What is AEO for B2B SaaS?

Answer Engine Optimization (AEO) is the practice of structuring your digital content so that AI assistants like ChatGPT, Perplexity, and Google AI Overviews can easily extract and cite it as a trusted source. For B2B SaaS, this means shifting focus from ranking for clicks to earning citations in AI-generated answers, where buying decisions increasingly begin.

How does G2 data affect AI recommendations?

AI systems treat G2 as a structured, high-density repository of verified user evidence. A brand’s G2 profile provides the data diversity AI needs to confidently describe a tool across specific use cases, industries, and team sizes. High ratings matter less than review depth, specificity, and volume of evidence-based content.

How can I check if my SaaS tool appears in AI answers?

You can manually run buyer-intent prompts like “best [category] software for [use case]” across ChatGPT and Perplexity. For a systematic view across multiple platforms, Topify automates this process and provides real-time data on mention frequency, recommendation position, and sentiment, without the manual sampling bias.
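For the manual route, a small script can expand that template into a consistent prompt set so each sampling round covers the same questions. The category and use-case values are placeholders; because assistant answers vary between runs, it helps to ask each prompt more than once and record every answer.

```python
from itertools import product

def buyer_prompts(categories: list[str], use_cases: list[str],
                  template: str = "best {category} software for {use_case}") -> list[str]:
    """Every category/use-case combination filled into the prompt template."""
    return [template.format(category=c, use_case=u) for c, u in product(categories, use_cases)]

for prompt in buyer_prompts(["CRM", "project management"], ["startups", "agencies"]):
    print(prompt)
```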

What’s the difference between SEO and AEO for SaaS?

SEO targets search engine rankings to drive clicks. AEO targets AI citation to earn recommendations. SEO is about getting found. AEO is about getting chosen by the AI system before the buyer even reaches a search result. For B2B SaaS brands, both matter, but AEO is where discovery increasingly starts.