Your SEO dashboard is lying to you, and it’s not even wrong.

The rankings are stable. Impressions look healthy. But your brand doesn’t show up in a single ChatGPT response when someone asks which tool to use in your category. That’s not a ranking problem. That’s a measurement problem.

Answer Engine Optimization (AEO) runs on entirely different logic than traditional search. AI platforms don’t show users a list of links; they render a conclusion. And if you’re still reporting on impressions and CTR to justify your AEO investment, you’re measuring the wrong thing entirely.

Here’s what to measure instead.

Your Old Metrics Can’t See What’s Actually Happening

Traditional SEO tracked a clean linear path: keyword → impression → click → conversion. AI search breaks that chain at the first step.

When a user asks ChatGPT “what’s the best project management tool for remote teams,” they don’t see a list of links ranked by domain authority. They get an answer. If your brand isn’t in that answer, you don’t exist for that user, regardless of what your Google Search Console says.

Research shows organic CTR for queries that trigger an AI Overview has dropped 61% year-over-year. That number isn’t a warning. It’s already happened.

The “visibility paradox” is now a standard failure mode: teams report to leadership with green dashboards while their brand quietly disappears from the conversations that shape purchase decisions.

That’s the gap most brands still can’t see.

The 7 KPIs That Actually Measure AEO Performance

The following metrics don’t replace SEO reporting. They answer a different question: not “where do we rank,” but “what does AI say about us, and how often.”

1. AI Visibility Rate

This is the percentage of your target prompts that trigger a response that mentions your brand. Think of it as your “room presence” metric. If AI doesn’t mention you, nothing else matters downstream.

For B2B SaaS teams, a visibility rate between 10-15% across category-level prompts is a reasonable baseline. Category leaders typically clear 30-40%. Getting above 0% is a meaningful signal for newer brands.

Platforms like Topify track visibility rate across ChatGPT, Gemini, Perplexity, and other major AI platforms simultaneously, so you’re not making decisions based on a single engine’s output.
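To make the definition concrete, here is a minimal sketch of the calculation, assuming you have already collected one AI response per tracked prompt. The function name, the brand names, and the sample responses are all hypothetical, and the plain substring match is a simplification: a real tracker would also need to handle aliases, misspellings, and product names.

```python
def visibility_rate(responses, brand):
    """Percentage of responses that mention the brand (case-insensitive substring match)."""
    if not responses:
        return 0.0
    hits = sum(1 for text in responses if brand.lower() in text.lower())
    return 100.0 * hits / len(responses)


# Hypothetical sample: one collected response per tracked prompt.
sample_responses = [
    "For remote teams, Acme and Globex are both solid choices.",
    "Most reviewers recommend Globex for small teams.",
    "Initech is popular, though Acme has better integrations.",
    "Globex and Initech lead this category.",
]

rate = visibility_rate(sample_responses, "Acme")
print(f"AI Visibility Rate: {rate:.1f}%")
```

Running the same function per platform, rather than on a pooled set, keeps one engine’s output from masking another’s.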

2. Citation Share

Being mentioned is awareness. Being cited is trust.

Citation Share tracks how often AI responses reference your owned domains as a factual source, not just your brand name. Brands are 6.5 times more likely to be cited through external sources, like Reddit threads, G2 reviews, or industry publications, than through their own websites.

That number forces an uncomfortable question: if AI trusts Reddit more than your homepage, where should your next content investment go?

Topify’s Source Analysis surfaces exactly which domains AI is citing when describing your brand, so you can close those gaps strategically rather than guessing.
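As a rough illustration of how Citation Share could be computed, the sketch below assumes you have a flat list of URLs that AI responses cited and a list of domains you own. The function name, the `acme.example` domain, and the sample URLs are hypothetical.

```python
from urllib.parse import urlparse


def citation_share(cited_urls, owned_domains):
    """Percentage of cited URLs whose host is an owned domain or one of its subdomains."""
    if not cited_urls:
        return 0.0
    owned = {d.lower() for d in owned_domains}

    def is_owned(url):
        host = (urlparse(url).hostname or "").lower()
        return any(host == d or host.endswith("." + d) for d in owned)

    owned_hits = sum(1 for url in cited_urls if is_owned(url))
    return 100.0 * owned_hits / len(cited_urls)


# Hypothetical citations pulled from a batch of AI responses.
sample_citations = [
    "https://www.reddit.com/r/saas/comments/abc",
    "https://www.g2.com/products/acme/reviews",
    "https://docs.acme.example/pricing",
    "https://www.acme.example/blog/guide",
    "https://news.example.org/roundup",
]

owned_share = citation_share(sample_citations, ["acme.example"])
print(f"Citation Share (owned domains): {owned_share:.1f}%")
```

Matching on the hostname rather than the full URL avoids counting a third-party review page as owned just because your brand name appears in its path.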

3. Sentiment Score

AI doesn’t just list brands. It characterizes them.

A Sentiment Score measures whether the AI describes you favorably, neutrally, or with caveats. Scores typically range from 0 to 100. A positively framed AI summary increases purchase likelihood by 32%. Negative framing, on the other hand, causes what researchers call “silent churn,” users who form an opinion before they ever hit your site.

High mentions plus low sentiment is often worse than being invisible. You’re being seen, but the framing is doing active damage.

4. Answer Placement Score

In a numbered list from an AI recommendation, position one carries a weight of 1.0. Position five carries a weight of 0.2. That’s a 5x difference in downstream conversion probability, for the same query.

Answer Placement Score measures where your brand lands within AI responses when it does appear. Being in the “room” matters. Being first in the room matters more. Category leaders show up as the primary recommendation in 30% or more of relevant responses.

If your visibility rate is high but your placement score is low, you’re being mentioned as an alternative, not a recommendation.
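One way to turn list positions into a score is sketched below. The article only gives two weights (1.0 for position one, 0.2 for position five), so the weighting in between is an assumption: this sketch uses a reciprocal-rank weight, 1 / position, which happens to match both endpoints. The function names and sample positions are hypothetical.

```python
def placement_weight(position):
    """Assumed reciprocal-rank weight: position 1 -> 1.0, position 5 -> 0.2."""
    return 1.0 / position


def answer_placement_score(positions):
    """Mean weight across responses where the brand appeared.

    `positions` holds the brand's 1-based rank per response, or None if absent.
    Absent responses are skipped here because they are already captured by visibility rate.
    """
    ranked = [p for p in positions if p is not None]
    if not ranked:
        return 0.0
    return sum(placement_weight(p) for p in ranked) / len(ranked)


# Hypothetical ranks across five tracked prompts (None = brand not mentioned).
sample_positions = [1, 2, None, 5, 1]
score = answer_placement_score(sample_positions)
print(f"Answer Placement Score: {score:.3f}")
```

A linear weighting (1.0, 0.8, 0.6, 0.4, 0.2) would fit the same two endpoints, so whichever curve you pick, keep it fixed across reporting periods so that trends stay comparable.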

5. Prompt Coverage

Traditional SEO tracks a finite keyword list. AI search doesn’t work that way.

The average AI query is 23 words long, compared to 4 words in traditional search. Users ask things like “what’s the most secure CRM for a 15-person remote startup that doesn’t need Salesforce-level complexity.” No keyword tool built this query.

Prompt Coverage measures how many distinct user intents, across discovery, evaluation, and comparison stages, produce a response that mentions your brand. Enterprise teams typically track 100+ prompts per category cluster to get statistically meaningful data. Topify continuously surfaces new high-value prompts as AI behavior evolves, so your tracked set doesn’t go stale.
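A minimal sketch of the coverage calculation, assuming each tracked prompt has been tagged with a funnel stage and an intent label. The tuple layout, the stage names as dictionary keys, and the sample intents are hypothetical choices for illustration.

```python
from collections import defaultdict


def prompt_coverage(results):
    """Per stage, the percentage of distinct intents with at least one brand mention.

    `results` is an iterable of (stage, intent, mentioned) tuples.
    """
    seen = defaultdict(set)
    covered = defaultdict(set)
    for stage, intent, mentioned in results:
        seen[stage].add(intent)
        if mentioned:
            covered[stage].add(intent)
    return {
        stage: 100.0 * len(covered[stage]) / len(intents)
        for stage, intents in seen.items()
    }


# Hypothetical tagged results; an intent may be probed by several prompts.
sample_results = [
    ("discovery", "secure CRM for small remote team", True),
    ("discovery", "CRM without enterprise complexity", False),
    ("evaluation", "CRM pricing for 15 seats", True),
    ("evaluation", "CRM pricing for 15 seats", False),
    ("evaluation", "CRM onboarding time", True),
    ("comparison", "Acme vs Globex", False),
]

coverage = prompt_coverage(sample_results)
for stage, pct in coverage.items():
    print(f"{stage}: {pct:.0f}% of intents covered")
```

Counting distinct intents rather than raw prompts keeps a stage from looking well covered just because one intent was tested with many phrasings.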

6. AI Share of Voice

If your brand appears in 10% of category recommendations and your top competitor appears in 40%, that’s a 30-point mention gap. That gap is your market opportunity, or your market risk, depending on which side you’re on.

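AI Share of Voice calculates your brand’s mentions as a percentage of total brand mentions across a query set. It’s the AEO equivalent of market share. And in an environment where AI satisfies roughly 60% of search queries, SOV is a direct proxy for future pipeline.

$$\text{AI SOV} = \frac{\text{Brand Mentions}}{\sum \text{Competitor Mentions}} \times 100$$

For the result to be a percentage of total brand mentions, the sum in the denominator has to run over every brand mentioned in the query set, your own included.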

This is also the metric executives actually understand. Topify’s Competitor Monitoring tracks SOV in real time, including emerging competitors who weren’t on your radar six months ago.
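The calculation itself is small. The sketch below assumes you have a mention count per brand across the same query set, and it divides by the total across all brands, your own included. The brand names and counts are hypothetical.

```python
def share_of_voice(mention_counts, brand):
    """Brand mentions as a percentage of all brand mentions in the query set."""
    total = sum(mention_counts.values())
    if total == 0:
        return 0.0
    return 100.0 * mention_counts.get(brand, 0) / total


# Hypothetical mention counts across one category's query set.
sample_mentions = {"Acme": 12, "Globex": 48, "Initech": 36, "Umbrella": 24}

sov = {brand: share_of_voice(sample_mentions, brand) for brand in sample_mentions}
gap = sov["Globex"] - sov["Acme"]

for brand, pct in sov.items():
    print(f"{brand}: {pct:.1f}%")
print(f"Gap to leader: {gap:.1f} points")
```

Because every brand shares one denominator, the shares sum to 100, which is what makes the number read like market share.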

7. Conversion Visibility Rate (CVR)

AI traffic is often invisible in standard analytics setups. Assistants suppress referrers, strip UTM parameters, and don’t always show up cleanly in GA4. Actual AI influence is likely 2-3x what standard dashboards report.

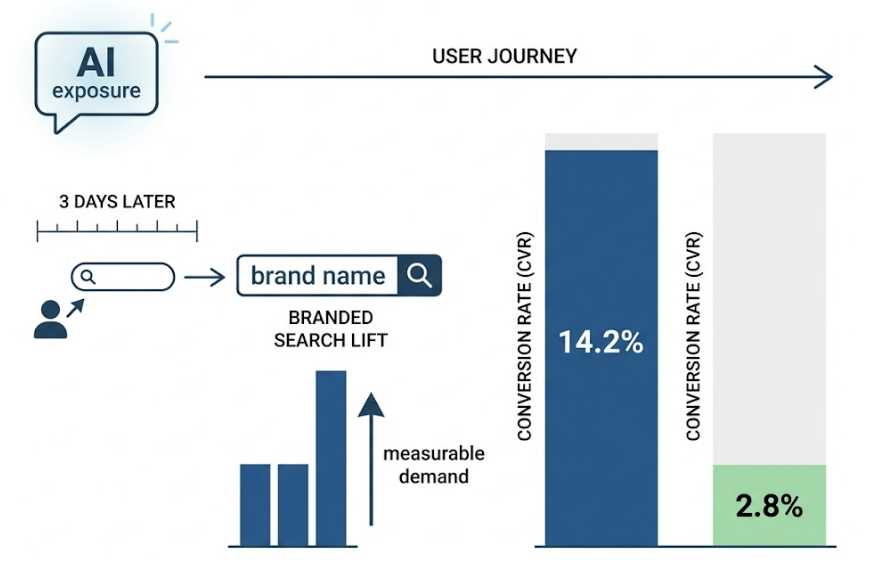

CVR works around this by tracking what happens after AI exposure. Users encounter your brand in a ChatGPT response, don’t click immediately, then search for you by name three days later. That branded search lift is measurable, and it’s the clearest signal that your AEO investment is generating demand. AI-referred traffic, when you can capture it, converts at 14.2%, compared to 2.8% for traditional organic search.
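Branded search lift can be estimated with a simple before-and-after comparison. The sketch below assumes weekly branded search volumes exported from whatever search data you already have; the function name and the numbers are hypothetical. It measures correlation only, so a lift should be read alongside other campaigns running in the same window.

```python
from statistics import mean


def branded_search_lift(baseline_weeks, current_weeks):
    """Percentage change in average weekly branded searches versus the baseline period."""
    base = mean(baseline_weeks)
    if base == 0:
        raise ValueError("baseline average must be non-zero")
    return 100.0 * (mean(current_weeks) - base) / base


# Hypothetical weekly branded search volumes.
baseline = [1000, 1040, 960, 1000]   # before the AEO push
current = [1150, 1250, 1200, 1200]   # after the AEO push

lift = branded_search_lift(baseline, current)
print(f"Branded search lift: {lift:.1f}%")
```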

The Metrics Brands Get Wrong First

Getting the right KPIs is half the work. Using them correctly is the other half.

Mistake 1: tracking mention count in isolation. A high mention count is a liability if sentiment is negative. Modern AI sentiment analysis distinguishes between being praised for your interface and criticized for your pricing in the same response. Averaging those together produces a number that means nothing.

Mistake 2: treating AI referrals like organic traffic. If you’re measuring AEO performance purely in GA4, you’re undercounting by a significant margin. Include “AI Assistants” as a response option in post-conversion surveys. Check server logs for requests whose user agent contains ChatGPT-User. The attribution gap is real and it’s currently making AEO look less effective than it is.

Mistake 3: only tracking branded prompts. “What does [Your Brand] do?” will always give you flattering visibility numbers. The real test is unbranded category prompts: “best analytics tool for B2B SaaS.” If you only appear when someone already knows your name, you’ve failed at the top of the funnel entirely.
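For the server-log check mentioned under Mistake 2, a minimal sketch might look like the following. It assumes access logs in the common “combined” format and looks for the ChatGPT-User marker in the user-agent field. The regular expression, the function name, and the sample log lines (including the simplified user-agent strings) are illustrative assumptions, so adapt them to your server’s actual log layout.

```python
import re
from collections import Counter

# Matches the request, status, size, referrer, and user-agent fields
# of a combined-format access log line (assumed layout).
LOG_PATTERN = re.compile(
    r'"(?:GET|POST|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def count_assistant_hits(log_lines, marker="ChatGPT-User"):
    """Count requests per path whose user agent contains the marker."""
    hits = Counter()
    for line in log_lines:
        match = LOG_PATTERN.search(line)
        if match and marker.lower() in match.group("agent").lower():
            hits[match.group("path")] += 1
    return hits


# Hypothetical log lines for illustration only.
sample_log = [
    '203.0.113.5 - - [10/Mar/2025:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0; compatible; ChatGPT-User/1.0"',
    '203.0.113.9 - - [10/Mar/2025:10:02:11 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0; compatible; ChatGPT-User/1.0"',
    '198.51.100.7 - - [10/Mar/2025:10:03:40 +0000] "GET /blog HTTP/1.1" 200 8421 "https://www.google.com/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    '203.0.113.5 - - [10/Mar/2025:10:05:02 +0000] "GET /docs HTTP/1.1" 200 3310 "-" "Mozilla/5.0; compatible; ChatGPT-User/1.0"',
]

assistant_hits = count_assistant_hits(sample_log)
for path, count in assistant_hits.most_common():
    print(f"{path}: {count} assistant requests")
```

These counts show which pages an assistant fetched, not how many people saw the resulting answer, so treat them as a directional signal rather than a traffic number.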

How to Build Your AEO Reporting Framework in 3 Layers

Organizing these 7 KPIs into a reporting structure turns raw data into decisions. The framework has three layers, each answering a different organizational question.

Layer 1: The Visibility Layer. Are we being retrieved at all?

KPIs: AI Visibility Rate, Prompt Coverage, Platform Distribution.

Report this weekly. Platform algorithm changes move fast. A week-over-week visibility delta tells you whether your optimization work is landing, or whether a competitor just pushed you out of a cluster of prompts.

Layer 2: The Quality Layer. How is AI describing us?

KPIs: Sentiment Score, Answer Placement Score, Citation Source Share.

Report this monthly. Narrative drift, where AI gradually shifts from describing you as a “leading solution” to a “viable option,” rarely happens overnight. Monthly audits catch it before it becomes structural.

Layer 3: The Impact Layer. Is AI presence driving demand?

KPIs: AI Share of Voice, Branded Search Lift, Lead Quality Shift (conversion rate and deal size of AI-referred visitors vs. organic).

Report this quarterly, alongside competitor benchmarking. This is the layer that justifies AEO spend to finance and leadership. Users arriving from AI recommendations convert at 14.2% and move through the pipeline 2-3x faster, because they’ve been pre-qualified by the AI’s recommendation.
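If you want the three layers in a form a reporting script can consume, one option is a small configuration structure like the sketch below. The class name, field names, and helper function are hypothetical; the layer names, questions, cadences, and KPI lists are taken from the framework above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReportingLayer:
    name: str
    question: str
    cadence: str  # "weekly", "monthly", or "quarterly"
    kpis: tuple


FRAMEWORK = (
    ReportingLayer(
        name="Visibility",
        question="Are we being retrieved at all?",
        cadence="weekly",
        kpis=("AI Visibility Rate", "Prompt Coverage", "Platform Distribution"),
    ),
    ReportingLayer(
        name="Quality",
        question="How is AI describing us?",
        cadence="monthly",
        kpis=("Sentiment Score", "Answer Placement Score", "Citation Source Share"),
    ),
    ReportingLayer(
        name="Impact",
        question="Is AI presence driving demand?",
        cadence="quarterly",
        kpis=("AI Share of Voice", "Branded Search Lift", "Lead Quality Shift"),
    ),
)


def kpis_due(cadence):
    """Return the KPIs that belong in a report with the given cadence."""
    return [kpi for layer in FRAMEWORK if layer.cadence == cadence for kpi in layer.kpis]


print(kpis_due("weekly"))
```

Keeping cadence on the layer rather than on each KPI mirrors how the framework is described: the question determines how often it needs answering.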

What a Good AEO Report Actually Looks Like

C-suite audiences don’t want to reconstruct meaning from raw data. They want implication-first summaries that answer one question: “What does this mean for the business?”

A solid executive AEO report fits in four slides:

| Slide | Executive Focus | Key Data Point |
|---|---|---|
| Executive Summary | Recommendation-first | “We appear in 12% of category prompts. Competitor A appears in 38%.” |
| Competitive SOV | Current market standing | AI Share of Voice vs. top 3 competitors |
| Narrative Control | Brand reputation health | Sentiment Score trend + top AI qualifiers used |
| Impact / ROI | Pipeline connection | Branded Search Volume Lift + Lead Quality comparison |

The operational team needs the full 7-KPI breakdown. Leadership needs the business translation. Build both, from the same data set.

Setting Benchmarks When There’s No Industry Standard Yet

AEO is new enough that there’s no universal benchmark. The right target depends heavily on how often AI surfaces responses in your category at all.

| Industry | AI Overview Trigger Rate | Target Visibility Rate (Leader) |
|---|---|---|
| Healthcare | 88% | 40-50% |
| B2B Tech / SaaS | 55% | 30-40% |
| Finance | 21% | 15-20% |
| E-commerce | 13% | 10-15% |
| Real Estate | 4.4% | 5-10% |

A 10% visibility rate in real estate signals market leadership. In healthcare, that same number is a serious problem.

Start by tracking 30-50 unbranded prompts and establishing your baseline over 30, 60, and 90 days. Trend stability matters more than single-point snapshots. Your 90-day moving average is a more reliable signal than any single week’s report.
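A trailing moving average is straightforward to compute once you have a series of visibility readings. The sketch below assumes weekly readings and uses a 13-week window as an approximation of 90 days; the function name, the window choice, and the sample values are assumptions for illustration.

```python
def trailing_average(values, window):
    """Trailing moving average; early points average over whatever history exists."""
    averaged = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        averaged.append(sum(chunk) / len(chunk))
    return averaged


# Hypothetical weekly visibility rates (%) for one set of unbranded prompts.
weekly_rates = [8, 12, 9, 14, 11, 10, 15, 13, 12, 16, 14, 13, 17, 15]

smoothed = trailing_average(weekly_rates, window=13)  # 13 weeks is roughly 90 days
print(f"Latest weekly reading: {weekly_rates[-1]}%")
print(f"Trailing 13-week average: {smoothed[-1]:.1f}%")
```

Comparing the latest reading against the smoothed value shows whether a good or bad week is noise or the start of a trend.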

Conclusion

AEO is a shift from the link economy to the citation economy. The click, which once was the primary unit of digital marketing value, is being replaced by something less visible but more powerful: the authoritative mention inside an AI-generated answer.

The 7 KPIs in this framework don’t make AEO harder to report. They make it possible to report it honestly, with data that reflects what’s actually happening in the conversations shaping your buyers’ decisions.

AI Visibility Rate is the baseline. Sentiment Score is the health check. Answer Placement tells you how loud your voice is when you’re in the room. SOV shows you the competitive gap. CVR connects it all to revenue.

Start measuring what AI actually says about you. Then optimize from there.

FAQ

Q: What’s the main difference between AEO KPIs and traditional SEO KPIs?

SEO measures actions: clicks, traffic, and rankings. AEO measures influence and trust: citation frequency, sentiment framing, and presence within synthesized answers. One tracks what users do after seeing you. The other tracks whether AI includes you in the conversation at all.

Q: How often should I report on AEO metrics?

Weekly for visibility and prompt coverage (tracks fast platform changes), monthly for sentiment and placement audits (catches narrative drift), and quarterly for Share of Voice and pipeline impact (executive-level benchmarking).

Q: Which AEO KPI should I prioritize first?

AI Visibility Rate. You need to confirm you’re present in relevant AI responses before any other metric is worth optimizing. If your visibility rate is 0% for category-level prompts, that’s the only problem that matters right now.

Q: Can AEO performance be tied directly to revenue?

Yes, through Branded Search Lift and Lead Quality Shift. Users arriving from AI recommendations convert at 14.2%, compared to 2.8% for traditional organic search, and move through the sales cycle 2-3x faster because AI pre-qualifies them during the research phase.

Q: Why is sentiment analysis important in AEO?

Because AI doesn’t just list your brand name. It describes you. A high visibility rate combined with a low sentiment score means you’re being mentioned in ways that actively erode purchase intent. Sentiment is the difference between being recommended and being used as a cautionary example.