G2 reviews reveal more than star ratings. Here’s what AEO tool users actually say about tracking AI search visibility — and what those reviews consistently miss.

You’ve done your homework. You’ve read the G2 reviews, scrolled the star ratings, and shortlisted two or three AEO tools. But when you actually sit down to choose one, something feels off.

Everyone’s “Category Leader” claim sounds the same. The five-star reviews talk about clean dashboards and responsive support teams. Nobody seems to be talking about whether the tool actually tells you why ChatGPT recommends your competitor over you.

That’s the gap. And it’s a bigger problem than most teams realize.

G2 Reviews Track the Wrong Things in AEO

G2’s scoring algorithm is built for conventional SaaS. It weights “Ease of Use,” “Quality of Support,” and “Likelihood to Recommend” — all reasonable proxies for whether a CRM or project management tool is doing its job.

In the AEO space, those same proxies break down.

A tool that scored high on “Ease of Setup” might have gotten there by relying on shallow API snapshots rather than deep, multi-engine browser capture. Fast setup can actually be a warning sign: it often means the platform skipped the hard work of building a custom prompt matrix or analyzing real LLM retrieval behavior.

The result is what you could call the proxy paradox. Users rate tools on the visible parts — dashboard design, PDF export quality, how quickly the onboarding team responds to tickets. None of these tell you whether the citation data you’re looking at reflects what a real user sees when they ask ChatGPT which brand to buy.

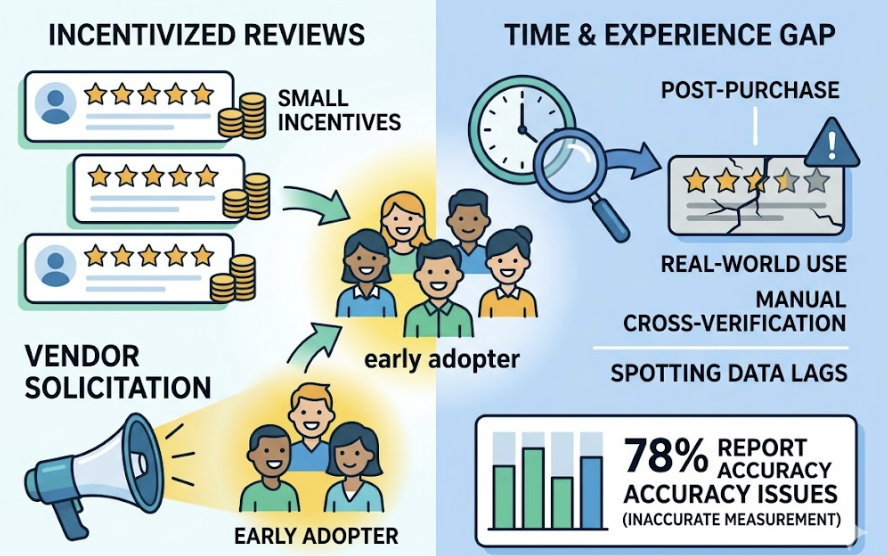

There’s a structural bias working against you here, too. G2 acknowledges it offers small incentives to reviewers to encourage volume. Vendors tend to solicit reviews from their most satisfied early adopters — the ones who haven’t yet done the manual cross-verification needed to spot data lags. In a category where 78% of practitioners report their current approach to measuring LLM visibility is inaccurate, those enthusiastic five-star reviews are often written before the cracks appear.

The AEO Metrics G2 Reviews Almost Never Mention

Read through enough AEO tool reviews on G2 and a pattern emerges. Users describe what they can see — not whether what they’re seeing is accurate.

Three technical metrics consistently go unexamined.

Prompt coverage. Traditional SEO tools track keywords. AEO tools track conversational intents — and those intents fragment in ways keywords never did. A buyer researching “email marketing software” might phrase that search dozens of different ways in an AI conversation. Research shows over 80% of AI prompts are phrased differently than Google searches on the same topic. An enterprise AEO program needs a prompt universe of 150–300 queries for category-level reporting, and up to 2,000 for multi-segment coverage. Most G2 reviews celebrate the “Aha!” moment of seeing any data. They rarely mention whether the tool supports the query volume needed for a defensible Share of Voice.

Citation rate vs. mention frequency. A brand “mention” is when an AI includes your name in its narrative. A “citation” is a structured source attribution — the kind that signals the LLM has learned your domain as an authority. These are not the same thing, and they don’t produce the same outcomes. Mentions matter for recall. Citations are what build authority and drive referral traffic. The benchmark for strong B2B SaaS companies is a 10–15% citation rate; market leaders exceed 30%. G2 reviews that praise “visibility” rarely specify which type they’re measuring.

Data refresh cycles. AI models update their retrieval patterns frequently. If an AI engine shifts its primary narrative about a category, your team needs to know within 24–48 hours to respond. A weekly refresh cycle — standard for many “Category Leader” tools — creates a blind spot that can waste significant resources. This data latency problem is one of the most technically significant complaints in the AEO space, yet it’s routinely buried beneath a high “Ease of Use” score.
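To make the mention-versus-citation distinction and the 24–48 hour refresh window concrete, here is a minimal Python sketch. The `Answer` record shape, the brand names, and the `.example` domains are hypothetical, and substring matching is a simplifying assumption. It illustrates the arithmetic only, not how any particular vendor computes these numbers.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class Answer:
    """One captured AI answer (hypothetical record shape)."""
    text: str                                        # narrative returned by the engine
    cited_urls: list = field(default_factory=list)   # sources the engine attributed


def mention_rate(answers, brand):
    """Share of answers whose narrative names the brand."""
    hits = sum(brand.lower() in a.text.lower() for a in answers)
    return hits / len(answers) if answers else 0.0


def citation_rate(answers, domain):
    """Share of answers that attribute at least one source to the domain."""
    hits = sum(any(domain in url for url in a.cited_urls) for a in answers)
    return hits / len(answers) if answers else 0.0


def is_stale(captured_at, now, max_age_hours=48):
    """True if a snapshot is older than the assumed response window."""
    return now - captured_at > timedelta(hours=max_age_hours)


# Hypothetical sample: four captured answers for one prompt set.
answers = [
    Answer("Acme and Globex are popular picks.", ["https://acme.example/pricing"]),
    Answer("Many teams choose Acme.", ["https://reviews.example/top-tools"]),
    Answer("Globex leads this category.", []),
    Answer("Consider Globex or Initech.", ["https://globex.example"]),
]

print(mention_rate(answers, "Acme"))           # named in 2 of 4 answers
print(citation_rate(answers, "acme.example"))  # cited as a source in 1 of 4
print(is_stale(datetime(2025, 1, 1), datetime(2025, 1, 8)))  # weekly refresh vs. 48h window
```

In this sample the brand is mentioned in half the answers but cited in only a quarter of them, which is the gap a generic "visibility" number hides.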

What “Visibility” Actually Means in AEO Tool Reviews

When a reviewer says “I can see my brand is being mentioned,” they’re describing one specific thing. But AEO visibility has at least four distinct dimensions — and most tools (and most reviews) only capture one of them.

Mentioned Visibility vs. Measured Visibility

Mentioned visibility is qualitative. It tells you whether the AI is willing to include your brand in its response at all. That matters for brand recall, especially in “zero-click” environments where users never leave the AI interface.

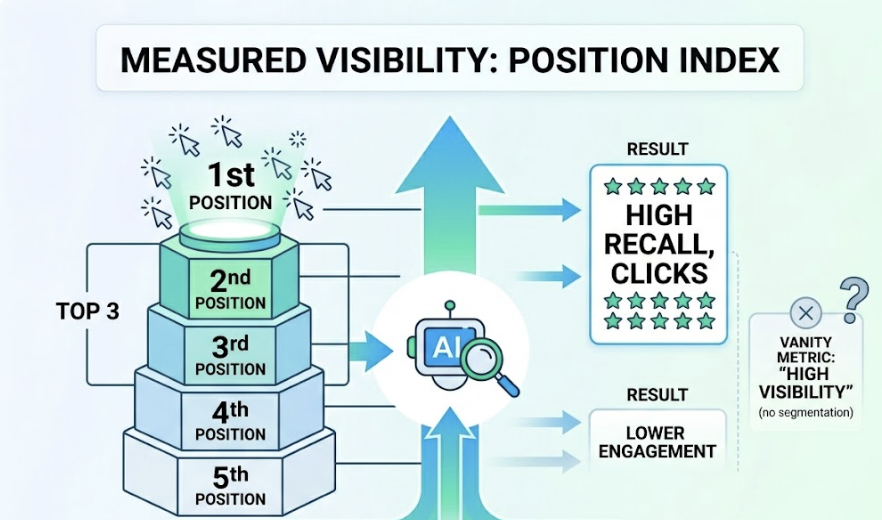

Measured visibility is something different. It tracks the Position Index: where in the response your brand appears. Being the first recommendation in a five-item list produces very different outcomes than being the fifth. Research shows brands in the top three positions are significantly more likely to be recalled or clicked. A tool that reports “high visibility” without segmenting by position is giving you a vanity metric.
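A rough way to segment visibility by position is sketched below in Python. The function name, the input format (a 1-based rank per answer, `None` when the brand is absent), and the top-three cutoff are assumptions for illustration. The source does not define how a Position Index is calculated.

```python
def position_summary(positions, top_n=3):
    """Summarize where a brand lands across a set of AI answers.

    positions: 1-based rank of the brand in each answer, or None if absent.
    """
    present = [p for p in positions if p is not None]
    if not present:
        return {"appearance_rate": 0.0, "mean_position": None, "top_n_share": 0.0}
    return {
        # How often the brand shows up at all (the "mentioned" view).
        "appearance_rate": len(present) / len(positions),
        # Average rank when it does show up.
        "mean_position": sum(present) / len(present),
        # Share of ALL answers where the brand is in the top N.
        "top_n_share": sum(p <= top_n for p in present) / len(positions),
    }


# Hypothetical ranks across eight answers.
sample = [1, 5, None, 2, 4, None, 3, 5]
summary = position_summary(sample)
print(summary)
```

Here the brand appears in 75% of answers, but only 37.5% of answers place it in the top three. Reporting the first number alone would overstate its standing.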

Sentiment vs. Position: Two Very Different Signals

Here’s something most G2 reviews don’t account for: a high position in an AI answer doesn’t mean the AI is saying something good about you.

AI engines can include caveats — “users report frequent downtime,” “pricing is higher than competitors” — that undercut an otherwise prominent mention. That’s why sentiment analysis is a separate and necessary metric, not a subset of visibility. A brand with a high position but negative sentiment is experiencing a visibility crisis, not a success.

| Metric | What It Measures | Why It Matters |
|---|---|---|
| Position Index | Where your brand appears in the narrative | Determines click probability and entity salience |
| Sentiment Score | How the AI frames your brand | Protects reputation and influences consideration |
| Citation Rate | How often your domain is a cited source | Signals authority, drives referral traffic |
| Share of Voice | Relative presence vs. competitors | Measures category dominance in AI ecosystems |

Platforms like Topify use a 0–100 sentiment scoring mechanism to capture these nuances. That level of granularity is rarely what G2 reviewers are evaluating — but it’s often what determines whether your AI visibility is actually working for you.
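The reason sentiment and position must be read together can be shown with a small Python sketch. It assumes a 0–100 sentiment scale like the one mentioned above, but the thresholds (40 and 60), the top-three cutoff, and the labels are illustrative assumptions, not any vendor's scoring rules.

```python
def classify(position, sentiment, top_n=3, negative_below=40, positive_from=60):
    """Combine position and sentiment into one coarse label.

    position: 1-based rank in the answer, or None if the brand is absent.
    sentiment: score on an assumed 0-100 scale (higher is more favorable).
    """
    if position is None:
        return "absent"
    prominent = position <= top_n
    if sentiment < negative_below:
        # Prominent and negative: many readers see the caveat.
        return "visibility crisis" if prominent else "negative, low prominence"
    if sentiment >= positive_from:
        return "strong" if prominent else "positive, low prominence"
    return "neutral"


print(classify(1, 25))   # first position, negative framing
print(classify(1, 80))   # first position, positive framing
print(classify(5, 80))   # well framed, but buried
```

A tool that reports only position would score the first two cases identically.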

3 Patterns from G2 AEO Reviews Worth Paying Attention To

Aggregate patterns across the AEO category on G2 tell a more useful story than any individual review. Three patterns stand out.

Pattern 1: The “Aha!” moment of prompt discovery. The most satisfied G2 reviewers are consistently the ones who used AEO tools to find prompt opportunities they didn’t know existed. A B2B software company discovers it ranks for “project management software” but is entirely absent from “project management tools for remote engineering teams using Jira.” That discovery of lost prompts in adjacent, high-intent conversations is the most cited pro in the AEO category. It provides immediate strategic value with almost no prior setup.

Pattern 2: The execution wall. The most common source of disappointment is what practitioners call the actionability gap. Users know they have visibility gaps. They don’t know how to close them. Many AEO tools provide the diagnosis but not the treatment. They’ll show you that a competitor is cited more frequently — but not that the competitor is winning because of a more detailed pricing table or a specific Reddit thread with high engagement. This frustration points to a real limitation: most tools were built as monitoring platforms, not optimization platforms.

Pattern 3: The coverage trap. A tool can receive five stars from a user who’s only tracking one AI platform. But visibility on ChatGPT doesn’t transfer automatically to Perplexity or Google AI Overviews. Research shows only 30% of brands maintain consistent visibility from one AI answer to the next. A tool that covers one or two engines is measuring a fragment of the picture. With 47% of users now switching between multiple AI tools, that fragmentation has real consequences.
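The coverage trap is easy to see once Share of Voice is computed per engine rather than in aggregate. The Python sketch below uses hypothetical brands and made-up mention counts. The `(engine, brand)` input format is an assumption about how captured mentions might be stored.

```python
from collections import Counter, defaultdict


def share_of_voice(observations):
    """Per-engine share of brand mentions.

    observations: iterable of (engine, brand) pairs, one per brand mention.
    Returns {engine: {brand: share}}; shares within an engine sum to 1.
    """
    counts = defaultdict(Counter)
    for engine, brand in observations:
        counts[engine][brand] += 1
    return {
        engine: {brand: n / sum(c.values()) for brand, n in c.items()}
        for engine, c in counts.items()
    }


# Hypothetical mention counts for two brands on two engines.
observations = (
    [("chatgpt", "Acme")] * 6
    + [("chatgpt", "Globex")] * 4
    + [("perplexity", "Acme")] * 1
    + [("perplexity", "Globex")] * 9
)

sov = share_of_voice(observations)
for engine, shares in sov.items():
    print(engine, shares)
```

In this made-up data the same brand holds 60% of mentions on one engine and 10% on the other. A tool tracking only the first engine would report a leader.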

What to Actually Look For Beyond the Star Rating

Ignore the aggregate star rating. Instead, run a five-dimension audit on any AEO tool you’re seriously evaluating.

| Dimension | Why It Matters | How Often G2 Reviews Mention It |
|---|---|---|
| Data accuracy method | Direct browser capture vs. API snapshots; the former catches “hidden” citations | Rarely: too technical for most reviewers |
| Platform coverage | Must track ChatGPT, Gemini, Perplexity, and AI Overviews simultaneously | Sometimes: usually in feature lists |
| Execution workflow | Does it connect to a CMS or provide one-click optimization agents? | Often: this is where the pain is most visible |
| Source analysis | Can it reverse-engineer why a competitor is being cited? | Rarely: advanced feature, few users test it |
| Sentiment precision | Does it distinguish a factual mention from a recommendation? | Sometimes: usually noted in reputation-focused reviews |

The last two dimensions — source analysis and sentiment precision — are where most tools fall short. They’re also where the actual competitive intelligence lives.
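One way to run the five-dimension audit consistently across shortlisted tools is a simple weighted scorecard, sketched here in Python. The weights, the 0–5 rating scale, and the sample ratings are all assumptions to be adjusted to your own priorities. Nothing here comes from G2 or from any vendor.

```python
# Illustrative weights (sum to 1.0); tune these to your own priorities.
WEIGHTS = {
    "data_accuracy_method": 0.25,
    "platform_coverage": 0.20,
    "execution_workflow": 0.15,
    "source_analysis": 0.20,
    "sentiment_precision": 0.20,
}


def audit_score(ratings, weights=WEIGHTS):
    """Weighted score on the same 0-5 scale as the input ratings.

    ratings: {dimension: score from 0 to 5}, based on your own hands-on test.
    """
    missing = set(weights) - set(ratings)
    if missing:
        # Refuse to score a tool that was only partially evaluated.
        raise ValueError(f"unrated dimensions: {sorted(missing)}")
    return sum(weights[d] * ratings[d] for d in weights)


# Hypothetical tool: polished workflow, weak on the two hardest dimensions.
tool_a = {
    "data_accuracy_method": 2,
    "platform_coverage": 4,
    "execution_workflow": 5,
    "source_analysis": 1,
    "sentiment_precision": 3,
}

print(round(audit_score(tool_a), 2))
```

Raising an error on unrated dimensions is deliberate: it forces the evaluation to cover the dimensions reviews rarely mention instead of silently skipping them.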

How Topify Fits Into the AEO Tool Picture

The AEO market currently divides into three segments: established SEO suites that added AI features as an afterthought, enterprise intelligence platforms built for Fortune 500 procurement cycles, and focused AEO execution engines designed for teams that need to move fast.

Topify sits firmly in the third category. It’s built for growth-oriented teams — SMBs, scale-up B2B companies, marketing agencies — that don’t have the bandwidth for manual analysis and need a tool that closes the loop between tracking and action.

A few specific capabilities are worth calling out in the context of what G2 reviews typically miss.

Topify’s Source Analysis feature directly addresses the execution wall. Rather than telling you a competitor is winning, it reverse-engineers the exact domains and URLs AI platforms are pulling citations from. If an AI is citing a competitor because of a specific case study or a well-structured landing page, that semantic gap becomes visible — and actionable.

The One-Click Agent Execution feature takes that a step further. Once a gap is identified, a lean team can generate the necessary content via an AI agent and deploy it in a single workflow. That’s the difference between an intelligence tool and an optimization platform.

Topify also tracks the seven KPIs that connect brand visibility to revenue: visibility, volume, position, sentiment, mentions, intent, and CVR (Conversion Visibility Rate). That last metric — estimating the probability that an AI recommendation leads to brand engagement — is the kind of signal that doesn’t show up in a G2 review but tends to matter a lot when you’re trying to justify the budget.

For teams evaluating options, the Basic plan starts at $99/month and includes tracking across ChatGPT, Perplexity, and AI Overviews with 100 prompts and 9,000 AI answer analyses per cycle.

Conclusion

G2 is a reasonable starting point for vetting an AEO tool’s vendor stability and service quality. It’s a poor guide for evaluating technical efficacy.

The reviews tend to cluster around what’s easy to describe: clean interfaces, helpful onboarding teams, satisfying “Aha!” moments. They underweight what’s actually hard to build: real-time citation tracking, multi-platform coverage, sentiment precision, and the ability to turn a visibility gap into a published piece of content.

The question isn’t which tool has the highest star rating. It’s which tool can tell you exactly what your competitors are doing to win citations — and give you a mechanism to beat them.

Those are different questions. The answer to the first one is on G2. The answer to the second one requires a different kind of evaluation.

FAQ

Q1: What is an AEO tool and how is it different from SEO tools?

AEO (Answer Engine Optimization) software tracks a brand’s visibility in AI-generated answers across platforms like ChatGPT, Gemini, and Perplexity. Unlike SEO tools, which focus on ranking URLs in a list of results to drive clicks, AEO tools measure how AI engines synthesize, reference, and recommend a brand within a single conversational response. The underlying data model is different: you’re not tracking positions on a result page, you’re tracking how an LLM has learned to represent your brand.

Q2: Are G2 reviews reliable for evaluating AEO tools?

G2 reviews are useful for evaluating user experience, customer support, and vendor reliability. They’re less useful for evaluating technical accuracy and data depth. AEO is a new field, and many reviewers are early adopters who haven’t yet cross-verified the tool’s data against live AI search results. Use G2 as a signal of vendor stability — not as a verdict on whether the tool’s citation data is accurate.

Q3: What metrics should I look for in an AEO tool beyond G2 ratings?

Prioritize four metrics: Citation Rate (how often your domain is a linked source in AI answers), Position Index (where you appear in the narrative), Sentiment Score (how the AI frames your brand), and Prompt Coverage (the breadth of conversational queries the tool tracks). A tool that can’t report on all four is giving you an incomplete picture.

Q4: Does Topify have G2 reviews or ratings?

Topify is positioned as a specialized execution tool for growth-oriented marketing teams, with its differentiation centered on citation accuracy and one-click optimization workflows. Its technical focus — particularly Source Analysis and automated content deployment — addresses the “execution wall” that shows up most frequently as a pain point in the AEO category on G2.