Your SEO dashboard is lying to you.

Not because the data is wrong, but because it’s measuring the wrong game. When a user asks ChatGPT “what’s the best project management tool for remote teams,” your Google ranking doesn’t matter. What matters is whether you’re in the answer at all, and how you’re described when you are.

That’s the core challenge of AEO and GEO measurement. The old stack — organic sessions, CTR, keyword rankings — was built for a world of blue links. In a world where AI synthesizes the answer directly, those numbers tell you almost nothing about brand influence.

This playbook breaks down 10 KPIs across three measurement layers: Visibility, Quality, and Impact. Each one maps to a specific question your team should be able to answer every week.

Why Your Old SEO Metrics Break Down in AI Search

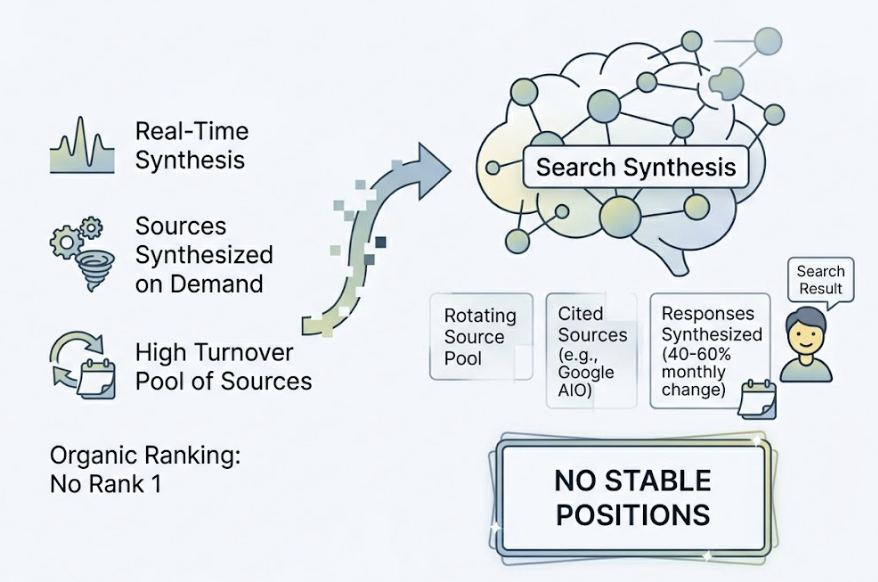

Traditional SEO worked because the output was consistent: a ranked list of links. You could measure position, click-through rate, and impressions, and the path from optimization effort to a measurable result was direct.

AI search doesn’t work that way. There are no stable “positions.” Responses are synthesized in real time, drawing from a rotating pool of sources. Research shows that 40-60% of cited sources in Google AI Overviews change every month. You can rank #1 organically and still be invisible to ChatGPT.

Gartner projects a 25% drop in traditional search volume by 2026 as users shift to AI assistants. That traffic doesn’t disappear. It moves to a channel with a completely different measurement logic.

The 3-Layer Measurement Framework

Before tracking individual KPIs, you need a mental model for what you’re measuring. AEO performance breaks down into three distinct layers:

| Layer | Core Question | KPIs |
|---|---|---|
| Layer 1: Visibility | Is your brand in the AI’s response at all? | KPIs 1-3 |
| Layer 2: Quality | How is your brand being described? | KPIs 4-6 |
| Layer 3: Impact | Is AI visibility driving real business results? | KPIs 7-10 |

Each layer answers a different question. Teams that skip straight to Impact without establishing Visibility baselines end up with attribution gaps they can’t explain.

Layer 1 — Visibility KPIs: Are You Even in the Room?

KPI 1: AI Mention Rate

The most fundamental AEO metric. It measures the percentage of target prompts where your brand appears in the AI’s response.

For B2B SaaS, a healthy baseline falls between 10% and 15% of relevant category queries. Category leaders typically exceed 30%. If you’re tracking 100 prompts and appearing in 12 of them, your mention rate is 12%: that’s your starting point, not your ceiling.

One distinction worth making: a “mention” means the AI knows you exist. A “citation” means your content actively grounded the response. Both matter, but for different reasons.
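Both rates reduce to simple proportions over the tracked prompt set. The sketch below assumes each audited answer has already been reduced to two flags, whether the brand is named and whether one of your own URLs is cited; the `PromptResult` record and its fields are hypothetical, not any tool's format.

```python
from dataclasses import dataclass


@dataclass
class PromptResult:
    """One audited AI answer (hypothetical record format)."""
    prompt: str
    mentioned: bool  # brand name appears anywhere in the answer text
    cited: bool      # one of the brand's own URLs is listed as a source


def mention_rate(results: list[PromptResult]) -> float:
    """Percentage of tracked prompts whose answer mentions the brand."""
    if not results:
        return 0.0
    return 100 * sum(r.mentioned for r in results) / len(results)


def citation_rate(results: list[PromptResult]) -> float:
    """Percentage of tracked prompts whose answer cites the brand's own content."""
    if not results:
        return 0.0
    return 100 * sum(r.cited for r in results) / len(results)


# Illustrative audit: 100 prompts, brand mentioned in 12 answers, cited in 5 of those.
audit = [PromptResult(f"prompt {i}", mentioned=i < 12, cited=i < 5) for i in range(100)]
print(mention_rate(audit), citation_rate(audit))
```

Keeping the two flags separate lets you see when the AI knows the brand (mention) but grounds its answer in someone else's content (no citation).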

KPI 2: Prompt Coverage

Your brand might appear for “CRM tools” but disappear completely on “CRM for startups” or “CRM for sales teams under 10 people.” That gap is the prompt coverage problem.

Build a list of 50-100 high-value prompts that map to your buyer journey — including “Why,” “How,” and “What” question formats. Track coverage across that full set. Coverage below 50% on commercial-intent prompts is a signal that your content strategy has blind spots.
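A minimal sketch of coverage tracking, assuming each prompt carries a hypothetical intent label (`"commercial"`, `"informational"`) and a flag for whether the brand appeared. It computes coverage per intent and lists the commercial prompts where the brand is missing, using the CRM examples above as made-up data.

```python
from typing import NamedTuple, Optional


class TrackedPrompt(NamedTuple):
    """One tracked prompt and its latest audit result (hypothetical record)."""
    text: str
    intent: str     # hypothetical label such as "commercial" or "informational"
    appeared: bool  # True if the brand appeared in the AI answer


def coverage(prompts: list[TrackedPrompt], intent: Optional[str] = None) -> float:
    """Percentage of prompts (optionally filtered to one intent) where the brand appears."""
    subset = [p for p in prompts if intent is None or p.intent == intent]
    if not subset:
        return 0.0
    return 100 * sum(p.appeared for p in subset) / len(subset)


def gaps(prompts: list[TrackedPrompt], intent: str) -> list[str]:
    """Prompts of one intent where the brand is missing: the blind spots to target."""
    return [p.text for p in prompts if p.intent == intent and not p.appeared]


prompts = [
    TrackedPrompt("best CRM tools", "commercial", True),
    TrackedPrompt("CRM for startups", "commercial", False),
    TrackedPrompt("CRM for sales teams under 10 people", "commercial", False),
    TrackedPrompt("what is a CRM", "informational", True),
]
commercial = coverage(prompts, "commercial")
if commercial < 50:  # the 50% commercial-intent threshold from the text
    print(f"Commercial coverage {commercial:.0f}%, gaps: {gaps(prompts, 'commercial')}")
```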

KPI 3: Platform Distribution

ChatGPT, Gemini, and Perplexity don’t behave the same way. They pull from different source types, apply different reranking logic, and serve different user demographics.

A brand that’s highly visible on Perplexity but invisible on ChatGPT has a platform concentration risk. Track mention rate separately by engine, not just as a blended average. The splits often reveal which platforms you’ve inadvertently optimized for and which you’ve ignored.
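To see the split rather than the blend, group audit results by engine. This sketch assumes results are logged as `(engine, mentioned)` pairs; the counts are invented for illustration.

```python
from collections import Counter


def mention_rate_by_engine(answers: list[tuple[str, bool]]) -> dict[str, float]:
    """Mention rate (%) per engine from (engine, brand_mentioned) audit pairs."""
    totals = Counter(engine for engine, _ in answers)
    hits = Counter(engine for engine, mentioned in answers if mentioned)
    return {engine: 100 * hits[engine] / totals[engine] for engine in totals}


def blended_rate(answers: list[tuple[str, bool]]) -> float:
    """The single blended average that per-engine tracking is meant to unpack."""
    return 100 * sum(mentioned for _, mentioned in answers) / len(answers)


# Illustrative audit: 10 prompts per engine, made-up mention counts.
answers = (
    [("Perplexity", i < 6) for i in range(10)]
    + [("ChatGPT", i < 1) for i in range(10)]
    + [("Gemini", i < 3) for i in range(10)]
)
by_engine = mention_rate_by_engine(answers)
spread = max(by_engine.values()) - min(by_engine.values())  # percentage points
print(by_engine, round(blended_rate(answers), 1), spread)
```

With these made-up numbers the blended rate is about 33%, while the per-engine rates range from 10% to 60%: exactly the kind of concentration a single average hides.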

Layer 2 — Quality KPIs: How Are You Being Described?

Visibility gets you in the room. Quality determines whether the AI’s description of you builds trust or quietly erodes it.

KPI 4: AI Sentiment Score

AI platforms synthesize responses from hundreds of sources, including Reddit threads, G2 reviews, and forum discussions. If the consensus on those platforms is negative, the AI will faithfully reproduce that sentiment.

Sentiment scoring uses NLP to classify AI-generated mentions as positive, neutral, or negative. A 0-100 scale works well in practice. A high mention rate with a low sentiment score is often worse than a low mention rate — you’re being seen, but the framing is working against you.
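The text doesn't specify how the three labels become a 0-100 number. One simple convention, assumed here, scores each classified mention as positive = 100, neutral = 50, negative = 0 and averages them; the classifier that produces the labels is out of scope.

```python
# Assumed mapping from classifier label to a 0-100 score.
SCORE = {"positive": 100, "neutral": 50, "negative": 0}


def sentiment_score(labels: list[str]) -> float:
    """Average 0-100 sentiment over classified mentions (50 = neutral)."""
    if not labels:
        raise ValueError("no classified mentions to score")
    return sum(SCORE[label] for label in labels) / len(labels)


# Illustrative week: 5 positive, 3 neutral, 2 negative mentions.
week = ["positive"] * 5 + ["neutral"] * 3 + ["negative"] * 2
print(sentiment_score(week))
```

Under this convention 50 is neutral, so a brand with a high mention rate and a score well below 50 is the case of being seen with framing that works against you.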

Watch for specific language patterns: being described as “expensive” or “complex” in AI answers doesn’t mean you’re invisible. It means you’re visible in the wrong way.
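A rough way to catch that pattern is to scan answer text for watch terms in sentences that also name the brand. The watch list, the brand name `Acme`, and the naive sentence split below are all illustrative assumptions; a production pipeline would more likely rely on the NLP classifier described above.

```python
import re
from collections import Counter

# Hypothetical watch list of framing you don't want attached to the brand.
WATCH_TERMS = ("expensive", "complex", "outdated", "hard to use")


def flag_framing(answers: list[str], brand: str,
                 terms: tuple[str, ...] = WATCH_TERMS) -> Counter:
    """Count watch terms that occur in sentences which also name the brand."""
    hits: Counter = Counter()
    for answer in answers:
        # Naive sentence split: break after ., ! or ? followed by whitespace.
        for sentence in re.split(r"(?<=[.!?])\s+", answer):
            lowered = sentence.lower()
            if brand.lower() not in lowered:
                continue
            for term in terms:
                if re.search(rf"\b{re.escape(term)}\b", lowered):
                    hits[term] += 1
    return hits


answers = [
    "Acme is powerful but expensive. Beta is cheaper.",
    "Many teams find Acme complex to set up.",
    "Beta is expensive.",  # not counted: the sentence doesn't name Acme
]
print(flag_framing(answers, "Acme"))
```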

KPI 5: Brand Position in AI Answers

Not all mentions are equal. Being the first recommendation in a ChatGPT response is fundamentally different from being fifth in a list.

Position tracking uses a weighted formula: position weight = 1 / rank. First position carries a weight of 1.00; second, 0.50; fifth, 0.20. This matters because the gap between the first and third recommendations in a high-intent AI response can translate to a 5x difference in conversion probability downstream.

Track your average weighted position across your core prompt set, and watch how it shifts week over week relative to competitors.
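The weighting is easy to compute directly. This sketch assumes each prompt's result is stored as the brand's 1-based rank, or `None` when the brand is absent, and averages only over prompts where the brand appears (an assumption; absence is already captured by mention rate).

```python
from typing import Optional


def position_weight(rank: int) -> float:
    """Weight for a 1-based position in an AI answer: 1 / rank."""
    if rank < 1:
        raise ValueError("rank must be 1 or greater")
    return 1 / rank


def average_weighted_position(ranks: list[Optional[int]]) -> float:
    """Mean position weight over prompts where the brand appears (None = absent)."""
    weights = [position_weight(r) for r in ranks if r is not None]
    return sum(weights) / len(weights) if weights else 0.0


# Illustrative week: first, second, absent, fifth, absent.
this_week = [1, 2, None, 5, None]
print(round(average_weighted_position(this_week), 3))
```

Running the same calculation on a competitor's ranks over the same prompt set gives the week-over-week comparison the text describes.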

KPI 6: Citation Source Coverage

AI platforms don’t cite your website because you asked nicely. They cite it because it appeared in the sources they trust most.

Perplexity pulls nearly 47% of its top citations from Reddit, and ChatGPT draws around 48% of its top citations from Wikipedia. If your brand has no meaningful presence on those third-party platforms, your domain competes at a significant structural disadvantage.

Citation source analysis maps which domains the AI is using to ground its responses about your category. If a competitor’s blog or a user’s product review is shaping what the AI says about the problem your brand solves, that’s a content gap you can close.
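A simple version of that mapping counts cited domains across your audited answers. The sketch assumes you have already collected the cited URLs from each answer; the URLs shown are placeholders, and stripping a leading `www.` is a simplifying choice.

```python
from collections import Counter
from urllib.parse import urlparse


def citation_domain_share(cited_urls: list[str]) -> list[tuple[str, float]]:
    """Share (%) of all citations per domain, most-cited domain first."""
    domains: Counter = Counter()
    for url in cited_urls:
        host = urlparse(url).netloc.lower()
        domains[host.removeprefix("www.")] += 1  # treat www.x.com and x.com as one
    total = sum(domains.values())
    return [(domain, 100 * n / total) for domain, n in domains.most_common()]


cited = [  # placeholder URLs from a hypothetical audit
    "https://www.reddit.com/r/sales/comments/abc",
    "https://reddit.com/r/crm/comments/def",
    "https://en.wikipedia.org/wiki/Customer_relationship_management",
    "https://www.g2.com/categories/crm",
    "https://example.com/blog/best-crm",  # e.g. a competitor's blog
]
for domain, share in citation_domain_share(cited):
    print(f"{domain}: {share:.0f}%")
```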

Layer 3 — Impact KPIs: Is It Actually Working?

This is where AEO measurement gets interesting. AI referral traffic behaves very differently from organic search traffic, and its returns can justify the investment in ways most marketing dashboards still don’t capture.

KPI 7: AI Search Volume Trend

AI search volume tracks how often users are querying AI platforms about your category over time. This isn’t your brand’s traffic — it’s the size and direction of the pool you’re fishing in.

Rising AI search volume for your core topics is a leading indicator of opportunity. Falling volume on topics you’ve invested heavily in is a signal to rebalance. Track the trend line, not just the snapshot.
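Tracking the trend line can be made concrete with an ordinary least-squares slope over equally spaced periods. This is a standard technique swapped in for illustration, not a method the text prescribes, and the volume series is hypothetical.

```python
def trend_slope(volumes: list[float]) -> float:
    """Least-squares slope of volume per period, for equally spaced periods."""
    n = len(volumes)
    if n < 2:
        raise ValueError("need at least two periods to estimate a trend")
    mean_x = (n - 1) / 2  # periods are indexed 0, 1, ..., n - 1
    mean_y = sum(volumes) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(volumes))
    var = sum((x - mean_x) ** 2 for x in range(n))
    return cov / var


monthly_volume = [1000, 1100, 1250, 1300, 1450, 1500]  # hypothetical monthly queries
print(round(trend_slope(monthly_volume), 1))  # change in queries per month
```

A positive slope on a core topic is the rising-volume signal; a negative slope on a topic you've invested heavily in is the prompt to rebalance.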

KPI 8: Share of Voice vs Competitors

AI Share of Voice (AI SoV) measures your brand’s proportion of the total “answer real estate” in your category. The weighted formula accounts for position, not just presence:

AI SoV = (Sum of Your Brand’s Position Weights / Sum of All Brands’ Combined Position Weights) × 100

This is the closest AEO equivalent to market share. A competitor holding 40% AI SoV while you hold 8% in a growing category is a quantifiable revenue risk, not an abstract concern. Track this monthly against your top three to five competitors.
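The weighted formula translates directly into code. This sketch assumes each audited answer has been reduced to the ordered list of brands it recommends, with each brand listed once per answer; the brand names are hypothetical.

```python
from collections import defaultdict


def ai_share_of_voice(answers: list[list[str]]) -> dict[str, float]:
    """AI SoV (%) per brand, weighting each appearance by 1 / rank within its answer."""
    weights: dict[str, float] = defaultdict(float)
    for ranked_brands in answers:
        for rank, brand in enumerate(ranked_brands, start=1):
            weights[brand] += 1 / rank
    total = sum(weights.values())
    if total == 0:
        return {}
    return {brand: 100 * w / total for brand, w in weights.items()}


answers = [
    ["Acme", "Beta", "Gamma"],  # Acme 1st, Beta 2nd, Gamma 3rd
    ["Beta", "Acme"],
    ["Beta"],
]
sov = ai_share_of_voice(answers)
print({brand: round(share, 1) for brand, share in sov.items()})
```

Because the weights are normalized by the total, the shares always sum to 100%, so one brand's gain shows up as another's loss.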

KPI 9: Conversion Visibility Rate (CVR)

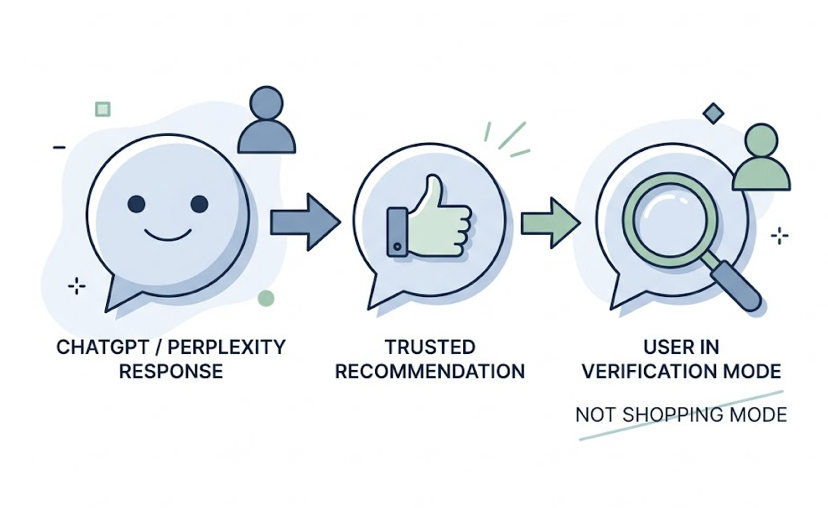

Here’s the data that justifies the entire AEO investment: AI referral traffic converts at 14.2% on average, compared to 2.8% for Google organic search. That’s a 5x conversion advantage.

In B2B SaaS, Claude referral traffic converts at up to 16.8%. AI-sourced visitors also show 67% higher lifetime value and convert 73% faster than traditional search visitors.

The mechanism is the “pre-qualified recommendation” effect. By the time a user follows a link from a ChatGPT or Perplexity response, they’ve already received a trusted recommendation. They’re in verification mode, not shopping mode.

CVR blends sentiment score, position weight, and prompt intent into a single estimate of how likely an AI mention is to drive a conversion-eligible visitor. It’s a composite signal, but it’s the most direct line between AI visibility work and revenue.
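The text does not publish a CVR formula, so the following is only one possible composite: a multiplicative index of sentiment (0-100, rescaled to 0-1), position weight (1 / rank), and a hypothetical intent multiplier. The multiplier values are placeholders, and the output is an uncalibrated index, not a conversion probability.

```python
# Hypothetical intent multipliers: how close each prompt type sits to a purchase.
INTENT_WEIGHT = {"transactional": 1.0, "commercial": 0.7, "informational": 0.3}


def mention_cvr_index(sentiment: float, rank: int, intent: str) -> float:
    """Uncalibrated 0-1 index: (sentiment / 100) x (1 / rank) x intent multiplier."""
    return (sentiment / 100) * (1 / rank) * INTENT_WEIGHT[intent]


def cvr_index(mentions: list[tuple[float, int, str]]) -> float:
    """Average index over (sentiment, rank, intent) mentions; 0 if there are none."""
    if not mentions:
        return 0.0
    return sum(mention_cvr_index(*m) for m in mentions) / len(mentions)


mentions = [
    (80, 1, "transactional"),  # well regarded, first, high intent
    (60, 2, "commercial"),
    (90, 3, "informational"),
]
print(round(cvr_index(mentions), 3))
```

A product was chosen so that a poor score on any one factor pulls the whole mention down; a weighted sum is a reasonable alternative if you'd rather let a strong position offset weak sentiment.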

KPI 10: Week-over-Week Visibility Delta

Absolute numbers are less useful than directional momentum. A brand at 12% AI mention rate trending up 3 points week-over-week is in a better position than a brand at 22% trending flat.

WoW delta is the operational heartbeat of AEO measurement. It tells you whether your content and optimization efforts are working, and it gives you a fast signal when something breaks — a competitor launches a major content push, a third-party source changes its framing, or a new AI platform update reshuffles citation priorities.

Track the delta for at least four of your core KPIs on a weekly cadence, and build a simple threshold alert: if any metric drops more than 5 points in a week, investigate before it compounds.
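A threshold alert like that needs only two weekly snapshots. The sketch assumes every tracked metric is already on a 0-100 scale (percentages or a 0-100 score) so that "points" mean the same thing across metrics; the metric names and values are illustrative.

```python
def wow_alerts(last_week: dict[str, float], this_week: dict[str, float],
               max_drop: float = 5.0) -> dict[str, float]:
    """Metrics whose week-over-week change is a drop of more than max_drop points."""
    alerts = {}
    for metric, current in this_week.items():
        if metric not in last_week:
            continue  # no baseline yet, so no delta to judge
        delta = current - last_week[metric]
        if delta < -max_drop:
            alerts[metric] = delta
    return alerts


last_week = {"mention_rate": 12.0, "sentiment": 68.0, "share_of_voice": 9.0, "prompt_coverage": 40.0}
this_week = {"mention_rate": 15.0, "sentiment": 61.0, "share_of_voice": 8.0, "prompt_coverage": 34.5}
print(wow_alerts(last_week, this_week))
```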

Putting It Together: A Practical AEO Dashboard

An AEO dashboard doesn’t need to be complex. It needs to answer two questions at a glance: where do we stand, and where are we headed?

Here’s a workable structure for most teams:

| Review Cadence | KPIs to Track |
|---|---|
| Weekly | AI Mention Rate (WoW delta), Brand Position, Sentiment Score, Visibility Delta |
| Monthly | AI Share of Voice, Prompt Coverage, Citation Source Coverage, AI Search Volume Trend, CVR |
| Quarterly | Platform Distribution, Full competitor benchmark, Attribution modeling |

The monthly cadence matters particularly for citation source analysis. Because 40-60% of cited sources in Google AI Overviews change every month, a monthly audit catches drift before it becomes a structural problem.

Manual audits of a 100-prompt set typically take 8-12 hours per month. At scale, platforms like Topify automate this across ChatGPT, Gemini, Perplexity, and other major engines, tracking the seven core metrics they report (visibility, sentiment, position, volume, mentions, intent, and CVR) without manual query runs. Topify's Basic plan starts at $99/month and covers 100 prompts with 9,000 AI answer analyses across engines.

The One Mistake Most Teams Make

Most teams starting AEO measurement make the same error: they treat their own website as the primary lever.

It isn’t.

AI engines don’t view your website as the authoritative source. They view it as one node in a larger ecosystem. Vendor product pages account for a small fraction of actual AI citations. The majority of source weight comes from Reddit threads, Wikipedia entries, industry publications, and review platforms like G2.

A team that invests 80% of its resources into on-site optimization is effectively controlling only a fraction of the citation surface. The rest — the part that actually determines what AI says about your brand — lives off-site.

The practical fix is a “Search Everywhere” mentality. Track which third-party domains the AI uses to ground responses in your category. Then build an active presence there — not just as a content creator, but as an entity with consistent, accurate representation across every platform an AI might reference.

There’s also a common technical mistake: blocking AI crawlers wholesale in robots.txt to keep content out of training data. OpenAI, for example, runs separate crawlers for training (GPTBot) and for search retrieval (OAI-SearchBot), so a blanket block also stops real-time retrieval engines from seeing your most recent updates, and the AI ends up describing your brand from outdated information. Allowing GPTBot and OAI-SearchBot in robots.txt costs you nothing and keeps your entity data current.
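To check what a given robots.txt actually permits, Python's standard-library `urllib.robotparser` can evaluate it per user agent. The sketch below compares a blanket block with a file that explicitly allows GPTBot and OAI-SearchBot; the domain and path are placeholders, and this only checks what the file says, not how any crawler behaves in practice.

```python
from urllib.robotparser import RobotFileParser

# A blanket block: every crawler, retrieval crawlers included, is shut out.
BLANKET_BLOCK = """\
User-agent: *
Disallow: /
"""

# Explicitly allows OpenAI's training crawler (GPTBot) and its search crawler
# (OAI-SearchBot); crawlers not named here have no rules, so they are allowed.
AI_FRIENDLY = """\
User-agent: GPTBot
Allow: /

User-agent: OAI-SearchBot
Allow: /
"""


def crawler_access(robots_txt: str, url: str, agents: list[str]) -> dict[str, bool]:
    """Report whether each user agent may fetch url under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


agents = ["GPTBot", "OAI-SearchBot"]
page = "https://example.com/pricing"  # placeholder URL
print(crawler_access(BLANKET_BLOCK, page, agents))
print(crawler_access(AI_FRIENDLY, page, agents))
```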

Conclusion

AEO measurement isn’t about replacing your SEO dashboard. It’s about adding a second instrument panel for a channel that operates on completely different logic.

The 10 KPIs in this playbook — organized across Visibility, Quality, and Impact — give you the foundation to track what’s actually moving in AI search, explain it to stakeholders, and connect the work to revenue. Start with the Layer 1 visibility metrics, build your prompt list, and establish baselines before trying to optimize. The brands that win in 2026 won’t be the ones that publish the most content. They’ll be the ones that know, with precision, what AI says about them right now.

FAQ

What’s the difference between AEO KPIs and traditional SEO metrics?

Traditional SEO metrics (rankings, CTR, organic sessions) measure performance in a list-based environment where clicks are the primary signal. AEO KPIs measure brand presence in a synthesized, zero-click environment where the AI answer itself is the output. There’s no impression data, no stable rank, and no direct click attribution. AEO instead tracks mention rate, sentiment, position weight, and citation sources.

How many prompts should I track to get meaningful AEO data?

Most teams start with 50 prompts and expand to 100 once they’ve validated their core query clusters. The key is covering all intent types: “What is X,” “Best X for [use case],” “How to do X,” and comparison queries. A 100-prompt set audited consistently over 90 days gives you enough variance data to distinguish signal from noise.
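To put a number on signal versus noise, a standard binomial margin of error (normal approximation) gives a rough sense of how much a mention rate can move by chance. This is a textbook approximation swapped in for illustration; it treats each prompt as an independent yes/no trial and ignores run-to-run variation in the AI's answers, so real uncertainty is likely larger.

```python
import math


def mention_rate_margin(rate: float, n_prompts: int, z: float = 1.96) -> float:
    """Approximate 95% margin of error for a mention rate (normal approximation).

    rate is a fraction (0.12 for 12%); the result is also a fraction.
    """
    return z * math.sqrt(rate * (1 - rate) / n_prompts)


for n in (50, 100):
    print(n, round(100 * mention_rate_margin(0.12, n), 1))  # margin in points
```

With a 12% mention rate, this gives roughly ±9 points on 50 prompts and roughly ±6 points on 100, one reason a larger, consistently audited set makes real movement easier to distinguish from noise.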

How often should I review these KPIs?

Four of the 10 KPIs (mention rate, sentiment, position, WoW delta) warrant weekly review because they move fast and can reflect platform-level changes quickly. Five more are better suited to monthly review, where trend lines are more meaningful than week-to-week variance, and platform distribution fits a quarterly benchmark.