Your competitor just published 50 community replies while your team drafted one.

That’s the efficiency gap AI reply generators were built to close. But here’s what most teams discover six weeks in: volume alone doesn’t move the needle. Their replies get ignored, downvoted, or flagged. Some accounts get banned. The tool isn’t the problem. The strategy is.

The brands winning with AI-generated replies in 2025 aren’t the ones generating the most. They’re the ones generating replies that get cited, upvoted, and eventually pulled by ChatGPT, Gemini, and Perplexity as trusted community signals.

That’s a different game entirely.

The Real Reason Most AI-Generated Replies Get Ignored

When you deploy an AI reply generator without a defined strategy, you’re producing what researchers now call “engagement filler,” not engagement catalysts.

Studies on AI-powered comment behavior show that automated replies generate a 23% increase in comment volume on average. But they fail to drive sustained user activity. The numbers look fine in a weekly report. The community goes nowhere.

The deeper issue is what happens at the psychological level. As of 2025, 59% of consumers say AI-generated content actively hurts brand trust. On community platforms, where the entire value exchange depends on authentic peer-to-peer interaction, that number carries real consequences.

There’s also a detection problem. Research shows 80% of users report they can regularly identify AI-generated accounts or suspicious bots on social media. When a reply lacks brand-specific voice, contextual awareness, and clear intent, it falls into what researchers call the “textual uncanny valley”: grammatically correct, but oddly polite, laced with filler phrases like “It’s important to remember” or “In conclusion.” That pattern triggers an immediate defensive response in community readers.

The fix isn’t finding a better AI tool. It’s fixing how you use the one you have.

What “AI Social Media Replies” Actually Means in 2025

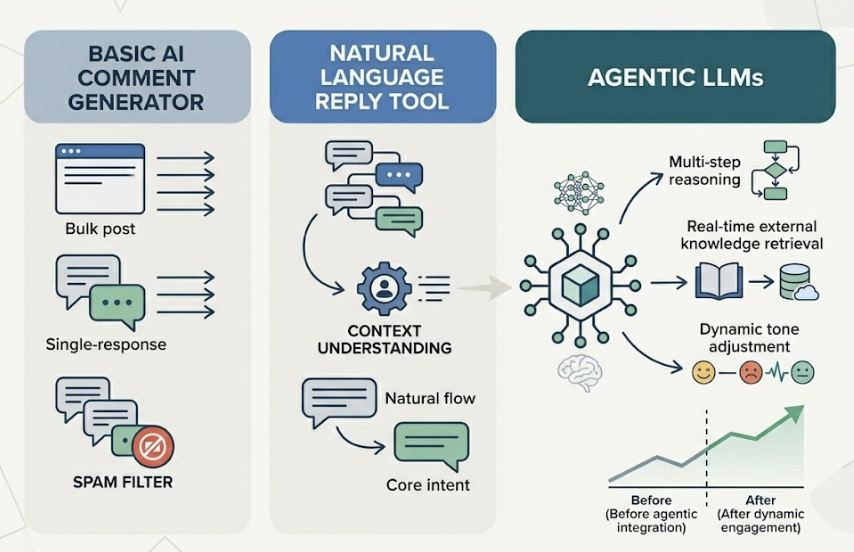

The terminology gets muddled fast. AI reply generator, AI comment generator, natural language reply tool: these often refer to overlapping but technically distinct capabilities.

A basic AI comment generator focuses on single-response output. It typically lacks the ability to extract context from deeper thread structures, which makes it easy for community spam filters to identify as bulk posting. A natural language reply tool introduces more advanced understanding, pulling the user’s core intent from messier inputs and generating responses that read more naturally. The most capable systems in 2025, what practitioners call agentic LLMs, go further still: multi-step reasoning, real-time retrieval from external knowledge bases, and dynamic tone adjustment based on live sentiment analysis.

What separates effective use from wasted effort isn’t which tier you use. It’s whether you’re running a “Train-Review-Post” workflow or a “Paste-Click-Publish” one.

The three-stage lifecycle that works:

Stage 1, Contextual Analysis: The system uses Retrieval-Augmented Generation (RAG) to scan the full thread, not just the post you’re replying to. It reads prior conversation history, community sentiment, and account context before generating anything.

Stage 2, Strategic Prompting: Brand voice parameters and platform-specific constraints are applied. Reddit’s norms aren’t Quora’s. Quora’s aren’t Instagram’s.

Stage 3, Human Calibration: A review checkpoint before anything goes live. This is the stage most teams skip. It’s also the stage that determines whether a reply earns upvotes or triggers a moderation flag.
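The three stages can be sketched in a few lines of Python. This is a minimal illustration, not any vendor’s implementation: `ReplyDraft`, `build_prompt`, and `publishable` are hypothetical names, the retrieval step is reduced to a list of comments you have already fetched, and the model call itself is left out.

```python
from dataclasses import dataclass


@dataclass
class ReplyDraft:
    """Hypothetical record for one drafted reply."""
    prompt: str
    text: str = ""
    approved: bool = False  # flipped only by a human reviewer


def build_prompt(thread_comments: list[str], platform: str, voice_rules: list[str]) -> str:
    """Stages 1 and 2: fold full-thread context and voice constraints into one prompt."""
    context = "\n".join(f"- {c}" for c in thread_comments)
    rules = "\n".join(f"- {r}" for r in voice_rules)
    return (
        f"Platform: {platform}\n"
        f"Thread so far:\n{context}\n"
        f"Voice rules:\n{rules}\n"
        "Write one reply that addresses the thread as a whole."
    )


def publishable(draft: ReplyDraft) -> bool:
    """Stage 3: nothing posts without text and an explicit human approval."""
    return bool(draft.text.strip()) and draft.approved
```

The design point is the `approved` flag: it defaults to `False`, so skipping the review step blocks posting instead of silently allowing it.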

How to Train AI to Match Your Brand Voice in Generated Replies

The most common complaint about AI-generated replies isn’t that they’re factually wrong. It’s that they all sound the same.

Brands with a documented voice strategy see 40% higher customer satisfaction and 33% higher engagement rates from AI-generated content. Research also links consistent brand presentation across channels to 23-33% revenue growth and a 67% improvement in customer lifetime value. The gap between teams with and without a defined voice framework is that measurable.

What works isn’t telling the AI to “sound professional” or “be friendly.” Those instructions produce generic output. What works is a systematic process researchers call “Linguistic DNA” mapping:

Step 1: Collect your gold standard. Pull 10-15 of the best human-written replies your team has published across different scenarios: technical debate, user frustration, product praise. These become your anchor dataset.

Step 2: Define structural parameters. Sentence length limits, punctuation preferences, vocabulary restrictions. “Keep replies under 20 words per sentence” produces more consistent output than “be concise.” Specify whether your tone is warm, authoritative, or dry. Quantify it where you can.

Step 3: Build an anti-persona. Define what your brand is not. “We never use corporate jargon. We never deflect with generic sympathy. We never end with ‘Let me know if you have questions.’” This is as important as defining what you are. Brands that treat voice guidelines as long-term strategic assets rather than one-off templates report AI output consistency scores of up to 90%.

Step 4: Add a real-time tone check. Tools like Acrolinx can automatically flag output that drifts from brand parameters before anything reaches a human reviewer. This turns the review step from a full editorial pass into a final quality gate.
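Steps 2 and 3 are concrete enough to check mechanically before a draft reaches a reviewer. The sketch below is one possible way to do that, assuming a 20-word sentence limit and a short banned-phrase list taken from the examples in this article. `VoiceRules` and `lint_reply` are hypothetical names, and the sentence splitter is deliberately naive.

```python
import re
from dataclasses import dataclass


@dataclass
class VoiceRules:
    """Hypothetical container for structural parameters and the anti-persona."""
    max_words_per_sentence: int = 20
    banned_phrases: tuple = (
        "let me know if you have questions",
        "it's important to remember",
        "in conclusion",
    )


def lint_reply(text: str, rules: VoiceRules) -> list[str]:
    """Return human-readable problems; an empty list means the draft passes."""
    problems = []
    # Normalize curly apostrophes so banned phrases match either style.
    normalized = text.replace("\u2019", "'").lower()
    for phrase in rules.banned_phrases:
        if phrase in normalized:
            problems.append(f"banned phrase: {phrase!r}")
    # Naive split: sentence-ending punctuation followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    for sentence in sentences:
        count = len(sentence.split())
        if count > rules.max_words_per_sentence:
            problems.append(
                f"sentence has {count} words (limit {rules.max_words_per_sentence})"
            )
    return problems
```

A check like this only catches structural drift. It does not judge whether a reply is warm, authoritative, or dry, which is why the human gate stays in place.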

Neuroscience research adds another dimension here. fMRI studies found that brand replies written in a conversational, human voice (what researchers label “Conversational Human Voice”) activate the prefrontal cortex 27% more than formal corporate tone. They also improve factual recall by 18%. The trust gap isn’t about AI origin. It’s about tone drift.

How to Use AI Reply Tools Without Violating Platform Guidelines

Reddit and Quora aren’t social media platforms in the conventional sense. They’re trust hubs. And their moderation systems in 2025 have become explicitly hostile to what communities call “AI slop”: low-effort, mass-produced content that adds no real value.

Reddit’s approach has moved from reactive moderation to proactive detection. CEO Steve Huffman has publicly discussed biometric verification experiments using Face ID and passkeys to confirm human authorship. Accounts using automation must display an “[App]” tag under Reddit’s Responsible Builder Policy. Violations risk API access revocation and permanent bans. High-value subreddits like r/devops and r/NoContract have added explicit rules against AI-generated content, enforced at the mod level.

Quora presents a different but equally significant risk. Approximately 10.9% of Quora answers are already flagged as AI-generated, a figure that has eroded platform-wide trust and pushed Quora to dramatically raise credibility thresholds for new accounts. Even well-written answers that are detected as bulk AI output get hidden or removed without warning.

The compliance framework that holds up:

The 95/5 rule: 95% of your replies should provide pure value: answering questions, sharing insights, solving problems. Only 5% should include any brand reference, and only when it’s directly relevant to the conversation.

Disclose when required: In communities that mandate disclosure, proactively noting “content assisted by AI, reviewed by a human” tends to earn respect rather than suspicion. 72% of users expect AI disclosure in content they interact with.

Never batch identically: Posting near-identical replies across different threads is the clearest automated-behavior signal a platform’s detection systems look for. Every reply must be customized to its specific thread context.
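Two of these rules lend themselves to a quick pre-flight check. The sketch below uses Python’s standard `difflib.SequenceMatcher` to flag near-identical drafts and computes the share of replies that mention a brand. The function names and the 0.85 similarity threshold are assumptions for illustration, not values any platform publishes.

```python
from difflib import SequenceMatcher


def is_near_duplicate(draft: str, earlier_replies: list[str], threshold: float = 0.85) -> bool:
    """True if the draft is too similar to any reply already posted."""
    return any(
        SequenceMatcher(None, draft.lower(), earlier.lower()).ratio() >= threshold
        for earlier in earlier_replies
    )


def brand_mention_share(replies: list[str], brand: str) -> float:
    """Fraction of replies that mention the brand name (case-insensitive)."""
    if not replies:
        return 0.0
    mentions = sum(brand.lower() in reply.lower() for reply in replies)
    return mentions / len(replies)
```

Under the 95/5 rule, `brand_mention_share` should stay at or below 0.05 across a posting window. A draft that trips `is_near_duplicate` goes back for rewriting against its own thread rather than out the door.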

The goal is replies a moderator would read and conclude: this person actually knows what they’re talking about.

AI Reply Generators for Reddit and Quora: What Instagram Logic Gets Wrong

Most teams approach Reddit and Quora with the same playbook they use for Instagram or X. That’s the first structural error.

On Instagram, a brief, warm reply with an emoji performs well. On Reddit, that exact reply gets downvoted to invisibility. The platform’s social currency is niche knowledge and candid honesty, not emotional warmth. Generic enthusiasm reads as promotional, regardless of whether the content is AI-generated or not.

The platform differences are structural:

| Platform | Core Social Currency | AI Reply Strategy |
|---|---|---|
| Reddit | Karma, upvotes, niche credibility | Conversational, technical, specific; include verifiable data or personal experience |
| Quora | Topical authority, evergreen relevance | Long-form (1,000+ words), structured with tables and direct-answer blocks |
| Instagram | Visual aesthetics, instant emotional resonance | Short, warm, quick; emoji-friendly |

On Reddit, AI must analyze the entire thread hierarchy, not just the original post. A reply that ignores a high-upvote comment three levels deep reads as disconnected and gets treated as such. The “upvote algorithm” rewards uncommon honesty: specific, verifiable claims, real failure examples, niche data points. Not praise.
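Reading the whole hierarchy amounts to walking a comment tree and surfacing the highest-scored comments at any depth. Here is a minimal sketch, assuming you already hold the thread as nested objects. `Comment`, `flatten`, and `top_comments` are hypothetical names, not part of any Reddit API.

```python
from dataclasses import dataclass, field


@dataclass
class Comment:
    """Hypothetical in-memory shape for one comment and its replies."""
    author: str
    body: str
    score: int
    replies: list["Comment"] = field(default_factory=list)


def flatten(comment: Comment, depth: int = 0):
    """Yield (depth, comment) for a comment and all of its descendants."""
    yield depth, comment
    for child in comment.replies:
        yield from flatten(child, depth + 1)


def top_comments(root: Comment, limit: int = 3) -> list[tuple[int, Comment]]:
    """Highest-scored comments anywhere in the tree, paired with their depth."""
    return sorted(flatten(root), key=lambda pair: pair[1].score, reverse=True)[:limit]
```

Feeding the output of `top_comments` into the drafting prompt is one way to make sure a buried but heavily upvoted comment is acknowledged rather than ignored.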

On Quora, structure matters differently. The most effective AI-generated answers open with a 40-60 word direct-answer block, then expand into detailed argument. That structure isn’t just good UX. It’s exactly what Google AI Overview and Perplexity crawlers are built to extract.
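The 40-60 word opening is easy to verify before posting. The sketch below treats the first paragraph (everything before the first blank line) as the direct-answer block. That definition and both function names are assumptions made for illustration.

```python
def opening_block_word_count(answer: str) -> int:
    """Count words in the first paragraph (text before the first blank line)."""
    first_paragraph = answer.strip().split("\n\n", 1)[0]
    return len(first_paragraph.split())


def has_direct_answer_block(answer: str, low: int = 40, high: int = 60) -> bool:
    """True if the opening paragraph falls inside the target word range."""
    return low <= opening_block_word_count(answer) <= high
```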

One data point worth internalizing: threads with 30 or more replies are significantly more likely to be cited by AI search engines. That means the goal of an AI-generated reply on Reddit isn’t just to contribute an answer. It’s to write something that invites follow-up conversation, builds thread depth, and grows into the kind of community signal that AI models trust.

The Review-Before-Post System That Scales Without Losing Quality

The teams that scale successfully with AI-generated replies don’t automate everything. They automate the right parts.

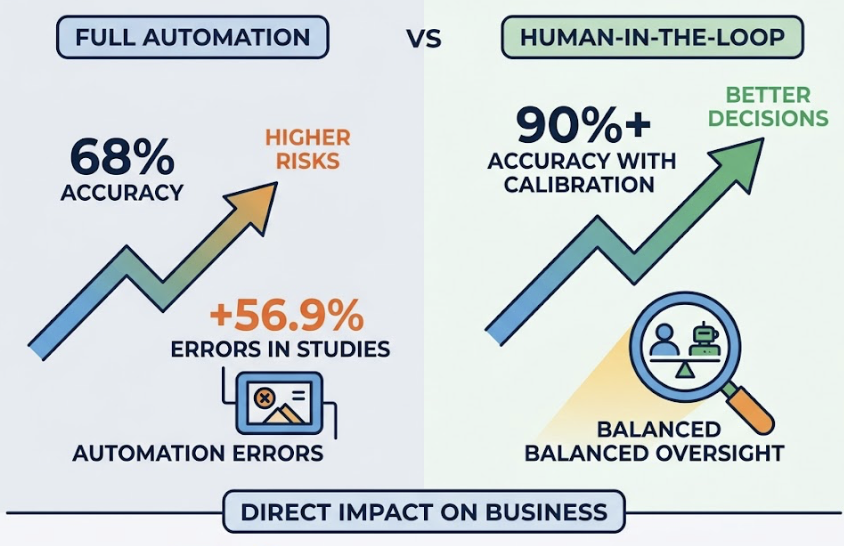

Full automation achieves around 68% accuracy in complex social prediction scenarios. Human-in-the-loop (HITL) systems reach 90%+ with calibration. In a drug prescribing study, over-reliance on automated systems alone increased errors by 56.9%. The principle transfers directly to community management: automation bias leads to tone-deaf responses that trigger PR incidents, not just downvotes.

The operational architecture that works at scale:

Batch generation with confidence thresholds: AI produces multiple response variants per thread. Replies meeting an 80% or higher confidence threshold for brand alignment go to a fast-approval queue. Replies below threshold, or those touching high-risk topics like finance or legal questions, route directly to a human specialist. This keeps the human review burden focused where it actually matters.

Anomaly detection: When a subreddit’s discussion volume spikes 10x or brand sentiment turns sharply negative, the system automatically pauses posting and triggers an alert. You don’t want AI continuing to publish while a crisis is unfolding.

Staggered distribution: Posts go out over hours, not in a single burst. This avoids triggering platform anti-bot detection based on posting velocity.

Active learning feedback loop: Human edits to AI drafts sync back into the model’s fine-tuning process. Research shows this reduces false positive flags by up to 30% over time, meaning the system gets more accurate the more it’s used.
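The routing, pause, and staggering rules above can be expressed as three small functions. This is a sketch under stated assumptions: the `confidence` score is taken as already computed by some upstream brand-alignment check, the 45-minute gap is an arbitrary example of spreading posts over hours, and the pause check covers only the volume spike, not sentiment. All names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

HIGH_RISK_TOPICS = {"finance", "legal"}  # the two examples named in the text


@dataclass
class Draft:
    thread_id: str
    text: str
    confidence: float  # assumed brand-alignment score in [0, 1]
    topic: str


def route(draft: Draft, threshold: float = 0.80) -> str:
    """Pick a review queue; both queues still end with a human decision."""
    if draft.topic in HIGH_RISK_TOPICS or draft.confidence < threshold:
        return "specialist"
    return "fast_approval"


def should_pause(current_volume: int, baseline_volume: int, spike_factor: float = 10.0) -> bool:
    """Pause posting when discussion volume reaches spike_factor times the baseline."""
    return baseline_volume > 0 and current_volume >= spike_factor * baseline_volume


def stagger(start: datetime, count: int, gap_minutes: int = 45) -> list[datetime]:
    """Spread `count` posts at a fixed gap instead of a single burst."""
    return [start + timedelta(minutes=gap_minutes * i) for i in range(count)]
```

Note that the topic check runs before the confidence check matters: a high-confidence draft on a finance question still goes to a specialist.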

Quantified: this hybrid approach cuts average reply cycle time from 4.2 hours to 47 minutes, an 81% reduction, while improving lead quality by 33% compared to pure automation or pure manual handling.

That’s the architecture. Not AI instead of humans. AI and humans, each doing what they’re better at.

AI Reply Generation as a Brand Visibility Signal for AI Search

Here’s the part most teams haven’t thought through yet.

Your replies on Reddit and Quora don’t just reach the people in that thread. They get crawled by AI models. When a user asks ChatGPT which CRM to use for a small team, and your brand appears in the answer, there’s a reasonable chance it’s because someone posted a genuinely helpful reply in an r/smallbusiness thread months ago.

The citation data is striking. An analysis of 150,000 AI citations found Reddit accounts for 40.1% of all citations across large AI models, ahead of Wikipedia at 26.3% and YouTube at 23.5%. Within ChatGPT responses specifically, Reddit ranks as the second most-cited source, and 99% of those citations point to specific threads, not brand homepages. Perplexity’s Reddit citation rate reaches 46.7% in certain industries.

The conversion math makes this even more compelling. AI-driven traffic currently represents around 1% of total referral volume, but converts at 14-16%, compared to 2.8% for traditional search. That’s roughly a 5x to 6x conversion rate advantage. For brands still treating community replies as a customer service function, this reframe matters. Every well-placed, human-approved AI reply is a potential citation. Citations drive AI visibility. AI visibility drives the kind of conversion that paid media can’t replicate.
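The conversion comparison reduces to a simple ratio, worked through here with the rates quoted in this article. The function name is illustrative only.

```python
def conversion_advantage(ai_rate: float, search_rate: float) -> float:
    """How many times higher the AI-referral conversion rate is."""
    return ai_rate / search_rate


low = conversion_advantage(0.14, 0.028)   # bottom of the 14-16% range
high = conversion_advantage(0.16, 0.028)  # top of the 14-16% range
print(f"{low:.1f}x to {high:.1f}x")  # prints "5.0x to 5.7x"
```

The ratio says nothing about volume: at around 1% of referral traffic, the absolute number of conversions from AI referrals is still small, so the advantage is in quality per visit.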

Brands with genuine Reddit activity are 3x more likely to be cited by AI models than brands that rely solely on their own website for SEO. LLMs treat community discussion as evidence of real user experience. Marketing copy is treated as brand rhetoric.

Topify connects AI reply generation directly to this GEO layer. Its Source Analysis feature tracks which specific Reddit and Quora threads are being cited by ChatGPT, Gemini, Perplexity, and other AI platforms. That tells you exactly which conversations are worth targeting with high-quality AI-generated replies: not the ones with the most engagement, but the ones AI is already pulling from.

Topify’s managed service also includes Reddit Visibility Posts, a structured program for building community signals in the highest-value threads for your brand. The system identifies where AI engines are looking, then places human-reviewed content there systematically. The analytics platform starts at $99/month; the full managed service with content distribution and Reddit post management starts at $3,999/month for teams that want end-to-end execution.

The brand-to-AI-citation correlation research puts a number on this: the correlation between organic Reddit discussion volume and AI citation frequency is 0.334, a moderate positive relationship rather than a guarantee. Substantive replies placed in the right threads tend to raise the odds that AI recommends your brand the next time a relevant question gets asked.

Conclusion

The question isn’t whether to use an AI reply generator. It’s whether you’re using one with a strategy.

The teams generating real results in 2025 share three habits. They’ve trained their AI on specific brand voice parameters, not generic prompts. They run every reply through a human checkpoint before it posts. And they treat Reddit and Quora as GEO assets, not just community platforms.

Volume without those three things produces AI slop. Volume with them produces community authority, AI citations, and brand visibility that compounds over time.

The next step, if you’re already generating replies at scale, is measuring where they land in AI search. That’s where the return becomes visible. And that’s where Source Analysis gives you data traditional analytics can’t.

FAQ

What are the best AI tools for generating social media replies?

In 2025, leading teams don’t rely on a single tool. The most effective stack combines a high-capability LLM (like Claude 4.5 or Gemini 3 Pro) for generation, a tone governance layer like Acrolinx for brand consistency, and a GEO tracking platform like Topify for measuring how community replies translate into AI citations. Each layer solves a different problem.

How do I generate authentic replies with AI?

Authenticity comes from semantic alignment, not from the tool itself. Collect 10-15 gold-standard human-written examples from your team, define structural parameters (sentence length, vocabulary, punctuation), build an anti-persona that defines what your brand never sounds like, and run outputs through a human review before posting. That combination closes most of the “machine-feel” gap. Brands that maintain voice guidelines as long-term assets also report AI output consistency scores of up to 90%.

How do I use AI to respond to customer comments at scale without losing quality?

Implement a tiered confidence system. High-confidence, routine replies go through a fast human approval queue. Low-confidence or emotionally complex comments route to a human specialist. This hybrid approach reduces average reply cycle time by 81% while improving lead quality by 33% compared to pure automation. The key is defining your confidence thresholds before you start, not after something goes wrong.

How do AI-generated replies compare to manually written responses?

Data shows no significant trust difference when the tone is genuinely conversational. The remaining gap is in “control mutuality”: users feel more heard when a human was clearly involved in the response. That’s the argument for human-in-the-loop review, not for abandoning AI generation. Mixed-model approaches combining AI speed with human refinement outperform both pure automation and fully manual workflows on engagement and conversion metrics.

What are the best practices for using AI reply generators on Reddit and Quora?

Follow the 95/5 rule: 95% pure value, 5% natural brand reference. Customize every reply to its specific thread context. Never post identical content across threads. Disclose AI involvement in communities that require it. On Reddit, include specific, verifiable data points rather than generic encouragement. On Quora, open with a direct 40-60 word answer block before expanding into detail. That structure earns upvotes and gets extracted by AI crawlers.

How do I know if my community replies are influencing AI search results?

Standard analytics won’t show you this. You need a tool that tracks AI citations at the source level, identifying which specific threads are being referenced by ChatGPT, Gemini, or Perplexity when users ask questions relevant to your brand. Topify’s Source Analysis was built specifically for this use case, and it connects directly to the Reddit Visibility Posts service so you can act on what you find.