Your Google keyword strategy is probably useless in AI search.

That’s not a shot at your SEO team. It’s a structural problem. The logic that makes a keyword rank on Google (backlinks, metadata, keyword density) has far less bearing on whether ChatGPT, Perplexity, or Gemini recommends your brand. These platforms don’t just return a ranked list of pages. They synthesize answers. And the inputs they respond to aren’t keywords. They’re prompts.

If you’re still running AI keyword research the same way you run traditional keyword research, you’re optimizing for a search engine that your audience is quietly leaving.

Google Keywords Don’t Transfer to AI Search. Here’s the Data.

The average Google search query is 4 to 5 words. The average prompt entered into ChatGPT is 23 words, roughly five times longer, reflecting full sentences, multi-part conditions, and personal context. Even in Google’s AI Mode, queries now average 7.22 words, and any query over eight words has a 57% probability of triggering an AI Overview rather than a plain list of results.

The implication is structural. Users aren’t asking AI assistants “best CRM software.” They’re asking “what’s the best CRM for a 12-person sales team that needs Salesforce integrations without the Salesforce price tag?” A keyword-stuffed landing page built for the former cannot satisfy the latter.

What makes this harder: roughly 70% of prompts entered into AI assistants are unique or have no close counterpart in traditional search data. That means historical keyword volume, the core input of every traditional research workflow, is an unreliable predictor of AI search demand.

What “Keywords” Actually Mean in AI Search

In a generative search environment, “keyword” is the wrong mental model.

The correct unit is a prompt pattern: a structural shape that captures what a user is trying to accomplish, not just the words they typed. AI systems use embedding models to convert language into numerical vectors that represent meaning and context. They’re not matching strings. They’re matching intent.
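To make “matching intent” concrete: embedding-based retrieval commonly compares a prompt vector with a content vector using cosine similarity. The sketch below assumes tiny hand-written 3-dimensional vectors as stand-ins for real embedding-model output (real embeddings come from a model and have hundreds or thousands of dimensions); it is an illustration of the comparison, not any platform’s actual retrieval code.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical hand-made "embeddings" (NOT produced by a real model).
prompt = [0.9, 0.1, 0.4]           # "best CRM for a small sales team"
chunk_on_topic = [0.8, 0.2, 0.5]   # paragraph comparing CRMs for small teams
chunk_off_topic = [0.1, 0.9, 0.0]  # paragraph about office furniture

print(round(cosine_similarity(prompt, chunk_on_topic), 3))
print(round(cosine_similarity(prompt, chunk_off_topic), 3))
```

The on-topic chunk scores higher even though it shares no exact wording with the prompt; in a real system the vectors, not the strings, carry the meaning.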

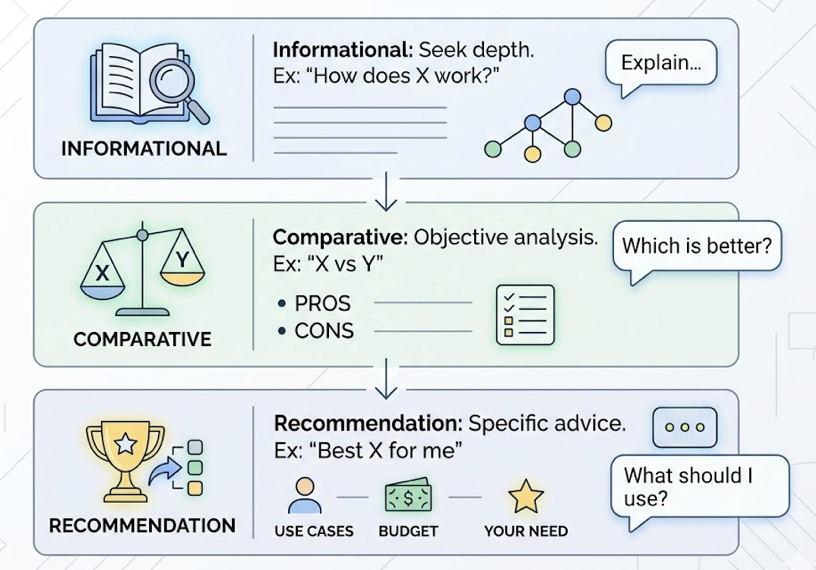

Three prompt pattern categories dominate AI search behavior. Informational prompts (“how does X work,” “what’s the difference between X and Y”) require deep, structured explanations. Comparative prompts (“X vs Y for a specific use case”) require objective trade-off analysis. Recommendation prompts (“recommend the best X for my situation”) require use-case authority and clear brand positioning.

Here’s the thing: real prompts often blend these categories. A query like “best project management tools for remote engineering teams” combines informational, comparative, and recommendation intent in a single prompt. Content that satisfies only one dimension often gets bypassed entirely.
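One way to respect that blending when you sort prompts is to tag each prompt with every intent it matches instead of forcing a single label. This is a minimal rule-based sketch; the cue patterns are hypothetical examples, and a real taxonomy would be larger and tuned on your own prompt data.

```python
import re

# Hypothetical cue patterns per intent category (illustrative only).
INTENT_CUES = {
    "informational": [r"\bhow (?:does|do|to)\b", r"\bwhat is\b", r"\bwhat's the difference\b"],
    "comparative": [r"\bvs\.?(?=\s)", r"\bversus\b", r"\bdifference between\b", r"\bcompared (?:to|with)\b"],
    "recommendation": [r"\bbest\b", r"\brecommend\b", r"\bwhich should i\b"],
}


def tag_intents(prompt: str) -> list[str]:
    """Return every intent whose cues appear in the prompt, not just the first."""
    # Lowercase and normalize curly apostrophes so "what’s" matches "what's".
    text = prompt.lower().replace("\u2019", "'")
    return sorted(
        intent
        for intent, patterns in INTENT_CUES.items()
        if any(re.search(pattern, text) for pattern in patterns)
    )


print(tag_intents("What's the difference between Asana and Monday, and which should I pick?"))
```

A prompt that comes back with two or three labels is a signal that the matching content has to answer on all of those dimensions at once.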

This is the foundation of GEO keyword strategy. You’re not finding words. You’re mapping the shapes of questions.

5 Ways to Discover High-Value Prompts in AI Search

Traditional keyword research tools have a role here, but a limited one. Ahrefs and SEMrush can surface intent signals and long-tail query data. They can’t tell you what prompts people actually type into Perplexity at 11pm when they’re researching your category. For that, you need a different workflow.

Step 1: Reverse-engineer from user scenarios. Don’t start with keywords. Start with the problems your product solves and the conversational language your customers use to describe them. Mine support tickets, sales call transcripts (Gong or Chorus), Reddit threads, and Quora discussions for natural phrasing. The “how do I” and “what’s the best way to” patterns you find there are the seeds of your AI prompt map.
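Mining that raw text can start very simply. The sketch below pulls out fragments that begin with the two seed patterns named in Step 1 and counts repeats; the ticket strings are hypothetical, and exporting text from Gong, Chorus, Reddit, or Quora is assumed to happen beforehand (no vendor API is used here).

```python
import re
from collections import Counter

# Seed patterns from Step 1; extend with your own customers' phrasing.
SEED_PATTERNS = [
    re.compile(r"\bhow do i\b[^.?!]*[.?!]?", re.IGNORECASE),
    re.compile(r"\bwhat'?s the best way to\b[^.?!]*[.?!]?", re.IGNORECASE),
]


def extract_seed_phrases(texts: list[str]) -> Counter:
    """Count question-like fragments (up to the next . ? or !) matching a seed pattern."""
    found: Counter = Counter()
    for text in texts:
        text = text.replace("\u2019", "'")  # normalize curly apostrophes
        for pattern in SEED_PATTERNS:
            for match in pattern.finditer(text):
                found[match.group(0).strip().lower()] += 1
    return found


# Hypothetical support-ticket snippets.
tickets = [
    "Hi team. How do I sync deals with Salesforce?",
    "how do I sync deals with Salesforce? Thanks!",
    "What\u2019s the best way to import contacts from a CSV?",
]
for phrase, count in extract_seed_phrases(tickets).most_common():
    print(count, phrase)
```

Phrases that recur across tickets are natural first candidates for the prompt map.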

Step 2: Map competitor citations across platforms. In AI search, your competitors aren’t just the brands ranking above you on Google. They’re the brands ChatGPT chooses to recommend. Run a set of 20-30 prompts across ChatGPT, Gemini, and Perplexity monthly and record who gets cited. Brands with higher mention rates on high-authority domains consistently receive more AI citations. The result is a concrete measure of your competitive visibility gap.
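Once the audit results are recorded, a per-platform mention rate falls out of a few lines of code. This sketch assumes you log each run as a hypothetical `(platform, prompt, brands_cited)` tuple by hand; brand names are placeholders, and a prompt run more than once on the same platform counts once.

```python
from collections import defaultdict


def mention_rates(records):
    """records: iterable of (platform, prompt, set_of_brands_cited).

    Returns {platform: {brand: share of that platform's prompts citing brand}}.
    """
    prompts_per_platform = defaultdict(set)
    hits = defaultdict(lambda: defaultdict(set))
    for platform, prompt, brands in records:
        prompts_per_platform[platform].add(prompt)
        for brand in brands:
            hits[platform][brand].add(prompt)
    return {
        platform: {
            brand: len(prompts) / len(prompts_per_platform[platform])
            for brand, prompts in by_brand.items()
        }
        for platform, by_brand in hits.items()
    }


# Hypothetical manual audit log.
audit = [
    ("ChatGPT", "best CRM for small teams", {"BrandA", "BrandB"}),
    ("ChatGPT", "CRM with Salesforce integration", {"BrandB"}),
    ("Perplexity", "best CRM for small teams", {"BrandA"}),
    ("Perplexity", "CRM with Salesforce integration", set()),
]
print(mention_rates(audit))
```

Comparing your own rate with a competitor’s on each platform shows where the gap is widest.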

Step 3: Use AI platforms as research tools. Ask ChatGPT directly: “What are 15 questions someone might ask when researching [your category]?” This can surface what some practitioners call “dark queries”: high-intent prompts that haven’t yet been saturated by competitor content. These are often the highest-ROI targets for GEO content production.

Step 4: Analyze citation source patterns. Each AI platform has citation preferences that reflect its architecture. ChatGPT cites Wikipedia for 47.9% of its top responses. Perplexity leans on Reddit for 46.7% of community-validated claims. Google’s AI Overviews favor YouTube at 23.3%. Knowing which domains an AI trusts most for your category tells you exactly where to build authority. Content distribution strategy follows from citation analysis, not the other way around.
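Citation analysis for your own category can start from the source links each platform shows with its answers. This sketch assumes you have already collected those URLs into a list (the URLs below are hypothetical) and computes each domain’s share of citations with Python’s standard `urllib.parse.urlparse`.

```python
from collections import Counter
from urllib.parse import urlparse


def domain_shares(cited_urls: list[str]) -> list[tuple[str, float]]:
    """Share of citations per domain, most-cited first."""
    domains = []
    for url in cited_urls:
        host = urlparse(url).hostname or ""
        if host.startswith("www."):
            host = host[len("www."):]  # treat www.example.com as example.com
        if host:
            domains.append(host)
    counts = Counter(domains)
    total = sum(counts.values())
    if not total:
        return []
    return [(domain, n / total) for domain, n in counts.most_common()]


# Hypothetical citations collected from one platform for one category.
urls = [
    "https://en.wikipedia.org/wiki/Customer_relationship_management",
    "https://www.reddit.com/r/sales/comments/example",
    "https://en.wikipedia.org/wiki/Salesforce",
    "https://www.g2.com/categories/crm",
]
print(domain_shares(urls))
```

Running this separately per platform shows which domains each one leans on for your category.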

Step 5: Scale with purpose-built tracking. Manual prompt testing across three platforms is unsustainable past the research phase. Topify’s High-Value Prompt Discovery automates this, continuously surfacing new high-volume prompts in your category as AI recommendation patterns shift. It’s the difference between a monthly audit and a live signal.

How AI Platforms Rank Keywords Differently From Google

Google evaluates pages. AI platforms evaluate chunks.

When a generative engine synthesizes a response, it doesn’t score your page on domain authority or backlinks the way Google does. It extracts the most “quotable” paragraph-level sections from across the web and assembles them into an answer. Content that is informationally dense at the paragraph level outperforms content that reads well for humans but buries its key claims in narrative.

Three factors drive AI ranking decisions. Semantic similarity measures conceptual distance between a prompt and a content chunk. Informational density rewards content that delivers maximum value per sentence. Cross-source corroboration is the most important: if multiple high-authority domains agree on a brand recommendation, AI systems are significantly more likely to include it.

That third factor changes the game. It means a brand can rank number one on Google for a keyword while remaining invisible in ChatGPT for the corresponding prompt: a single well-optimized page can win the Google ranking, but if no other authoritative sources corroborate the brand, and the page was built for human engagement rather than machine extraction, the AI has little reason to cite it. Princeton research found that adding verifiable statistics to content can increase AI citation rates by up to 40%.

AI-driven keyword analysis, then, isn’t about finding the words. It’s about building the conditions for corroboration.

Keyword Research for ChatGPT, Gemini, and Perplexity: Not the Same Problem

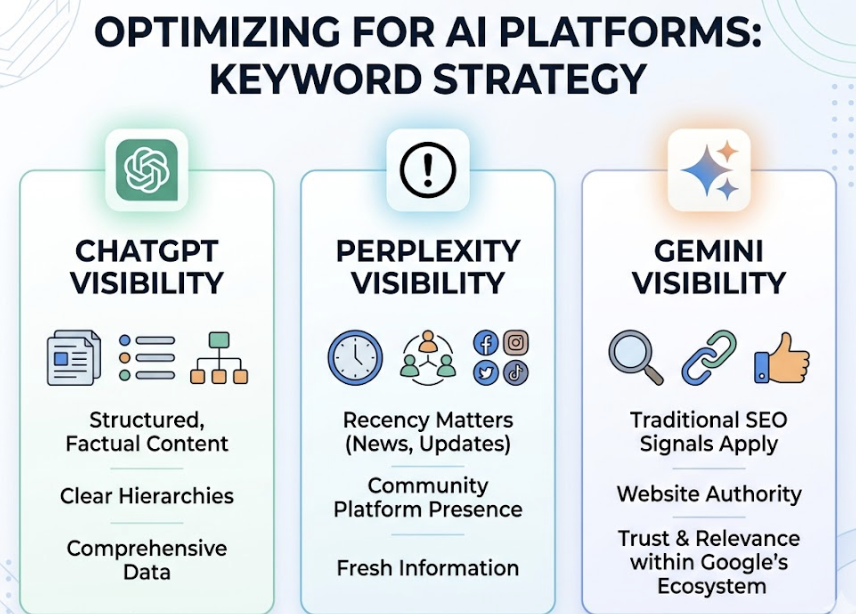

Only 11% of domains cited by ChatGPT and Perplexity overlap for the same query. That single data point makes the case against a one-size-fits-all approach better than any framework can.
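If you want to check overlap for your own prompts, Jaccard similarity (shared domains divided by all distinct domains) is one simple measure. It is an assumption here that this matches how the 11% figure was computed; the domain sets below are hypothetical.

```python
def jaccard_overlap(a: set[str], b: set[str]) -> float:
    """|A ∩ B| / |A ∪ B|; returns 0.0 when both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


# Hypothetical domains cited for the same query on two platforms.
chatgpt_domains = {"wikipedia.org", "forbes.com", "g2.com"}
perplexity_domains = {"reddit.com", "g2.com", "youtube.com"}
print(jaccard_overlap(chatgpt_domains, perplexity_domains))
```

Averaging this score over your whole prompt set gives a rough sense of how separately each platform needs to be treated.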

| | ChatGPT | Perplexity | Gemini |
|---|---|---|---|
| Query type bias | Encyclopedic, factual, structured | Real-time, research-heavy, community-sourced | Transactional, local, multimodal |
| Top citation source | Wikipedia (47.9%) | Reddit (46.7%) | YouTube (23.3%) |
| Citation rate | 62% of claims cited | 78% of claims cited | High, aligned with Google top 100 |
| Content format that wins | H1-H2-H3 hierarchy, 120-180 word sections | Recency signals, comparison tables, forum presence | E-E-A-T, entity authority, Google ecosystem |
| Optimization timeline | 2-4 weeks | 2-4 weeks | 4-8 weeks |
| Avg. session quality | 8.1 min on-site | 9.0 min on-site | High conversion, zero-click bias |

In practice, keyword research for ChatGPT visibility is a different problem from optimizing for Perplexity. For ChatGPT, you need structured, factual content with clear hierarchies. For Perplexity, recency and presence on community platforms matter more. For Gemini, traditional SEO signals still carry weight because it operates within Google’s ecosystem.

Most GEO strategies fail because they treat these three platforms as one channel.

What a GEO Keyword Strategy Actually Looks Like in Practice

A mature GEO keyword strategy doesn’t produce a keyword list. It produces a prompt map: a hierarchical structure of the questions, comparisons, and recommendation requests users make throughout their research process.

For a single category like “project management software,” a prompt map might include 80-100 variants segmented by intent stage: informational (“how do project management tools handle dependencies?”), comparative (“Asana vs Monday for a marketing team”), and evaluative (“is [Brand X] worth the price increase in 2025?”). Each node in the map becomes a content brief.
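A prompt map is, at its simplest, a tree: category, then intent stage, then prompt variants. The sketch below models that with hypothetical `PromptNode` and `PromptMap` classes and turns each node into a one-line brief stub; the brief format is an illustrative assumption, not a prescribed template.

```python
from dataclasses import dataclass, field

INTENT_STAGES = ("informational", "comparative", "evaluative")


@dataclass
class PromptNode:
    """One prompt variant in the map; each node becomes a content brief."""
    prompt: str
    intent: str


@dataclass
class PromptMap:
    category: str
    nodes: list[PromptNode] = field(default_factory=list)

    def add(self, prompt: str, intent: str) -> None:
        if intent not in INTENT_STAGES:
            raise ValueError(f"unknown intent stage: {intent}")
        self.nodes.append(PromptNode(prompt, intent))

    def by_intent(self) -> dict[str, list[str]]:
        """Group prompts under their intent stage (first-seen order)."""
        grouped: dict[str, list[str]] = {}
        for node in self.nodes:
            grouped.setdefault(node.intent, []).append(node.prompt)
        return grouped

    def briefs(self) -> list[str]:
        """Hypothetical one-line brief stub per node."""
        return [f"[{self.category} / {n.intent}] Answer directly: {n.prompt}" for n in self.nodes]


pm = PromptMap("project management software")
pm.add("how do project management tools handle dependencies?", "informational")
pm.add("Asana vs Monday for a marketing team", "comparative")
pm.add("is [Brand X] worth the price increase in 2025?", "evaluative")
for brief in pm.briefs():
    print(brief)
```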

Content produced from GEO keyword research must prioritize machine extractability. That means leading each section with a 40-60 word direct answer, using structured data and tables, and ensuring every key claim is a “quotable chunk” rather than buried in paragraph five of a 3,000-word narrative.
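The 40-60 word rule is easy to check automatically during editing. This minimal sketch assumes each section’s body text is passed in without its heading and that paragraphs are separated by blank lines; word count is only a proxy, since it cannot tell whether the opening paragraph actually answers the question.

```python
def lead_answer_word_count(section_text: str) -> int:
    """Word count of the first non-empty paragraph (paragraphs split on blank lines)."""
    for paragraph in section_text.split("\n\n"):
        if paragraph.strip():
            return len(paragraph.split())
    return 0


def leads_with_direct_answer(section_text: str, low: int = 40, high: int = 60) -> bool:
    """True if the opening paragraph falls within the target word range."""
    return low <= lead_answer_word_count(section_text) <= high


draft = "Too short to stand alone.\n\nSupporting detail follows here."
print(lead_answer_word_count(draft), leads_with_direct_answer(draft))
```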

Traditional SEO tools don’t support this workflow. Topify’s AI Volume Analytics uses real AI search behavior to estimate prompt volume for specific topics, while its Competitor Monitoring tracks head-to-head citation rates across ChatGPT, Gemini, and Perplexity in a single dashboard. The workflow goes from prompt discovery to content production to performance measurement without switching tools.

That’s what makes keyword research for AI platforms structurally different from traditional SEO. The inputs, the content format, and the measurement layer are all different.

How to Track Keyword Performance in AI Search Results

AI keyword research is not a one-time project. Prompt preferences shift as models update, as competitor content accumulates citations, and as new platforms emerge.

Tracking performance in AI search requires metrics that traditional analytics can’t capture. In AI Mode environments, zero-click search has reached up to 93%, meaning the goal is no longer to drive clicks. It’s to become the cited authority. Users referred from AI platforms may be fewer in raw volume, but they spend 50% more time on-site and convert at rates up to 4.4x higher than average search traffic.

The core metrics to track:

Visibility: How often does your brand appear in AI responses for your target prompt set? This is your share of voice in the synthesis layer.

Position: Being cited first in a ChatGPT answer carries significantly more weight than appearing as a seventh mention. Topify’s Position Tracking measures relative placement across responses.

Sentiment: How does the AI characterize your brand? “Affordable and reliable” vs “limited but functional” vs “prone to integration issues” are three very different brand narratives even if visibility is identical.
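The first two metrics can be computed from a simple audit log. This sketch assumes a hypothetical format mapping each prompt to the brands an AI response mentioned, in order of appearance; it is an illustration, not how Topify or any other tool calculates them. Sentiment is left out because it needs human review or a separate classifier.

```python
def visibility_and_position(responses: dict[str, list[str]], brand: str):
    """responses: {prompt: [brands in order of mention]}.

    Visibility = share of prompts whose response mentions `brand`.
    Avg. position = mean 1-based mention rank across responses that mention it
    (None if the brand never appears).
    """
    ranks = [brands.index(brand) + 1 for brands in responses.values() if brand in brands]
    visibility = len(ranks) / len(responses) if responses else 0.0
    avg_position = sum(ranks) / len(ranks) if ranks else None
    return visibility, avg_position


# Hypothetical audit: brand mentions per prompt, in answer order.
responses = {
    "best CRM for small teams": ["BrandA", "BrandB"],
    "CRM with Salesforce integration": ["BrandB"],
    "cheapest CRM for startups": ["BrandC", "BrandD", "BrandA"],
    "CRM for real estate agents": [],
}
print(visibility_and_position(responses, "BrandA"))
```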

A practical monthly workflow: audit 20-50 core prompts across platforms, analyze which competitor content is winning citations you should own, and use that gap analysis to refine your content structure and distribution strategy.
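The gap-analysis step reduces to one question per prompt: is a competitor cited where you are not? This sketch assumes a hypothetical log mapping each prompt to the set of brands cited; brand names are placeholders.

```python
def citation_gaps(responses: dict[str, set[str]], our_brand: str, competitors: list[str]) -> dict[str, list[str]]:
    """Prompts where at least one competitor is cited but our brand is not.

    Returns {prompt: sorted list of competitors cited there}.
    """
    gaps = {}
    for prompt, cited in responses.items():
        if our_brand in cited:
            continue
        rivals = sorted(set(competitors) & cited)
        if rivals:
            gaps[prompt] = rivals
    return gaps


# Hypothetical monthly audit for one platform.
audit = {
    "best CRM for small teams": {"BrandB", "BrandC"},
    "CRM with Salesforce integration": {"BrandA", "BrandB"},
    "cheapest CRM": set(),
}
print(citation_gaps(audit, "BrandA", ["BrandB", "BrandC"]))
```

Each prompt in the output is a candidate for restructured content or new distribution on the domains that platform cites.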

Conclusion

The core shift in AI keyword research isn’t technical. It’s conceptual.

You’re not finding words with volume. You’re identifying the patterns of intent that trigger AI recommendations, building content that satisfies those patterns at the chunk level, and distributing that content across the domains each platform trusts. Then you measure visibility, position, and sentiment instead of rankings and clicks.

That workflow requires different tools, different content formats, and a different measurement framework. Topify is built specifically for this: prompt discovery, AI volume analytics, competitor citation tracking, and position monitoring in a single platform trusted by 50+ enterprises and startups.

The brands that figure this out in 2025 will own the recommendation layer. The ones that don’t will keep ranking on Google for queries their audience stopped typing.

FAQ: AI Keyword Research, Answered Directly

What keywords make AI recommend your brand?

Not keywords in the traditional sense. AI platforms respond to prompt patterns: structured signals of intent. Brands get recommended when their content is semantically aligned with a prompt, cited across multiple authoritative domains, and written in extractable, information-dense chunks. Consistent brand mentions on high-authority sites can carry as much weight as direct backlinks.

What’s the difference between SEO keywords and AI search prompts?

SEO keywords are short strings matched against indexed pages. AI search prompts are full conversational inputs matched against synthesized meaning. The average AI prompt is 23 words vs 4-5 words for Google. Optimizing for one doesn’t optimize for the other.

How do you use keyword research to improve AI brand visibility?

Map your target prompts by intent type (informational, comparative, recommendation), produce content that leads with direct answers and includes verifiable data, and build consistent brand mentions across authority domains. Then track visibility and citation rate by prompt, not by page rank.

How do you discover what prompts your competitors rank for in AI?

Run your category’s core prompts across ChatGPT, Gemini, and Perplexity monthly and record who gets cited. Topify’s Competitor Monitoring automates this, tracking citation share across platforms and flagging prompts where competitors appear but your brand doesn’t.

How does keyword research fit into a GEO content strategy?

It’s the input layer. Prompt mapping identifies what users ask and how they ask it. Content production converts those prompts into citation-ready answers. Authority building distributes that content across the domains AI platforms trust. Tracking closes the loop. Skip the mapping step, and everything downstream is guesswork.
