AI agents don’t search the way users do. They don’t browse your homepage, read your about page, or scan your pricing table. They pull from a trusted layer of citations, training data, and real-time references, and in seconds, they decide whether your brand exists.

For most brands, the answer is: it doesn’t.

This isn’t a traffic problem. It’s a structural one. And understanding why requires a clear look at what agentic AI actually does, and what it means when your brand isn’t part of the answer.

Agents Don’t Browse. They Decide.

The difference between a traditional AI search and an agentic AI isn’t just speed. It’s intent.

Generative AI responds to questions. Agentic AI completes tasks. When a user asks ChatGPT “what’s a good project management tool for remote teams?”, they’re researching. When an AI agent is delegated that same decision with authority to book, compare, and recommend, it’s executing.

That shift matters for brands because task-oriented agents don’t produce a list of results for the user to scroll through. They make a call. According to research on agentic behavior, the primary touchpoint for brand discovery is no longer a search results page. It’s the agent’s internal reasoning process, drawing on an AI trust layer built from training data, real-time citations, and memory.

If your brand doesn’t appear in that reasoning, it doesn’t appear at all.

There’s a New Layer Between Your Brand and Your Buyers

Think of it as the neural intermediation layer: a system sitting between your marketing assets and your potential customers, built from three sources that most brand teams have never optimized for.

The first is parametric memory, the foundational knowledge baked into a model during training. If your brand didn’t appear in authoritative content before the model’s training cutoff, you start at a deficit.

The second is retrieval-augmented generation (RAG), the “live” citation layer where agents pull real-time data to ground their answers. This is where most discovery happens in 2026.

The third is agent memory, the persistent understanding of a specific user’s preferences and prior brand interactions.

Traditional SEO doesn’t touch any of these layers. Ranking for a keyword doesn’t guarantee that an AI cites you. Having a fast site doesn’t mean an agent can process what you offer. This is why brands with strong Google presence are often completely invisible to agentic systems.

The goal is no longer to rank. It’s to be characterized accurately and cited consistently.

Why 95% of B2B Brands Are Invisible to Agentic AI

The number isn’t exaggerated. The 2026 2X AI Visibility Index found that 95.7% of B2B companies are invisible during the earliest stages of AI-driven buyer discovery. These brands only appear when a buyer already knows their name. They’re absent from the AI-generated shortlists that define entire categories.

There are three structural reasons this happens:

Content built for humans, not machines. Most brand content is narrative and keyword-heavy. AI systems prioritize content that’s “retrieval-ready”: chunked, front-loaded with direct answers, and high in fact density. A wall of text that ranks on Google may never be cited by a language model.

No third-party consensus. AI models behave like risk-averse analysts. They cite sources where multiple independent sites agree on a brand’s core attributes. Brands with strong owned content but thin review ecosystems and limited independent mentions get ignored.

Technical friction. Site performance directly affects citation rates. Research shows that pages with a Largest Contentful Paint over 4 seconds face a citation penalty of up to 72%. Pages with high Cumulative Layout Shift face similar suppression.

The deeper issue: most brands don’t even know which of these problems applies to them, because traditional analytics tools can’t see what’s happening inside AI responses.

What “Having a Page” on the Agentic Web Actually Means

In the era of AI agents, brand presence isn’t about URLs. It’s about existing accurately and prominently in the AI’s answer share.

That presence has three dimensions.

Visibility measures how often your brand appears in AI-generated responses across a target set of prompts. It’s not just about being mentioned. Position matters. Being named first in a three-option list carries significantly more weight than being third, a phenomenon driven by the primacy effect that Topify’s Position-Adjusted Word Count (PAWC) model is built to track.
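To make the idea of position-adjusted visibility concrete, here is a minimal sketch. It is not Topify’s PAWC formula, which this article does not spell out. The reciprocal-rank weighting (first place counts 1.0, second 0.5, third about 0.33) and the function names are assumptions chosen only to show how mention position can be folded into a single score.

```python
from typing import Optional, Sequence


def position_weight(rank: int) -> float:
    """Assumed weighting: 1-based rank 1 -> 1.0, rank 2 -> 0.5, rank 3 -> 1/3."""
    return 1.0 / rank


def visibility_score(ranks: Sequence[Optional[int]]) -> float:
    """Average position weight across prompts, scaled to 0-100.

    Each entry is the brand's 1-based position in one AI response,
    or None when the brand was not mentioned at all.
    """
    if not ranks:
        return 0.0
    total = sum(position_weight(r) for r in ranks if r is not None)
    return 100.0 * total / len(ranks)


# Hypothetical audit of four prompts: named first, third, absent, first.
observed = [1, 3, None, 1]
print(round(visibility_score(observed), 1))  # prints 58.3
```

Under this weighting, a brand that is mentioned in every response but always in third place scores about 33, while one named first in only half of the responses scores 50. That is the intuition behind treating position as more than a tiebreaker.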

Sentiment tracks how the AI describes you. A brand that gets mentioned but is consistently framed as “expensive” or “limited” can have high visibility and low conversion. Sentiment scoring on a 0-100 scale catches AI hallucinations and outdated characterizations before they erode pipeline.

Source analysis reveals which URLs and domains the AI is actually citing to support its recommendation. Often, the AI isn’t citing your own site. It’s pulling from a competitor’s blog, a three-year-old forum thread, or a niche review platform you’ve never prioritized. Understanding these citation gaps is the first step to closing them.
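A rough version of source analysis can be done by hand: collect the URLs an AI response cites and tally them by domain. The sketch below assumes you have already copied those URLs into a list. The example domains are placeholders, and the helper names are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse


def cited_domains(urls):
    """Count citations per domain, ignoring a leading 'www.'."""
    counts = Counter()
    for url in urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        if host:
            counts[host] += 1
    return counts


def owned_share(counts, owned_domain):
    """Fraction of all citations that point at the brand's own domain."""
    total = sum(counts.values())
    return counts[owned_domain] / total if total else 0.0


# Placeholder URLs standing in for citations copied from AI responses.
citations = [
    "https://www.example.com/pricing",
    "https://reviews.example.org/tools/acme",
    "https://forum.example.net/t/123",
    "https://reviews.example.org/compare",
]
counts = cited_domains(citations)
print(counts.most_common())
print(owned_share(counts, "example.com"))
```

A low owned share is not necessarily bad, since independent sources are what build consensus. The useful signal is which third-party domains dominate and whether what they say about you is current.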

Topify tracks all three dimensions across ChatGPT, Gemini, Perplexity, and other major AI platforms, giving brand and marketing teams a structured view of exactly how they exist in the AI trust layer.

The Brands That Agents Already Trust

The brands that have successfully built presence on the agentic web share a common characteristic: they are structurally unambiguous.

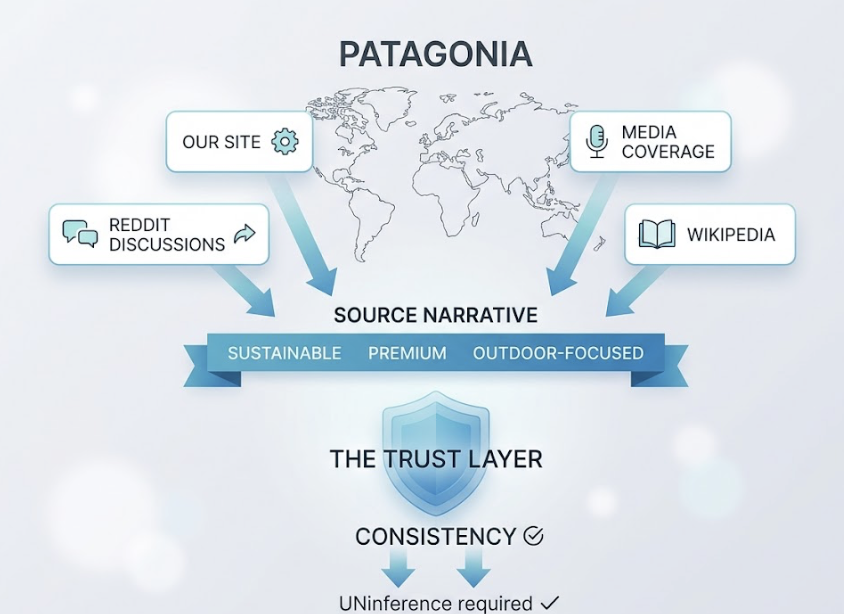

Consider how Patagonia is characterized across AI responses. Every source (its own site, media coverage, Reddit discussions, Wikipedia) consistently reinforces the same narrative: sustainable, premium, outdoor-focused. That consistency allows language models to confidently recommend Patagonia when a user’s agent is looking for “sustainable outdoor gear.” There’s no inference required. The answer is already in the trust layer.

The same principle applies in B2B. Research across 1.2 million ChatGPT answers shows that brands with active community discussions on platforms like Reddit are cited more than three times as often as brands without community presence. Real-time engines like Perplexity weight community validation heavily because it signals that a brand’s reputation isn’t just marketing copy.

The common thread isn’t budget or brand size. It’s consistency of characterization across independent sources, combined with content that machines can actually extract and use.

How to Start Building Your Presence on the Agentic Web

There’s a three-step framework that holds up across verticals: Diagnostic, Positioning, Execution.

Step 1: Run a diagnostic audit. Before optimizing anything, you need to know your current answer share. That means mapping out a prompt universe rather than a keyword list: 150-300 questions that real users ask AI when researching your category. Topify’s AI Volume Analytics surfaces high-volume AI prompts that don’t even register in traditional SEO tools, because they’re being asked in chat interfaces, not search bars. The gap between what users type in Google and what they ask ChatGPT is larger than most teams expect.

Step 2: Restructure content for machine reasoning. GEO-optimized content isn’t just a style preference. Research from Princeton and other institutions shows that content using structured strategies like “answer-first format,” added statistics, and explicit citations can boost AI citation frequency by 30% to 115%. The practical changes: break long-form content into self-contained 200-400 word sections, front-load each page with a direct 40-60 word answer capsule, and increase fact density with specific numbers, dates, and expert quotes.
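The word-count targets above are easy to check mechanically. This sketch assumes you have already split a page into an opening answer capsule and a set of headed sections. The function names are hypothetical, and splitting on whitespace is only a rough approximation of word count.

```python
from typing import Dict, List


def word_count(text: str) -> int:
    """Approximate word count by splitting on whitespace."""
    return len(text.split())


def audit_structure(capsule: str, sections: Dict[str, str]) -> List[str]:
    """Return a list of structural issues; an empty list means all targets are met."""
    issues = []
    n = word_count(capsule)
    if not 40 <= n <= 60:
        issues.append(f"answer capsule has {n} words (target 40-60)")
    for heading, body in sections.items():
        n = word_count(body)
        if not 200 <= n <= 400:
            issues.append(f"section '{heading}' has {n} words (target 200-400)")
    return issues


# Synthetic text: a 50-word capsule, one 250-word section, one 100-word section.
page_issues = audit_structure(
    "word " * 50,
    {"Pricing": "word " * 250, "Integrations": "word " * 100},
)
for issue in page_issues:
    print(issue)
```

A check like this flags length only. Whether each section is genuinely self-contained and fact-dense still needs an editor’s read.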

Step 3: Fix the technical layer. Two changes have outsized ROI. First, implement an /llms.txt file in your site’s root directory. This Markdown-formatted file, a proposed convention rather than a formal standard, strips away HTML noise and acts as a direct cheat sheet for AI crawlers, reducing the computational cost of processing your content. Second, adopt robust Schema.org markup for Organization, Brand, and Product. This “entity disambiguation” links your brand name to specific qualitative traits in the vector space agents reason through.
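Both technical pieces can be generated from a few facts about the company. The sketch below builds a Schema.org Organization block as JSON-LD and a small /llms.txt file. The llms.txt layout (a title, a one-line summary in a blockquote, then a list of links) follows the public llms.txt proposal as commonly described. The “Key pages” section name, the company “Acme”, and all URLs are placeholders, and which properties or pages matter for a given brand is an assumption to verify.

```python
import json


def organization_jsonld(name, url, description, same_as):
    """Serialize a Schema.org Organization as a JSON-LD string."""
    return json.dumps(
        {
            "@context": "https://schema.org",
            "@type": "Organization",
            "name": name,
            "url": url,
            "description": description,
            "sameAs": list(same_as),  # independent profiles describing the same entity
        },
        indent=2,
    )


def llms_txt(name, summary, pages):
    """Build llms.txt content: H1 title, blockquote summary, one list of links."""
    lines = [f"# {name}", "", f"> {summary}", "", "## Key pages", ""]
    for title, url, note in pages:
        lines.append(f"- [{title}]({url}): {note}")
    return "\n".join(lines) + "\n"


# Placeholder company details for illustration only.
print(organization_jsonld(
    "Acme",
    "https://www.example.com",
    "Project management software for remote teams.",
    ["https://en.wikipedia.org/wiki/Example"],
))
print(llms_txt(
    "Acme",
    "Project management software for remote teams.",
    [("Pricing", "https://www.example.com/pricing.md", "plans and limits")],
))
```

The JSON-LD string belongs inside a `<script type="application/ld+json">` element in the page’s HTML, and the llms.txt output is saved as a plain file at the site root. Brand and Product markup follow the same pattern with their own `@type` values.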

If your audience uses ChatGPT or Microsoft Copilot, adopting OpenAI’s Agentic Commerce Protocol (ACP) gives agents the ability to interact with your services directly. Google’s Universal Commerce Protocol (UCP) covers the broader transaction lifecycle across any AI surface.

None of these steps require a full content overhaul. Most brands can close the most critical gaps in 60-90 days with focused execution.

Conclusion

The agentic web isn’t a future scenario. It’s the current operating environment for an increasing share of buyer discovery, and the brands that treat it as something to wait out are already falling behind.

The logic is simple: if an AI agent is making a recommendation and your brand isn’t in its trust layer, the user never hears your name. Not because you lost the comparison, but because you weren’t part of the reasoning at all.

Visibility, sentiment, and source analysis are the new metrics that matter. The /llms.txt file and Schema markup are the new technical fundamentals. And prompt universe mapping is the new keyword research.

The infrastructure of how AI agents discover, evaluate, and recommend brands is being built right now. Getting your brand onto that infrastructure is still early-mover territory.

FAQ

What’s the difference between agentic AI and regular AI search?

Regular AI search synthesizes an answer to a question. Agentic AI executes multi-step tasks using external tools. An AI search might list three project management tools. An agentic AI might evaluate them against your team’s requirements, check pricing, and initiate a trial. The brand selection happens before the user sees anything.

How does a brand know if it’s being recommended by AI agents?

Standard analytics tools like GA4 can’t track what happens inside AI models. The internal “hidden” responses that drive agent recommendations are invisible to traditional dashboards. Brands need purpose-built diagnostic tools to simulate user prompts and track Answer Share and Position Rank across platforms like ChatGPT, Gemini, and Perplexity. Topify’s Visibility Tracking does this across major AI platforms with seven core metrics.

Does GEO content optimization actually move the needle for agentic systems?

Yes, with caveats. The optimization strategies that work for generative AI search (answer-first structure, fact density, source citations) also improve agentic citation rates because agents draw from the same underlying models. The difference is that agentic systems place more weight on technical accessibility and third-party verification than on content volume alone.

Should brands block AI crawlers to protect their content?

Generally, no. Blocking training bots like GPTBot keeps your own pages out of the model’s training data. Whatever the model knows about the brand at a foundational level then comes only from third-party sources, if it knows anything at all. The better approach is to guide agents using /llms.txt and structured protocols toward the most accurate, high-value information rather than blocking access altogether.
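Before changing anything, it helps to check what your current robots.txt actually permits. This sketch uses Python’s standard `urllib.robotparser` on a robots.txt string you paste in. The sample rules and URL are made up, and any crawler name other than GPTBot is an assumption to confirm against each vendor’s documentation.

```python
from urllib.robotparser import RobotFileParser


def allowed_agents(robots_txt, url, agents):
    """Map each crawler name to whether robots.txt lets it fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# Made-up robots.txt that blocks GPTBot and allows everyone else.
sample = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

result = allowed_agents(
    sample,
    "https://example.com/pricing",
    ["GPTBot", "PerplexityBot"],
)
print(result)
```

If a crawler you want to reach shows `False` here, the block is coming from your own configuration rather than from anything the AI platform decided.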

What’s the single most impactful step a brand can take this week?

Run a prompt audit. Identify 20-30 high-intent prompts in your category and run them through ChatGPT, Gemini, and Perplexity. Track whether you’re named, where you appear, and how you’re described. That baseline alone will reveal more about your agentic web presence than a year of traditional SEO reporting.
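A spreadsheet is enough for this audit, but the tallying can also be scripted. The sketch below assumes you paste each AI response in as text. It makes no API calls, the brand names are invented, and the matching is a naive case-insensitive substring search that will misfire on brand names that are also common words.

```python
def mention_order(response, brands):
    """Return the brands found in the response, ordered by first appearance."""
    text = response.lower()
    found = []
    for brand in brands:
        idx = text.find(brand.lower())
        if idx != -1:
            found.append((idx, brand))
    return [brand for _, brand in sorted(found)]


def audit_row(prompt, response, brand, competitors):
    """Summarize one prompt: was the brand named, and in what position?"""
    order = mention_order(response, [brand, *competitors])
    rank = order.index(brand) + 1 if brand in order else None
    return {"prompt": prompt, "named": rank is not None, "rank": rank, "order": order}


# Invented response text standing in for a pasted AI answer.
row = audit_row(
    "What's a good project management tool for remote teams?",
    "For remote teams, Beta and Acme are solid choices; Gamma is cheaper.",
    "Acme",
    ["Beta", "Gamma", "Delta"],
)
print(row["named"], row["rank"], row["order"])
```

How the brand is described still has to be read and noted by a person. The script only records whether and where the name appears.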